
Adding ASFF and a New Detection Layer to YOLOv5 at the Same Time

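Preface

I originally studied YOLOv5 for my graduation project. Now that everything I need for graduation is done, I had stopped looking into further YOLOv5 improvements. However, several readers asked in the comments on my earlier posts how to add ASFF and a new detection layer at the same time, so I am writing it up on request. Note that I have not rerun my own dataset to check whether the metrics improve, because a full training run on my own computer takes dozens of hours. Without further ado, here are the steps.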

Step 1

Add the following code at the very bottom of models/common.py:

import torch.nn.functional as F  # used by ASFF_4L below; add it if common.py does not already import it


def add_conv(in_ch, out_ch, ksize, stride, leaky=True):
    """
    Add a conv2d / batchnorm / leaky ReLU block.

    Args:
        in_ch (int): number of input channels of the convolution layer.
        out_ch (int): number of output channels of the convolution layer.
        ksize (int): kernel size of the convolution layer.
        stride (int): stride of the convolution layer.
        leaky (bool): use LeakyReLU(0.1) if True, otherwise ReLU6.

    Returns:
        stage (Sequential): Sequential layers composing a convolution block.
    """
    stage = nn.Sequential()
    pad = (ksize - 1) // 2
    stage.add_module('conv', nn.Conv2d(in_channels=in_ch,
                                       out_channels=out_ch, kernel_size=ksize, stride=stride,
                                       padding=pad, bias=False))
    stage.add_module('batch_norm', nn.BatchNorm2d(out_ch))
    if leaky:
        stage.add_module('leaky', nn.LeakyReLU(0.1))
    else:
        stage.add_module('relu6', nn.ReLU6(inplace=True))
    return stage
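The padding rule in `add_conv`, `pad = (ksize - 1) // 2`, is what lets the same helper either keep or halve the feature-map size. A minimal plain-Python sketch of the standard convolution output-size formula shows this for the kernel sizes and strides used in this post. The helper name `conv_out_size` is made up for illustration and is not part of YOLOv5.

```python
def conv_out_size(size, ksize, stride):
    """Spatial output size of a conv layer padded the way add_conv pads it."""
    pad = (ksize - 1) // 2  # same rule as add_conv
    return (size + 2 * pad - ksize) // stride + 1


# 1x1 and 3x3 convs with stride 1 keep the size; a 3x3 conv with stride 2 halves it.
print(conv_out_size(40, 1, 1))   # 40
print(conv_out_size(40, 3, 1))   # 40
print(conv_out_size(40, 3, 2))   # 20
print(conv_out_size(160, 3, 2))  # 80
```

This is why the ASFF code below uses 1x1 stride-1 blocks to compress channels and 3x3 stride-2 blocks to shrink a larger map.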

class ASFF_4L(nn.Module):
    def __init__(self, level, rfb=False, vis=False):
        super(ASFF_4L, self).__init__()
        self.level = level
        # Channel counts of the four pyramid levels, from top (coarsest) to bottom.
        # For a 640x640 input the feature maps are 20x20, 40x40, 80x80 and 160x160.
        self.dim = [512, 256, 128, 64]
        self.inter_dim = self.dim[self.level]
        if level == 0:  # 20x20 level, 512 channels
            self.stride_level_1 = add_conv(256, self.inter_dim, 3, 2)
            self.stride_level_2 = add_conv(128, self.inter_dim, 3, 2)
            self.stride_level_3 = add_conv(64, self.inter_dim, 3, 2)
            self.expand = add_conv(self.inter_dim, 512, 3, 1)
        elif level == 1:  # 40x40 level, 256 channels
            self.compress_level_0 = add_conv(512, self.inter_dim, 1, 1)
            self.stride_level_2 = add_conv(128, self.inter_dim, 3, 2)
            self.stride_level_3 = add_conv(64, self.inter_dim, 3, 2)
            self.expand = add_conv(self.inter_dim, 256, 3, 1)
        elif level == 2:  # 80x80 level, 128 channels
            self.compress_level_0 = add_conv(512, self.inter_dim, 1, 1)
            self.compress_level_1 = add_conv(256, self.inter_dim, 1, 1)
            self.stride_level_3 = add_conv(64, self.inter_dim, 3, 2)
            self.expand = add_conv(self.inter_dim, 128, 3, 1)
        elif level == 3:  # 160x160 level, 64 channels
            self.compress_level_0 = add_conv(512, self.inter_dim, 1, 1)
            self.compress_level_1 = add_conv(256, self.inter_dim, 1, 1)
            self.compress_level_2 = add_conv(128, self.inter_dim, 1, 1)
            self.expand = add_conv(self.inter_dim, 64, 3, 1)

        compress_c = 8 if rfb else 16  # when adding rfb, we use half the number of channels to save memory

        self.weight_level_0 = add_conv(self.inter_dim, compress_c, 1, 1)
        self.weight_level_1 = add_conv(self.inter_dim, compress_c, 1, 1)
        self.weight_level_2 = add_conv(self.inter_dim, compress_c, 1, 1)
        self.weight_level_3 = add_conv(self.inter_dim, compress_c, 1, 1)

        self.weight_levels = nn.Conv2d(compress_c * 4, 4, kernel_size=1, stride=1, padding=0)
        self.vis = vis

    def forward(self, x_level_0, x_level_1, x_level_2, x_level_3):
        if self.level == 0:  # 20x20
            level_0_resized = x_level_0  # original feature map
            level_1_resized = self.stride_level_1(x_level_1)  # stride-2 conv halves the size
            level_2_downsampled_inter = F.max_pool2d(x_level_2, 3, stride=2, padding=1)  # halve the size
            level_2_resized = self.stride_level_2(level_2_downsampled_inter)  # halve again and adjust channels
            level_3_downsampled_inter = F.max_pool2d(x_level_3, 5, stride=4, padding=2)  # shrink to 1/4
            level_3_resized = self.stride_level_3(level_3_downsampled_inter)
        elif self.level == 1:  # 40x40
            level_0_compressed = self.compress_level_0(x_level_0)  # compress channels
            level_0_resized = F.interpolate(level_0_compressed, scale_factor=2, mode='nearest')  # upsample level 0
            level_1_resized = x_level_1  # original feature map
            level_2_resized = self.stride_level_2(x_level_2)  # halve the size and adjust channels
            level_3_downsampled_inter = F.max_pool2d(x_level_3, 3, stride=2, padding=1)  # halve the size
            level_3_resized = self.stride_level_3(level_3_downsampled_inter)
        elif self.level == 2:  # 80x80
            level_0_compressed = self.compress_level_0(x_level_0)
            level_0_resized = F.interpolate(level_0_compressed, scale_factor=4, mode='nearest')  # upsample level 0
            level_1_compressed = self.compress_level_1(x_level_1)
            level_1_resized = F.interpolate(level_1_compressed, scale_factor=2, mode='nearest')  # upsample level 1
            level_2_resized = x_level_2
            level_3_resized = self.stride_level_3(x_level_3)
        elif self.level == 3:  # 160x160
            level_0_compressed = self.compress_level_0(x_level_0)
            level_0_resized = F.interpolate(level_0_compressed, scale_factor=8, mode='nearest')  # upsample level 0
            level_1_compressed = self.compress_level_1(x_level_1)
            level_1_resized = F.interpolate(level_1_compressed, scale_factor=4, mode='nearest')  # upsample level 1
            level_2_compressed = self.compress_level_2(x_level_2)
            level_2_resized = F.interpolate(level_2_compressed, scale_factor=2, mode='nearest')  # upsample level 2
            level_3_resized = x_level_3

        # One small weight map per level, then a per-pixel softmax across the four levels
        level_0_weight_v = self.weight_level_0(level_0_resized)
        level_1_weight_v = self.weight_level_1(level_1_resized)
        level_2_weight_v = self.weight_level_2(level_2_resized)
        level_3_weight_v = self.weight_level_3(level_3_resized)
        levels_weight_v = torch.cat((level_0_weight_v, level_1_weight_v, level_2_weight_v, level_3_weight_v), 1)
        levels_weight = self.weight_levels(levels_weight_v)
        levels_weight = F.softmax(levels_weight, dim=1)

        fused_out_reduced = level_0_resized * levels_weight[:, 0:1, :, :] + \
                            level_1_resized * levels_weight[:, 1:2, :, :] + \
                            level_2_resized * levels_weight[:, 2:3, :, :] + \
                            level_3_resized * levels_weight[:, 3:, :, :]

        out = self.expand(fused_out_reduced)

        if self.vis:
            return out, levels_weight, fused_out_reduced.sum(dim=1)
        else:
            return out
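The core of the `forward` pass above is that, at every pixel, the four resized maps are blended with softmax weights that sum to 1. Below is a minimal sketch of that step for a single pixel and a single channel, in plain Python with invented numbers and no PyTorch. The names `softmax` and `fuse_pixel` are written here for illustration only.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a short list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_pixel(features, logits):
    """Weighted sum of one pixel's value from each level, mirroring the fusion in ASFF_4L.forward."""
    weights = softmax(logits)
    return sum(f * w for f, w in zip(features, weights)), weights


# Made-up values: one feature value and one weight logit per pyramid level.
fused, weights = fuse_pixel([1.0, 2.0, 3.0, 4.0], [0.0, 0.0, 0.0, 0.0])
print(weights)  # equal logits give equal weights of 0.25 each
print(fused)    # 2.5, the plain average in this case
```

In the real module the logits come from the `weight_level_*` convolutions, so the network learns which level to trust at each location.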

Step 2

In models/yolo.py, add the following class right after the Detect class (in my copy it went in at line 92):

class ASFF_4L_Detect(Detect):
    # ASFF model for improvement
    def __init__(self, nc=80, anchors=(), ch=(), inplace=True):  # detection layer
        super().__init__(nc, anchors, ch, inplace)
        self.nl = len(anchors)
        self.asffs = nn.ModuleList(ASFF_4L(i) for i in range(self.nl))
        self.detect = Detect.forward

    def forward(self, x):
        # The maps in x run from largest to smallest, the opposite of the order
        # ASFF_4L expects, so reverse the list before feeding it in.
        x = x[::-1]
        for i in range(self.nl):
            # x[i] is overwritten in place, so later levels see the fused maps of earlier levels
            x[i] = self.asffs[i](*x)
        return self.detect(self, x[::-1])
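One detail of this `forward` is easy to miss: because `x[i]` is overwritten inside the loop, the call for level `i + 1` receives the already fused output of level `i` rather than the original map. The sketch below reproduces just that control flow with strings in place of tensors. `fake_asff` is a stand-in invented for illustration, not part of YOLOv5.

```python
def fake_asff(level):
    """Stand-in for ASFF_4L(level): returns a label listing the inputs it saw."""
    def fuse(*maps):
        return f"fused{level}({', '.join(maps)})"
    return fuse


def forward(x):
    x = x[::-1]                  # smallest map first, the order ASFF_4L expects
    for i in range(len(x)):
        x[i] = fake_asff(i)(*x)  # in-place update, as in ASFF_4L_Detect.forward
    return x[::-1]               # back to largest-first order for Detect


out = forward(["P2", "P3"])
print(out[1])  # fused0(P3, P2)
print(out[0])  # fused1(fused0(P3, P2), P2)
```

If you would rather have every level fuse the original maps, one option is to collect the outputs in a new list instead of writing back into `x`. The code in this post does not do that, so treat it as an untested variation.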

第三步

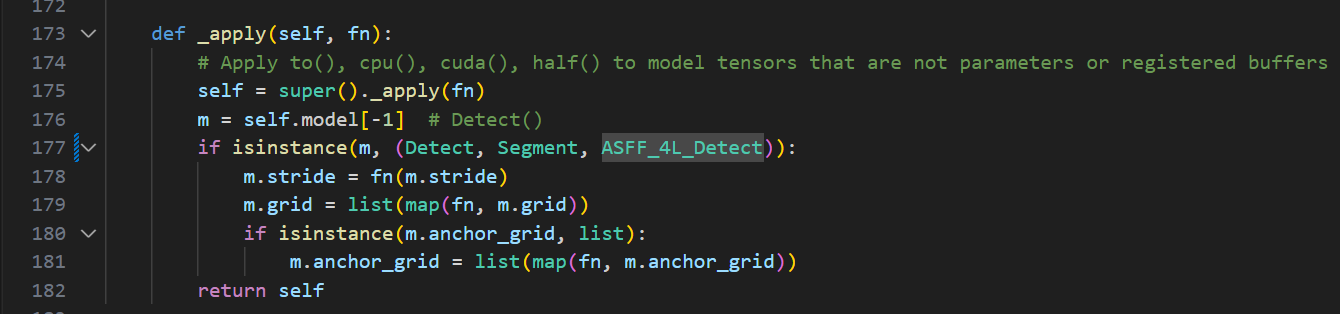

在有yolo.py这个文件中,出现 Detect, Segment这个代码片段的地方加入ASFF_4L_Detect,例如我的177行中改动后变成(一共有三处地方需要修改,不要漏了,不然会报错):
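A note on why this edit matters: `ASFF_4L_Detect` subclasses `Detect`, so any `isinstance` check already accepts it, but an exact membership test on a set of classes does not. The sketch below uses empty stand-in classes rather than the real YOLOv5 ones. It assumes the three spots in `yolo.py` are a mix of `isinstance(m, (Detect, Segment))` checks and an `m in {Detect, Segment}` test, which may differ in your YOLOv5 version.

```python
# Empty stand-ins for the real classes, for illustration only.
class Detect: ...
class Segment(Detect): ...
class ASFF_4L_Detect(Detect): ...


head = ASFF_4L_Detect()  # an instance of the new head
cls = ASFF_4L_Detect     # the class object itself

print(isinstance(head, (Detect, Segment)))        # True: subclasses pass isinstance
print(cls in {Detect, Segment})                   # False: set membership is exact
print(cls in {Detect, Segment, ASFF_4L_Detect})   # True once the class is listed
```

Adding the class name in all three places, as the post suggests, is harmless for the `isinstance` checks and covers the membership test either way.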

Step 4

Create a new file in the models folder named yolov5s-four-layer-ASFF.yaml and paste in the following:

# YOLOv5 🚀 by Ultralytics, GPL-3.0 license

# Parameters
nc: 2  # number of classes
depth_multiple: 0.33  # model depth multiple
width_multiple: 0.50  # layer channel multiple
anchors:
  - [4,5, 8,10, 22,18]  # P2/4
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# YOLOv5 v6.0 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, Conv, [64, 6, 2, 2]],  # 0-P1/2
   [-1, 1, Conv, [128, 3, 2]],  # 1-P2/4
   [-1, 3, C3, [128]],
   [-1, 1, Conv, [256, 3, 2]],  # 3-P3/8
   [-1, 6, C3, [256]],
   [-1, 1, Conv, [512, 3, 2]],  # 5-P4/16
   [-1, 9, C3, [512]],
   [-1, 1, Conv, [1024, 3, 2]],  # 7-P5/32
   [-1, 3, C3, [1024]],
   [-1, 1, SPPF, [1024, 5]],  # 9
  ]

# YOLOv5 v6.0 head
head:
  [[-1, 1, Conv, [512, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 6], 1, Concat, [1]],  # cat backbone P4
   [-1, 3, C3, [512, False]],  # 13

   [-1, 1, Conv, [256, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 4], 1, Concat, [1]],  # cat backbone P3

   # add feature extraction layer
   [-1, 3, C3, [256, False]],  # 17
   [-1, 1, Conv, [128, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 2], 1, Concat, [1]],  # cat backbone P2

   # add detect layer
   [-1, 3, C3, [128, False]],  # 21 (P2/4-xsmall)
   [-1, 1, Conv, [128, 3, 2]],
   [[-1, 18], 1, Concat, [1]],  # cat head P3
   # end

   [-1, 3, C3, [256, False]],  # 24 (P3/8-small)
   [-1, 1, Conv, [256, 3, 2]],
   [[-1, 14], 1, Concat, [1]],  # cat head P4

   [-1, 3, C3, [512, False]],  # 27 (P4/16-medium)
   [-1, 1, Conv, [512, 3, 2]],
   [[-1, 10], 1, Concat, [1]],  # cat head P5

   [-1, 3, C3, [1024, False]],  # 30 (P5/32-large)

   [[21, 24, 27, 30], 1, ASFF_4L_Detect, [nc, anchors]],  # Detect(P2, P3, P4, P5)
  ]
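To see why `ASFF_4L` hard-codes `self.dim = [512, 256, 128, 64]`, it helps to work out what the four detection inputs look like once `width_multiple: 0.50` is applied. The sketch below is plain Python. It assumes channel counts are scaled and then rounded up to a multiple of 8 (the helper `scaled` is written here for illustration), and it takes the strides from the comments in the YAML above.

```python
import math

WIDTH_MULTIPLE = 0.50  # from the YAML above
IMG_SIZE = 640         # example input size used in this post


def scaled(channels, divisor=8):
    """Scale a YAML channel count by width_multiple, rounded up to a multiple of divisor."""
    return math.ceil(channels * WIDTH_MULTIPLE / divisor) * divisor


# (layer index, channels written in the YAML, stride) for the four Detect inputs
detect_inputs = [(21, 128, 4), (24, 256, 8), (27, 512, 16), (30, 1024, 32)]

summary = [(idx, scaled(c), IMG_SIZE // s) for idx, c, s in detect_inputs]
for idx, ch, size in summary:
    print(f"layer {idx}: {ch} channels, {size}x{size}")
# layer 21: 64 channels, 160x160
# layer 24: 128 channels, 80x80
# layer 27: 256 channels, 40x40
# layer 30: 512 channels, 20x20

# Reversed (smallest map first), this matches self.dim in ASFF_4L.
print([ch for _, ch, _ in summary][::-1])  # [512, 256, 128, 64]
```

With a different `width_multiple` these channel counts change, so `self.dim` in `ASFF_4L` would have to be adjusted to match.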

第五步

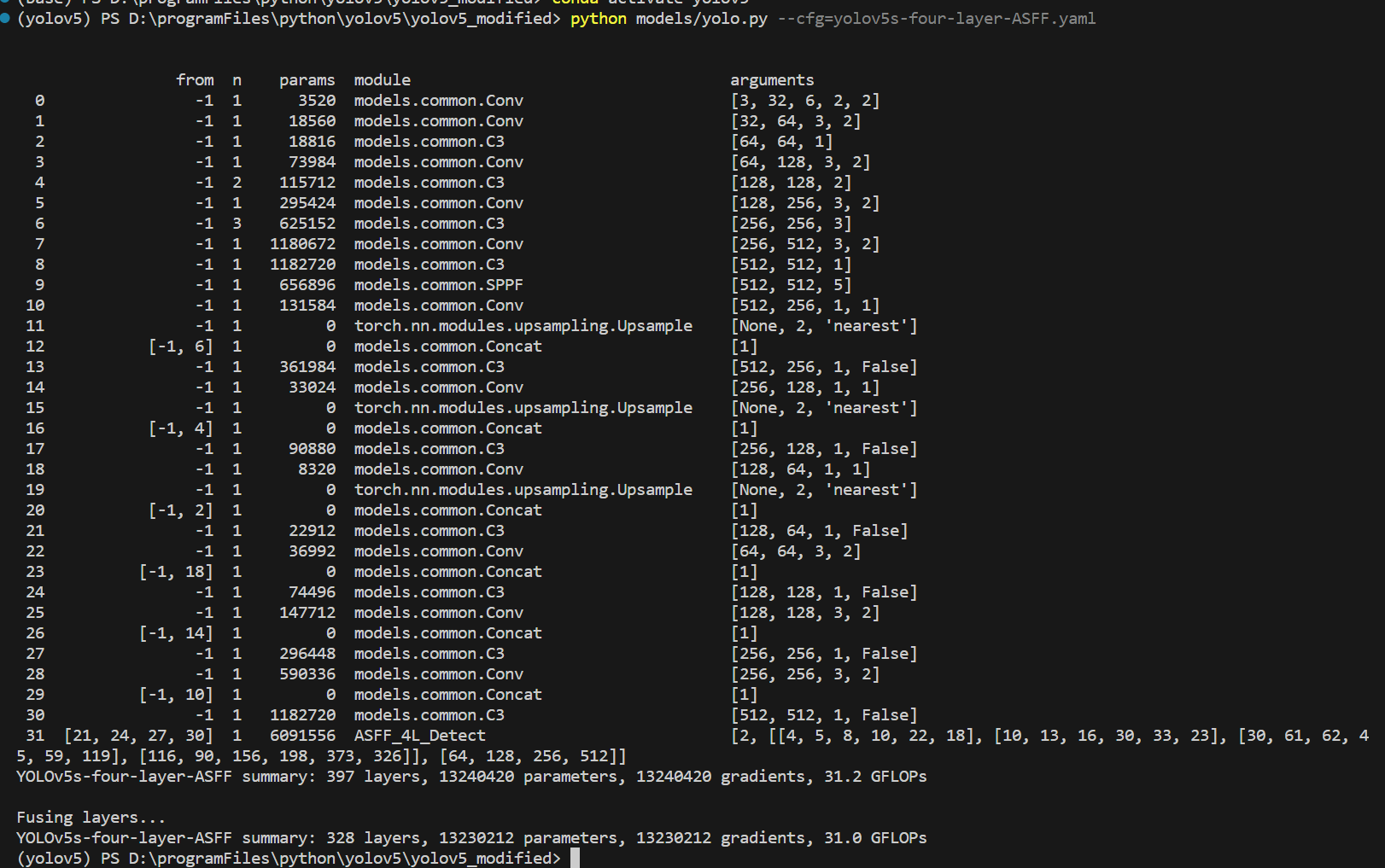

查看一下效果,在终端输入命令:

python models/yolo.py --cfg=yolov5s-ASFF.yaml

运行后可以看到我们同时修改添加ASFF与新检测层的模型相关参数:

后续训练只需要指定cfg文件为yolov5s-four-layer-ASFF.yaml就行了

If this post helped you, feel free to leave a like, a bookmark, and a follow; your support is my biggest motivation to keep writing 😊🌹
