目录

svm推导

smo优化推导

代码部分

import sys

from numpy import *

#from math import *

def loadDataSet(filename):

fr = open(filename)

data = []

label = []

for line in fr.readlines():

lineAttr = line.strip().split('\t')

data.append([float(x) for x in lineAttr[:-1]])

label.append(float(lineAttr[-1]))

return data,label

def selectJrand(i,m):

j = i

while j == i:

j = int(random.uniform(0,m))

return j

def clipAlpha(a_j,H,L):

if a_j > H:

a_j = H

if L > a_j:

a_j = L

return a_j

def smoSimple(data,label,C,toler,maxIter):

dataMatrix = mat(data)

labelMatrix = mat(label).transpose()

b = 0.0

iter = 0

m,n = shape(dataMatrix)

alpha = mat(zeros((m,1)))

while iter < maxIter:

alphapairChanged = 0

for i in range(m):

fxi = float(multiply(alpha,labelMatrix).T * (dataMatrix * dataMatrix[i,:].T)) + b

Ei = fxi - float(labelMatrix[i])

if labelMatrix[i] * Ei < -toler and alpha[i] < C or labelMatrix[i] * Ei > toler and alpha[i] > 0:

j = selectJrand(i,m)

fxj = float(multiply(alpha,labelMatrix).T * (dataMatrix * dataMatrix[j,:].T)) + b

Ej = fxj - float(labelMatrix[j])

alphaIOld = alpha[i].copy()

alphaJOld = alpha[j].copy()

if labelMatrix[i] != labelMatrix[j]:

L = max(0,alpha[j] - alpha[i])

H = min(C,C+alpha[j]-alpha[i])

else:

L = max(0,alpha[i]+alpha[j] - C)

H = min(C,alpha[j]+alpha[i])

if L==H:

print ("L==H")

continue

eta = 2.0 * dataMatrix[i,:]*dataMatrix[j,:].T - dataMatrix[i,:]*dataMatrix[i,:].T - dataMatrix[j,:]*dataMatrix[j,:].T

if eta >= 0:

print ("eta >= 0")

continue

alpha[j] -= labelMatrix[j]*(Ei-Ej)/eta

alpha[j] = clipAlpha(alpha[j],H,L)

if abs(alpha[j] - alphaJOld) < 0.00001 :

print ("%d not move enough"%j)

continue

alpha[i] += labelMatrix[j]*labelMatrix[i]*(alphaJOld - alpha[j])

b1 = b - Ei -labelMatrix[i]*(alpha[i] - alphaIOld)*dataMatrix[i,:]*dataMatrix[i,:].T \

-labelMatrix[j]*(alpha[j]-alphaJOld)*dataMatrix[i,:]*dataMatrix[j,:].T

b2 = b - Ej -labelMatrix[i]*(alpha[i] - alphaIOld)*dataMatrix[i,:]*dataMatrix[j,:].T \

-labelMatrix[j]*(alpha[j]-alphaJOld)*dataMatrix[j,:]*dataMatrix[j,:].T

if alpha[i] > 0 and alpha[i] < C:

b = b1

elif alpha[j] > 0 and alpha[j] < C:

b = b2

else:

b = (b1+b2)/2.0

alphapairChanged +=1

print ("第%d迭代 第%d个值,更改了%d次" %(iter,i,alphapairChanged))

if alphapairChanged == 0:

iter +=1

else:

iter = 0

print ("迭代次数: %d" % iter)

return b,alpha

def main():

data,label = loadDataSet('testSet.txt')

print (data)

print (label)

b,alpha = smoSimple(data,label,0.6,0.001,40)

print (b)

print (alpha)

for i in range(100):

if alpha[i]>0:

print (data[i],label[i])

if __name__ == '__main__':

sys.exit(main())数据集

3.542485 1.977398 -1

3.018896 2.556416 -1

7.551510 -1.580030 1

2.114999 -0.004466 -1

8.127113 1.274372 1

7.108772 -0.986906 1

8.610639 2.046708 1

2.326297 0.265213 -1

3.634009 1.730537 -1

0.341367 -0.894998 -1

3.125951 0.293251 -1

2.123252 -0.783563 -1

0.887835 -2.797792 -1

7.139979 -2.329896 1

1.696414 -1.212496 -1

8.117032 0.623493 1

8.497162 -0.266649 1

4.658191 3.507396 -1

8.197181 1.545132 1

1.208047 0.213100 -1

1.928486 -0.321870 -1

2.175808 -0.014527 -1

7.886608 0.461755 1

3.223038 -0.552392 -1

3.628502 2.190585 -1

7.407860 -0.121961 1

7.286357 0.251077 1

2.301095 -0.533988 -1

-0.232542 -0.547690 -1

3.457096 -0.082216 -1

3.023938 -0.057392 -1

8.015003 0.885325 1

8.991748 0.923154 1

7.916831 -1.781735 1

7.616862 -0.217958 1

2.450939 0.744967 -1

7.270337 -2.507834 1

1.749721 -0.961902 -1

1.803111 -0.176349 -1

8.804461 3.044301 1

1.231257 -0.568573 -1

2.074915 1.410550 -1

-0.743036 -1.736103 -1

3.536555 3.964960 -1

8.410143 0.025606 1

7.382988 -0.478764 1

6.960661 -0.245353 1

8.234460 0.701868 1

8.168618 -0.903835 1

1.534187 -0.622492 -1

9.229518 2.066088 1

7.886242 0.191813 1

2.893743 -1.643468 -1

1.870457 -1.040420 -1

5.286862 -2.358286 1

6.080573 0.418886 1

2.544314 1.714165 -1

6.016004 -3.753712 1

0.926310 -0.564359 -1

0.870296 -0.109952 -1

2.369345 1.375695 -1

1.363782 -0.254082 -1

7.279460 -0.189572 1

1.896005 0.515080 -1

8.102154 -0.603875 1

2.529893 0.662657 -1

1.963874 -0.365233 -1

8.132048 0.785914 1

8.245938 0.372366 1

6.543888 0.433164 1

-0.236713 -5.766721 -1

8.112593 0.295839 1

9.803425 1.495167 1

1.497407 -0.552916 -1

1.336267 -1.632889 -1

9.205805 -0.586480 1

1.966279 -1.840439 -1

8.398012 1.584918 1

7.239953 -1.764292 1

7.556201 0.241185 1

9.015509 0.345019 1

8.266085 -0.230977 1

8.545620 2.788799 1

9.295969 1.346332 1

2.404234 0.570278 -1

2.037772 0.021919 -1

1.727631 -0.453143 -1

1.979395 -0.050773 -1

8.092288 -1.372433 1

1.667645 0.239204 -1

9.854303 1.365116 1

7.921057 -1.327587 1

8.500757 1.492372 1

1.339746 -0.291183 -1

3.107511 0.758367 -1

2.609525 0.902979 -1

3.263585 1.367898 -1

2.912122 -0.202359 -1

1.731786 0.589096 -1

2.387003 1.573131 -1

本文涉及两部分内容:SVM和SMO。

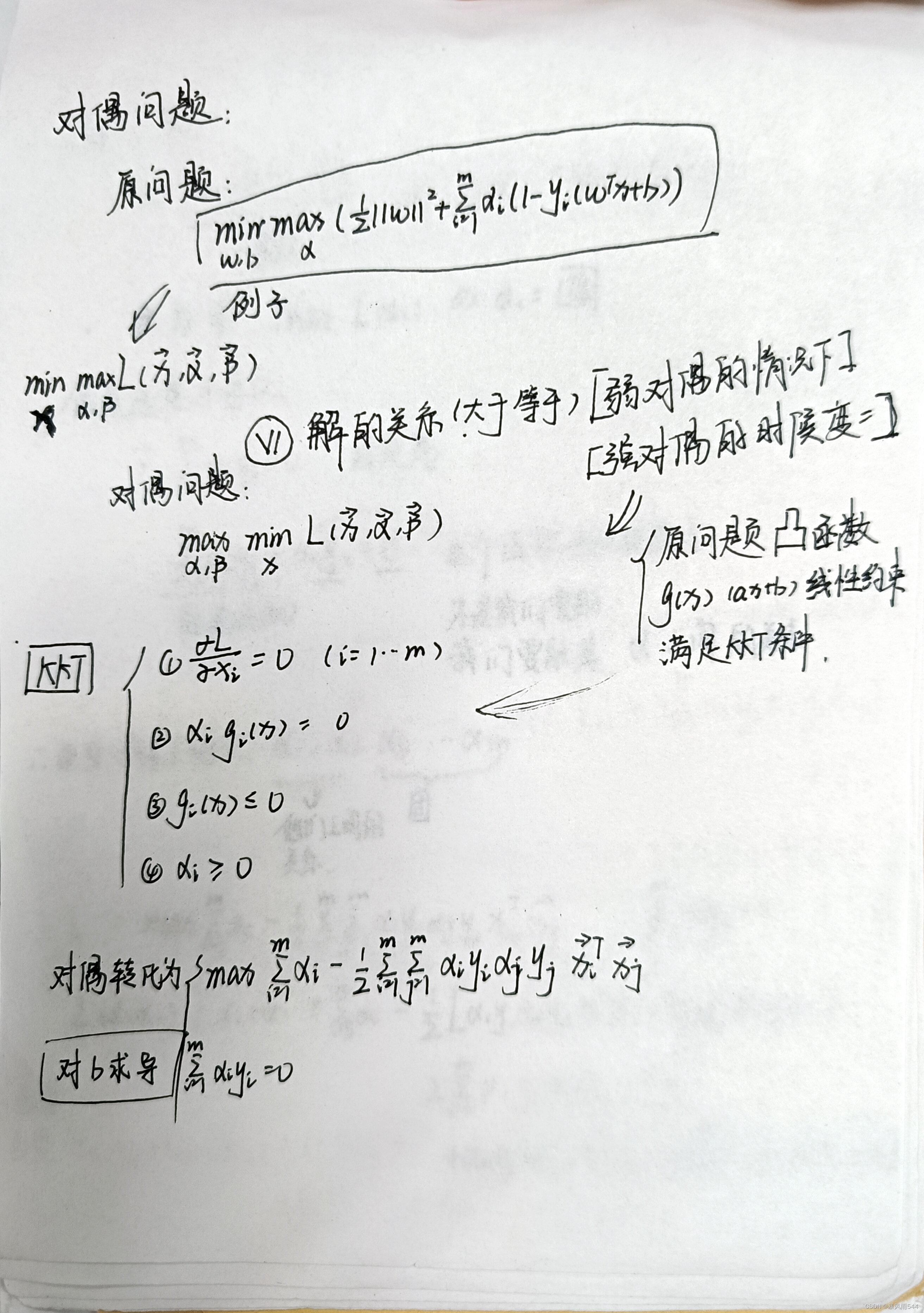

SVM,即支持向量机,是一种二分类模型。它的基本思想是找到一个能够将两类样本分开的超平面,使得两类样本距离该超平面的最小距离最大。在推导SVM的过程中,首先需要引入拉格朗日乘子法,将约束条件转化为拉格朗日函数的形式。然后通过求解拉格朗日函数的极值来得到模型的解。具体而言,首先推导出对偶问题,然后通过求解对偶问题得到模型的解。

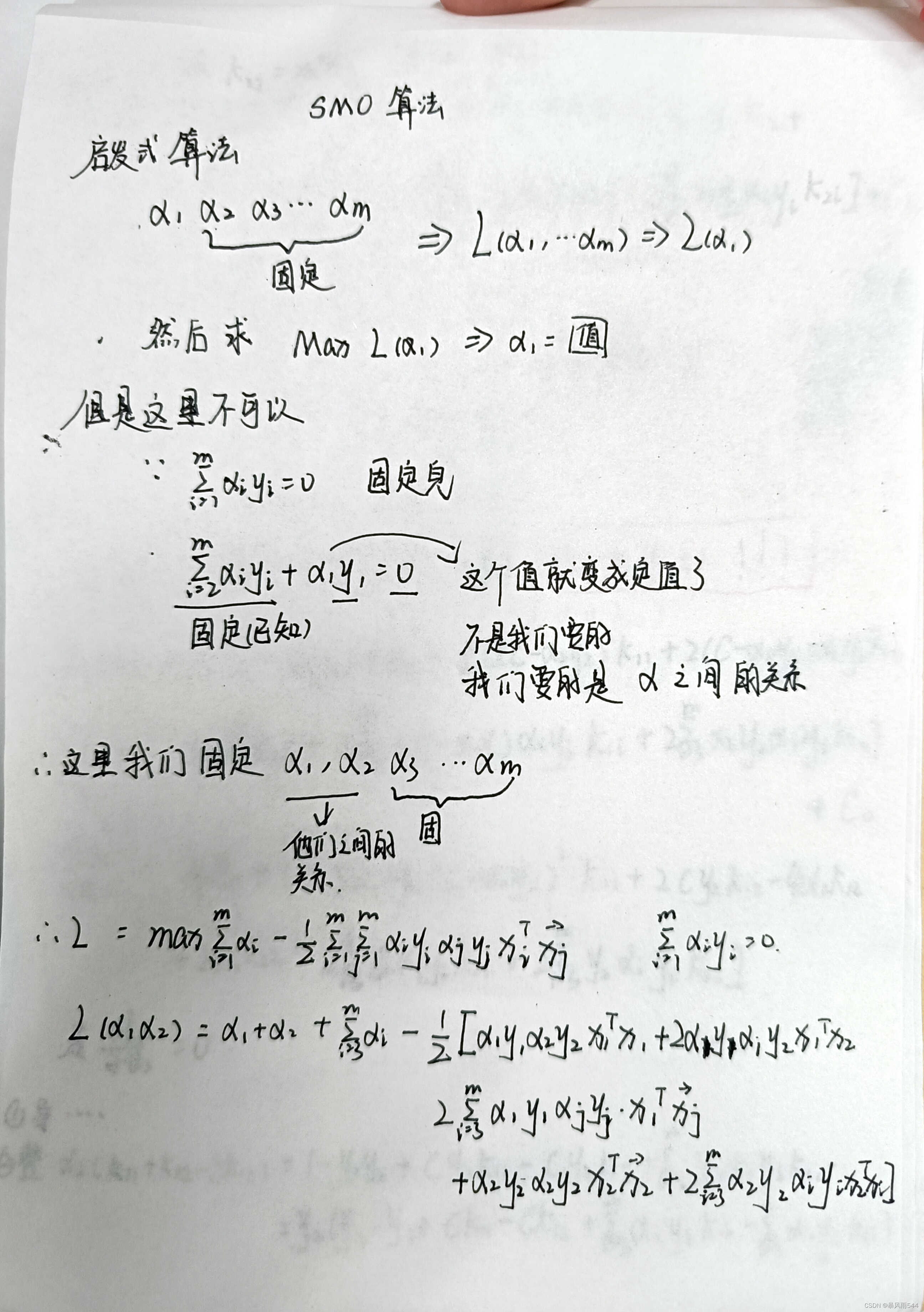

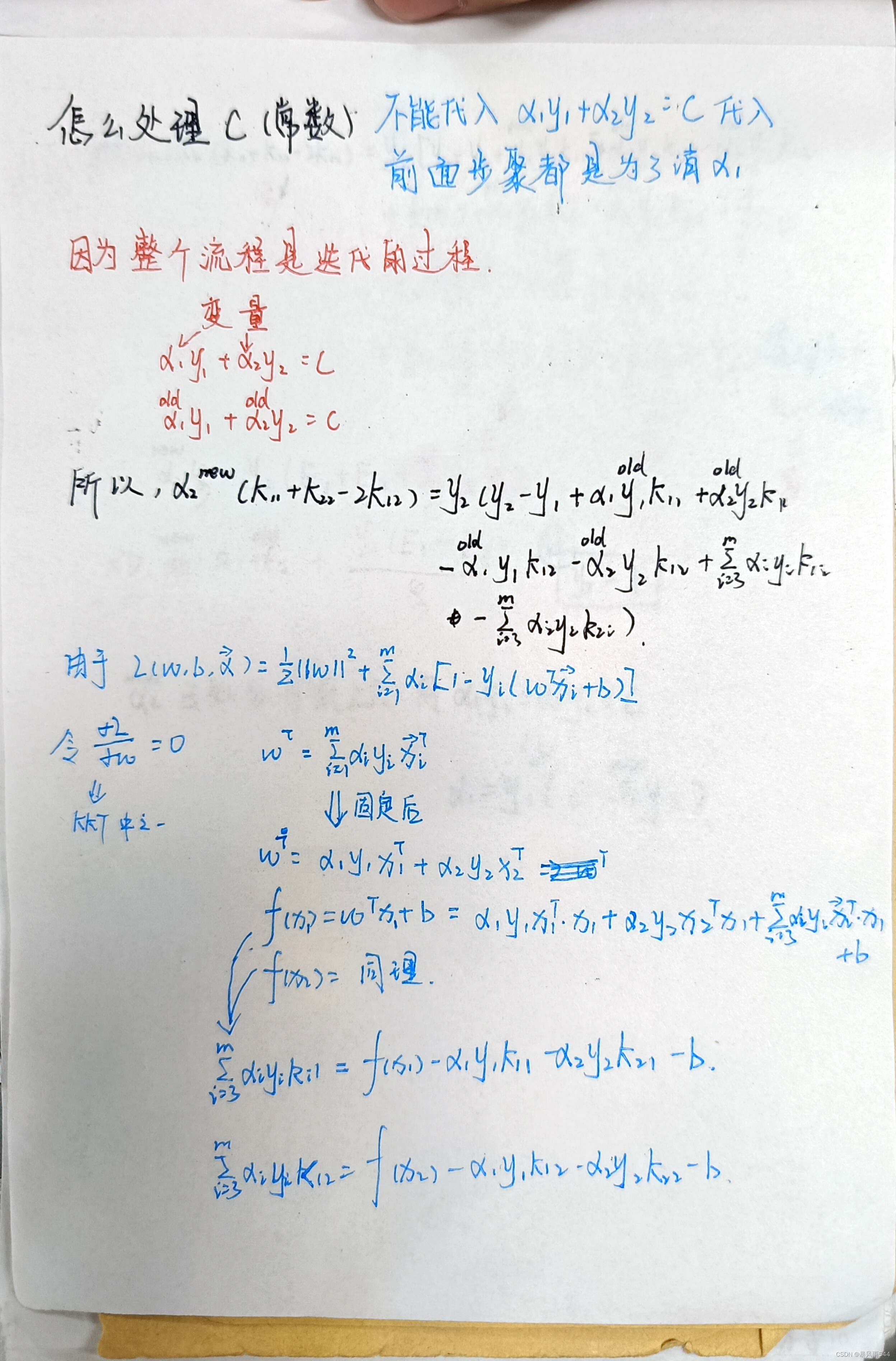

SMO,即序列最小优化算法,是一种用于解决SVM中拉格朗日函数的对偶问题的优化算法。SMO算法的基本思想是,将大优化问题分解为多个小优化问题,每次只优化其中的两个变量,通过依次优化这些变量来求解整个问题。具体而言,SMO算法每次选择两个变量进行更新,而其它变量不变。这两个变量可以通过启发式的方法(如最大化步长)来选择。SMO算法中用到了KKT条件,通过检查KKT条件来判断哪些变量应该参与到优化中。同时,SMO算法还利用了SMO中的启发式规则,可以大大降低求解SVM的时间。

1199

1199

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?