Reference: Llama3-Tutorial/docs/llava.md at main · SmartFlowAI/Llama3-Tutorial (github.com)

Set up the environment

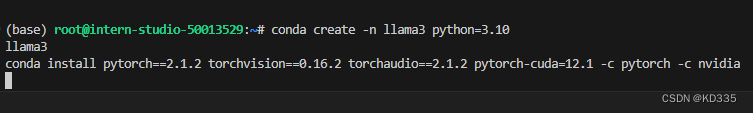

conda create -n llama3 python=3.10

conda activate llama3

conda install pytorch==2.1.2 torchvision==0.16.2 torchaudio==2.1.2 pytorch-cuda=12.1 -c pytorch -c nvidia

cd ~

git clone -b v0.1.18 https://github.com/InternLM/XTuner

cd XTuner

pip install -e .[all]

Clone this tutorial's repository

cd ~

git clone https://github.com/SmartFlowAI/Llama3-Tutorial

On InternStudio, run the following commands

mkdir -p ~/model
cd ~/model
ln -s /root/share/new_models/meta-llama/Meta-Llama-3-8B-Instruct .

mkdir -p ~/model
cd ~/model
ln -s /root/share/new_models/openai/clip-vit-large-patch14-336 .

mkdir -p ~/model
cd ~/model
ln -s /root/share/new_models/xtuner/llama3-llava-iter_2181.pth .

cd ~
git clone https://github.com/InternLM/tutorial -b camp2
python ~/tutorial/xtuner/llava/llava_data/repeat.py \
    -i ~/tutorial/xtuner/llava/llava_data/unique_data.json \
    -o ~/tutorial/xtuner/llava/llava_data/repeated_data.json \
    -n 200

Then start training
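Before launching, it can help to confirm that the three symlinks and the repeated dataset actually exist, since a dangling link would otherwise only surface once training starts. The following is a minimal sketch, not part of the referenced tutorial; `check_paths` is a hypothetical helper name.

```shell
# Hypothetical helper: report every argument that does not exist.
# Returns 0 if all paths exist, 1 otherwise.
check_paths() {
    local missing=0
    local p
    for p in "$@"; do
        # -e follows symlinks, so a dangling link counts as missing.
        if [ ! -e "$p" ]; then
            echo "missing: $p" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Example call, using the paths from the steps above:
# check_paths \
#     ~/model/Meta-Llama-3-8B-Instruct \
#     ~/model/clip-vit-large-patch14-336 \
#     ~/model/llama3-llava-iter_2181.pth \
#     ~/tutorial/xtuner/llava/llava_data/repeated_data.json
```

If the function prints nothing and returns 0, the inputs expected by the training step are in place.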

xtuner train ~/Llama3-Tutorial/configs/llama3-llava/llava_llama3_8b_instruct_qlora_clip_vit_large_p14_336_lora_e1_finetune.py --work-dir ~/llama3_llava_pth --deepspeed deepspeed_zero2

After training finishes, convert both the original image projector and our fine-tuned image projector to HuggingFace format.
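The conversion commands assume the fine-tuned checkpoint is named `iter_1200.pth`. If your run saved a different iteration, a small helper can pick the highest-numbered `iter_*.pth` entry in the work directory. This is a sketch under that naming assumption; `latest_checkpoint` is a hypothetical name, not an XTuner command.

```shell
# Hypothetical helper: print the iter_*.pth entry with the highest
# iteration number inside a work directory; return 1 if there is none.
latest_checkpoint() {
    local dir="$1"
    local best=""
    local best_n=-1
    local f n
    for f in "$dir"/iter_*.pth; do
        [ -e "$f" ] || continue          # glob matched nothing
        n="${f##*/iter_}"                # strip directory and "iter_" prefix
        n="${n%.pth}"                    # strip ".pth" suffix
        case "$n" in
            ''|*[!0-9]*) continue ;;     # skip non-numeric names
        esac
        if [ "$n" -gt "$best_n" ]; then
            best_n="$n"
            best="$f"
        fi
    done
    [ -n "$best" ] || return 1
    echo "$best"
}

# Example call:
# latest_checkpoint ~/llama3_llava_pth
```

The comparison is numeric rather than lexical, so `iter_1200.pth` ranks above `iter_500.pth`. The printed path can be passed as the second argument of `xtuner convert pth_to_hf` in place of the hard-coded file name.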

xtuner convert pth_to_hf ~/Llama3-Tutorial/configs/llama3-llava/llava_llama3_8b_instruct_qlora_clip_vit_large_p14_336_lora_e1_finetune.py \
    ~/model/llama3-llava-iter_2181.pth \
    ~/llama3_llava_pth/pretrain_iter_2181_hf

xtuner convert pth_to_hf ~/Llama3-Tutorial/configs/llama3-llava/llava_llama3_8b_instruct_qlora_clip_vit_large_p14_336_lora_e1_finetune.py \
    ~/llama3_llava_pth/iter_1200.pth \
    ~/llama3_llava_pth/iter_1200_hf

Once the conversion is done, we can try out the fine-tuned model from the command line.

Question 1: Describe this image.

Question 2: What is the equipment in the image?

Pretrained model

export MKL_SERVICE_FORCE_INTEL=1
xtuner chat /root/model/Meta-Llama-3-8B-Instruct \
    --visual-encoder /root/model/clip-vit-large-patch14-336 \
    --llava /root/llama3_llava_pth/pretrain_iter_2181_hf \
    --prompt-template llama3_chat \
    --image /root/tutorial/xtuner/llava/llava_data/test_img/oph.jpg

As the output shows, the pretrained model only labels the image and cannot answer the questions.

Fine-tuned model

export MKL_SERVICE_FORCE_INTEL=1
xtuner chat /root/model/Meta-Llama-3-8B-Instruct \
    --visual-encoder /root/model/clip-vit-large-patch14-336 \
    --llava /root/llama3_llava_pth/iter_1200_hf \
    --prompt-template llama3_chat \
    --image /root/tutorial/xtuner/llava/llava_data/test_img/oph.jpg

After fine-tuning, the model can answer our questions based on the image.
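The two chat invocations differ only in the `--llava` argument, so the comparison can be wrapped in one function that takes the projector directory as a parameter. The sketch below is an illustration only: `llava_chat` and the `DRY_RUN` switch are hypothetical names introduced here, while every `xtuner chat` flag is copied from the commands above.

```shell
# Hypothetical wrapper around the two `xtuner chat` calls above.
# $1: directory of a converted (HuggingFace-format) image projector.
# With DRY_RUN=1 the command line is printed instead of executed.
llava_chat() {
    local projector="$1"
    # Rebuild the positional parameters as the full command line.
    set -- xtuner chat /root/model/Meta-Llama-3-8B-Instruct \
        --visual-encoder /root/model/clip-vit-large-patch14-336 \
        --llava "$projector" \
        --prompt-template llama3_chat \
        --image /root/tutorial/xtuner/llava/llava_data/test_img/oph.jpg
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        MKL_SERVICE_FORCE_INTEL=1 "$@"
    fi
}

# Example calls:
# llava_chat /root/llama3_llava_pth/pretrain_iter_2181_hf
# llava_chat /root/llama3_llava_pth/iter_1200_hf
```

Running both calls with the same two questions makes the before and after behavior easy to compare side by side.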
