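In probability theory, the Chernoff bound gives exponentially decreasing bounds on the tail distributions of sums of independent random variables.

It is related to the (historically earliest) Bernstein inequalities and to Hoeffding's inequality.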

Definition

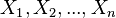

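Let $X_1, \ldots, X_n$ be independent Bernoulli random variables, each equal to 1 with probability $p > 1/2$. The probability that more than $n/2$ of the events $\{X_k = 1\}$ occur simultaneously, a number in $[0,1]$, has the exact value

$$S = \sum_{i=\lfloor n/2 \rfloor + 1}^{n} \binom{n}{i} p^i (1-p)^{n-i}.$$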

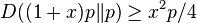

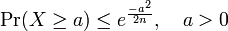

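The Chernoff bound shows that $S$ has the following lower bound:

$$S \ge 1 - e^{-\frac{1}{2p} n \left(p - \frac{1}{2}\right)^2}.$$

- The $S$ above denotes a probability.

- The $S$ below denotes the total number of times the event occurs.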

Indeed, noticing that $\mu = np$, we get by the multiplicative form of the Chernoff bound (see below or Corollary 13.3 in Sinclair's class notes),

![P\left[S\le\frac{n}{2}\right]=P\left[S\le\left(1-\left(1-\frac{1}{2p}\right)\right)\mu\right]\leq e^{-\frac{\mu}{2}\left(1-\frac{1}{2p}\right)^2}=e^{-\frac{1}{2p}n\left(p-\frac{1}{2}\right)^2}](http://upload.wikimedia.org/math/1/7/1/171250593bc79b99fd81cb1d5dc9aeb7.png)
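To see how loose or tight the lower bound is, the following minimal sketch compares it with the exact majority probability. It assumes i.i.d. Bernoulli($p$) trials with $p > 1/2$; the function names are illustrative and not part of the original text.

```python
import math

def majority_probability(n: int, p: float) -> float:
    """Exact probability that more than n/2 of n independent Bernoulli(p) trials succeed."""
    return sum(math.comb(n, i) * p**i * (1 - p) ** (n - i)
               for i in range(n // 2 + 1, n + 1))

def chernoff_lower_bound(n: int, p: float) -> float:
    """Lower bound 1 - exp(-n (p - 1/2)^2 / (2p)); assumes p > 1/2."""
    return 1.0 - math.exp(-n * (p - 0.5) ** 2 / (2 * p))

# Print the exact value next to the bound for a 60% biased coin.
for n in (10, 50, 150):
    print(n, majority_probability(n, 0.6), chernoff_lower_bound(n, 0.6))
```

The bound is always below the exact value, and the gap illustrates that Chernoff-type estimates trade tightness for a simple closed form.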

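This result admits various generalizations as outlined below. One can encounter many flavours of Chernoff bounds: the original additive form (which bounds the absolute error) and the more practical multiplicative form (which bounds the error relative to the mean).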

A motivating example

The simplest case of Chernoff bounds is used to bound the success probability of majority agreement for n independent, equally likely events.

A simple motivating example is to consider a biased coin. One side (say, heads) is more likely to come up than the other, but you don't know which and would like to find out. The obvious solution is to flip it many times and then choose the side that comes up the most. But how many times do you have to flip it to be confident that you've chosen correctly?

In our example, let $X_i$ denote the event that the $i$th coin flip comes up heads.

If the coin is noticeably biased, say coming up on one side 60% of the time ($p = 0.6$), then we can guess that side with 95% accuracy after 150 flips. If it is 90% biased, then a mere 10 flips suffice. If the coin is biased only by a tiny amount, as most real coins are, the number of necessary flips becomes much larger.

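More practically, the Chernoff bound is used in randomized algorithms to bound the number of runs needed to determine a value by majority agreement, up to a specified probability.

Notice that if $p$ is a constant, the error probability decreases exponentially as $n$ grows.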

The first step in the proof of Chernoff bounds

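The Chernoff bound for a random variable $X = \sum_i X_i$, the sum of independent random variables $X_i$, is obtained by applying Markov's inequality to $e^{tX}$ for a well-chosen value of $t$.

For any $t > 0$,

$$\Pr\left[X \ge a\right] = \Pr\left[e^{tX} \ge e^{ta}\right] \le \frac{E[e^{tX}]}{e^{ta}} = \frac{\prod_i E[e^{tX_i}]}{e^{ta}}.$$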

In particular, optimizing over $t$ and using independence, we obtain

![\Pr\left[X \ge a\right] \leq \min_{t>0} {\prod_i E[e^{tX_i}] \over e^{ta}}.](http://upload.wikimedia.org/math/6/c/5/6c56c77afc8064e72585657a857015c5.png)

(1)
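Inequality (1) can be evaluated numerically. The sketch below assumes independent Bernoulli($p_i$) variables, for which $E[e^{tX_i}] = 1 - p_i + p_i e^t$, and minimizes over a finite grid of $t$ values rather than solving for the optimum; every grid point yields a valid bound, so the grid minimum is valid too. The function names and the grid are illustrative choices.

```python
import math

def chernoff_upper_tail(ps, a, t_grid=None):
    """Grid minimum over t > 0 of prod_i E[exp(t X_i)] / exp(t a) for Bernoulli(p_i)."""
    if t_grid is None:
        t_grid = [k / 100 for k in range(1, 501)]
    best = 1.0  # the limit t -> 0 gives the trivial bound 1
    for t in t_grid:
        log_mgf = sum(math.log(1 - p + p * math.exp(t)) for p in ps)
        best = min(best, math.exp(log_mgf - t * a))
    return best

def exact_upper_tail(ps, a):
    """Exact Pr[X >= a] for X a sum of independent Bernoulli(p_i), by dynamic programming."""
    dist = [1.0]
    for p in ps:
        nxt = [0.0] * (len(dist) + 1)
        for k, w in enumerate(dist):
            nxt[k] += w * (1 - p)
            nxt[k + 1] += w * p
        dist = nxt
    return sum(w for k, w in enumerate(dist) if k >= a)

ps = [0.3] * 40
for a in (15, 20, 25):
    print(a, exact_upper_tail(ps, a), chernoff_upper_tail(ps, a))
```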

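Similarly,

$$\Pr\left[X \le a\right] = \Pr\left[e^{-tX} \ge e^{-ta}\right]$$

and so,

$$\Pr\left[X \le a\right] \le \min_{t>0} e^{ta} \prod_i E[e^{-tX_i}].$$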

Precise statements and proofs

Theorem for additive form (absolute error)

The following theorem is due to Wassily Hoeffding and hence is called the Chernoff-Hoeffding theorem.

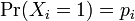

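Assume random variables $X_1, X_2, \ldots, X_m$ are i.i.d. Let ![p = E \left [X_i \right ]](http://upload.wikimedia.org/math/6/5/e/65e8f48e9c48a7831e863575eef6ddd0.png), $X_i \in \{0, 1\}$, and $\varepsilon > 0$. Then

$$\Pr\left[\frac{1}{m}\sum X_i \ge p + \varepsilon\right] \le \left(\left(\frac{p}{p+\varepsilon}\right)^{p+\varepsilon}\left(\frac{1-p}{1-p-\varepsilon}\right)^{1-p-\varepsilon}\right)^m = e^{-D(p+\varepsilon\|p)m}$$

and

$$\Pr\left[\frac{1}{m}\sum X_i \le p - \varepsilon\right] \le \left(\left(\frac{p}{p-\varepsilon}\right)^{p-\varepsilon}\left(\frac{1-p}{1-p+\varepsilon}\right)^{1-p+\varepsilon}\right)^m = e^{-D(p-\varepsilon\|p)m},$$

where

$$D(x\|y) = x\log\frac{x}{y} + (1-x)\log\frac{1-x}{1-y}$$

is the Kullback-Leibler divergence between Bernoulli distributed random variables with parameters $x$ and $y$ respectively.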

If $p \ge 1/2$, then ![\Pr\left[ X>mp+x \right] \leq \exp(-x^2/2mp(1-p)) .](http://upload.wikimedia.org/math/6/9/7/6976d89bd429a29f9bafeb63e5828b6c.png)
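As a quick numerical illustration of the additive form, the sketch below evaluates $e^{-D(p+\varepsilon\|p)m}$ and compares it with the exact binomial tail. It assumes i.i.d. Bernoulli($p$) variables and $p + \varepsilon < 1$; the helper names are hypothetical.

```python
import math

def kl_bernoulli(x: float, y: float) -> float:
    """D(x || y) between Bernoulli(x) and Bernoulli(y), with 0 log 0 taken as 0."""
    def term(a, b):
        return 0.0 if a == 0 else a * math.log(a / b)
    return term(x, y) + term(1 - x, 1 - y)

def chernoff_hoeffding_bound(m: int, p: float, eps: float) -> float:
    """Upper bound exp(-D(p + eps || p) m) on Pr[(1/m) sum X_i >= p + eps]."""
    return math.exp(-kl_bernoulli(p + eps, p) * m)

def exact_tail(m: int, p: float, eps: float) -> float:
    """Exact Pr[sum X_i >= m (p + eps)] for X_i i.i.d. Bernoulli(p)."""
    threshold = math.ceil(m * (p + eps) - 1e-9)  # tolerance guards against float rounding
    return sum(math.comb(m, k) * p**k * (1 - p) ** (m - k)
               for k in range(threshold, m + 1))

print(exact_tail(100, 0.5, 0.1), chernoff_hoeffding_bound(100, 0.5, 0.1))
```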

Proof

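The proof starts from the general inequality (1) above. Let $q = p + \varepsilon$. Taking $a = mq$ in (1), we obtain

$$\Pr\left[\frac{1}{m}\sum X_i \ge q\right] \le \inf_{t>0}\frac{E\left[\prod e^{tX_i}\right]}{e^{tmq}} = \inf_{t>0}\left[\frac{E\left[e^{tX_i}\right]}{e^{tq}}\right]^m.$$

Now, knowing that ![\Pr[X_i = 1] = p](http://upload.wikimedia.org/math/0/7/5/07572387fa1a159f3dc2807c165596f5.png), ![\Pr[X_i = 0] = (1-p)](http://upload.wikimedia.org/math/7/1/0/710bb7f75b6461c7a9f8cc0ee6075e38.png), we have

$$\left[\frac{E\left[e^{tX_i}\right]}{e^{tq}}\right]^m = \left[\frac{pe^t + (1-p)}{e^{tq}}\right]^m = \left[pe^{(1-q)t} + (1-p)e^{-qt}\right]^m.$$

Therefore we can easily compute the infimum, using calculus and some logarithms. Thus,

$$\frac{d}{dt}\log\left(pe^{(1-q)t} + (1-p)e^{-qt}\right) = \frac{(1-q)pe^{(1-q)t} - q(1-p)e^{-qt}}{pe^{(1-q)t} + (1-p)e^{-qt}}.$$

Setting the last equation to zero and solving, we have

$$(1-q)pe^{(1-q)t} = q(1-p)e^{-qt}, \qquad (1-q)pe^{t} = q(1-p),$$

so that $e^t = \frac{(1-p)q}{(1-q)p}$.

Thus, $t = \log\left(\frac{(1-p)q}{(1-q)p}\right)$.

As $q = p + \varepsilon > p$, we see that $t > 0$, so our bound is satisfied on $t$. Having solved for $t$, we can plug back into the equations above to find that

$$\begin{align} \log\left(pe^{(1-q)t} + (1-p)e^{-qt}\right) &= \log\left[e^{-qt}\left(1-p+pe^t\right)\right] \\ &= \log\left[e^{-q\log\left(\frac{(1-p)q}{(1-q)p}\right)}\right] + \log\left[1-p+pe^{\log\left(\frac{1-p}{1-q}\right)}e^{\log\frac{q}{p}}\right] \\ &= -q\log\frac{1-p}{1-q} - q\log\frac{q}{p} + \log\left[1-p+p\left(\frac{1-p}{1-q}\right)\frac{q}{p}\right] \\ &= -q\log\frac{1-p}{1-q} - q\log\frac{q}{p} + \log\left[\frac{(1-p)(1-q)}{1-q} + \frac{(1-p)q}{1-q}\right] \\ &= -q\log\frac{q}{p} + (1-q)\log\frac{1-p}{1-q} = -D(q\|p). \end{align}$$

We now have our desired result, that

$$\Pr\left[\frac{1}{m}\sum X_i \ge p + \varepsilon\right] \le e^{-D(p+\varepsilon\|p)m}.$$

To complete the proof for the symmetric case, we simply define the random variable $Y_i = 1 - X_i$, apply the same proof, and plug it into our bound.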
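The optimization step of the proof can be checked numerically. The following sketch assumes the per-variable factor $pe^{(1-q)t} + (1-p)e^{-qt}$ with $q > p$ and the minimizer $t = \log\frac{(1-p)q}{(1-q)p}$; it confirms that the logarithm of the factor at that point equals $-D(q\|p)$ and that no grid point does better. Names and the sample values of $p$ and $q$ are illustrative.

```python
import math

def log_objective(t: float, p: float, q: float) -> float:
    """log(p e^{(1-q)t} + (1-p) e^{-qt}), the per-variable exponent in the bound."""
    return math.log(p * math.exp((1 - q) * t) + (1 - p) * math.exp(-q * t))

def optimal_t(p: float, q: float) -> float:
    """Stationary point t = log((1-p) q / ((1-q) p)); positive when q > p."""
    return math.log((1 - p) * q / ((1 - q) * p))

def kl(q: float, p: float) -> float:
    """D(q || p) for Bernoulli parameters strictly between 0 and 1."""
    return q * math.log(q / p) + (1 - q) * math.log((1 - q) / (1 - p))

p, q = 0.3, 0.45
t_star = optimal_t(p, q)
print(t_star, log_objective(t_star, p, q), -kl(q, p))
```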

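Simpler bounds

A simpler bound follows by relaxing the theorem using $D(p+\varepsilon\|p) \ge 2\varepsilon^2$, which follows from the convexity of $D(p+\varepsilon\|p)$ and the fact that

$$\frac{d^2}{d\varepsilon^2}D(p+\varepsilon\|p) = \frac{1}{(p+\varepsilon)(1-p-\varepsilon)} \ge 4 = \frac{d^2}{d\varepsilon^2}\left(2\varepsilon^2\right).$$

This results in a special case of Hoeffding's inequality. Sometimes the bound $D((1+x)p\|p) \ge \frac{1}{4}x^2 p$ for $-\frac{1}{2} \le x \le \frac{1}{2}$, which is stronger for $p < \frac{1}{8}$, is also used.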
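The relaxation behind the simpler bound can be sanity-checked on a grid. This sketch assumes the inequality in question is $D(p+\varepsilon\|p) \ge 2\varepsilon^2$ for Bernoulli parameters (the form that leads to Hoeffding's inequality); the grid resolution and function names are arbitrary choices, and a grid check is evidence, not a proof.

```python
import math

def kl_bernoulli(x: float, y: float) -> float:
    """D(x || y) for Bernoulli parameters strictly between 0 and 1."""
    return x * math.log(x / y) + (1 - x) * math.log((1 - x) / (1 - y))

def worst_gap(steps: int = 100) -> float:
    """Smallest value of D(x || p) - 2 (x - p)^2 over an interior grid of (p, x)."""
    worst = math.inf
    for i in range(1, steps):
        p = i / steps
        for j in range(1, steps):
            x = j / steps  # x plays the role of p + eps
            worst = min(worst, kl_bernoulli(x, p) - 2 * (x - p) ** 2)
    return worst

print(worst_gap())
```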

Theorem for multiplicative form of Chernoff bound (relative error)

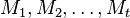

Let random variables

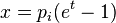

. Then, if we let

. Then, if we let

, for any

, for any
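The multiplicative form allows a different success probability for each variable. The sketch below assumes the bound $\left(e^\delta/(1+\delta)^{1+\delta}\right)^\mu$ on $\Pr[X > (1+\delta)\mu]$ and compares it with the exact tail, computed by dynamic programming over a hypothetical list of probabilities `ps`.

```python
import math

def multiplicative_bound(mu: float, delta: float) -> float:
    """(e^delta / (1+delta)^(1+delta))^mu, evaluated in log space for stability."""
    return math.exp(mu * (delta - (1 + delta) * math.log(1 + delta)))

def exact_tail_above(ps, threshold: float) -> float:
    """Exact Pr[X > threshold] for X a sum of independent Bernoulli(p_i)."""
    dist = [1.0]
    for p in ps:
        nxt = [0.0] * (len(dist) + 1)
        for k, w in enumerate(dist):
            nxt[k] += w * (1 - p)
            nxt[k + 1] += w * p
        dist = nxt
    # small tolerance so float rounding in the threshold cannot admit k == threshold
    return sum(w for k, w in enumerate(dist) if k > threshold + 1e-9)

ps = [0.1, 0.2, 0.3] * 10  # illustrative, non-identical success probabilities
mu = sum(ps)
for delta in (0.25, 0.5, 1.0):
    print(delta, exact_tail_above(ps, (1 + delta) * mu), multiplicative_bound(mu, delta))
```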

Proof

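According to (1),

$$\begin{align} \Pr[X > (1+\delta)\mu] &\le \inf_{t>0}\frac{\mathbf{E}\left[\prod_{i=1}^n \exp(tX_i)\right]}{\exp(t(1+\delta)\mu)} \\ &= \inf_{t>0}\frac{\prod_{i=1}^n \mathbf{E}[\exp(tX_i)]}{\exp(t(1+\delta)\mu)} \\ &= \inf_{t>0}\frac{\prod_{i=1}^n\left[p_i\exp(t) + (1-p_i)\right]}{\exp(t(1+\delta)\mu)}. \end{align}$$

The third line above follows because $e^{tX_i}$ takes the value $e^t$ with probability $p_i$ and the value 1 with probability $1 - p_i$. This is identical to the calculation above in the proof of the theorem for additive form (absolute error).

Rewriting $p_i e^t + (1-p_i)$ as $p_i(e^t - 1) + 1$ and recalling that $1 + x \le e^x$ (with strict inequality if $x > 0$), we set $x = p_i(e^t - 1)$. The same result can be obtained by directly replacing $a$ in the equation for the Chernoff bound with $(1+\delta)\mu$.[1]

Thus,

$$\Pr[X > (1+\delta)\mu] < \frac{\prod_{i=1}^n \exp(p_i(e^t-1))}{\exp(t(1+\delta)\mu)} = \frac{\exp\left((e^t-1)\sum_{i=1}^n p_i\right)}{\exp(t(1+\delta)\mu)} = \frac{\exp((e^t-1)\mu)}{\exp(t(1+\delta)\mu)}.$$

If we simply set $t = \log(1+\delta)$, so that $t > 0$ for $\delta > 0$, we can substitute and find

$$\frac{\exp((e^t-1)\mu)}{\exp(t(1+\delta)\mu)} = \frac{\exp((1+\delta-1)\mu)}{(1+\delta)^{(1+\delta)\mu}} = \left[\frac{\exp(\delta)}{(1+\delta)^{(1+\delta)}}\right]^\mu.$$

This proves the result desired. A similar proof strategy can be used to show that

$$\Pr[X < (1-\delta)\mu] < \exp(-\mu\delta^2/2).$$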
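The choice $t = \log(1+\delta)$ in the proof is in fact the minimizer of the exponent $(e^t - 1)\mu - t(1+\delta)\mu$, since the exponent is convex in $t$ and its derivative vanishes there. A small sketch checking this on a grid, with illustrative values of $\mu$ and $\delta$ and a hypothetical function name:

```python
import math

def exponent(t: float, mu: float, delta: float) -> float:
    """Exponent of the bound exp((e^t - 1) mu) / exp(t (1 + delta) mu)."""
    return (math.exp(t) - 1) * mu - t * (1 + delta) * mu

mu, delta = 6.0, 0.5
t_star = math.log(1 + delta)

# Closed form of the exponent at t_star: mu * (delta - (1 + delta) log(1 + delta)).
closed_form = mu * (delta - (1 + delta) * math.log(1 + delta))
print(t_star, exponent(t_star, mu, delta), closed_form)
```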

Better Chernoff bounds for some special cases

We can obtain stronger bounds using simpler proof techniques for some special cases of symmetric random variables.

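Let $X = \sum_{i=1}^n X_i$, where the $X_i$ are independent.

(a) $\Pr(X_i = 1) = \Pr(X_i = -1) = \frac{1}{2}$.

Then,

$$\Pr(X \ge a) \le e^{-a^2/(2n)}, \quad a > 0,$$

and therefore also

$$\Pr(|X| \ge a) \le 2e^{-a^2/(2n)}, \quad a > 0.$$

(b) ![\Pr(X_i = 1) = \Pr(X_i = 0) = \frac{1}{2}, \mathbf{E}[X] = \mu = \frac{n}{2}](http://upload.wikimedia.org/math/6/8/1/68163779a5d3278dc271f8e0493ed9c3.png)

Then,

$$\Pr(X \ge \mu + a) \le e^{-2a^2/n}, \quad a > 0,$$

$$\Pr(X \le \mu - a) \le e^{-2a^2/n}, \quad 0 < a < \mu,$$

$$\Pr(X \ge (1+\delta)\mu) \le e^{-\delta^2\mu}, \quad \delta > 0,$$

$$\Pr(X \le (1-\delta)\mu) \le e^{-\delta^2\mu}, \quad 0 < \delta < 1.$$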
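For case (b), fair 0/1 coins, the tail can be computed exactly with integer arithmetic. The sketch below assumes the standard bound $\Pr(X \ge n/2 + a) \le e^{-2a^2/n}$ for this case and compares it with the exact value; the function names are illustrative.

```python
import math

def fair_coin_upper_tail(n: int, a: float) -> float:
    """Exact Pr[X >= n/2 + a] for X ~ Binomial(n, 1/2)."""
    threshold = math.ceil(n / 2 + a - 1e-9)  # tolerance guards against float rounding
    return sum(math.comb(n, k) for k in range(threshold, n + 1)) / 2 ** n

def fair_coin_bound(n: int, a: float) -> float:
    """Assumed bound exp(-2 a^2 / n) on the same tail."""
    return math.exp(-2 * a * a / n)

for n, a in ((100, 10), (100, 20), (1000, 50)):
    print(n, a, fair_coin_upper_tail(n, a), fair_coin_bound(n, a))
```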

Applications of Chernoff bound

Chernoff bounds have very useful applications in set balancing and packet routing in sparse networks.

The set balancing problem arises while designing statistical experiments. Typically, given the features of each participant in the experiment, we need to divide the participants into two disjoint groups such that each feature is as evenly balanced as possible between the two groups.

Chernoff bounds are also used to obtain tight bounds for permutation routing problems, which reduce network congestion while routing packets in sparse networks.
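A minimal sketch of the set balancing setup, under stated assumptions: participants are assigned to the two groups uniformly at random, `features[j][i]` is 1 when participant `i` has feature `j`, and the imbalance of a feature is the absolute difference of its counts in the two groups. The threshold $\sqrt{4 m \ln n}$ printed for comparison (with $m$ participants and $n$ features) is the one usually quoted in textbook treatments of this problem and is included here as an assumption, not taken from the text above.

```python
import math
import random

def random_split_imbalance(features, rng) -> int:
    """Largest per-feature imbalance for a uniformly random split into two groups."""
    m = len(features[0])
    groups = [rng.choice((-1, 1)) for _ in range(m)]  # +1 / -1 encodes the two groups
    return max(abs(sum(g for g, has in zip(groups, row) if has)) for row in features)

rng = random.Random(0)
n_features, n_participants = 20, 200
features = [[rng.randint(0, 1) for _ in range(n_participants)]
            for _ in range(n_features)]
worst = random_split_imbalance(features, rng)
print(worst, math.sqrt(4 * n_participants * math.log(n_features)))
```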

Matrix Chernoff bound

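Rudolf Ahlswede and Andreas Winter introduced a Chernoff bound for matrix-valued random variables.

If $M$ is distributed according to some distribution over $d \times d$ matrices, and if $M_1, \ldots, M_t$ are independent copies of $M$, then for any $\varepsilon > 0$,

$$\Pr\left(\bigg\Vert \frac{1}{t}\sum_{i=1}^t M_i - \mathbf{E}[M] \bigg\Vert_2 > \varepsilon\right) \le d\exp\left(-C\frac{\varepsilon^2 t}{\gamma^2}\right),$$

where $\Vert M \Vert_2 \le \gamma$ holds almost surely and $C > 0$ is an absolute constant.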

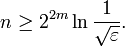

Notice that the number of samples in the inequality depends logarithmically on $d$. In general, unfortunately, such a dependency is inevitable: take for example a diagonal random sign matrix of dimension $d \times d$. The operator norm of the sum of $t$ independent samples is precisely the maximum deviation among $d$ independent random walks of length $t$. In order to achieve a fixed bound on the maximum deviation with constant probability, it is easy to see that $t$ should grow logarithmically with $d$ in this scenario.
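The diagonal random sign example can be simulated without any matrix library, because the sum of diagonal matrices is diagonal and its operator norm is the largest absolute diagonal entry. The sketch below assumes each diagonal entry of each sample is an independent uniform $\pm 1$; the function name and the seed are illustrative.

```python
import random

def max_walk_deviation(d: int, t: int, seed: int = 0) -> int:
    """Operator norm of the sum of t diagonal random sign matrices of size d x d.

    Each diagonal entry of the sum is an independent +-1 random walk of length t,
    so the norm is the largest absolute end position among d walks.
    """
    rng = random.Random(seed)
    worst = 0
    for _ in range(d):
        position = sum(rng.choice((-1, 1)) for _ in range(t))
        worst = max(worst, abs(position))
    return worst

t = 400
for d in (1, 10, 100, 1000):
    # dividing by t gives the norm of the sample average
    print(d, max_walk_deviation(d, t) / t)
```

Because the walks are generated one after another from the same seed, a larger `d` reuses the walks of a smaller `d`, so the printed deviation can only grow with the dimension.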

The following theorem can be obtained by assuming $M$ has low rank, in order to avoid the dependency on the dimensions.

Theorem without the dependency on the dimensions

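Let $0 < \varepsilon < 1$ and let $M$ be a random symmetric real matrix with ![\Vert \mathbf{E}[M] \Vert_2 \leq 1](http://upload.wikimedia.org/math/1/b/4/1b470987749db4eeec17b453f4c36e4f.png) and $\Vert M \Vert_2 \le \gamma$ almost surely. Assume that each element on the support of $M$ has rank at most $r$. Set

$$t = \Omega\left(\frac{\gamma\log(\gamma/\varepsilon^2)}{\varepsilon^2}\right).$$

If $r \le t$ holds almost surely, then

$$\Pr\left(\bigg\Vert \frac{1}{t}\sum_{i=1}^t M_i - \mathbf{E}[M] \bigg\Vert_2 > \varepsilon\right) \le \frac{1}{\mathbf{poly}(t)},$$

where $M_1, \ldots, M_t$ are i.i.d. copies of $M$.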

![\Pr\left[X \ge a\right] = \Pr\left[e^{tX} \ge e^{ta}\right] \le \frac{ E[e^{tX}]}{e^{ta}} = {\prod_i E[e^{tX_i}]\over e^{ta}}.](http://upload.wikimedia.org/math/0/9/7/0971519306bef0bdad94de5992ff3ce3.png)

![\Pr\left[X \le a\right] = \Pr\left[e^{-tX} \ge e^{-ta}\right]](http://upload.wikimedia.org/math/4/b/a/4bae3d1db62d5384e5d9c4329cda7aa7.png)

![\Pr\left[X \le a\right] \leq \min_{t>0} e^{ta} \prod_i E[e^{-tX_i}] .](http://upload.wikimedia.org/math/c/e/b/ceb77fd03bb34b8a1519c26b55210e6a.png)

![\begin{align} &\Pr\left[ \frac 1 m \sum X_i \geq p + \varepsilon \right] \\ &\qquad\leq \left ( {\left (\frac{p}{p + \varepsilon}\right )}^{p+\varepsilon} {\left (\frac{1 - p}{1 -p - \varepsilon}\right )}^{1 - p- \varepsilon}\right ) ^m = e^{ - D(p+\varepsilon\|p) m} \end{align}](http://upload.wikimedia.org/math/9/7/9/9793d242cfdd24b255ac9e7a78dbf304.png)

![\begin{align} &\Pr\left[ \frac 1 m \sum X_i \leq p - \varepsilon \right] \\ &\qquad\leq \left ( {\left (\frac{p}{p - \varepsilon}\right )}^{p-\varepsilon} {\left (\frac{1 - p}{1 -p + \varepsilon}\right )}^{1 - p+ \varepsilon}\right ) ^m = e^{ - D(p-\varepsilon\|p) m}, \end{align}](http://upload.wikimedia.org/math/3/5/1/351197aef50bc71b58cb8060f43f5962.png)

![\Pr\left[ \frac{1}{m} \sum X_i \ge q\right] \le \inf_{t>0} \frac{E \left[\prod e^{t X_i}\right]}{e^{tmq}} = \inf_{t>0} \left[\frac{ E\left[e^{tX_i} \right] }{e^{tq}}\right]^m .](http://upload.wikimedia.org/math/2/2/9/229d15bdd41dae46e3adfd6689b66fe6.png)

![\left[\frac{ E\left[e^{tX_i} \right] }{e^{tq}}\right]^m = \left[\frac{p e^t + (1-p)}{e^{tq} }\right]^m = [pe^{(1-q)t} + (1-p)e^{-qt}]^m.](http://upload.wikimedia.org/math/8/2/5/8253e790764153366a0b9505d8154a4a.png)

![\begin{align} &\log(pe^{(1-q)t} + (1-p)e^{-qt}) = \log[e^{-qt}(1-p+pe^t)] \\ &\qquad = \log\left[e^{-q \log\left(\frac{(1-p)q}{(1-q)p}\right)}\right] + \log\left[1-p+pe^{\log\left(\frac{1-p}{1-q}\right)}e^{\log\frac{q}{p}}\right] \\ &\qquad = -q\log\frac{1-p}{1-q} -q \log\frac{q}{p} + \log\left[1-p+ p\left(\frac{1-p}{1-q}\right)\frac{q}{p}\right] \\ &\qquad = -q\log\frac{1-p}{1-q} -q \log\frac{q}{p} + \log\left[\frac{(1-p)(1-q)}{1-q}+\frac{(1-p)q}{1-q}\right] \\ &\qquad = -q\log\frac{q}{p} + (1-q)\log\frac{1-p}{1-q} = -D(q \| p). \end{align}](http://upload.wikimedia.org/math/f/7/c/f7c1c492a44353a9e0951de5e3c2da27.png)

![\Pr\left[\frac{1}{m}\sum X_i \ge p + \varepsilon\right] \le e^{-D(p+\varepsilon\|p) m}.](http://upload.wikimedia.org/math/7/6/5/765370d4625926e193b4ba4be8ae9ffb.png)

![\Pr \left[ X > (1+\delta)\mu\right] < \left(\frac{e^\delta}{(1+\delta)^{(1+\delta)}}\right)^\mu.](http://upload.wikimedia.org/math/c/c/6/cc6c1bc14b614b143bde62264eef9285.png)

![\begin{align} \Pr[X > (1 + \delta)\mu)] & \le \inf_{t > 0} \frac{\mathbf{E}\left[\prod_{i=1}^n\exp(tX_i)\right]}{\exp(t(1+\delta)\mu)} \\ & = \inf_{t > 0} \frac{\prod_{i=1}^n\mathbf{E}[\exp(tX_i)]}{\exp(t(1+\delta)\mu)} \\ & = \inf_{t > 0} \frac{\prod_{i=1}^n\left[p_i\exp(t) + (1-p_i)\right]}{\exp(t(1+\delta)\mu)} \end{align}](http://upload.wikimedia.org/math/5/c/5/5c55bef6d6dd76ac309dd1e0cf82be9c.png)

![\begin{align} &\Pr[X > (1+\delta)\mu] < \frac{\prod_{i=1}^n\exp(p_i(e^t-1))}{\exp(t(1+\delta)\mu)} \\ &\qquad = \frac{\exp\left((e^t-1)\sum_{i=1}^n p_i\right)}{\exp(t(1+\delta)\mu)} = \frac{\exp((e^t-1)\mu)}{\exp(t(1+\delta)\mu)}. \end{align}](http://upload.wikimedia.org/math/9/3/8/938ff25f1a0cff33bd2232dbff865ef6.png)

![\frac{\exp((e^t-1)\mu)}{\exp(t(1+\delta)\mu)} = \frac{\exp((1+\delta - 1)\mu)}{(1+\delta)^{(1+\delta)\mu}} = \left[\frac{\exp(\delta)}{(1+\delta)^{(1+\delta)}}\right]^\mu](http://upload.wikimedia.org/math/9/0/1/901e0a65d4e596a7608adf3b1d141cae.png)

![\Pr[X < (1-\delta)\mu] < \exp(-\mu\delta^2/2).](http://upload.wikimedia.org/math/b/7/8/b78466366df8a0f4efaaca5942c0cfcc.png)

![\Pr \left( \bigg\Vert \frac{1}{t} \sum_{i=1}^t M_i - \mathbf{E}[M] \bigg\Vert_2 > \varepsilon \right) \leq d \exp \left( -C \frac{\varepsilon^2 t}{\gamma^2} \right).](http://upload.wikimedia.org/math/4/2/2/422e91b10caad11d4e8f3af28f7e72f1.png)

![\Pr \left( \bigg\Vert \frac{1}{t} \sum_{i=1}^t M_i - \mathbf{E}[M] \bigg\Vert_2 > \varepsilon \right) \leq \frac{1}{\mathbf{poly}(t)}](http://upload.wikimedia.org/math/3/7/3/37300d50c88e67fdd53e24f71d164896.png)
