文章目录

31.Pandas和数据库查询语言SQL的对比

- Pandas:Python最流行的数据处理与数据分析的类库

- SQL:结构化查询语言,用于对MySQL、Oracle等关系型数据库的增删改查

两者都是对“表格型”数据的操作和查询,所以很多语法都能对应起来

对比列表:

- SELECT数据查询

- WHERE按条件查询

- in和not in的条件查询

- groupby分组统计

- JOIN数据关联

- UNION数据合并

- Order Limit先排序后分页

- 取每个分组group的top n

- UPDATE数据更新

- DELETE删除数据

df = pd.read_csv("./datas/titanic/titanic_train.csv")

df.head()

(1)SELECT数据查询

# SQL:

sql = """

SELECT PassengerId, Sex, Age, Survived

FROM titanic

LIMIT 5;

"""

# Pandas

df[["PassengerId", "Sex", "Age", "Survived"]].head(5)

df.head(5)类似select * from table limit 5,查询所有的字段

(2)WHERE按条件查询

# SQL:

sql = """

SELECT *

FROM titanic

where Sex='male' and Age>=20.0 and Age<=40.0

LIMIT 5;

"""

# 使用括号的方式,级联多个条件|

condition = (df["Sex"]=="male") & (df["Age"]>=20.0) & (df["Age"]<=40.0)

condition.value_counts()

df[condition].head(5)

(3)in和not in的条件查询

df["Pclass"].unique()

array([3, 1, 2], dtype=int64)

# SQL:

sql = """

SELECT *

FROM titanic

where Pclass in (1,2)

LIMIT 5;

"""

# in

df[df["Pclass"].isin((1,2))].head()

# not in

df[~df["Pclass"].isin((1,2))].head()

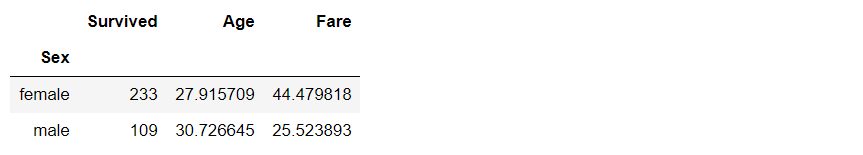

(4)groupby分组统计

单个列的聚合

# SQL:

sql = """

SELECT

-- 分性别的存活人数

sum(Survived),

-- 分性别的平均年龄

mean(Age)

-- 分性别的平均票价

mean(Fare)

FROM titanic

group by Sex

"""

df.groupby("Sex").agg({"Survived":np.sum, "Age":np.mean, "Fare":np.mean})

多个列的聚合

# SQL:

sql = """

SELECT

-- 不同存活和性别分组的,平均年龄

mean(Age)

-- 不同存活和性别分组的,平均票价

mean(Fare)

FROM titanic

group by Survived, Sex

"""

df.groupby(["Survived", "Sex"]).agg({"Age":np.mean, "Fare":np.mean})

(5)JOIN数据关联

# 电影评分数据集,评分表

df_rating = pd.read_csv("./datas/ml-latest-small/ratings.csv")

df_rating.head(5)

# 电影评分数据集,电影信息表

df_movies = pd.read_csv("./datas/ml-latest-small/movies.csv")

df_movies.head(5)

# SQL:

sql = """

SELECT *

FROM

rating join movies

on(rating.movieId=movies.movieId)

limit 5

"""

df_merged = pd.merge(left=df_rating, right=df_movies, on="movieId")

df_merged.head(5)

(6)UNION数据合并

df1 = pd.DataFrame({'city': ['Chicago', 'San Francisco', 'New York City'],

'rank': range(1, 4)})

df1

df2 = pd.DataFrame({'city': ['Chicago', 'Boston', 'Los Angeles'],

'rank': [1, 4, 5]})

df2

# SQL:

sql = """

SELECT city, rank

FROM df1

UNION ALL

SELECT city, rank

FROM df2;

"""

# pandas

pd.concat([df1, df2])

(7)Order Limit先排序后分页

# SQL:

sql = """

SELECT *

from titanic

order by Fare

limit 5

"""

df.sort_values("Fare", ascending=False).head(5)

(8)取每个分组group的top n

# 按(Survived,Sex)分组,取Age的TOP 2

df.groupby(["Survived", "Sex"]).apply(

lambda df:df.sort_values("Age", ascending=False).head(2))

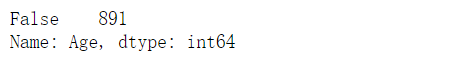

(9)UPDATE数据更新

df.info()

# SQL:

sql = """

UPDATE titanic

set Age=0

where Age is null

"""

condition = df["Age"].isna()

condition.value_counts()

df[condition] = 0

df["Age"].isna().value_counts()

(10)DELETE删除数据

# SQL:

sql = """

DELETE FROM titanic

where Age=0

"""

df_new = df[df["Age"]!=0]

df_new[df_new["Age"]==0]

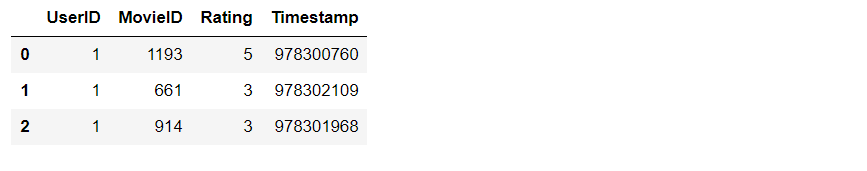

32.Pandas实现groupby聚合后不同列数据统计

df = pd.read_csv(

"./datas/movielens-1m/ratings.dat",

sep="::",

engine='python',

names="UserID::MovieID::Rating::Timestamp".split("::")

)

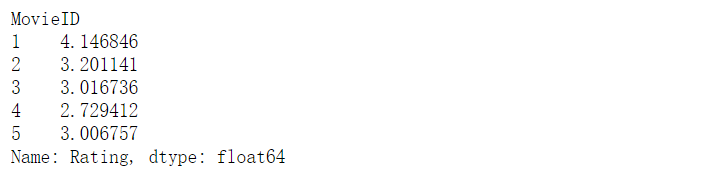

32.1 聚合后单列-单指标统计

# 每个MovieID的平均评分

result = df.groupby("MovieID")["Rating"].mean()

result.head()

32.2 聚合后单列-多指标统计

(1)方法1:agg函数传入多个结果列名=函数名形式

result = df.groupby("MovieID")["Rating"].agg(

mean="mean", max="max", min=np.min

)

result.head()

(2)方法2:agg函数传入字典,key是column名,value是函数列表

# 每个MoiveID的最高评分、最低评分、平均评分

result = df.groupby("MovieID").agg(

{"Rating":['mean', 'max', np.min]}

)

result.head()

result.columns = ['age_mean', 'age_min', 'age_max']

result.head()

32.3 聚合后多列-多指标统计

(1)方法1:agg函数传入字典,key是原列名,value是原列名和函数元组

# 回忆:agg函数的两种形式,等号代表“把结果赋值给新列”,字典/元组代表“对这个列运用这些函数”

result = df.groupby("MovieID").agg(

rating_mean=("Rating", "mean"),

rating_min=("Rating", "min"),

rating_max=("Rating", "max"),

user_count=("UserID", lambda x : x.nunique())

)

result.head()

(2)方法2:agg函数传入字典,key是原列名,value是函数列表

统计后是二级索引,需要做索引处理

result = df.groupby("MovieID").agg(

{

"Rating": ['mean', 'min', 'max'],

"UserID": lambda x :x.nunique()

}

)

result.head()

result["Rating"].head(3)

result.columns = ["rating_mean", "rating_min","rating_max","user_count"]

result.head()

(3)方法3:使用groupby之后apply对每个子df单独统计

def agg_func(x):

"""注意,这个x是子DF"""

# 这个Series会变成一行,字典KEY是列名

return pd.Series({

"rating_mean": x["Rating"].mean(),

"rating_min": x["Rating"].min(),

"rating_max": x["Rating"].max(),

"user_count": x["UserID"].nunique()

})

result = df.groupby("MovieID").apply(agg_func)

result.head()

33.Pandas将Excel存入MySQL

df = pd.read_excel("./course_datas/c23_excel_vlookup/学生信息表.xlsx")

df.head()

# 展示索引的name

df.index.name

df.index.name = "id"

df.head()

创建sqlalchemy对象连接MySQL

SQLAlchemy是Python中的ORM框架, Object-Relational Mapping,把关系数据库的表结构映射到对象上。

- 官网:https://www.sqlalchemy.org/

- 如果sqlalchemy包不存在,用这个命令安装:pip install sqlalchemy

- 需要安装依赖Python库:pip install mysql-connector-python

可以直接执行SQL语句

from sqlalchemy import create_engine

engine = create_engine("mysql+mysqlconnector://root:123456@127.0.0.1:3306/test", echo=False)

33.1 方法1:当数据表不存在时,每次覆盖整个表

每次运行会drop table,新建表

df.to_sql(name='student', con=engine, if_exists="replace")

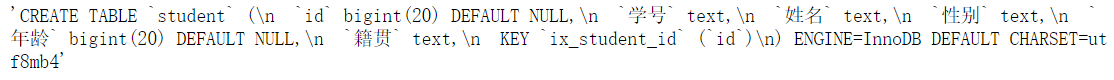

engine.execute("show create table student").first()[1]

print(engine.execute("show create table student").first()[1])

engine.execute("select count(1) from student").first()

(24, )

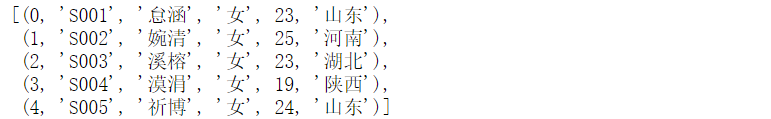

engine.execute("select * from student limit 5").fetchall()

33.2 方法2:当数据表存在时,每次新增数据

场景:每天会新增一部分数据,要添加到数据表,怎么处理?

df_new = df.loc[:4, :]

df_new

df_new.to_sql(name='student', con=engine, if_exists="append")

engine.execute("SELECT * FROM student where id<5 ").fetchall()

问题解决:先根据数据KEY删除旧数据

df_new.index

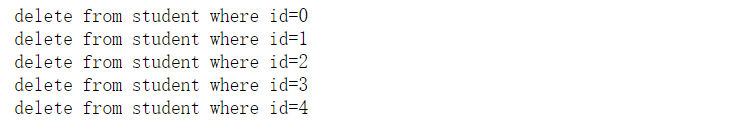

for id in df_new.index:

## 先删除要新增的数据

delete_sql = f"delete from student where id={id}"

print(delete_sql)

engine.execute(delete_sql)

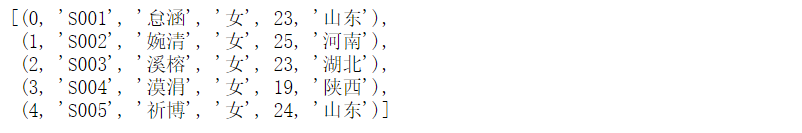

engine.execute("SELECT * FROM student where id<5 ").fetchall()

[ ]

engine.execute("select count(1) from student").first()

(19,)

# 新增数据到表中

df_new.to_sql(name='student', con=engine, if_exists="append")

engine.execute("SELECT * FROM student where id<5 ").fetchall()

engine.execute("SELECT count(1) FROM student").first()

(24,)

34.Pandas处理Excel复杂多列到多行转换

file_path = "./course_datas/c39_explode_to_manyrows/读者提供的数据-输入.xlsx"

df = pd.read_excel(file_path)

(1)把多列合并到一起

# 提取待合并的所有列名,一会可以把它们drop掉

merge_names = list(df.loc[:, "Supplier":].columns.values)

merge_names

def merge_cols(x):

"""

x是一个行Series,把它们按分隔符合并

"""

# 删除为空的列

x = x[x.notna()]

# 使用x.values用于合并

y = x.values

# 合并后的列表,每个元素是"Supplier" + "Supplier PN"对

result = []

# range的步长为2,目的是每两列做合并

for idx in range(0, len(y), 2):

# 使用竖线作为"Supplier" + "Supplier PN"之间的分隔符

result.append(f"{y[idx]}|{y[idx+1]}")

# 将所有两两对,用#分割,返回一个大字符串

return "#".join(result)

# 添加新列,把待合并的所有列变成一个大字符串

df["merge"] = df.loc[:, "Supplier":].apply(merge_cols, axis=1)

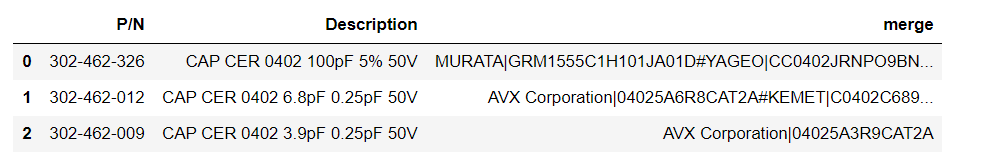

df

# 把不用的列删除掉

df.drop(merge_names, axis=1, inplace=True)

df

(2)使用explode把一列变多行

# 先将merge列变成list的形式

df["merge"] = df["merge"].str.split("#")

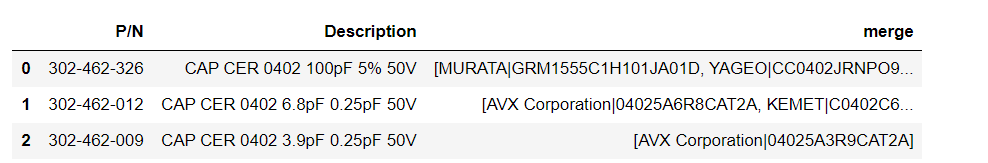

df

# 执行explode变成多行

df_explode = df.explode("merge")

df_explode

(3)将一列还原成结果的多列

# 分别从merge中提取两列

df_explode["Supplier"]=df_explode["merge"].str.split("|").str[0]

df_explode["Supplier PN"]=df_explode["merge"].str.split("|").str[1]

df_explode

# 把merge列删除掉,得到最终数据

df_explode.drop("merge", axis=1, inplace=True)

df_explode

35.Pandas怎样实现groupby聚合后字符串列的合并

需求:

计算每个月的最高温度、最低温度、出现的风向列表、出现的空气质量列表

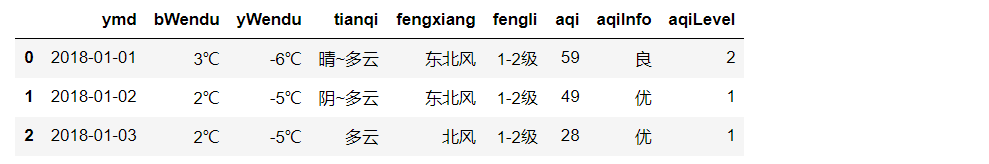

数据输入

数据输出

fpath = "./datas/beijing_tianqi/beijing_tianqi_2018.csv"

df = pd.read_csv(fpath)

df.head(3)

知识:使用df.info()可以查看每列的类型

df.info()

知识:series怎样从str类型变成int

df["bWendu"] = df["bWendu"].str.replace("℃", "").astype('int32')

df["yWendu"] = df["yWendu"].str.replace("℃", "").astype('int32')

df.head(3)

知识:进行日期列解析,可以方便提取月份

df["ymd"] = pd.to_datetime(df["ymd"])

df["ymd"].dt.month

知识:series可以用Series.unique去重

df["fengxiang"].unique()

知识:可以用",".join(series)实现数组合并成大字符串

",".join(df["fengxiang"].unique())

35.1 方法1

result = (

df.groupby(df["ymd"].dt.month)

.agg(

# 新列名 = (原列名,函数)

最高温度=("bWendu", "max"),

最低温度=("yWendu", "min"),

风向列表=("fengxiang", lambda x : ",".join(x.unique())),

空气质量列表=("aqiInfo", lambda x : ",".join(x.unique()))

)

.reset_index()

.rename(columns={"ymd":"月份"})

)

35.2 方法2

def agg_func(x):

"""注意,这个x是每个分组的dataframe"""

return pd.Series({

"最高温度": x["bWendu"].max(),

"最低温度": x["yWendu"].min(),

"风向列表": ",".join(x["fengxiang"].unique()),

"空气质量列表": ",".join(x["aqiInfo"].unique())

})

result = df \

.groupby(df["ymd"].dt.month) \

.apply(agg_func) \

.reset_index() \

.rename(columns={"ymd":"月份"})

36.Pandas处理Excel一列变多列

df = pd.read_excel("./course_datas/c42_split_onecolumn_tomany/学生数据表.xlsx")

def split_func(line):

line["姓名"], line["性别"], line["年龄"], line["城市"] = line["数据"].split(":")

return line

df = df.apply(split_func, axis=1)

df.drop(["数据"], axis=1, inplace=True)

37.map、apply、applymap、transform

import numpy as np

import pandas as pd

import pymysql

from pyecharts import options as opts

from pyecharts.charts import Bar, Line, Grid

from pyecharts.commons.utils import JsCode

from pyecharts.globals import ThemeType

conn = pymysql.connect(

host = '127.0.0.1',

user = 'root',

password = '123',

database = 'cloudmusic',

charset = 'utf8'

)

df = pd.read_sql("select * from playlists", con=conn)

37.1 map

把对应的数据逐个当作参数传入字典或函数中,得到映射后的值

# 把男的替换为1,女的替换为0

# 使用字典进行映射

df["gender"].map({"男": 1, "女": 0}).head()

# 使用函数

def gender_map(x):

gender = 1 if x == "男" else 0

return gender

df["gender"].map(gender_map).head()

37.2 apply

apply能够传入功能更为复杂的函数, 对行或列进行操作

# 给tracks_num都加3

def apply_track(x, bias):

return x + bias

# 以元组的方式传入额外的参数

df["tracks_num"].apply(apply_track, args=(3,)).head()

# axis=0表示对着一列进行操作

# axis=1表示对着一行进行操作

df[["share_count", "comment_count"]].apply(np.sum, axis=0)

df[["share_count", "comment_count"]].apply(np.sum, axis=1).head()

37.3 applymap

对每个单元格进行操作

# 保留两位小数

df[["share_count", "comment_count"]].applymap(lambda x: "%.2f" % x).head()

37.4 transform

把分组后的计算结果显示在每一行

df["user_type_avg"] = df.groupby("user_type")["tracks_num"].transform("mean")

df[["id", "user_type", "tracks_num", "user_type_avg"]].head(10)

38.Pandas对日期的处理

218

218

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?