文章目录

作者:HandsomeWu

声明:本文仅完成了作业部分,未对原理进行阐述

由于作者水平限制,原理部分暂时不能更新出来,但是整个机器人课程中,这部分又非常有用,故先发出占坑用于大家完成作业,感兴趣的同学可以关注我后续更新原理部分。

所有课程共有6次作业,后续计划将6次作业的原理和实验整理完全,旨在帮助自己和同学们更好的掌握这部分知识和VREP的使用。

暂时还没有想好整体的框架,故先一个一个发出。

练习4:基于线性的扩展卡尔曼滤波器的机器人定位

1 Introduction

上节讲了如何将雷达数据提取出线性结构,本节讲述如何根据这些线性结构获得一张机器人可用于自身定位的图。

2 Kalman Filter Localization

Extended Kalman Filter 被分为两步

- 预测:

prediction step - 更新:

update step

2.1 状态预测: State Prediction

任务:

-

修改transitionFunction函数,使其具有如下功能

%输入:上一状态、控制、轮间距 %输出:当前状态预测、状态雅可比、动作雅可比 function [f, F_x, F_u] = transitionFunction(x,u, b)

验证:

- Run the function

validateTransitionFunction(). 如果正确,会输出一条地面真实路径

插入代码:

function [f, F_x, F_u] = transitionFunction(x,u, b)

f = x + [ (u(1)+u(2))/2 * cos(x(3) + (u(2)-u(1))/(2*b) );

(u(1)+u(2))/2 * sin(x(3) + (u(2)-u(1))/(2*b) );

(u(2)-u(1))/b ];

F_x = [1, 0, -sin(x(3) - (u(1) - u(2))/(2*b))*(u(1)/2 + u(2)/2);

0, 1, cos(x(3) - (u(1) - u(2))/(2*b))*(u(1)/2 + u(2)/2);

0, 0, 1 ];

F_u = [cos(x(3) - (u(1) - u(2))/(2*b))/2 + (sin(x(3) + (u(1) - u(2))/(2*b))*(u(1)/2 + u(2)/2))/(2*b),... %这里一定要加上换行符号

cos(x(3) - (u(1) - u(2))/(2*b))/2 - (sin(x(3) + (u(1) - u(2))/(2*b))*(u(1)/2 + u(2)/2))/(2*b);

sin(x(3) - (u(1) - u(2))/(2*b))/2 - (cos(x(3) + (u(1) - u(2))/(2*b))*(u(1)/2 + u(2)/2))/(2*b),...

sin(x(3) - (u(1) - u(2))/(2*b))/2 + (cos(x(3) + (u(1) - u(2))/(2*b))*(u(1)/2 + u(2)/2))/(2*b);

-1/b,1/b];

end

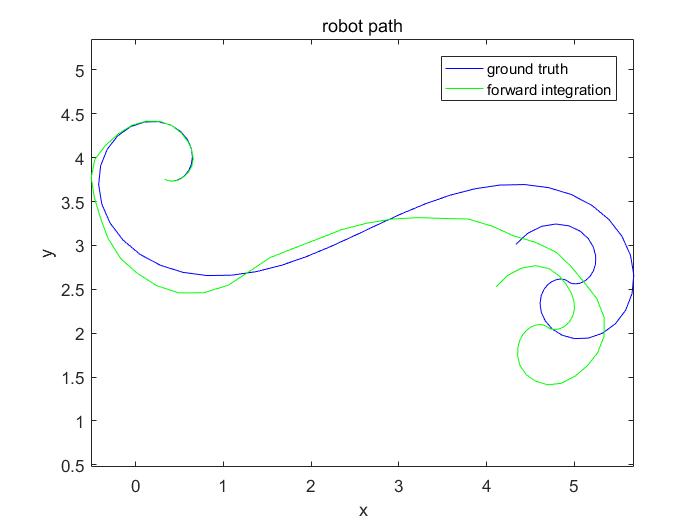

运行测试函数得图(记得要将transitionFunction.m文件和要运行的文件放到同一个文件夹下)

注:在validateTransitionFunction()中,精度总是不够,检查式子无误,测试了一组数据,误差也很小,故将精度要求放低至tolerance = 1e-2;

小结:可见,预测效果虽可以进行跟踪,但是仍有比较大的累计误差

2.2 状态更新:State Update

2.2.1 Measurement Function

任务:

- 修改

measurementFunction函数,使其给定一个状态S和map m,能够给出一个预测z - 同时,计算出状态S的雅可比模型

验证:

- Run the function

validateMeasurementFunction(),输出正确即可

插入代码:

function [h, H_x] = measurementFunction(x, m)

h = [m(1) - x(3);

m(2) - (x(1)*cos(m(1)) + x(2)*sin(m(1)))];

H_x = [0, 0, -1;

-cos(m(1)), -sin(m(1)), 0];

[h(1), h(2), isRNegated] = normalizeLineParameters(h(1), h(2));

if isRNegated

H_x(2, :) = - H_x(2, :);

end

运行测试函数(要将normalizeLineParameters.m,measurementFunction.m放到一起)

输出结果:

>> validateMeasurementFunction

measurement function appears to be correct!

2.2.2 Measurement Association

任务:

- 修改

associateMeasurements.m函数,满足感知到的线与地图正确关联的功能

验证:

- Run the function

validateAssociations(),输出正确即可

插入代码:

function [v, H, R] = associateMeasurements(x, P, Z, R, M, g)

nMeasurements = size(Z,2);

nMapEntries = size(M,2);

d = zeros(nMeasurements, nMapEntries);

v = zeros(2, nMeasurements * nMapEntries);

H = zeros(2, 3, nMeasurements * nMapEntries);

for i = 1 : nMeasurements

for j = 1 : nMapEntries

[z_priori, H(:, :, j + (i-1) * nMapEntries)] = measurementFunction(x, M(:,j));

v(:,j + (i-1) * nMapEntries) = Z(:,i) - z_priori;

W = H(:, :, j + (i-1) * nMapEntries) * P * H(:, :, j + (i-1) * nMapEntries)' + R(:,:,i);

d(i,j) = v(:,j + (i-1) * nMapEntries)' * inv(W) * v(:,j + (i-1) * nMapEntries);

end

end

% line feature matching (pp. 341)

% association of each measurement to the map point with minimal distance

[minima, map_index] = min(d');

[measurement_index] = find(minima < g^2);

map_index = map_index(measurement_index);

v = v(:, map_index + (measurement_index-1)*nMapEntries);

H = H(:, :, map_index + (measurement_index-1)*nMapEntries);

R = R(:, :, measurement_index);

end

运行测试函数(同样记得添加getOrder.m函数,不然会报错)

%输出结果

>> validateAssociations

measurement function appears to be correct!

association function appears to be correct!

2.3 更新估计:Updating the Estimate

任务:

- 完成

filterStep.m函数

验证:

- Run the function

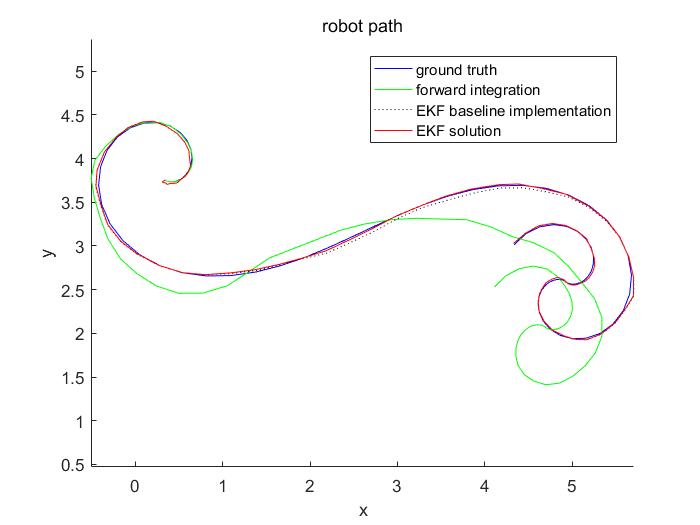

validateFilter() - 若正确则输出:base_line、滤波器输出、控制输入的正向集成(如例2.1),跟踪精度会更加精确

插入代码:

function [x_posteriori, P_posteriori] = filterStep(x, P, u, Z, R, M, k, g, b)

% [x_posteriori, P_posteriori] = filterStep(x, P, u, z, R, M, k, g, b)

% returns an a posteriori estimate of the state and its covariance

% additional bells and whistles in case no line was detected, please

% incorporate this at a sensical position in your code

% propagate the state (p. 337)

Q = k*diag(abs(u));

[x_priori, F_x, F_u] = transitionFunction(x, u, b);

P_priori = F_x * P * F_x' + F_u * Q * F_u';

if size(Z,2) == 0

x_posteriori = x_priori;

P_posteriori = P_priori;

return;

end

[v, H, R] = associateMeasurements(x_priori, P_priori, Z, R, M, g);

y = reshape(v, [], 1);

H = reshape(permute(H, [1,3,2]), [], 3);

R = blockDiagonal(R);

% update state estimates (pp. 335)

S = H * P_priori * H' + R;

K = P_priori * (H' / S);

P_posteriori = (eye(size(P_priori)) - K*H) * P_priori;

x_posteriori = x_priori + K * y;

end

运行测试函数(同样记得添加blockDiagonal.m`函数,不然会报错)

%%输出结果

>> validateFilter

State transition function appears to be correct!

measurement function appears to be correct!

association function appears to be correct!

可以看到跟踪效果精度很高,比forward integration方法高了很多

3 V-REP Experiment

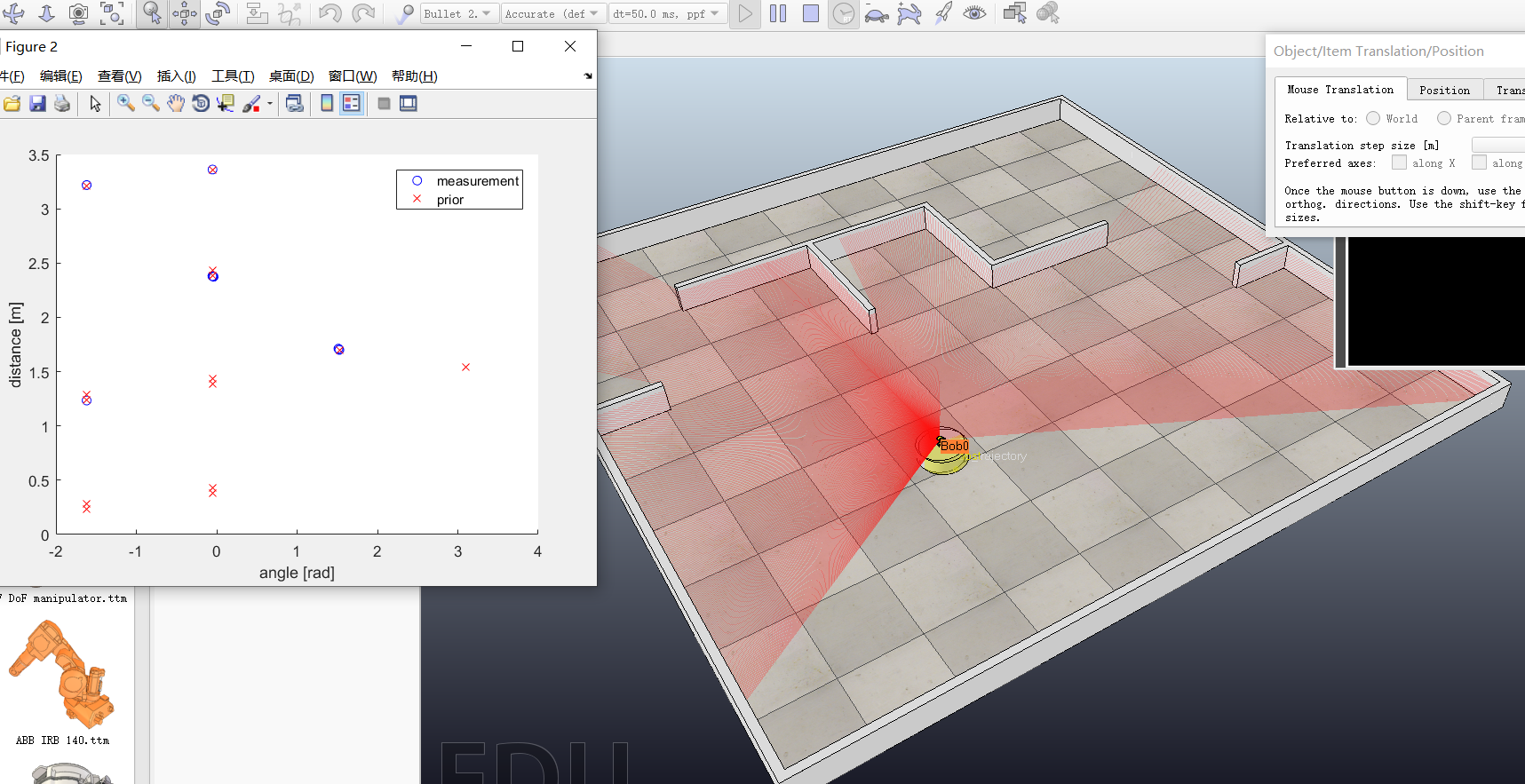

至此,直线提取和EKF定位便全部实现,并且单独测试通过,现在可以看下联合仿真效果

Task:任务

- 完成

incrementalLocalization()函数:使其输入先前位姿、控制,雷达扫描数据 。输出其下一步估计位姿和协方差

Validation:验证

- 打开VREP,导入

scene/mooc_exercises.ttt模型,点击仿真,可以看到机器人、墙、雷达扫描覆盖图像 - Matlab运行

vrepSimulation.m函数,可以看到机器人运行圆形路径 - 关闭实际的机器人,你会看到一个黄色的模拟机器人,它表示着估计的位姿

- 但是,就像在现实环境中一样,可能估计效果会不好。这是因为可能墙面的测量值和机器人位姿不能很好的关联起来

- 根据观察到的墙壁的数量和方向,全局姿势的可观察性可能会受到影响,从而导致状态估计受损。

- 调整一下机器人的起始位置,重启仿真

插入代码:

function [x_posterori, P_posterori] = incrementalLocalization(x, P, u, S, M, params, k, g, b)

% [x_posterori, P_posterori] = incrementalLocalization(x, P, u, S, R, M,

% k, b, g) returns the a posterori estimate of the state and its covariance,

% given the previous state estimate, control inputs, laser measurements and

% the map

C_TR = diag([repmat(0.1^2, 1, size(S, 2)) repmat(0.1^2, 1, size(S, 2))]);

[z, R, ~] = extractLinesPolar(S(1,:), S(2,:), C_TR, params);

figure(2), cla, hold on;

z_prior = zeros(size(M));

for k = 1:size(M,2)

z_prior(:,k) = measurementFunction(x, M(:,k));

end

plot(z(1,:), z(2,:),'bo');

plot(z_prior(1,:), z_prior(2,:),'rx');

xlabel('angle [rad]'); ylabel('distance [m]')

legend('measurement','prior')

drawnow;

% estimate robot pose

[x_posterori, P_posterori] = filterStep(x, P, u, z, R, M, k, g, b);

end

仿真结果:

调整机器人的位置重新仿真

同时也可以看到测量点和先验值的动态跟踪关系

附下个人公众号:东秦小站

- 喜欢跑步、科技、机器人

- 研究领域:强化学习,AGI

若能为你带来价值~ 那会是我最开心的事

914

914

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?