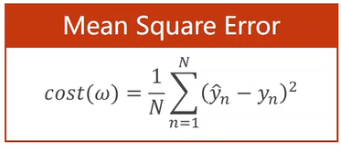

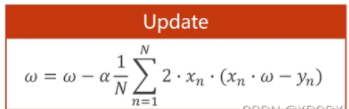

公式推导:

code:

import numpy as np

import matplotlib.pyplot as plt

x_data = [1.0, 2.0, 3.0]

y_data = [2.0, 4.0, 6.0]

w = 1.0

epoch_list = []

cost_list = []

def forward(x):

return x * w

def cost(xs,ys):

cost = 0

for x, y in zip(xs, ys):

y_pred = forward(x)

cost += (y_pred - y) ** 2 # 单个损失函数

return cost / len(xs)

def gradient(xs, ys):

grad = 0

for x, y in zip(xs, ys):

grad += 2 * x * (x * w - y) # 公式 cost对w求偏导的公式

return grad / len(xs)

print('Predict(before training)', 4, forward(4))

for epoch in range(100):

cost_val = cost(x_data, y_data)

grad_val = gradient(x_data, y_data)

w -= 0.01 * grad_val

epoch_list.append(epoch)

cost_list.append(cost(x_data, y_data))

print('Epoch:', epoch, 'w=', w, 'loss=', cost_val)

print('Predict(after training)', 4, forward(4))

plt.plot(epoch_list, cost_list)

plt.xlabel('epoch')

plt.ylabel('loss')

plt.show()

效果图:

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?