1.1 Classification and logistic regression

The classification problem is just like the regression problem, except that the values $y$ we now want to predict take on only a small number of discrete values. For now, we will focus on the binary classification problem, in which $y$ can take on only two values, 0 and 1. For instance, if we are trying to build a spam classifier for email, then $x^{(i)}$ may be some features of a piece of email, and $y$ may be 1 if it is a piece of spam mail and 0 otherwise. 0 is also called the negative class and 1 the positive class, and they are sometimes also denoted by the symbols “-” and “+.” Given $x^{(i)}$, the corresponding $y^{(i)}$ is also called the label for the training example.

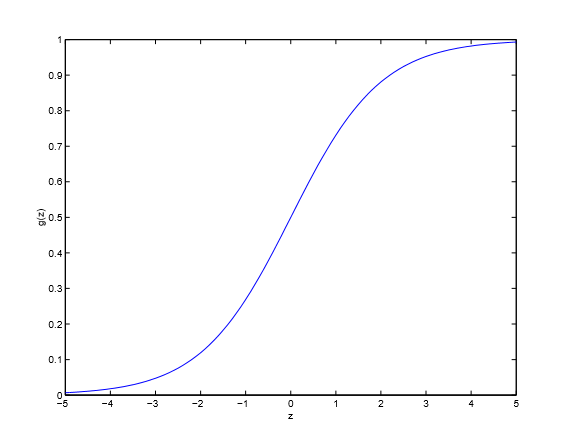

1.2 Logistic regression

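Since $y \in \{0, 1\}$, it makes little sense for the hypothesis $h_\theta(x)$ to output values larger than 1 or smaller than 0. We therefore choose hypotheses of the form

$$h_\theta(x) = g(\theta^T x) = \frac{1}{1 + e^{-\theta^T x}},$$

where

$$g(z) = \frac{1}{1 + e^{-z}}$$

is called the logistic function or the sigmoid function. $g(z)$ tends to 1 as $z \to \infty$ and to 0 as $z \to -\infty$, so $g(z)$, and hence $h_\theta(x)$, always lies between 0 and 1. We keep the convention of letting $x_0 = 1$, so that $\theta^T x = \theta_0 + \sum_{j=1}^{n} \theta_j x_j$.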
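A minimal sketch of the hypothesis in plain Python, assuming the feature vector already includes the intercept term $x_0 = 1$. The names `sigmoid` and `h` are illustrative, not from any library. The two branches of `sigmoid` compute the same value $1/(1+e^{-z})$; the split only keeps `math.exp` from overflowing for very negative $z$.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic (sigmoid) function g(z) = 1 / (1 + e^{-z})."""
    # Branch on the sign of z so math.exp never receives a large positive
    # argument (which would overflow); both branches are algebraically equal.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def h(theta: list[float], x: list[float]) -> float:
    """Hypothesis h_theta(x) = g(theta^T x); x is assumed to include x_0 = 1."""
    return sigmoid(sum(t * xj for t, xj in zip(theta, x)))

print(sigmoid(0.0))      # 0.5
print(sigmoid(-1000.0))  # 0.0, with no OverflowError
```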

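Before moving on, here is a useful property of the derivative of the sigmoid function:

$$g'(z) = \frac{d}{dz}\,\frac{1}{1 + e^{-z}} = \frac{e^{-z}}{(1 + e^{-z})^2} = \frac{1}{1 + e^{-z}}\left(1 - \frac{1}{1 + e^{-z}}\right) = g(z)\big(1 - g(z)\big).$$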
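The identity $g'(z) = g(z)(1 - g(z))$ can be sanity-checked numerically. This sketch compares it against a central finite difference at a few arbitrary points; the helper names and the step size `eps` are illustrative choices, and the simple (non-stabilized) sigmoid is adequate for the small $z$ used here.

```python
import math

def sigmoid(z: float) -> float:
    """g(z) = 1 / (1 + e^{-z}); fine for the moderate z used below."""
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_grad(z: float) -> float:
    """g'(z) computed via the identity g'(z) = g(z) * (1 - g(z))."""
    g = sigmoid(z)
    return g * (1.0 - g)

def central_difference(f, z: float, eps: float = 1e-6) -> float:
    """Numerical derivative (f(z + eps) - f(z - eps)) / (2 * eps)."""
    return (f(z + eps) - f(z - eps)) / (2.0 * eps)

for z in (-3.0, 0.0, 2.5):
    # The two columns should agree to many decimal places.
    print(z, sigmoid_grad(z), central_difference(sigmoid, z))
```

At $z = 0$ the identity gives $0.5 \cdot 0.5 = 0.25$, the maximum slope of the sigmoid.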

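So, given the logistic regression model, how do we fit $\theta$ for it? We endow the model with a set of probabilistic assumptions and then fit the parameters by maximum likelihood.

Let us assume that

$$P(y = 1 \mid x; \theta) = h_\theta(x), \qquad P(y = 0 \mid x; \theta) = 1 - h_\theta(x).$$

This can be written more compactly as

$$p(y \mid x; \theta) = \big(h_\theta(x)\big)^{y}\,\big(1 - h_\theta(x)\big)^{1 - y}.$$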
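The compact form is a Bernoulli probability: raising $h_\theta(x)$ to the power $y$ and $1 - h_\theta(x)$ to the power $1 - y$ simply selects the right factor. A tiny sketch, where `bernoulli_prob` is a hypothetical helper and `h_x` stands for an already-computed value of $h_\theta(x)$:

```python
def bernoulli_prob(y: int, h_x: float) -> float:
    """p(y | x; theta) = h^y * (1 - h)^(1 - y) for y in {0, 1}, with h_x = h_theta(x)."""
    if y not in (0, 1):
        raise ValueError("y must be 0 or 1")
    return (h_x ** y) * ((1.0 - h_x) ** (1 - y))

h_x = 0.8
print(bernoulli_prob(1, h_x))  # 0.8, i.e. P(y = 1 | x; theta) = h_theta(x)
print(bernoulli_prob(0, h_x))  # approximately 0.2, i.e. 1 - h_theta(x)
```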

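Assuming that the $m$ training examples were generated independently, we can write down the likelihood of the parameters as

$$L(\theta) = \prod_{i=1}^{m} p\big(y^{(i)} \mid x^{(i)}; \theta\big) = \prod_{i=1}^{m} \big(h_\theta(x^{(i)})\big)^{y^{(i)}}\,\big(1 - h_\theta(x^{(i)})\big)^{1 - y^{(i)}}.$$

Because the logarithm is strictly increasing, maximizing $L(\theta)$ is equivalent to maximizing the log likelihood, which is easier to work with:

$$\ell(\theta) = \log L(\theta) = \sum_{i=1}^{m} y^{(i)} \log h_\theta(x^{(i)}) + \big(1 - y^{(i)}\big) \log\big(1 - h_\theta(x^{(i)})\big).$$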
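A sketch of $\ell(\theta)$ in plain Python, assuming each row of `X` includes $x_0 = 1$ and using made-up toy data. Evaluating $\log h$ and $\log(1 - h)$ directly can hit `log(0)` for very confident predictions, so this version uses the equivalent forms $\log h_\theta(x) = -\operatorname{softplus}(-z)$ and $\log(1 - h_\theta(x)) = -\operatorname{softplus}(z)$ with $z = \theta^T x$ and $\operatorname{softplus}(z) = \log(1 + e^{z})$. That rewrite is an implementation choice, not part of the derivation.

```python
import math

def dot(a: list[float], b: list[float]) -> float:
    """Inner product theta^T x."""
    return sum(ai * bi for ai, bi in zip(a, b))

def softplus(z: float) -> float:
    """log(1 + e^z), computed without overflow for large |z|."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def log_likelihood(theta: list[float], X: list[list[float]], y: list[int]) -> float:
    """ell(theta) = sum_i [ y_i log h(x_i) + (1 - y_i) log(1 - h(x_i)) ].

    Uses log h = -softplus(-z) and log(1 - h) = -softplus(z), with z = theta^T x_i.
    """
    total = 0.0
    for x_i, y_i in zip(X, y):
        z = dot(theta, x_i)
        total += -y_i * softplus(-z) - (1 - y_i) * softplus(z)
    return total

# Hypothetical toy data; the first feature is the intercept term x_0 = 1.
X = [[1.0, 0.5], [1.0, -1.5], [1.0, 2.0]]
y = [1, 0, 1]
print(log_likelihood([0.0, 0.0], X, y))     # equals 3 * log(0.5), since every h is 0.5
print(log_likelihood([0.0, 1000.0], X, y))  # close to 0, and no math domain error
```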

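How do we maximize the likelihood? We can use gradient ascent. Written in vector notation, the updates are $\theta := \theta + \alpha \nabla_\theta \ell(\theta)$, where $\alpha$ is the learning rate; note the positive rather than negative sign, since we are now maximizing rather than minimizing a function. Let us start with just one training example $(x, y)$ and take derivatives, using $g'(z) = g(z)(1 - g(z))$ and $\frac{\partial}{\partial \theta_j} \theta^T x = x_j$:

$$
\begin{aligned}
\frac{\partial}{\partial \theta_j} \ell(\theta)
&= \left( \frac{y}{g(\theta^T x)} - \frac{1 - y}{1 - g(\theta^T x)} \right) \frac{\partial}{\partial \theta_j} g(\theta^T x) \\
&= \left( \frac{y}{g(\theta^T x)} - \frac{1 - y}{1 - g(\theta^T x)} \right) g(\theta^T x)\big(1 - g(\theta^T x)\big)\, x_j \\
&= \Big( y\big(1 - g(\theta^T x)\big) - (1 - y)\, g(\theta^T x) \Big)\, x_j \\
&= \big(y - h_\theta(x)\big)\, x_j .
\end{aligned}
$$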
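Summing the single-example result over the training set gives $\frac{\partial \ell}{\partial \theta_j} = \sum_i \big(y^{(i)} - h_\theta(x^{(i)})\big) x_j^{(i)}$. The sketch below implements that formula and checks it against a finite-difference estimate of $\ell(\theta)$. The toy data, the test point $\theta = (0.3, -0.7)$, and all function names are illustrative assumptions; the plain `log` form of $\ell$ is fine here because every prediction is moderate.

```python
import math

def dot(a: list[float], b: list[float]) -> float:
    return sum(ai * bi for ai, bi in zip(a, b))

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))  # adequate for the moderate z used here

def log_likelihood(theta, X, y) -> float:
    """ell(theta) = sum_i y_i log h(x_i) + (1 - y_i) log(1 - h(x_i))."""
    total = 0.0
    for x_i, y_i in zip(X, y):
        h_i = sigmoid(dot(theta, x_i))
        total += y_i * math.log(h_i) + (1 - y_i) * math.log(1.0 - h_i)
    return total

def gradient(theta, X, y) -> list[float]:
    """Analytic gradient: d ell / d theta_j = sum_i (y_i - h(x_i)) * x_ij."""
    grad = [0.0] * len(theta)
    for x_i, y_i in zip(X, y):
        err = y_i - sigmoid(dot(theta, x_i))
        for j, x_ij in enumerate(x_i):
            grad[j] += err * x_ij
    return grad

def numerical_gradient(theta, X, y, eps: float = 1e-6) -> list[float]:
    """Central finite differences of ell, one coordinate at a time."""
    grad = []
    for j in range(len(theta)):
        up, down = list(theta), list(theta)
        up[j] += eps
        down[j] -= eps
        grad.append((log_likelihood(up, X, y) - log_likelihood(down, X, y)) / (2 * eps))
    return grad

# Hypothetical toy data; the first feature is the intercept term x_0 = 1.
X = [[1.0, 0.5], [1.0, -1.5], [1.0, 2.0]]
y = [1, 0, 1]
theta = [0.3, -0.7]
print(gradient(theta, X, y))
print(numerical_gradient(theta, X, y))  # should match the line above closely
```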

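This therefore gives us the stochastic gradient ascent rule, applied for each training example $i$ in turn, with learning rate $\alpha$:

$$\theta_j := \theta_j + \alpha \big( y^{(i)} - h_\theta(x^{(i)}) \big)\, x_j^{(i)}.$$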
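A minimal sketch of stochastic gradient ascent for logistic regression, using plain Python lists and made-up one-feature data with an intercept column $x_0 = 1$. The learning rate, epoch count, and the per-pass shuffling of the examples (with a fixed seed for reproducibility) are illustrative choices, not prescribed by the rule; `sga_fit` is a hypothetical name.

```python
import math
import random

def dot(a: list[float], b: list[float]) -> float:
    """Inner product theta^T x."""
    return sum(ai * bi for ai, bi in zip(a, b))

def sigmoid(z: float) -> float:
    """Numerically stable logistic function g(z)."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def sga_fit(X, y, alpha: float = 0.1, epochs: int = 100, seed: int = 0) -> list[float]:
    """Stochastic gradient ascent on the logistic regression log likelihood.

    For each example i: theta_j := theta_j + alpha * (y_i - h_theta(x_i)) * x_ij.
    """
    theta = [0.0] * len(X[0])
    rng = random.Random(seed)        # fixed seed so the visiting order is reproducible
    order = list(range(len(X)))
    for _ in range(epochs):
        rng.shuffle(order)           # visit the examples in a fresh random order each pass
        for i in order:
            # The error is computed once, before any theta_j changes,
            # so every component is updated from the same h_theta(x_i).
            err = y[i] - sigmoid(dot(theta, X[i]))
            for j in range(len(theta)):
                theta[j] += alpha * err * X[i][j]
    return theta

# Hypothetical toy data; the first feature is the intercept term x_0 = 1.
X = [[1.0, -2.0], [1.0, -1.0], [1.0, 1.0], [1.0, 2.0]]
y = [0, 0, 1, 1]
theta = sga_fit(X, y)
print(theta)
print([1 if sigmoid(dot(theta, x)) >= 0.5 else 0 for x in X])  # predicted labels
```

On linearly separable data like this toy set, $\ell(\theta)$ has no finite maximizer, so $\theta$ keeps growing slowly; the fixed epoch count is what stops the loop.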

1.3 Digression: The perceptron learning algorithm

Consider modifying the logistic regression method to “force” it to output values that are either 0 or 1 exactly. To do so, it seems natural to change the definition of $g$ to be the threshold function:

$$g(z) = \begin{cases} 1 & \text{if } z \ge 0, \\ 0 & \text{if } z < 0. \end{cases}$$

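If we then let $h_\theta(x) = g(\theta^T x)$ as before, but using this modified definition of $g$, and if we use the update rule

$$\theta_j := \theta_j + \alpha \big( y^{(i)} - h_\theta(x^{(i)}) \big)\, x_j^{(i)},$$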

then we have the perceptron learning algorithm.
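A sketch of the perceptron under the same assumptions as before (plain Python lists, intercept $x_0 = 1$, made-up toy data, hypothetical names). With the threshold $g$, the error $y^{(i)} - h_\theta(x^{(i)})$ is always $-1$, $0$, or $1$, so $\theta$ changes only on misclassified examples. Stopping after a full pass with no mistakes is an added convenience, and `alpha=1.0` is an arbitrary choice.

```python
def dot(a: list[float], b: list[float]) -> float:
    """Inner product theta^T x."""
    return sum(ai * bi for ai, bi in zip(a, b))

def threshold(z: float) -> int:
    """Threshold function: g(z) = 1 if z >= 0, else 0."""
    return 1 if z >= 0 else 0

def perceptron_fit(X, y, alpha: float = 1.0, epochs: int = 10) -> list[float]:
    """Perceptron: theta_j := theta_j + alpha * (y_i - g(theta^T x_i)) * x_ij."""
    theta = [0.0] * len(X[0])
    for _ in range(epochs):
        mistakes = 0
        for x_i, y_i in zip(X, y):
            err = y_i - threshold(dot(theta, x_i))  # one of -1, 0, 1
            if err != 0:
                mistakes += 1
                for j in range(len(theta)):
                    theta[j] += alpha * err * x_i[j]
        if mistakes == 0:  # a full pass with no errors: stop early
            break
    return theta

# Hypothetical toy data; the first feature is the intercept term x_0 = 1.
X = [[1.0, -2.0], [1.0, -1.0], [1.0, 1.0], [1.0, 2.0]]
y = [0, 0, 1, 1]
theta = perceptron_fit(X, y)
print(theta)  # [-1.0, 2.0]
print([threshold(dot(theta, x)) for x in X])  # [0, 0, 1, 1]
```

Here a single mistake on the first example is enough to separate the data, and the second pass makes no errors.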
