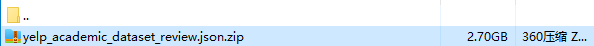

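I recently needed the Yelp dataset for an experiment, but the dataset downloaded directly from the Yelp website is very large: about 2.7 GB compressed and roughly 6.4 GB after extraction (scary). The raw dataset also keeps the reviews from many years together in one file.

Most papers use only a single year of Yelp data, so we do the same and split the reviews by year, storing the result in the json+gzip format used by the Amazon datasets. Without further ado, here is the code.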

```python
# python3.6
# -*- coding: utf-8 -*-
# @author : YingqiuXiong
# @e-mail : 1916728303@qq.com
# @file : yelpData.py
# @Time : 2022/4/14 9:46
import gzip
import json
import os
import zipfile

data_dir = "../../../../../../Data/xiongyingqiu/yelp/"
dataFile_name = "yelp_academic_dataset_review.json"

# One list of serialized reviews per year of interest.
years = ("2016", "2017", "2018", "2019")
lines_by_year = {year: [] for year in years}

# Read the review file line by line, straight from the zip archive.
filename = os.path.join(data_dir, dataFile_name + ".zip")
with zipfile.ZipFile(filename, "r") as zfile, zfile.open(dataFile_name) as f:
    for line in f:
        js = json.loads(line)
        # Skip reviews whose user id or business id is unknown.
        if str(js["user_id"]) == "unknown":
            print("unknown user id")
            continue
        if str(js["business_id"]) == "unknown":
            print("unknown item id")
            continue
        # Take the date part before the space, then the year before the first "-".
        year = str(js["date"]).split(" ")[0].split("-")[0]
        if year in lines_by_year:
            lines_by_year[year].append(json.dumps(js) + "\n")
print("~~~~~read raw data over!~~~~~")


def writeGzip(path, lines):
    # Mode "wt" overwrites the file; the earlier "a" mode would append
    # duplicate reviews every time the script is rerun.
    with gzip.open(path, "wt", encoding="utf-8") as enc:
        enc.writelines(lines)


# Store the reviews year by year.
for year in years:
    writeGzip(os.path.join(data_dir, "reviews_yelp_%s.json.gz" % year), lines_by_year[year])
    print("~~~~~write %s over!~~~~~" % year)

# Merge 2016, 2017 and 2018 into a single file.
lines_161718 = lines_by_year["2016"] + lines_by_year["2017"] + lines_by_year["2018"]
print("~~~~~161718 over!~~~~~")
writeGzip(os.path.join(data_dir, "reviews_yelp_161718.json.gz"), lines_161718)
print("~~~~~write 161718 over!~~~~~")
```
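The script above keeps every selected review in memory before writing anything. If memory is tight, one alternative is to write each review to its year file as soon as it is read. The following is only a sketch of that idea, not the code used for this post: the function name `split_by_year` and its parameters are made up for illustration, and it assumes each input line is a JSON object with `user_id`, `business_id`, and a `date` that starts with a four-digit year.

```python
import gzip
import json
import os


def split_by_year(raw_lines, out_dir, years=("2016", "2017", "2018", "2019")):
    """Stream JSON lines into one gzip file per year and return per-year counts."""
    # Hypothetical helper: open one text-mode gzip writer per year up front.
    writers = {
        y: gzip.open(os.path.join(out_dir, "reviews_yelp_%s.json.gz" % y),
                     "wt", encoding="utf-8")
        for y in years
    }
    counts = {y: 0 for y in years}
    try:
        for raw in raw_lines:
            js = json.loads(raw)
            # Same rule as the script: drop reviews with an unknown user or item.
            if js["user_id"] == "unknown" or js["business_id"] == "unknown":
                continue
            year = str(js["date"])[:4]  # assumes the date starts with the year
            if year in writers:
                writers[year].write(json.dumps(js) + "\n")
                counts[year] += 1
    finally:
        # Close every writer even if a line fails to parse.
        for w in writers.values():
            w.close()
    return counts
```

It could be called with the file object returned by `zfile.open(...)` as `raw_lines` and `data_dir` as `out_dir`. The merged 161718 file is not produced by this sketch.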

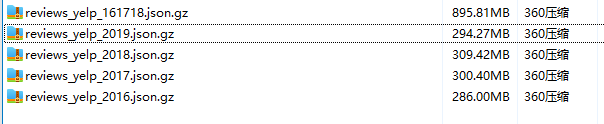

Finally, here are the files produced by the split:

The 161718 file merges the three years 2016, 2017 and 2018 into one.
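Files stored this way (gzip-compressed, one JSON object per line) can be read back lazily. A minimal sketch, assuming that layout; `iter_reviews` is an illustrative name, not something from the original script:

```python
import gzip
import json


def iter_reviews(path):
    """Yield one review dict per non-empty line of a .json.gz file."""
    with gzip.open(path, "rt", encoding="utf-8") as fh:
        for line in fh:
            if line.strip():
                yield json.loads(line)
```

For example, `sum(1 for _ in iter_reviews(path))` counts the reviews in a file without loading them all at once.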

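=== 2022.4.16 update ===

The per-year datasets are still too large, with more than 6 million reviews, so this update adds code that reduces them to 5_score form, meaning every user and every product in the dataset has at least 5 reviews.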
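The 5_score code itself is not shown in this post. One common way to get the "at least 5 reviews per user and per product" property is to repeatedly drop reviews whose user or product falls below the threshold, until nothing changes. The sketch below illustrates that approach and may differ from the code actually used; the function name `k_core` and its parameters are assumptions.

```python
from collections import Counter


def k_core(reviews, k=5, user_key="user_id", item_key="business_id"):
    """Return the reviews whose user and item each keep at least k reviews."""
    reviews = list(reviews)
    while True:
        user_counts = Counter(r[user_key] for r in reviews)
        item_counts = Counter(r[item_key] for r in reviews)
        kept = [
            r for r in reviews
            if user_counts[r[user_key]] >= k and item_counts[r[item_key]] >= k
        ]
        # Removing reviews can push other users or items below k,
        # so repeat until a pass removes nothing.
        if len(kept) == len(reviews):
            return kept
        reviews = kept
```

A single filtering pass is not enough in general, which is why the loop runs until a fixed point is reached.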

I will upload each file to Baidu Netdisk later when I have time.
