1. Image Gradients

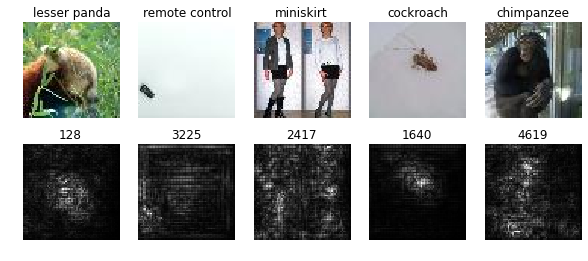

1.1 Saliency maps

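As the paper [1] describes, the class saliency map M is computed from the gradient of the class score with respect to the input image: at each pixel, take the maximum absolute value of that gradient across the color channels.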
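To make the definition concrete, here is a minimal sketch that assumes a toy linear classifier in place of the pretrained network. The helpers `linear_forward` and `linear_backward` are hypothetical stand-ins for `model.forward` and `model.backward`, and the labels `y` are made up for illustration.

```python
import numpy as np

def linear_forward(X, W):
    """Toy stand-in for model.forward: flatten each image, apply a linear classifier."""
    N = X.shape[0]
    scores = X.reshape(N, -1) @ W            # (N, num_classes)
    return scores, (X.shape, W)

def linear_backward(dscores, cache):
    """Toy stand-in for model.backward: gradient of the scores w.r.t. the images."""
    x_shape, W = cache
    return (dscores @ W.T).reshape(x_shape)

rng = np.random.default_rng(0)
N, C, H, Wd, num_classes = 2, 3, 4, 4, 5
X = rng.normal(size=(N, C, H, Wd))
W = rng.normal(size=(C * H * Wd, num_classes))
y = np.array([1, 3])                         # hypothetical ground-truth labels

scores, cache = linear_forward(X, W)
dscores = np.zeros_like(scores)
dscores[np.arange(N), y] = 1.0               # select each image's own class score
dX = linear_backward(dscores, cache)         # (N, C, H, W)
saliency = np.max(np.abs(dX), axis=1)        # (N, H, W): max |gradient| over channels
```

For a linear model the gradient is just the weight column of the chosen class, so the map does not depend on the image; with a real ConvNet it does.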

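# Forward pass in test mode to get the class scores and the cache for backprop.
scores, cache = model.forward(X, mode='test')
# Backpropagate only each image's own class score (y is assumed to hold the labels).
dscores = np.zeros(scores.shape)
dscores[np.arange(X.shape[0]), y] = 1
dX, _ = model.backward(dscores, cache)
# Saliency is the maximum absolute gradient over the channel axis.
saliency = np.max(np.abs(dX), axis=1)

1.2 Fooling Images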

The procedure sometimes fails to reach the target class, so run it again if it does.
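As a rough illustration of the retry idea, the sketch below runs the attack on a toy linear classifier and reports whether it succeeded, so the caller can try again with a larger budget. The function `make_fooling_image`, the identity weight matrix, and the unit-sized `dscores` are assumptions made for this example; the values are not the ones used with the real model.

```python
import numpy as np

def make_fooling_image(X, W, target_y, learning_rate=0.5, max_iter=100):
    """Gradient ascent on (target score - predicted score) for a toy linear classifier.

    X: (1, C, H, W) image; W: (C*H*W, num_classes) weights of a hypothetical model.
    Returns the perturbed image, the number of update steps, and a success flag.
    """
    X_fooling = X.astype(float).copy()
    for step in range(max_iter):
        scores = X_fooling.reshape(1, -1) @ W
        current_predict = int(scores[0].argmax())
        if current_predict == target_y:
            return X_fooling, step, True
        dscores = np.zeros_like(scores)
        dscores[0, target_y] = 1.0            # raise the target score
        dscores[0, current_predict] = -1.0    # lower the currently winning score
        dX = (dscores @ W.T).reshape(X.shape)
        X_fooling += learning_rate * dX
    # One last check after the final update.
    final_predict = int((X_fooling.reshape(1, -1) @ W)[0].argmax())
    return X_fooling, max_iter, final_predict == target_y

W = np.eye(4)                                  # each pixel feeds exactly one class score
X = np.array([3.0, 1.0, 0.0, 0.0]).reshape(1, 1, 2, 2)
X_fooling, steps, success = make_fooling_image(X, W, target_y=2)
if not success:                                # the attack can fail; retry with more steps
    X_fooling, steps, success = make_fooling_image(X, W, target_y=2, max_iter=1000)
```

Returning a success flag instead of looping forever keeps a failed run cheap to detect and repeat.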

# Update the image until the model predicts target_y (at most 100 steps).
n_iter, current_predict = 0, None
while n_iter < 100 and current_predict != target_y:
    scores, cache = model.forward(X_fooling, mode='test')
    current_predict = scores[0].argmax()
    if current_predict != target_y:
        # Push the target score up and the currently predicted score down.
        margin = scores[0, current_predict] - scores[0, target_y]
        dscores = np.zeros(scores.shape)
        dscores[:, target_y] = max(margin, 100)
        dscores[:, current_predict] = min(-margin, -100)
        dX, _ = model.backward(dscores, cache)
        X_fooling += dX
    n_iter += 1

2. Image Generation

2.1 Classes visualization

Class Model Visualization - Google Groups

The Google Groups thread above addresses this clearly. The procedure is related to ConvNet training, where back-propagation is used to optimise the layer weights [1]. Here the weights stay fixed and the optimisation is over the input image.

First, initialize dscores to zeros and set its entry for the target class y to 1. Then backpropagate to compute the gradient with respect to the generated image. Finally, update the image by gradient ascent, using this gradient, learning_rate, and L2 regularization.
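The three steps can be sketched on a toy linear classifier, assumed here purely for illustration (the weights `W`, the step count, and the hyperparameter values are made up). The objective is the target class score minus `l2_reg * ||X||^2`, so the gradient of the penalty enters the ascent step with a minus sign.

```python
import numpy as np

rng = np.random.default_rng(0)
C, H, Wd, num_classes = 3, 4, 4, 5
W = rng.normal(size=(C * H * Wd, num_classes))   # hypothetical toy linear classifier
target_y, learning_rate, l2_reg = 2, 0.1, 0.5

X = np.zeros((1, C, H, Wd))                       # start from a blank image
for _ in range(200):
    scores = X.reshape(1, -1) @ W                 # forward pass
    dscores = np.zeros_like(scores)
    dscores[:, target_y] = 1.0                    # gradient of the target class score
    dX = (dscores @ W.T).reshape(X.shape)         # backward pass to the image
    # Ascend score - l2_reg * ||X||^2: the regularizer's gradient is subtracted.
    X += learning_rate * (dX - 2 * l2_reg * X)
```

In this linear case the iteration settles at `W[:, target_y] / (2 * l2_reg)`, which shows how the penalty keeps the image bounded instead of letting the score grow without limit.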

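scores, cache = model.forward(X, mode='test')
# Backpropagate a gradient of 1 on the target class score only.
dscores = np.zeros(scores.shape)
dscores[:, target_y] = 1
dX, _ = model.backward(dscores, cache)
# Gradient ascent on score - l2_reg * ||X||^2, so the regularization gradient is subtracted.
X += learning_rate * (dX - 2 * l2_reg * X)

2.2 Feature Inversion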

There is a discussion about feature inversion, and there is also a video that can help you understand it.
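In short, feature inversion looks for an image whose features at a chosen layer match some target features, with an L2 penalty on the image. The sketch below assumes a single linear "layer" `A` in place of the network, and picks the step size from the spectral norm of `A` so that the loss cannot increase; both choices are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
D, M = 12, 6
A = rng.normal(size=(D, M))                    # hypothetical linear layer: feats = x @ A
target_feats = rng.normal(size=(1, D)) @ A     # features of some hidden target image
l2_reg = 0.1
# Step size 1/L for this quadratic loss, with L = 2 * (sigma_max(A)^2 + l2_reg).
learning_rate = 1.0 / (2 * (np.linalg.norm(A, 2) ** 2 + l2_reg))

X = np.zeros((1, D))
losses = []
for _ in range(50):
    layer_out = X @ A                           # forward to the chosen layer
    loss = np.sum((layer_out - target_feats) ** 2) + l2_reg * np.sum(X ** 2)
    losses.append(loss)
    dloss = 2 * (layer_out - target_feats)      # d(loss)/d(layer_out)
    dX = dloss @ A.T                            # backward to the image
    X -= learning_rate * (dX + 2 * l2_reg * X)  # descent: the penalty gradient is added
```

Because this is a minimization, the regularization gradient has the opposite sign from the one used when maximizing a class score.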

layer_out, layer_cache = model.forward(X, start=None, end=layer, mode='test')

loss_noreg = np.sum((layer_out - target_feats)** 2) # loss w.o. regularization term

dloss = 2 * (layer_out - target_feats)

dX, _ = model.backward(dloss, layer_cache)

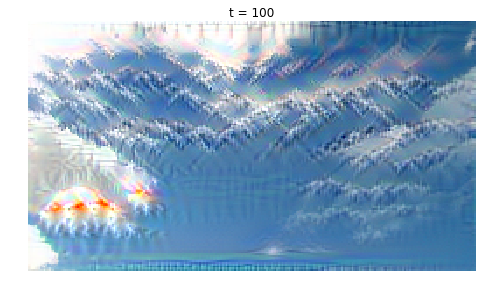

X -= learning_rate * (dX + 2 * l2_reg * X)2.3 DeepDream

“We pick some layer from the network, pass the starting image through the network to extract features at the chosen layer, set the gradient at that layer equal to the activations themselves, and then backpropagate to the image. This has the effect of modifying the image to amplify the activations at the chosen layer of the network.

For DeepDream we usually extract features from one of the convolutional layers, allowing us to generate images of any resolution.”
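A small sketch of the quoted recipe, assuming a single linear map followed by ReLU as the "chosen layer" (the weights `A`, the flattened input, and the step size are hypothetical). Setting the upstream gradient to the activations is the same as ascending half the squared norm of the activations, so the norm should not shrink from step to step.

```python
import numpy as np

rng = np.random.default_rng(0)
D, M = 12, 6
A = rng.normal(size=(D, M))               # hypothetical layer weights
X = rng.normal(size=(1, D))               # starting "image", flattened
learning_rate = 0.01

norms = []
for _ in range(10):
    pre = X @ A
    layer_out = np.maximum(pre, 0)        # forward to the chosen layer (ReLU activations)
    norms.append(np.linalg.norm(layer_out))
    dout = layer_out                      # DeepDream: gradient at the layer = activations
    dX = (dout * (pre > 0)) @ A.T         # backward through the ReLU and the linear map
    X += learning_rate * dX               # ascent step amplifies the activations
```

The list `norms` records the activation norm before each update, which makes the amplification easy to inspect.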

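layer_out, layer_cache = model.forward(X, start=None, end=layer, mode='test')
# Use the activations themselves as the upstream gradient.
dX, _ = model.backward(layer_out, layer_cache)
# Gradient ascent step that amplifies the activations.
X += learning_rate * dX

3. References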

- [1] Visualising image classification models and saliency maps (code)
- [2] Understanding Neural Networks Through Deep Visualization (post)
- [3] Inceptionism: Going Deeper into Neural Networks (post, code)
- [4] Understanding Deep Image Representations by Inverting Them (pdf, code)
- [5] Intriguing properties of neural networks (pdf)
