1. Softmax

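With n classes, the weight matrix W consists of n vectors, one per class. $\Theta_i$ is the angle between the feature vector x and the corresponding weight vector $w_i$. In softmax, $\Theta_1 < \Theta_2$ is enough to assign x to class 1 (taking two classes as the example, and assuming the weight vectors have equal norms and the biases are zero).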
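The angle view of softmax can be checked on a toy case. The snippet below is a minimal sketch: the vectors `w1`, `w2`, and `x` and the helper names are made up for illustration, and it assumes unit-norm weights and zero bias so that the larger logit corresponds to the smaller angle.

```python
import math

def angle_between(u, v):
    """Angle in radians between two vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot / (norm_u * norm_v))))

def softmax_predict(x, weights):
    """Index of the largest logit w_i . x (bias assumed to be zero)."""
    logits = [sum(a * b for a, b in zip(w, x)) for w in weights]
    return logits.index(max(logits))

# Hypothetical 2-D example with two unit-norm class weight vectors.
w1, w2 = [1.0, 0.0], [0.0, 1.0]
x = [2.0, 1.0]

theta1 = angle_between(x, w1)
theta2 = angle_between(x, w2)
print(theta1 < theta2)                    # True
print(softmax_predict(x, [w1, w2]) == 0)  # True
```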

2. L-Softmax

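L-Softmax was the first to propose the idea of an angular margin. It modifies softmax by introducing an angular margin coefficient m, a number greater than 1. x is assigned to class 1 only when $m\Theta_1 < \Theta_2$, which is a stricter criterion than that of softmax.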
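To make the stricter criterion concrete, here is a small sketch that compares the two decision rules on the same pair of angles. The function names and the example angles are illustrative assumptions, not taken from the L-Softmax paper.

```python
import math

def softmax_accepts(theta1, theta2):
    """Plain softmax rule: class 1 wins when its angle is smaller."""
    return theta1 < theta2

def l_softmax_accepts(theta1, theta2, m):
    """L-Softmax rule: class 1 wins only if m * theta1 is still smaller."""
    return m * theta1 < theta2

# A sample that softmax accepts but a margin of m = 2 rejects.
theta1, theta2 = math.radians(40), math.radians(60)
print(softmax_accepts(theta1, theta2))         # True
print(l_softmax_accepts(theta1, theta2, m=2))  # False

# A sample that sits much closer to w_1 passes both rules.
print(l_softmax_accepts(math.radians(25), theta2, m=2))  # True
```

During training this pushes features of class 1 toward $w_1$ until the gap to the other classes is at least a factor of m.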

3. A-Softmax

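A-Softmax is similar to L-Softmax. The difference is that it first L2-normalizes the weights w and sets the bias to 0, and only then introduces the angular margin coefficient m.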
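A sketch of how the target-class logit could be computed under these conditions. Because $\cos(m\Theta)$ is not monotonic on $[0, \pi]$, the A-Softmax (SphereFace) paper replaces it with the piecewise function $\psi(\Theta) = (-1)^k \cos(m\Theta) - 2k$ for $\Theta \in [k\pi/m, (k+1)\pi/m]$; that detail is not covered in the text above. The function names are illustrative, and m is assumed to be a positive integer.

```python
import math

def psi(theta, m):
    """Monotonically decreasing extension of cos(m * theta) on [0, pi]."""
    k = min(int(theta * m / math.pi), m - 1)  # index of the interval theta falls in
    return (-1) ** k * math.cos(m * theta) - 2 * k

def a_softmax_target_logit(x, w, m):
    """Target logit ||x|| * psi(theta): w is L2-normalized and the bias is 0."""
    x_norm = math.sqrt(sum(a * a for a in x))
    w_norm = math.sqrt(sum(b * b for b in w))
    cos_theta = sum(a * b for a, b in zip(x, w)) / (x_norm * w_norm)
    theta = math.acos(max(-1.0, min(1.0, cos_theta)))
    return x_norm * psi(theta, m)

# With m = 1 the margin disappears and the logit is just ||x|| * cos(theta).
print(a_softmax_target_logit([2.0, 1.0], [1.0, 0.0], m=1))
# With m = 4 the same sample gets a much lower target logit.
print(a_softmax_target_logit([2.0, 1.0], [1.0, 0.0], m=4))
```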

4. AM-Softmax

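After L2-normalizing the feature x and the weights w, AM-Softmax introduces a cosine margin m. x is assigned to class 1 only when $\cos\Theta_1 - m > \cos\Theta_2$. For two normalized vectors, the squared Euclidean distance is proportional to the cosine distance, so this condition effectively requires the normalized feature x to be close in Euclidean distance to the normalized weight $w_i$, that is, the angle between the two vectors must be small. The advantage of AM-Softmax over A-Softmax is that A-Softmax has to expand $\cos(m\Theta)$ with the multiple-angle formula, which makes the derivative awkward to compute during backpropagation, whereas subtracting a constant m leaves the derivative unchanged.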
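The loss for a single sample might look like the following sketch. It takes the cosines between the normalized feature and each normalized class weight as input; the scale `s=30.0` and margin `m=0.35` are illustrative defaults, and the function name is hypothetical.

```python
import math

def am_softmax_loss(cosines, target, s=30.0, m=0.35):
    """Cross-entropy over scaled cosines, with m subtracted from the target cosine."""
    logits = [s * (c - m) if j == target else s * c
              for j, c in enumerate(cosines)]
    # Log-sum-exp with the maximum subtracted for numerical stability.
    max_logit = max(logits)
    log_sum = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    return log_sum - logits[target]

# Cosines of one sample against three class weights; class 0 is the label.
cosines = [0.8, 0.1, -0.3]
print(am_softmax_loss(cosines, target=0, m=0.0))   # plain normalized softmax
print(am_softmax_loss(cosines, target=0, m=0.35))  # larger loss for the same sample
```

Since the margin is a constant offset on the target cosine, the derivative of the target logit with respect to $\cos\Theta$ is simply s, with or without the margin.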

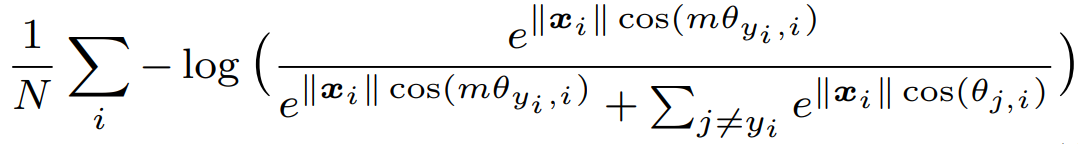

5. ArcFace

ArcFace L2-normalizes the feature x and the weights w, and introduces an additive angular margin m. x is assigned to class 1 only when $\Theta_1 + m < \Theta_2$, which is stricter than plain softmax.
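As a rough single-sample sketch of this rule turned into a loss: the target cosine is converted back to an angle, the margin is added, and the result goes through a scaled softmax cross-entropy. The defaults `s=30.0` and `m=0.5` and the function name are assumptions for illustration, and the sketch assumes $\Theta + m \le \pi$ so that $\cos(\Theta + m)$ stays monotonic.

```python
import math

def arcface_loss(cosines, target, s=30.0, m=0.5):
    """Cross-entropy over scaled cosines, with m added to the target angle."""
    theta = math.acos(max(-1.0, min(1.0, cosines[target])))
    logits = [s * c for c in cosines]
    logits[target] = s * math.cos(theta + m)  # additive angular margin
    # Log-sum-exp with the maximum subtracted for numerical stability.
    max_logit = max(logits)
    log_sum = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    return log_sum - logits[target]

# Cosines of one sample against three class weights; class 0 is the label.
cosines = [0.8, 0.1, -0.3]
print(arcface_loss(cosines, target=0, m=0.0))  # plain normalized softmax
print(arcface_loss(cosines, target=0, m=0.5))  # the margin raises the loss
```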

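Unlike AM-Softmax, ArcFace adds the margin to the angle rather than to the cosine, because a margin on the angle affects the classification more directly than a margin on the cosine.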

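There are two differences from A-Softmax:

1. A-Softmax multiplies the angle by a coefficient, whereas ArcFace adds a margin to the angle.

2. A-Softmax L2-normalizes only the weights w, whereas ArcFace normalizes both the weights w and the feature vector x, and then multiplies by a scale factor s.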
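The three margin types can be put side by side by looking at what each does to the target-class cosine for the same angle. This is only an illustrative sketch: the margin values are example choices, the function names are hypothetical, and the A-Softmax line uses the raw $\cos(m\Theta)$, which is valid only for $\Theta \le \pi/m$.

```python
import math

def a_softmax_target(theta, m=4):
    """Multiplicative angular margin: cos(m * theta), for theta <= pi / m."""
    return math.cos(m * theta)

def am_softmax_target(theta, m=0.35):
    """Additive cosine margin: cos(theta) - m."""
    return math.cos(theta) - m

def arcface_target(theta, m=0.5):
    """Additive angular margin: cos(theta + m)."""
    return math.cos(theta + m)

theta = 0.3  # radians, a sample already fairly close to its class weight
print("no margin :", math.cos(theta))
print("A-Softmax :", a_softmax_target(theta))
print("AM-Softmax:", am_softmax_target(theta))
print("ArcFace   :", arcface_target(theta))
```

All three lower the target value relative to $\cos\Theta$, so the network has to shrink the angle further to reach the same logit.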

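6. A key supplementary point:

For two normalized vectors, the squared Euclidean distance is proportional to the cosine distance: $\|a-b\|^2 = 2 - 2\cos\Theta = 2(1-\cos\Theta)$.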
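A quick numerical check of this relation, as a minimal sketch with two arbitrary example vectors. For unit vectors, $\|a-b\|^2 = \|a\|^2 + \|b\|^2 - 2a \cdot b = 2 - 2\cos\Theta$, which is twice the cosine distance $1 - \cos\Theta$.

```python
import math

def normalize(v):
    """Scale a vector (list of floats) to unit L2 norm."""
    norm = math.sqrt(sum(a * a for a in v))
    return [a / norm for a in v]

# Two arbitrary example vectors, normalized to unit length.
a = normalize([3.0, 4.0])
b = normalize([1.0, 2.0])

squared_distance = sum((p - q) ** 2 for p, q in zip(a, b))
cosine_distance = 1.0 - sum(p * q for p, q in zip(a, b))

print(math.isclose(squared_distance, 2.0 * cosine_distance))  # True
```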

7. XMind summary

8. ArcFace code

    # Model definition; the training script imports it as arcsoft.net.
    import os

    import matplotlib.pyplot as plt
    import torch
    import torch.nn as nn
    import torch.nn.functional as F


    class Net(nn.Module):
        """Small CNN for 28x28 MNIST images with a 2-D feature and an ArcFace head."""

        def __init__(self):
            super().__init__()
            # Trailing comments give the spatial size after each layer.
            self.layer1 = nn.Sequential(
                nn.Conv2d(1, 16, 3, 1),  # 26
                nn.BatchNorm2d(16),
                nn.PReLU(),
                nn.Conv2d(16, 32, 3, 1),  # 24
                nn.BatchNorm2d(32),
                nn.PReLU(),
                nn.MaxPool2d(2, 2),  # 12
                nn.Conv2d(32, 64, 3, 1),  # 10
                nn.BatchNorm2d(64),
                nn.PReLU(),
                nn.Conv2d(64, 64, 3, 1),  # 8
                nn.BatchNorm2d(64),
                nn.PReLU(),
                nn.Conv2d(64, 128, 3, 1),  # 6
                nn.BatchNorm2d(128),
                nn.PReLU(),
                nn.Conv2d(128, 128, 3, 1),  # 4
                nn.BatchNorm2d(128),
                nn.PReLU(),
                nn.MaxPool2d(2, 2)  # 2
            )
            # A 2-D feature so the embedding can be plotted directly.
            self.layer2 = nn.Linear(2 * 2 * 128, 2)
            self.arcsoft = ArcNet(10, 2)

        def visualize(self, feat, labels, epoch):
            """Scatter-plot the 2-D features, one color per digit, and save the figure."""
            color = ['#ff0000', '#ffff00', '#00ff00', '#00ffff', '#0000ff',
                     '#ff00ff', '#990000', '#999900', '#009900', '#009999']
            plt.clf()
            for i in range(10):
                plt.plot(feat[labels == i, 0], feat[labels == i, 1], '.', c=color[i])
            plt.legend(['0', '1', '2', '3', '4', '5', '6', '7', '8', '9'], loc='upper right')
            plt.title("epoch=%d" % epoch)
            os.makedirs('./images', exist_ok=True)  # savefig fails if the folder is missing
            plt.savefig('./images/epoch=%d.jpg' % epoch)

        def forward(self, x):
            x = self.layer1(x)
            x = x.view(x.size(0), -1)
            y_feature = self.layer2(x)  # N, 2
            # Log-probabilities, to be used with nn.NLLLoss.
            y_output = torch.log(self.arcsoft(y_feature))  # N, 10
            return y_feature, y_output


    class ArcNet(nn.Module):
        """ArcFace head: returns, for every class, its probability as if it were the target."""

        def __init__(self, cls_num, feature_dim):
            super().__init__()
            self.W = nn.Parameter(torch.randn(feature_dim, cls_num))

        def forward(self, feature, m=1, s=30):
            w = F.normalize(self.W, dim=0)  # 2, 10
            x = F.normalize(feature, dim=1)  # N, 2
            # x and w are unit-norm, so the product already is cos(theta); the
            # original divided it by s again, which squeezed every angle toward pi/2.
            # Clamp before acos because its gradient is infinite at +/-1.
            cosa = torch.matmul(x, w).clamp(-1 + 1e-6, 1 - 1e-6)  # N, 10
            a = torch.acos(cosa)  # N, 10
            # Note: m=1 rad is a large margin, and cos(a + m) stops being
            # monotonic once a + m exceeds pi.
            with_margin = torch.exp(s * torch.cos(a + m))  # N, 10
            plain = torch.exp(s * cosa)  # N, 10
            total = torch.sum(plain, dim=1, keepdim=True)  # N, 1
            # Swap each class's own term for its margin version in the denominator.
            return with_margin / (total - plain + with_margin)  # N, 10

    # Training script.
    import os

    import torch
    import torch.nn as nn
    import torch.utils.data as data
    import torchvision
    import torchvision.transforms as transforms

    from arcsoft.net import Net

    if __name__ == '__main__':
        save_path = "models/net_arcloss.pth"
        # Create the output folders up front so saving never fails.
        os.makedirs(os.path.dirname(save_path), exist_ok=True)
        os.makedirs("images", exist_ok=True)

        train_data = torchvision.datasets.MNIST(
            root="./MNIST", download=True, train=True,
            transform=transforms.Compose([
                transforms.ToTensor(),
                transforms.Normalize(mean=[0.5], std=[0.5]),
            ]))
        train_loader = data.DataLoader(dataset=train_data, shuffle=True,
                                       batch_size=200, num_workers=4)

        device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
        net = Net().to(device)

        if os.path.exists(save_path):
            # map_location lets a GPU checkpoint load on a CPU-only machine.
            net.load_state_dict(torch.load(save_path, map_location=device))
        else:
            print("No saved parameters found, training from scratch")

        # Net.forward already returns log-probabilities, hence NLLLoss.
        lossfn_cls = nn.NLLLoss()
        optimizer = torch.optim.Adam(net.parameters())

        for epoch in range(150):
            feat_loader = []
            label_loader = []
            for i, (x, y) in enumerate(train_loader):
                x = x.to(device)
                y = y.to(device)
                feature, output = net(x)  # [N, 2], [N, 10]
                loss = lossfn_cls(output, y)

                optimizer.zero_grad()
                loss.backward()
                optimizer.step()

                # Detach so the stored features do not keep every batch's graph alive.
                feat_loader.append(feature.detach().cpu())
                label_loader.append(y.cpu())

                if i % 120 == 0:
                    print("epoch:", epoch, "i:", i, "arcsoftmax_loss:", loss.item())

            # Plot the features collected over the whole epoch, then checkpoint.
            feat = torch.cat(feat_loader, 0)
            labels = torch.cat(label_loader, 0)
            net.visualize(feat.numpy(), labels.numpy(), epoch)
            torch.save(net.state_dict(), save_path)

9. Visualization results

softmax:

arcface:

Papers:

L-Softmax

A-Softmax

AM-Softmax

ArcFace

