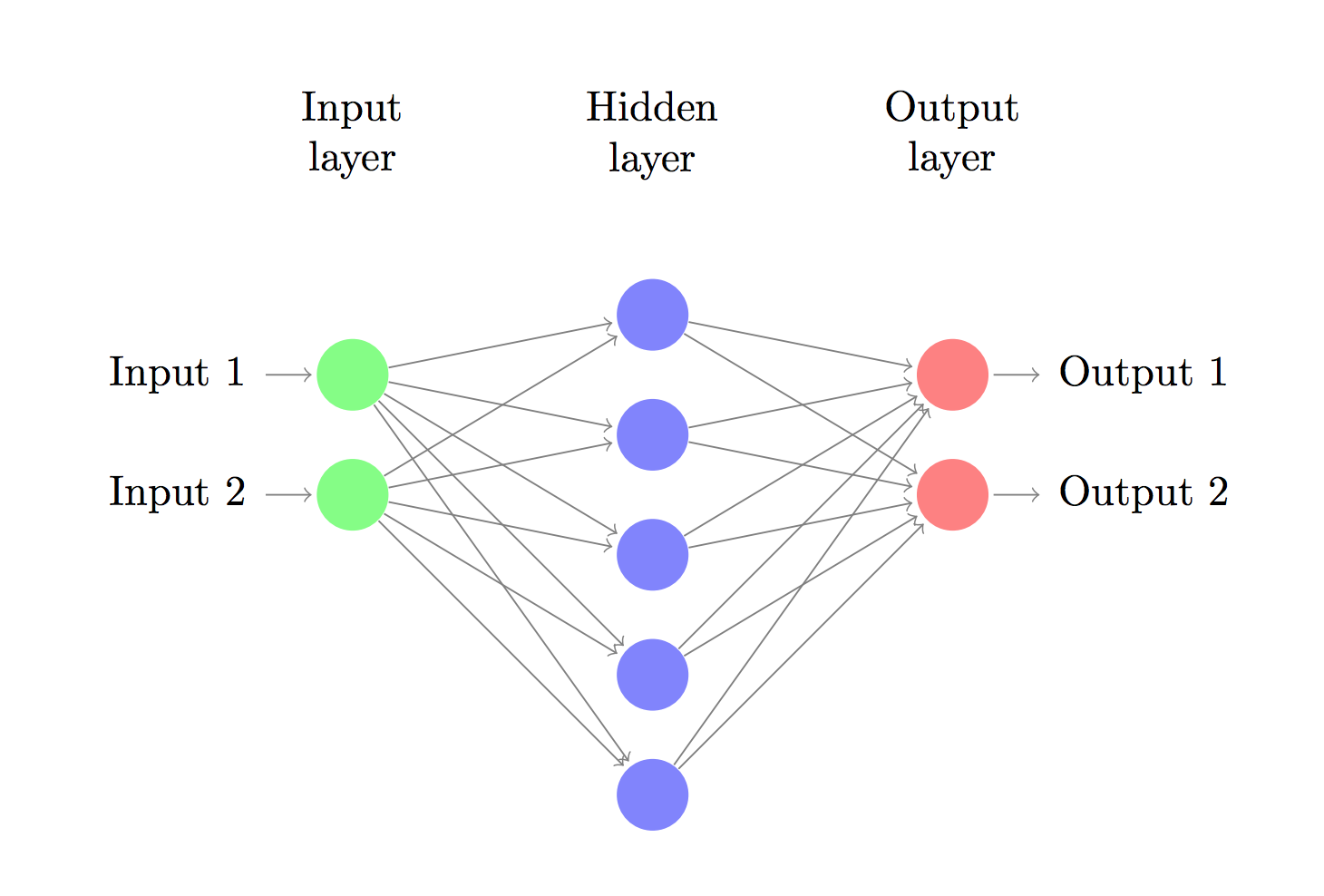

I. Building a simple neural network

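Two settings of a neural network have a major influence on how well it trains: how the weights W are initialized, and the learning rate learning_rate. Choosing these hyperparameters sensibly matters, and the simple experiments below show how each of them affects the network.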
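To make the effect of the weight scale concrete, here is a minimal sketch in plain Python (not TensorFlow, and not the article's own experiment). The hypothetical helper `forward` pushes one random input through a deep stack of tanh layers whose weights are drawn from N(0, std²), the same kind of random normal initialization the article's `weight()` helper uses. The depth (10 layers), width (100 units), tanh activation and the helpers `spread` and `saturated_fraction` are assumptions chosen only for illustration.

```python
import math
import random


def forward(std, n_layers=10, width=100, seed=0):
    """Push one random input through n_layers fully connected tanh layers
    whose weights are drawn from N(0, std**2); return the final activations."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(width)]
    for _ in range(n_layers):
        w = [[rng.gauss(0.0, std) for _ in range(width)] for _ in range(width)]
        x = [math.tanh(sum(wij * xj for wij, xj in zip(row, x))) for row in w]
    return x


def spread(x):
    """Standard deviation of the activations (how much signal is left)."""
    mean = sum(x) / len(x)
    return math.sqrt(sum((v - mean) ** 2 for v in x) / len(x))


def saturated_fraction(x, threshold=0.9):
    """Fraction of units stuck near +/-1, where tanh's gradient is tiny."""
    return sum(abs(v) > threshold for v in x) / len(x)


# Too small, roughly 1/sqrt(fan_in), and too large
for std in (0.01, 1 / math.sqrt(100), 1.0):
    out = forward(std)
    print(f"std={std:<6.3f} spread={spread(out):.2e} saturated={saturated_fraction(out):.0%}")
```

With std=0.01 the signal shrinks by about a factor of 10 per layer and all but vanishes; with std=1 most units saturate near ±1; a scale around 1/sqrt(fan_in), the idea behind LeCun/Xavier-style initialization, keeps activations in a usable range. The article's own network is shallow, so the effect there is much milder.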

II. Setting the learning rate

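```python
# Uses the TensorFlow 1.x API; the tensorflow.examples.tutorials.mnist
# module was removed in TensorFlow 2.
from tensorflow.examples.tutorials.mnist import input_data
import tensorflow as tf
import matplotlib.pyplot as plt
import csv

mnist = input_data.read_data_sets("MNIST_data/", one_hot=True)


def weight(shape):
    # Weights drawn from a normal distribution with a small standard deviation
    init = tf.random_normal(shape=shape, stddev=0.01)
    return tf.Variable(init)


def bias(shape):
    init = tf.random_normal(shape=shape, stddev=0.001)
    return tf.Variable(init)


def conv(x, w, k, b):
    # Convolution with stride k and no padding, then bias and ReLU
    return tf.nn.relu(tf.nn.bias_add(tf.nn.conv2d(x, w, strides=[1, k, k, 1], padding="VALID"), b))


def max_pool(x, k):
    # k x k max pooling with stride k
    return tf.nn.max_pool(x, ksize=[1, k, k, 1], strides=[1, k, k, 1], padding="VALID")


X = tf.placeholder(tf.float32, [None, 784])
Y = tf.placeholder(tf.float32, [None, 10])
X_input = tf.reshape(X, [-1, 28, 28, 1])

# Convolutional layer: 5x5 kernels, 1 -> 16 channels; 28x28 -> 24x24 (VALID)
w1 = weight([5, 5, 1, 16])
b1 = bias([16])
con1 = conv(X_input, w1, 1, b1)

# 2x2 max pooling: 24x24 -> 12x12, then flatten
pool1 = max_pool(con1, 2)
pool1 = tf.reshape(pool1, [-1, 12 * 12 * 16])

# Fully connected output layer producing the 10 class scores.
# Note: ELU is applied to the logits here; softmax cross-entropy is
# usually fed the raw linear output.
wc1 = weight([12 * 12 * 16, 10])
bc1 = bias([10])
fc1 = tf.nn.elu(tf.matmul(pool1, wc1) + bc1)

loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=Y, logits=fc1))
correct_pred = tf.equal(tf.argmax(Y, 1), tf.argmax(fc1, 1))
accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))
```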
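The trade-off the learning rate controls can be seen without a network at all. The sketch below is plain Python, not the article's training loop: a hypothetical helper `gradient_descent` minimizes the toy loss f(w) = w², which stands in for the network's loss, and runs 20 steps of plain gradient descent at three learning rates chosen for illustration.

```python
def gradient_descent(lr, steps=20, w0=5.0):
    """Minimize f(w) = w**2 (gradient 2*w) with plain gradient descent;
    return the loss after each step."""
    w = w0
    losses = []
    for _ in range(steps):
        w -= lr * 2 * w          # w <- w - lr * f'(w)
        losses.append(w * w)
    return losses


# Too small, reasonable, and too large
for lr in (0.01, 0.4, 1.1):
    losses = gradient_descent(lr)
    print(f"lr={lr}: loss after 20 steps = {losses[-1]:.3g}")
```

Each step multiplies w by (1 - 2*lr), so lr=0.01 converges slowly, lr=0.4 converges within a few steps, and any lr above 1 makes |w| grow so the loss diverges. In a real network the safe range depends on the curvature of the loss and is normally found by trying several values.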

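This article explores how the learning rate and the weight initialization affect a neural network's performance. The learning rate has to balance fast convergence against stability, while the choice of weight initialization, such as the standard deviation of a random normal distribution, affects how quickly the loss falls and how training converges. Appropriate data normalization and avoiding all-zero weights are important considerations for initialization: if all weights start out equal, every unit in a layer computes the same thing and receives the same update, so random initialization is needed to break this symmetry and let the network learn effectively.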
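The symmetry problem with all-zero weights can be reproduced in a few lines. This is a minimal sketch in plain Python, not the article's code: a hypothetical 2-3-1 network (sigmoid hidden layer, linear output, squared-error loss, no biases) is trained with per-sample SGD on a made-up four-sample dataset, once with every weight set to 0 and once with small random normal weights. The helper name `train`, the dataset and all constants are assumptions for illustration.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train(init, steps=200, lr=0.3, seed=0):
    """Train a 2-3-1 network (sigmoid hidden layer, linear output, squared error)
    with per-sample SGD; return the hidden-layer weights W (3 rows x 2 inputs)."""
    rng = random.Random(seed)
    data = [((0.0, 1.0), 1.0), ((1.0, 0.0), 0.0), ((1.0, 1.0), 1.0), ((0.0, 0.0), 0.0)]
    W = [[init(rng) for _ in range(2)] for _ in range(3)]  # input -> hidden
    v = [init(rng) for _ in range(3)]                      # hidden -> output
    for _ in range(steps):
        for x, y in data:
            h = [sigmoid(sum(wij * xj for wij, xj in zip(row, x))) for row in W]
            err = sum(vj * hj for vj, hj in zip(v, h)) - y  # d(0.5*err**2)/d(output)
            for j in range(3):
                grad_h = err * v[j] * h[j] * (1.0 - h[j])   # backprop through sigmoid
                for i in range(2):
                    W[j][i] -= lr * grad_h * x[i]
                v[j] -= lr * err * h[j]
    return W


W_zero = train(lambda rng: 0.0)
W_rand = train(lambda rng: rng.gauss(0.0, 0.5))
print("zero init:  ", [[round(w, 3) for w in row] for row in W_zero])
print("random init:", [[round(w, 3) for w in row] for row in W_rand])
```

In the zero-initialized run the weights do change, but every hidden unit receives exactly the same gradient, so the three rows of `W` stay identical and the hidden layer behaves like a single unit. Random initialization breaks this symmetry from the first step.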
