当你安装了tensorflow后,tensorflow自带的教程演示了如何使用卷积神经网络来识别手写数字。代码路径为tensorflow-master\tensorflow\examples\tutorials\mnist\mnist_deep.py。

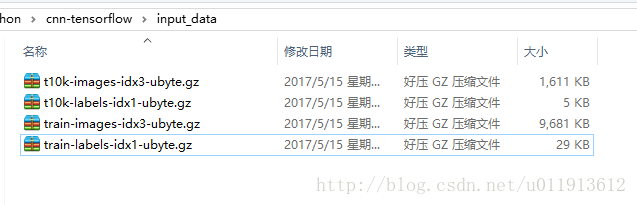

为了快速测试该程序,我提前将需要的mnist手写数字库下载到了工程目录(我在pycharm中新建了工程,并把mnist_deep.py中的代码拷贝过去)下的input_data目录下:

然后,需要修改程序中指定mnist图片库路径的代码,

if __name__ == '__main__':

print("main run")

parser = argparse.ArgumentParser()

parser.add_argument('--data_dir', type=str,

**default='input_data'**,

help='Directory for storing input data')

FLAGS, unparsed = parser.parse_known_args()

tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)将这里的default的值改为input_data即可。然后程序就可以运行了。默认会训练20000批次,每个批次50个数据。运行完成后准确率达到99.2%。

接下来,这里会对该程序做一点分析,并且做一些修改,来验证一些咱们的猜想,并且加深对代码的理解。

# Copyright 2015 The TensorFlow Authors. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# ==============================================================================

"""A deep MNIST classifier using convolutional layers.

See extensive documentation at

https://www.tensorflow.org/get_started/mnist/pros

"""

# Disable linter warnings to maintain consistency with tutorial.

# pylint: disable=invalid-name

# pylint: disable=g-bad-import-order

from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

import argparse

import sys

from tensorflow.examples.tutorials.mnist import input_data

import tensorflow as tf

FLAGS = None

def deepnn(x):

"""deepnn builds the graph for a deep net for classifying digits.

Args:

x: an input tensor with the dimensions (N_examples, 784), where 784 is the

number of pixels in a standard MNIST image.

Returns:

A tuple (y, keep_prob). y is a tensor of shape (N_examples, 10), with values

equal to the logits of classifying the digit into one of 10 classes (the

digits 0-9). keep_prob is a scalar placeholder for the probability of

dropout.

"""

# Reshape to use within a convolutional neural net.

# Last dimension is for "features" - there is only one here, since images are

# grayscale -- it would be 3 for an RGB image, 4 for RGBA, etc.

x_image = tf.reshape(x, [-1, 28, 28, 1])

# First convolutional layer - maps one grayscale image to 32 feature maps.

W_conv1 = weight_variable([5, 5, 1, 32])

b_conv1 = bias_variable([32])

h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)

# Pooling layer - downsamples by 2X.

h_pool1 = max_pool_2x2(h_conv1)

# Second convolutional layer -- maps 32 feature maps to 64.

W_conv2 = weight_variable([5, 5, 32, 64])

b_conv2 = bias_variable([64])

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)

# Second pooling layer.

h_pool2 = max_pool_2x2(h_conv2)

# Fully connected layer 1 -- after 2 round of downsampling, our 28x28 image

# is down to 7x7x64 feature maps -- maps this to 1024 features.

W_fc1 = weight_variable([7 * 7 * 64, 1024])

b_fc1 = bias_variable([1024])

h_pool2_flat = tf.reshape(h_pool2, [-1, 7*

本文介绍了使用TensorFlow的卷积神经网络(CNN)识别MNIST手写数字的步骤,包括数据加载、网络构建、训练过程以及激活函数的探讨。通过增加卷积层和尝试不同激活函数,分析了模型性能,并展示了参数的保存与恢复方法。

本文介绍了使用TensorFlow的卷积神经网络(CNN)识别MNIST手写数字的步骤,包括数据加载、网络构建、训练过程以及激活函数的探讨。通过增加卷积层和尝试不同激活函数,分析了模型性能,并展示了参数的保存与恢复方法。

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

265

265

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?