Figure 1-1: C3 structure

Figure 1-2: BottleNeck and ConvBNSiLU structure

The code for Conv, BottleNeck, and C3 is as follows:

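    import torch
    import torch.nn as nn


    def autopad(k, p=None):  # kernel, padding
        # Pad to 'same' (helper from YOLOv5's models/common.py)
        if p is None:
            p = k // 2 if isinstance(k, int) else [x // 2 for x in k]
        return p


    class Conv(nn.Module):
        # Standard convolution block: one convolution, one BatchNorm, one activation.
        # The activation defaults to SiLU and can be overridden by passing an nn.Module.
        # When activation is disabled, nn.Identity() acts as a placeholder that returns its input unchanged.
        def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=True):  # ch_in, ch_out, kernel, stride, padding, groups
            super().__init__()
            self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False)
            self.bn = nn.BatchNorm2d(c2)
            self.act = nn.SiLU() if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

        def forward(self, x):
            return self.act(self.bn(self.conv(x)))

        def fuseforward(self, x):
            # Forward pass without BatchNorm, for use once BN has been fused into the convolution
            return self.act(self.conv(x))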

    class Bottleneck(nn.Module):
        # Standard bottleneck (residual block)
        def __init__(self, c1, c2, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, shortcut, groups, expansion
            super().__init__()
            c_ = int(c2 * e)  # hidden channels
            self.cv1 = Conv(c1, c_, 1, 1)
            self.cv2 = Conv(c_, c2, 3, 1, g=g)
            self.add = shortcut and c1 == c2

        def forward(self, x):
            # Add the skip connection only when shortcut is enabled and input/output channels match
            return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

    class C3(nn.Module):
        # CSP Bottleneck with 3 convolutions
        def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, number, shortcut, groups, expansion
            super().__init__()
            c_ = int(c2 * e)  # hidden channels
            self.cv1 = Conv(c1, c_, 1, 1)
            self.cv2 = Conv(c1, c_, 1, 1)
            self.cv3 = Conv(2 * c_, c2, 1)  # act=FReLU(c2)
            self.m = nn.Sequential(*[Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)])  # n residual blocks (Bottleneck)
            # self.m = nn.Sequential(*[CrossConv(c_, c_, 3, 1, g, 1.0, shortcut) for _ in range(n)])

        def forward(self, x):
            # Concatenate the Bottleneck branch and the cv2 branch along channels, then fuse with cv3
            return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), dim=1))
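The shape bookkeeping in `C3.forward` can be traced by hand without running PyTorch. The following is a minimal sketch in plain Python; the helper names `conv_out_size` and `c3_channel_trace` are hypothetical and not part of YOLOv5, and the padding rule assumes an integer kernel with the default `k // 2` padding that `autopad` produces.

```python
def conv_out_size(size, k=1, s=1, p=None):
    """Spatial output size of a convolution; p=None means 'same'-style padding k // 2."""
    if p is None:
        p = k // 2
    return (size + 2 * p - k) // s + 1


def c3_channel_trace(c1, c2, e=0.5):
    """Channel counts at each stage of C3.forward for an input with c1 channels."""
    c_ = int(c2 * e)  # hidden channels, as in C3.__init__
    return {
        "cv1 branch (into the Bottlenecks)": c_,
        "Bottleneck stack output": c_,  # Bottleneck(c_, c_) keeps the channel count
        "cv2 branch": c_,
        "after torch.cat on dim=1": 2 * c_,
        "cv3 output": c2,
    }


if __name__ == "__main__":
    print(conv_out_size(640, k=3, s=1))  # stride 1 keeps the spatial size
    print(conv_out_size(640, k=3, s=2))  # stride 2 halves it
    for stage, channels in c3_channel_trace(128, 128).items():
        print(f"{stage}: {channels}")
```

Every convolution inside C3 uses stride 1, so the module changes only the channel count, never the spatial size. The concatenation doubles the hidden width to `2 * c_`, which is why `cv3` takes `2 * c_` input channels.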

2. Lightweight C3 Module

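The idea behind the lightweight C3 is to replace every ordinary convolution in the original C3 module with a depthwise separable convolution while leaving the rest of the structure unchanged. The modified DP_Conv, DP_BottleNeck, and DP_C3 code is as follows: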

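    class DP_Conv(nn.Module):
        # Depthwise separable convolution: 3x3 depthwise conv, 1x1 pointwise conv, BatchNorm, activation.
        # k, p and g are accepted only so the signature matches Conv; the depthwise kernel is fixed at 3x3.
        def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=True):  # ch_in, ch_out, kernel, stride, padding, groups
            super().__init__()
            # The two layers need distinct names: assigning both to self.conv would overwrite the depthwise layer
            self.dw_conv = nn.Conv2d(c1, c1, kernel_size=3, stride=1, padding=1, groups=c1, bias=False)  # depthwise
            self.pw_conv = nn.Conv2d(c1, c2, kernel_size=1, stride=s, bias=False)  # pointwise; bias omitted because BN follows
            self.bn = nn.BatchNorm2d(c2)
            self.act = nn.SiLU() if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

        def forward(self, x):
            return self.act(self.bn(self.pw_conv(self.dw_conv(x))))

        def fuseforward(self, x):
            # Forward pass without BatchNorm
            return self.act(self.pw_conv(self.dw_conv(x)))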

    class DP_Bottleneck(nn.Module):
        # Bottleneck (residual block) built from depthwise separable convolutions
        def __init__(self, c1, c2, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, shortcut, groups, expansion
            super().__init__()
            c_ = int(c2 * e)  # hidden channels
            self.cv1 = DP_Conv(c1, c_, 1)
            self.cv2 = DP_Conv(c_, c2, 1)
            self.add = shortcut and c1 == c2

        def forward(self, x):
            # Add the skip connection only when shortcut is enabled and input/output channels match
            return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

    class DP_C3(nn.Module):
        # CSP Bottleneck with 3 depthwise separable convolutions
        def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, number, shortcut, groups, expansion
            super().__init__()
            c_ = int(c2 * e)  # hidden channels
            self.cv1 = DP_Conv(c1, c_, 1)
            self.cv2 = DP_Conv(c1, c_, 1)
            self.cv3 = DP_Conv(2 * c_, c2, 1)  # act=FReLU(c2)
            self.m = nn.Sequential(*[DP_Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)])  # n residual blocks (DP_Bottleneck)
            # self.m = nn.Sequential(*[CrossConv(c_, c_, 3, 1, g, 1.0, shortcut) for _ in range(n)])

        def forward(self, x):
            # Concatenate the DP_Bottleneck branch and the cv2 branch along channels, then fuse with cv3
            return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), dim=1))
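To get a feel for how much the replacement saves, the convolution weights of both modules can be counted by hand. The sketch below is a back-of-the-envelope estimate in plain Python, not a measurement. It assumes each DP_Conv is a 3x3 depthwise convolution followed by a 1x1 pointwise convolution, and it ignores BatchNorm parameters and biases. All function names are hypothetical.

```python
def conv_weights(c_in, c_out, k):
    """Weight count of a standard k x k convolution (no bias)."""
    return k * k * c_in * c_out


def dp_conv_weights(c_in, c_out, k=3):
    """Weight count of a k x k depthwise conv plus a 1 x 1 pointwise conv (no bias)."""
    return k * k * c_in + c_in * c_out


def c3_weights(c1, c2, n=1, e=0.5):
    """Convolution weights in C3: cv1, cv2, cv3 plus n Bottlenecks (1x1 then 3x3)."""
    c_ = int(c2 * e)
    stem = 2 * conv_weights(c1, c_, 1) + conv_weights(2 * c_, c2, 1)
    block = conv_weights(c_, c_, 1) + conv_weights(c_, c_, 3)
    return stem + n * block


def dp_c3_weights(c1, c2, n=1, e=0.5):
    """Convolution weights in DP_C3: every Conv replaced by a depthwise separable one."""
    c_ = int(c2 * e)
    stem = 2 * dp_conv_weights(c1, c_) + dp_conv_weights(2 * c_, c2)
    block = 2 * dp_conv_weights(c_, c_)
    return stem + n * block


if __name__ == "__main__":
    print("C3:   ", c3_weights(128, 128))
    print("DP_C3:", dp_c3_weights(128, 128))
```

For `c1 = c2 = 128` and `n = 1`, this counts 73,728 weights for C3 and 45,568 for DP_C3. The saving comes entirely from the 3x3 convolution inside each Bottleneck. Each 1x1 convolution actually gains `9 * c_in` depthwise weights when it is replaced, so the benefit grows with `n`.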

The modified network structure is as follows:

    # parameters
    nc: 20  # number of classes
    depth_multiple: 0.33  # model depth multiple
    width_multiple: 0.50  # layer channel multiple

    # anchors
    anchors:
      - [10,13, 16,30, 33,23]  # P3/8
      - [30,61, 62,45, 59,119]  # P4/16
      - [116,90, 156,198, 373,326]  # P5/32

    # YOLOv5 backbone
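The `depth_multiple` and `width_multiple` values above scale the repeat count and the channel width of each layer listed in the model file. The following is a minimal sketch of that scaling, modelled on how YOLOv5's model parser is generally understood to work. The helper names `scale_depth` and `scale_width` are hypothetical, and rounding channels up to a multiple of 8 is an assumption.

```python
import math


def scale_depth(n, depth_multiple):
    """Scaled repeat count for a module listed with n repeats; single modules are left alone."""
    return max(round(n * depth_multiple), 1) if n > 1 else n


def scale_width(c2, width_multiple, divisor=8):
    """Scaled output channels, rounded up to a multiple of `divisor`."""
    return math.ceil(c2 * width_multiple / divisor) * divisor


if __name__ == "__main__":
    for n in (1, 3, 6, 9):
        print(f"repeats {n} -> {scale_depth(n, 0.33)}")
    for c in (64, 128, 256, 512, 1024):
        print(f"channels {c} -> {scale_width(c, 0.50)}")
```

With `depth_multiple: 0.33`, a module listed with 3 repeats is built once, and with `width_multiple: 0.50` a layer listed with 128 output channels is built with 64.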

## 学习路线:

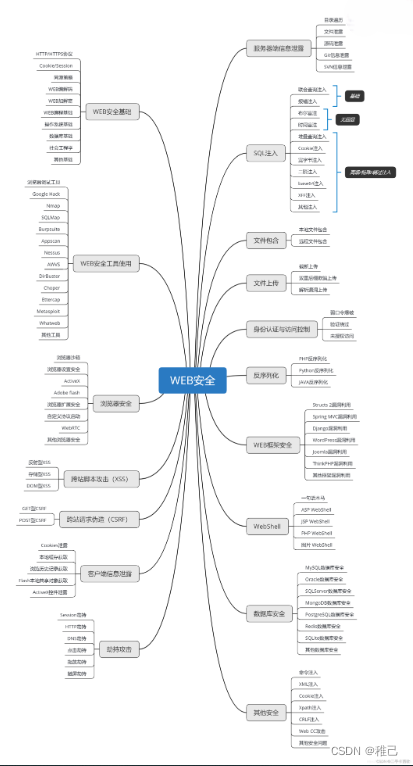

这个方向初期比较容易入门一些,掌握一些基本技术,拿起各种现成的工具就可以开黑了。不过,要想从脚本小子变成黑客大神,这个方向越往后,需要学习和掌握的东西就会越来越多以下是网络渗透需要学习的内容:

**网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。**

**[需要这份系统化资料的朋友,可以点击这里获取](https://bbs.csdn.net/topics/618540462)**

**一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!**

1894

1894

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?