Music-to-Dance Generation

1. EDGE: Editable Dance Generation From Music

Abstract

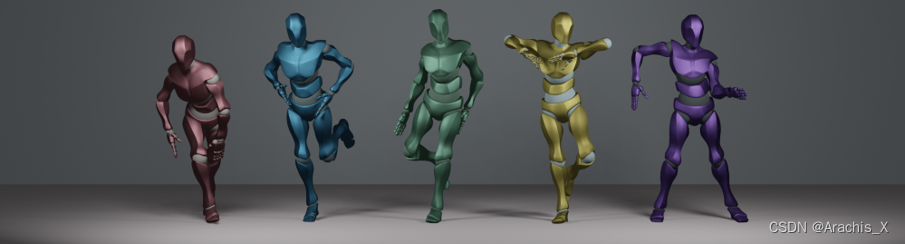

Dance is an important human art form, but creating new dances can be difficult and time-consuming. In this work, we introduce Editable Dance GEneration (EDGE), a state-of-the-art method for editable dance generation that is capable of creating realistic, physically-plausible dances while remaining faithful to the input music. EDGE uses a transformer-based diffusion model paired with Jukebox, a strong music feature extractor, and confers powerful editing capabilities well-suited to dance, including joint-wise conditioning, and in-betweening. We introduce a new metric for physical plausibility, and evaluate dance quality generated by our method extensively through (1) multiple quantitative metrics on physical plausibility, beat alignment, and diversity benchmarks, and more importantly, (2) a large-scale user study, demonstrating a significant improvement over previous state-of-the-art methods. Qualitative samples from our model can be found at our website.

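Dance is an important human art form, but creating a new dance is both difficult and time-consuming.

In this work, we introduce Editable Dance GEneration (EDGE), a state-of-the-art method for editable dance generation that produces realistic, physically plausible dances while staying faithful to the input music.

EDGE pairs a transformer-based diffusion model with Jukebox, a strong music feature extractor, and offers editing capabilities well suited to dance, including joint-wise conditioning (constraining selected body joints) and in-betweening (generating the motion between given frames).

We introduce a new metric for physical plausibility and evaluate the quality of the generated dances extensively through (1) multiple quantitative metrics covering physical plausibility, beat alignment, and diversity, and, more importantly, (2) a large-scale user study. The results show a significant improvement over previous state-of-the-art methods.

Qualitative samples from the model are available on the project website.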
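The abstract names the two editing modes but does not spell out how they work. A common way to impose such constraints on a diffusion sampler is inpainting-style masking: after every denoising step, the constrained entries are overwritten with the reference motion noised to the current level. The sketch below illustrates that idea only and is not the authors' implementation. `make_mask`, `toy_denoiser`, `constrained_sampling`, and the linear noise schedule are all hypothetical, and each joint is reduced to a single number to keep the example short.

```python
import random


def make_mask(num_frames, num_joints, fixed_frames=(), fixed_joints=()):
    """Return mask[f][j] = True where the reference motion must be kept.

    fixed_frames pins whole frames (in-betweening);
    fixed_joints pins selected joints in every frame (joint-wise conditioning).
    """
    frames, joints = set(fixed_frames), set(fixed_joints)
    return [[(f in frames) or (j in joints) for j in range(num_joints)]
            for f in range(num_frames)]


def toy_denoiser(motion, step):
    """Hypothetical stand-in for a trained denoising network (not EDGE's model)."""
    return [[0.9 * value for value in frame] for frame in motion]


def constrained_sampling(reference, mask, num_steps=10, seed=0):
    """Toy reverse-diffusion loop that re-imposes the constraint at every step."""
    rng = random.Random(seed)
    # Start from pure Gaussian noise with the same shape as the reference.
    motion = [[rng.gauss(0.0, 1.0) for _ in frame] for frame in reference]
    for step in reversed(range(num_steps)):
        motion = toy_denoiser(motion, step)
        # Toy schedule (an assumption): the noise level reaches 0 at the last step.
        noise_scale = step / num_steps
        for f, frame in enumerate(reference):
            for j, ref_value in enumerate(frame):
                if mask[f][j]:
                    # Overwrite constrained entries with the noised reference.
                    motion[f][j] = ref_value + noise_scale * rng.gauss(0.0, 1.0)
    return motion


# Usage: pin the first and last frame, plus joint 2 in every frame.
reference = [[1.0] * 4 for _ in range(8)]
mask = make_mask(8, 4, fixed_frames=[0, 7], fixed_joints=[2])
edited = constrained_sampling(reference, mask)
```

Because the noise level is zero at the final step, the pinned frames and joints come out equal to the reference, while the remaining entries are whatever the denoiser produced.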

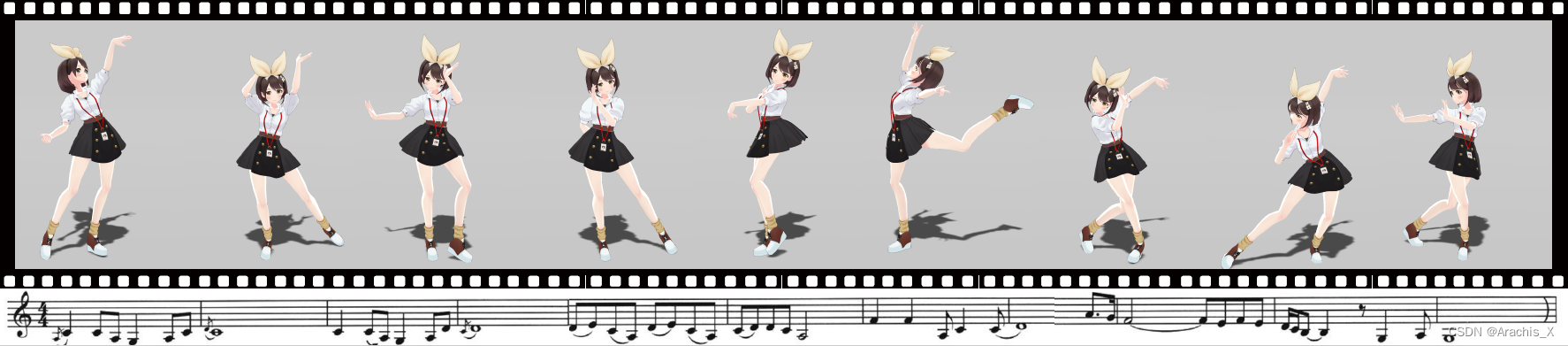

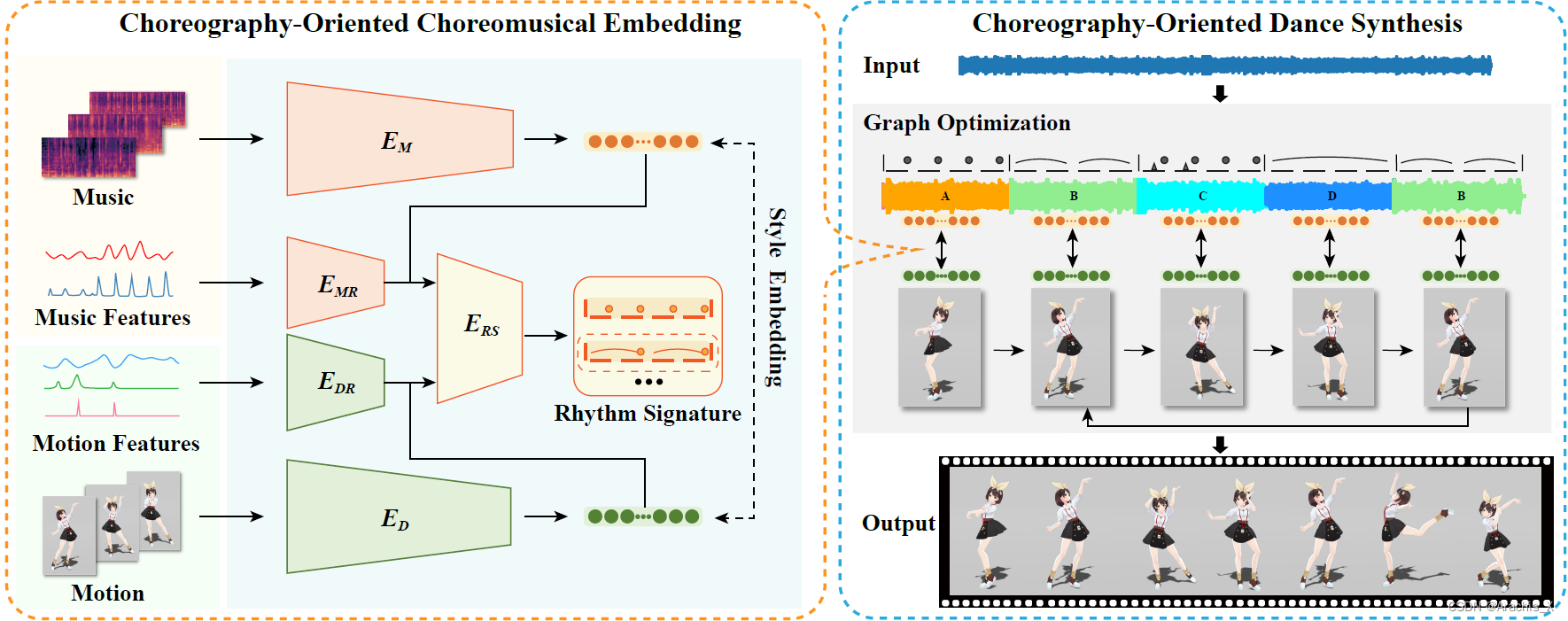

2. ChoreoMaster: Choreography-Oriented Music-Driven Dance Synthesis

SIGGRAPH 2021

Paper link

Project page

Paper walkthrough

Code: none

3. ChoreoNet: Towards Music to Dance Synthesis with Choreographic Action Unit

2020.9

Paper link

Code: none

4. Music2Dance: DanceNet for Music-driven Dance Generation

2020.2 Computer Vision and Pattern Recognition

Paper link

Code: none
