1. Overview

Paper: https://arxiv.org/abs/2405.12979

Code: https://hwjiang1510.github.io/OmniGlue/ (OmniGlue: Generalizable Feature Matching with Foundation Model Guidance)

Most existing work on local feature matching still focuses on specific visual domains with abundant training data, and current models generalize poorly to other domains. OmniGlue is described as the first learnable image matcher designed with generalization as a core principle, improving generalization to domains not seen during training.

2. Methodology

2.1 Framework

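OmniGlue uses the broad knowledge of the vision foundation model DINOv2 to guide feature matching, and it introduces a novel keypoint position-guided attention mechanism that separates spatial information from appearance information to produce better matching descriptors. It uses SuperPoint for keypoint detection and descriptor extraction, and builds densely connected keypoint graphs within each image and across the two images. Information is then propagated through self-attention and cross-attention, with keypoint positions used only as guidance, which avoids over-reliance on the training distribution. Finally, an optimized matching layer produces the keypoint correspondences between the two images.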

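- DINOv2: a self-supervised method for pretraining image encoders on large image datasets to obtain semantically meaningful visual features. These features can be used across a wide range of vision tasks and reach performance comparable to supervised models without fine-tuning.

- SuperPoint: a keypoint detector and descriptor. During training it applies many random scale and viewpoint transformations to each image, so that the network learns to detect the same feature points across different viewpoints and scales.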
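
To make the idea of separating spatial and appearance information more concrete, the following NumPy sketch computes single-head self-attention in which positional embeddings enter only the queries and keys, while the values come from the appearance descriptors alone. This is a simplified illustration under assumptions, not the OmniGlue implementation: the function name `position_guided_attention`, the plain residual update, the weight matrices, and the random inputs are all hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def position_guided_attention(desc, pos_emb, w_q, w_k, w_v):
    """Hypothetical single-head attention with position used only as guidance.

    desc:    (N, D) appearance descriptors of N keypoints.
    pos_emb: (N, D) positional embeddings of the same keypoints.
    w_q, w_k, w_v: (D, D) projection matrices (illustrative).
    """
    # Position influences WHERE each keypoint attends (queries and keys)...
    q = (desc + pos_emb) @ w_q
    k = (desc + pos_emb) @ w_k
    # ...but not WHAT is aggregated: values use appearance only.
    v = desc @ w_v
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)
    # Simple residual update; the real model's update may differ.
    return desc + weights @ v, weights


rng = np.random.default_rng(0)
n, d = 5, 8
desc = rng.normal(size=(n, d))
pos_emb = rng.normal(size=(n, d))
w_q, w_k, w_v = (rng.normal(size=(d, d)) * 0.1 for _ in range(3))
updated, weights = position_guided_attention(desc, pos_emb, w_q, w_k, w_v)
```

Because the positional term never reaches the values, the refined descriptors stay in appearance space, which is one plausible reading of why position-only guidance reduces dependence on the training distribution.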

2.2 Demo Walkthrough

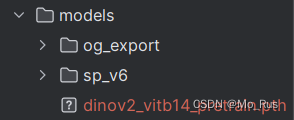

Model files that need to be downloaded for the demo

Main code:

omniglue_extract.py

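This module wraps TensorFlow and the custom feature extraction models (SuperPoint and DINO) to perform image matching using the extracted features and the trained matching model (og_export). It finds and filters matching keypoints between two images, and returns the matched keypoint locations together with their confidence scores.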
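
Since `FindMatches` returns matched keypoints together with confidence scores, and the internal `MATCH_THRESHOLD` of 1e-3 is quite permissive, a caller may want to apply a stricter cutoff afterwards. The sketch below shows one way to do that. The helper name `filter_matches_by_confidence`, the threshold of 0.02, and the small arrays standing in for the return values are assumptions chosen for illustration.

```python
import numpy as np


def filter_matches_by_confidence(kp0, kp1, conf, threshold):
    """Keep only the matches whose confidence exceeds `threshold`.

    kp0, kp1: (M, 2) matched keypoint coordinates in image 0 and image 1.
    conf:     (M,) confidence score of each match.
    """
    keep = conf > threshold
    return kp0[keep], kp1[keep], conf[keep]


# Stand-ins for the three arrays returned by FindMatches (illustrative values).
kp0 = np.array([[10.0, 20.0], [30.0, 40.0], [50.0, 60.0]])
kp1 = np.array([[12.0, 21.0], [33.0, 41.0], [55.0, 66.0]])
conf = np.array([0.90, 0.01, 0.35])

f0, f1, fc = filter_matches_by_confidence(kp0, kp1, conf, threshold=0.02)
```

A suitable threshold depends on the images and the downstream task, so the value here should be treated as a starting point rather than a recommendation.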

    import numpy as np
    from omniglue import dino_extract
    from omniglue import superpoint_extract
    from omniglue import utils
    import tensorflow as tf

    # DINO_FEATURE_DIM is the dimensionality of the DINO features.
    # MATCH_THRESHOLD is the threshold used to filter matches by confidence score.
    DINO_FEATURE_DIM = 768
    MATCH_THRESHOLD = 1e-3


    # Define the OmniGlue class.
    class OmniGlue:
      # TODO(omniglue): class docstring

      def __init__(
          self,
          og_export: str,
          sp_export: str | None = None,
          dino_export: str | None = None,
      ) -> None:
        # Load the exported matching model, then the optional feature extractors.
        self.matcher = tf.saved_model.load(og_export)
        if sp_export is not None:
          self.sp_extract = superpoint_extract.SuperPointExtract(sp_export)
        if dino_export is not None:
          self.dino_extract = dino_extract.DINOExtract(dino_export, feature_layer=1)

      # FindMatches finds matching keypoints between two images.
      def FindMatches(self, image0: np.ndarray, image1: np.ndarray):
        """TODO(omniglue): docstring."""
        height0, width0 = image0.shape[:2]
        height1, width1 = image1.shape[:2]

        # Extract features with SuperPoint.
        # Index 0 holds keypoints, 1 descriptors, 2 scores (see _construct_inputs).
        sp_features0 = self.sp_extract(image0)
        sp_features1 = self.sp_extract(image1)

        # Extract features with DINO.
        dino_features0 = self.dino_extract(image0)
        dino_features1 = self.dino_extract(image1)

        # Compute DINO descriptors at the SuperPoint keypoint locations.
        dino_descriptors0 = dino_extract.get_dino_descriptors(
            dino_features0,
            tf.convert_to_tensor(sp_features0[0], dtype=tf.float32),
            tf.convert_to_tensor(height0, dtype=tf.int32),
            tf.convert_to_tensor(width0, dtype=tf.int32),
            DINO_FEATURE_DIM,
        )
        dino_descriptors1 = dino_extract.get_dino_descriptors(
            dino_features1,
            tf.convert_to_tensor(sp_features1[0], dtype=tf.float32),
            tf.convert_to_tensor(height1, dtype=tf.int32),
            tf.convert_to_tensor(width1, dtype=tf.int32),
            DINO_FEATURE_DIM,
        )

        # Build the matching model's inputs from the extracted features and
        # descriptors.
        inputs = self._construct_inputs(
            width0,
            height0,
            width1,
            height1,
            sp_features0,
            sp_features1,
            dino_descriptors0,
            dino_descriptors1,
        )

        # Run the saved model to obtain the matching output. The slice drops the
        # last row and column (presumably the "unmatched" entries).
        og_outputs = self.matcher.signatures['serving_default'](**inputs)
        soft_assignment = og_outputs['soft_assignment'][:, :-1, :-1]

        # Convert the soft assignment into a match matrix using the threshold.
        match_matrix = (
            utils.soft_assignment_to_match_matrix(soft_assignment, MATCH_THRESHOLD)
            .numpy()
            .squeeze()
        )

        # Filter matches by SuperPoint keypoint score: drop any match in which
        # either keypoint has a score of 0.
        match_indices = np.argwhere(match_matrix)
        keep = []
        for i in range(match_indices.shape[0]):
          match = match_indices[i, :]
          if (sp_features0[2][match[0]] > 0.0) and (
              sp_features1[2][match[1]] > 0.0
          ):
            keep.append(i)
        match_indices = match_indices[keep]

        # Format the matched keypoints and their confidences for output.
        match_kp0s = []
        match_kp1s = []
        match_confidences = []
        for match in match_indices:
          match_kp0s.append(sp_features0[0][match[0], :])
          match_kp1s.append(sp_features1[0][match[1], :])
          match_confidences.append(soft_assignment[0, match[0], match[1]])
        match_kp0s = np.array(match_kp0s)
        match_kp1s = np.array(match_kp1s)
        match_confidences = np.array(match_confidences)
        return match_kp0s, match_kp1s, match_confidences

      ### Private methods ###

      # Build and return the dictionary of tensors (inputs) required by the
      # matching model: keypoints, descriptors, scores, and image sizes. Each
      # entry gets a leading batch dimension of 1.
      def _construct_inputs(
          self,
          width0,
          height0,
          width1,
          height1,
          sp_features0,
          sp_features1,
          dino_descriptors0,
          dino_descriptors1,
      ):
        inputs = {
            'keypoints0': tf.convert_to_tensor(
                np.expand_dims(sp_features0[0], axis=0),
                dtype=tf.float32,
            ),
            'keypoints1': tf.convert_to_tensor(
                np.expand_dims(sp_features1[0], axis=0), dtype=tf.float32
            ),
            'descriptors0': tf.convert_to_tensor(
                np.expand_dims(sp_features0[1], axis=0), dtype=tf.float32
            ),
            'descriptors1': tf.convert_to_tensor(
                np.expand_dims(sp_features1[1], axis=0), dtype=tf.float32
            ),
            'scores0': tf.convert_to_tensor(
                np.expand_dims(np.expand_dims(sp_features0[2], axis=0), axis=-1),
                dtype=tf.float32,
            ),
            'scores1': tf.convert_to_tensor(
                np.expand_dims(np.expand_dims(sp_features1[2], axis=0), axis=-1),
                dtype=tf.float32,
            ),
            'descriptors0_dino': tf.expand_dims(dino_descriptors0, axis=0),
            'descriptors1_dino': tf.expand_dims(dino_descriptors1, axis=0),
            'width0': tf.convert_to_tensor(
                np.expand_dims(width0, axis=0), dtype=tf.int32
            ),
            'width1': tf.convert_to_tensor(
                np.expand_dims(width1, axis=0), dtype=tf.int32
            ),
            'height0': tf.convert_to_tensor(
                np.expand_dims(height0, axis=0), dtype=tf.int32
            ),
            'height1': tf.convert_to_tensor(
                np.expand_dims(height1, axis=0), dtype=tf.int32
            ),
        }
        return inputs
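
The two post-processing steps in `FindMatches` (turning the soft assignment into a match matrix, then discarding matches that involve zero-score keypoints) can be sketched in plain NumPy as follows. This is an illustration under assumptions: it supposes that the conversion keeps mutual best matches whose score exceeds the threshold, which may differ from what `utils.soft_assignment_to_match_matrix` actually does. The function name `soft_assignment_to_matches` and all numeric values are hypothetical.

```python
import numpy as np


def soft_assignment_to_matches(soft_assignment, threshold):
    """Assumed rule: keep mutual best matches with score above `threshold`.

    soft_assignment: (N0, N1) matrix of match scores.
    Returns a boolean (N0, N1) match matrix.
    """
    best1_for0 = soft_assignment.argmax(axis=1)  # best column for each row
    best0_for1 = soft_assignment.argmax(axis=0)  # best row for each column
    rows = np.arange(soft_assignment.shape[0])
    mutual = best0_for1[best1_for0] == rows
    strong = soft_assignment[rows, best1_for0] > threshold
    valid = mutual & strong
    match_matrix = np.zeros_like(soft_assignment, dtype=bool)
    match_matrix[rows[valid], best1_for0[valid]] = True
    return match_matrix


# Illustrative 3x3 soft assignment (unmatched row/column already removed).
scores = np.array([
    [0.80, 0.10, 0.0005],
    [0.05, 0.0008, 0.0002],
    [0.10, 0.70, 0.01],
])
match_matrix = soft_assignment_to_matches(scores, threshold=1e-3)
match_indices = np.argwhere(match_matrix)

# Vectorized version of the keypoint-score filter used in the listing:
# drop matches where either keypoint has a detector score of 0.
kp_scores0 = np.array([0.9, 0.5, 0.0])
kp_scores1 = np.array([0.7, 0.6, 0.4])
keep = (kp_scores0[match_indices[:, 0]] > 0.0) & (
    kp_scores1[match_indices[:, 1]] > 0.0
)
filtered_indices = match_indices[keep]
```

In this toy input, row 1 prefers column 0, but column 0 prefers row 0, so row 1 is left unmatched; the match between row 2 and column 1 is then removed because keypoint 2 in the first image has a score of 0.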
