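This post is part of a series on the programming assignments for Andrew Ng's Coursera DeepLearning.ai courses. It covers the Week 2 assignment, "Optimization algorithms", from the course "Improving Deep Neural Networks: Hyperparameter tuning, Regularization and Optimization", and adds some helper test functions.

The lecture notes for this week are in "Andrew Ng's Coursera Deep Learning Course DeepLearning.ai: Condensed Notes (2-2) - Optimization Algorithms". Suggestions and questions are welcome in the comments.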

Optimization Methods

Until now, you’ve always used Gradient Descent to update the parameters and minimize the cost. In this notebook, you will learn more advanced optimization methods that can speed up learning and perhaps even get you to a better final value for the cost function. Having a good optimization algorithm can be the difference between waiting days vs. just a few hours to get a good result.

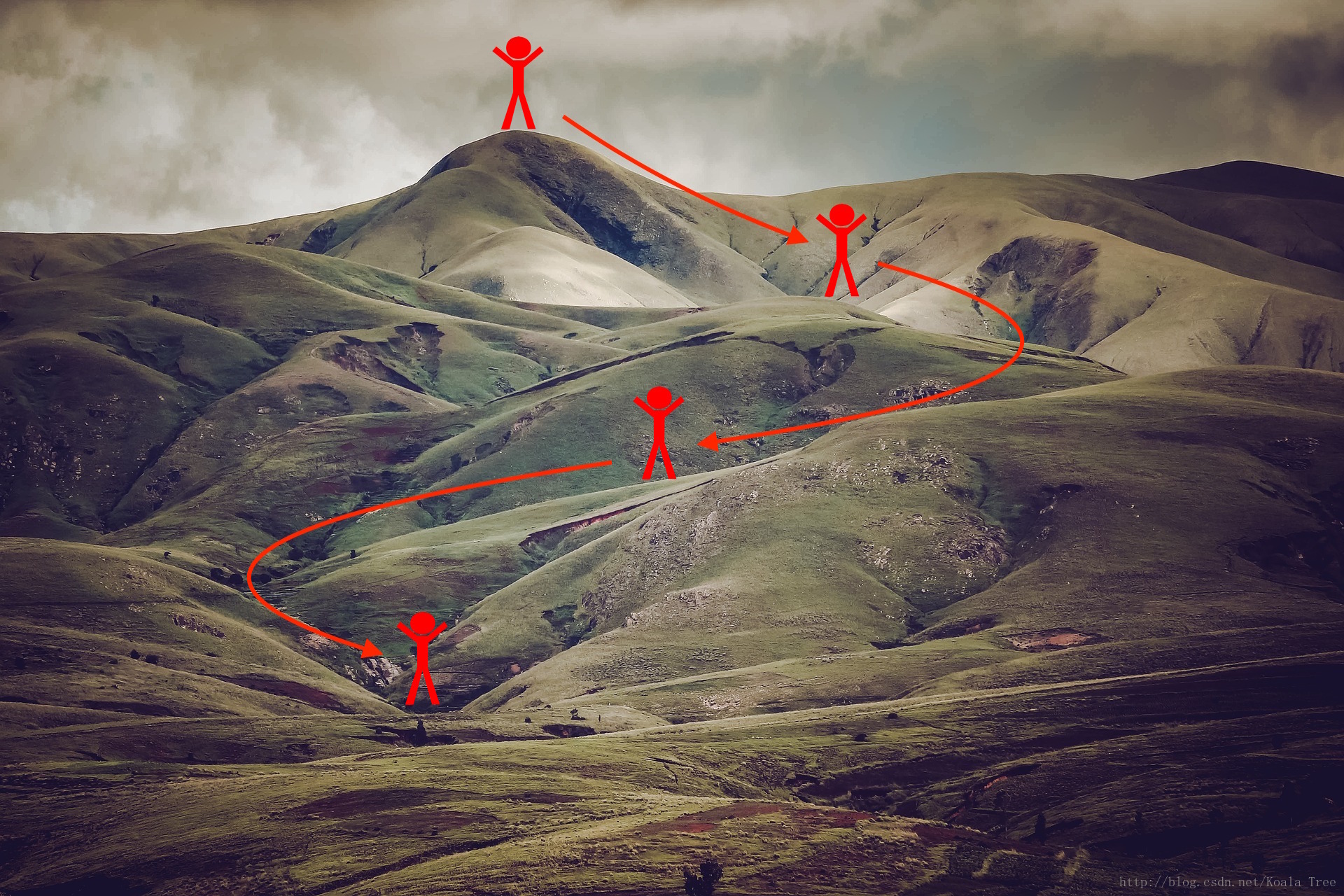

Gradient descent goes “downhill” on a cost function J J . Think of it as trying to do this:

At each step of the training, you update your parameters following a certain direction to try to get to the lowest possible point.

Notations: As usual,

da for any variable a.
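To make the "downhill" picture concrete, here is a minimal sketch on a one-dimensional toy cost. The function name `gradient_descent_1d`, the cost $J(w) = (w - 3)^2$, and the step settings are illustrative assumptions and are not part of the assignment.

```python
def gradient_descent_1d(grad, w0, learning_rate=0.1, num_steps=100):
    """Repeatedly step against the gradient, starting from w0 (illustrative helper)."""
    w = w0
    for _ in range(num_steps):
        w = w - learning_rate * grad(w)  # move downhill by learning_rate * dJ/dw
    return w

# Toy cost J(w) = (w - 3)^2, so dw = dJ/dw = 2 * (w - 3); the minimum is at w = 3.
w_final = gradient_descent_1d(lambda w: 2.0 * (w - 3.0), w0=0.0)
print(w_final)  # very close to 3
```

With this learning rate, each step shrinks the distance to the minimum by a constant factor of 0.8, which is why the iterate settles at the lowest point.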

To get started, run the following code to import the libraries you will need.

import numpy as np
import matplotlib.pyplot as plt
import scipy.io
import math
import sklearn
import sklearn.datasets

from opt_utils import load_params_and_grads, initialize_parameters, forward_propagation, backward_propagation
from opt_utils import compute_cost, predict, predict_dec, plot_decision_boundary, load_dataset
from testCases import *

%matplotlib inline
plt.rcParams['figure.figsize'] = (7.0, 4.0) # set default size of plots
plt.rcParams['image.interpolation'] = 'nearest'
plt.rcParams['image.cmap'] = 'gray'

You can get the support code from here.

Here are some helper functions:

def load_params_and_grads(seed=1):
    np.random.seed(seed)
    W1 = np.random.randn(2,3)
    b1 = np.random.randn(2,1)
    W2 = np.random.randn(3,3)
    b2 = np.random.randn(3,1)

    dW1 = np.random.randn(2,3)
    db1 = np.random.randn(2,1)
    dW2 = np.random.randn(3,3)
    db2 = np.random.randn(3,1)

    return W1, b1, W2, b2, dW1, db1, dW2, db2

def initialize_parameters(layer_dims):
    """
    Arguments:
    layer_dims -- python array (list) containing the dimensions of each layer in our network

    Returns:
    parameters -- python dictionary containing your parameters "W1", "b1", ..., "WL", "bL":
                    Wl -- weight matrix of shape (layer_dims[l], layer_dims[l-1])
                    bl -- bias vector of shape (layer_dims[l], 1)

    Tips:
    - For example: the layer_dims for the "Planar Data classification model" would have been [2,2,1].
    This means W1's shape was (2,2), b1 was (2,1), W2 was (1,2) and b2 was (1,1). Now you have to generalize it!
    - In the for loop, use parameters['W' + str(l)] to access Wl, where l is the iterative integer.
    """

    np.random.seed(3)
    parameters = {}
    L = len(layer_dims) # number of layers in the network, counting the input layer

    for l in range(1, L):
        # He initialization: scale the weights by sqrt(2 / fan_in)
        parameters['W' + str(l)] = np.random.randn(layer_dims[l], layer_dims[l-1]) * np.sqrt(2 / layer_dims[l-1])
        parameters['b' + str(l)] = np.zeros((layer_dims[l], 1))

        # Compare against shape tuples; assert(x == a, b) tests a non-empty tuple and always passes
        assert parameters['W' + str(l)].shape == (layer_dims[l], layer_dims[l-1])
        assert parameters['b' + str(l)].shape == (layer_dims[l], 1)

    return parameters

def forward_propagation(X, parameters):
    """
    Implements the forward propagation presented in Figure 2.

    Arguments:
    X -- input dataset, of shape (input size, number of examples)
    parameters -- python dictionary containing your parameters "W1", "b1", "W2", "b2", "W3", "b3":
                    W1 -- weight matrix of shape ()
                    b1 -- bias vector of shape ()
                    W2 -- weight matrix of shape ()
                    b2 -- bias vector of shape ()
                    W3 -- weight matrix of shape ()
                    b3 -- bias vector of shape ()

    Returns:
    a3 -- output of the last (sigmoid) activation, of shape (1, number of examples)
    cache -- tuple of intermediate values needed by backward_propagation()
    """

    # retrieve parameters
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    W3 = parameters["W3"]
    b3 = parameters["b3"]

    # LINEAR -> RELU -> LINEAR -> RELU -> LINEAR -> SIGMOID
    # relu() and sigmoid() are element-wise helpers from opt_utils that are not listed here
    z1 = np.dot(W1, X) + b1
    a1 = relu(z1)
    z2 = np.dot(W2, a1) + b2
    a2 = relu(z2)
    z3 = np.dot(W3, a2) + b3
    a3 = sigmoid(z3)

    cache = (z1, a1, W1, b1, z2, a2, W2, b2, z3, a3, W3, b3)

    return a3, cache

def backward_propagation(X, Y, cache):
    """
    Implement the backward propagation presented in figure 2.

    Arguments:
    X -- input dataset, of shape (input size, number of examples)
    Y -- true "label" vector (0 or 1 for each example), of shape (1, number of examples)
    cache -- cache output from forward_propagation()

    Returns:
    gradients -- A dictionary with the gradients with respect to each parameter, activation and pre-activation variables
    """
    m = X.shape[1]
    (z1, a1, W1, b1, z2, a2, W2, b2, z3, a3, W3, b3) = cache

    # Output layer: sigmoid combined with the cross-entropy cost
    dz3 = 1./m * (a3 - Y)
    dW3 = np.dot(dz3, a2.T)
    db3 = np.sum(dz3, axis=1, keepdims = True)

    # Hidden layer 2: the ReLU derivative is 1 where the activation is positive, else 0
    da2 = np.dot(W3.T, dz3)
    dz2 = np.multiply(da2, np.int64(a2 > 0))
    dW2 = np.dot(dz2, a1.T)
    db2 = np.sum(dz2, axis=1, keepdims = True)

    # Hidden layer 1
    da1 = np.dot(W2.T, dz2)
    dz1 = np.multiply(da1, np.int64(a1 > 0))
    dW1 = np.dot(dz1, X.T)
    db1 = np.sum(dz1, axis=1, keepdims = True)

    gradients = {"dz3": dz3, "dW3": dW3, "db3": db3,
                 "da2": da2, "dz2": dz2, "dW2": dW2, "db2": db2,
                 "da1": da1, "dz1": dz1, "dW1": dW1, "db1": db1}

    return gradients

def compute_cost(a3, Y):
    """
    Implement the cost function

    Arguments:
    a3 -- post-activation, output of forward propagation
    Y -- "true" labels vector, same shape as a3

    Returns:
    cost - value of the cost function
    """
    m = Y.shape[1]

    logprobs = np.multiply(-np.log(a3),Y) + np.multiply(-np.log(1 - a3), 1 - Y)
    cost = 1./m * np.sum(logprobs)

    return cost

def predict(X, y, parameters):
    """
    This function is used to predict the results of an n-layer neural network.

    Arguments:
    X -- data set of examples you would like to label
    y -- true labels for X, of shape (1, number of examples), used to report accuracy
    parameters -- parameters of the trained model

    Returns:
    p -- predictions for the given dataset X
    """

    m = X.shape[1]
    # np.int was removed in NumPy 1.24; the builtin int is the drop-in replacement
    p = np.zeros((1,m), dtype = int)

    # Forward propagation
    a3, caches = forward_propagation(X, parameters)

    # convert probas to 0/1 predictions
    for i in range(0, a3.shape[1]):
        if a3[0,i] > 0.5:
            p[0,i] = 1
        else:
            p[0,i] = 0

    # print results
    #print ("predictions: " + str(p[0,:]))
    #print ("true labels: " + str(y[0,:]))
    print("Accuracy: " + str(np.mean((p[0,:] == y[0,:]))))

    return p

def predict_dec(parameters, X):
    """
    Used for plotting decision boundary.

    Arguments:
    parameters -- python dictionary containing your parameters
    X -- input data of size (m, K)

    Returns
    predictions -- vector of predictions of our model (red: 0 / blue: 1)
    """

    # Predict using forward propagation and a classification threshold of 0.5
    a3, cache = forward_propagation(X, parameters)
    predictions = (a3 > 0.5)
    return predictions

def plot_decision_boundary(model, X, y):
    # Set min and max values and give it some padding
    x_min, x_max = X[0, :].min() - 1, X[0, :].max() + 1
    y_min, y_max = X[1, :].min() - 1, X[1, :].max() + 1
    h = 0.01
    # Generate a grid of points with distance h between them
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))
    # Predict the function value for the whole grid
    Z = model(np.c_[xx.ravel(), yy.ravel()])
    Z = Z.reshape(xx.shape)
    # Plot the contour and training examples
    plt.contourf(xx, yy, Z, cmap=plt.cm.Spectral)
    plt.ylabel('x2')
    plt.xlabel('x1')
    # Flatten y so a (1, m) label array matches the m plotted points
    plt.scatter(X[0, :], X[1, :], c=np.ravel(y), cmap=plt.cm.Spectral)
    plt.show()

def load_dataset():
    np.random.seed(3)
    train_X, train_Y = sklearn.datasets.make_moons(n_samples=300, noise=.2) #300 #0.2
    # Visualize the data
    plt.scatter(train_X[:, 0], train_X[:, 1], c=train_Y, s=40, cmap=plt.cm.Spectral)
    train_X = train_X.T
    train_Y = train_Y.reshape((1, train_Y.shape[0]))

    return train_X, train_Y

1 - Gradient Descent

A simple optimization method in machine learning is gradient descent (GD). When you take gradient steps with respect to all $m$ examples on each step, it is also called Batch Gradient Descent.

Warm-up exercise: Implement the gradient descent update rule. The gradient descent rule is, for $l = 1, ..., L$:

$$W^{[l]} = W^{[l]} - \alpha \, dW^{[l]}$$

$$b^{[l]} = b^{[l]} - \alpha \, db^{[l]}$$

where L is the number of layers and $\alpha$ is the learning rate. All parameters should be stored in the parameters dictionary. Note that the iterator l starts at 0 in the for loop while the first parameters are $W^{[1]}$ and $b^{[1]}$. You need to shift l to l+1 when coding.

# GRADED FUNCTION: update_parameters_with_gd

def update_parameters_with_gd(parameters, grads, learning_rate):
    """
    Update parameters using one step of gradient descent

    Arguments:
    parameters -- python dictionary containing your parameters to be updated:
                    parameters['W' + str(l)] = Wl
                    parameters['b' + str(l)] = bl
    grads -- python dictionary containing your gradients to update each parameters:
                    grads['dW' + str(l)] = dWl
                    grads['db' + str(l)] = dbl
    learning_rate -- the learning rate, scalar.

    Returns:
    parameters -- python dictionary containing your updated parameters
    """

    L = len(parameters) // 2 # number of layers in the neural networks

    # Update rule for each parameter
    for l in range(L):
        ### START CODE HERE ### (approx. 2 lines)
        parameters["W" + str(l+1)] = parameters["W" + str(l+1)] - learning_rate*grads["dW" + str(l+1)]
        parameters["b" + str(l+1)] = parameters["b" + str(l+1)] - learning_rate*grads["db" + str(l+1)]
        ### END CODE HERE ###

    return parameters

parameters, grads, learning_rate = update_parameters_with_gd_test_case()

parameters = update_parameters_with_gd(parameters, grads, learning_rate)
print("W1 = " + str(parameters["W1"]))
print("b1 = " + str(parameters["b1"]))
print("W2 = " + str(parameters["W2"]))
print("b2 = " + str(parameters["b2"]))

Output:

W1 = [[ 1.63535156 -0.62320365 -0.53718766]
 [-1.07799357  0.85639907 -2.29470142]]
b1 = [[ 1.74604067]
 [-0.75184921]]
W2 = [[ 0.32171798 -0.25467393  1.46902454]
 [-2.05617317 -0.31554548 -0.3756023 ]
 [ 1.1404819  -1.09976462 -0.1612551 ]]
b2 = [[-0.88020257]
 [ 0.02561572]
 [ 0.57539477]]

A variant of this is Stochastic Gradient Descent (SGD), which is equivalent to mini-batch gradient descent where each mini-batch has just 1 example. The update rule that you have just implemented does not change. What changes is that you would be computing gradients on just one training example at a time, rather than on the whole training set. The code examples below illustrate the difference between stochastic gradient descent and (batch) gradient descent.

- (Batch) Gradient Descent:

X = data_input
Y = labels
parameters = initialize_parameters(layers_dims)
for i in range(0, num_iterations):
    # Forward propagation
    a, caches = forward_propagation(X, parameters)
    # Compute cost.
    cost = compute_cost(a, Y)
    # Backward propagation (arguments follow the helper defined above).
    grads = backward_propagation(X, Y, caches)
    # Update parameters.
    parameters = update_parameters_with_gd(parameters, grads, learning_rate)

- Stochastic Gradient Descent:

X = data_input

Y = labels

parameters = initialize_parameters(layers_dims)

for i in range(0, num_iterations):

for j in range(0, m):

# Forward propagation

a, caches = forward_propagation(X[:,j], parameters)

# Compute cost

cost = compute_cost(a, Y[:,j])

# Backward propagation

grads = backward_propagation(a, caches, parameters)

# Update parameters.

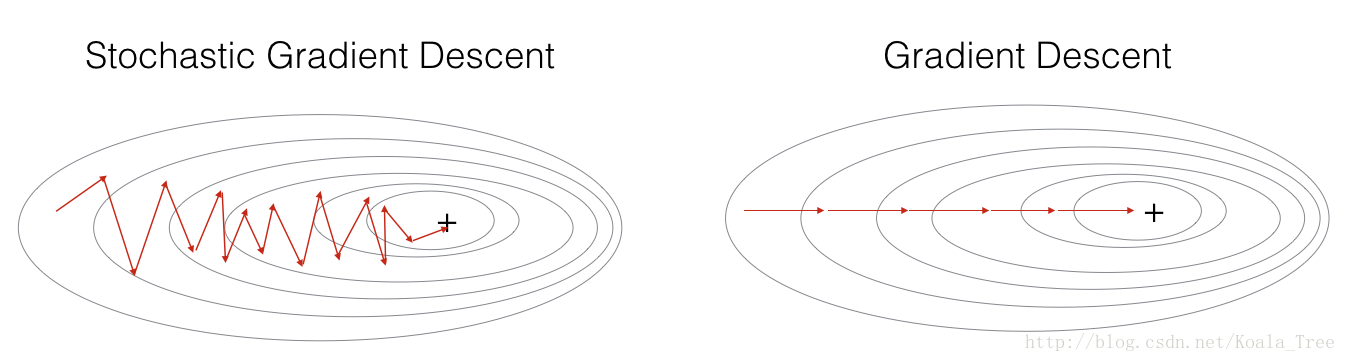

parameters = update_parameters(parameters, grads)In Stochastic Gradient Descent, you use only 1 training example before updating the gradients. When the training set is large, SGD can be faster. But the parameters will “oscillate” toward the minimum rather than converge smoothly. Here is an illustration of this:

“+” denotes a minimum of the cost. SGD leads to many oscillations to reach convergence. But each step is a lot faster to compute for SGD than for GD, as it uses only one training example (vs. the whole batch for GD).

Note also that implementing SGD requires 3 for-loops in total:

1. Over the number of iterations

2. Over the $m$ training examples

3. Over the layers (to update all parameters, from $(W^{[1]}, b^{[1]})$ to $(W^{[L]}, b^{[L]})$)
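The three nested loops can be sketched on a toy problem. This is only an illustration under stated assumptions: a single linear "layer" fitted to noiseless data from y = 2x + 1 with a squared-error cost, instead of the three-layer network and logistic cost used in this assignment. The data, learning rate and epoch count are made up for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
m = 200
X = rng.normal(size=(1, m))   # one feature, m examples stored as columns
Y = 2.0 * X + 1.0             # noiseless targets from y = 2x + 1

parameters = {"W1": np.zeros((1, 1)), "b1": np.zeros((1, 1))}
learning_rate = 0.05
num_epochs = 20

for epoch in range(num_epochs):                  # loop 1: passes over the data
    for j in range(m):                           # loop 2: one training example at a time
        x_j = X[:, j:j + 1]                      # j:j+1 keeps the (1, 1) column shape
        y_j = Y[:, j:j + 1]
        error = parameters["W1"] @ x_j + parameters["b1"] - y_j
        # Gradients of the per-example cost 0.5 * error^2
        grads = {"dW1": error @ x_j.T, "db1": error}
        for l in range(1, len(parameters) // 2 + 1):   # loop 3: every layer
            parameters["W" + str(l)] -= learning_rate * grads["dW" + str(l)]
            parameters["b" + str(l)] -= learning_rate * grads["db" + str(l)]

print(parameters["W1"], parameters["b1"])  # close to 2 and 1
```

The innermost loop has a single iteration here because the toy model has one layer; with the network from this assignment it would run over l = 1, 2, 3.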

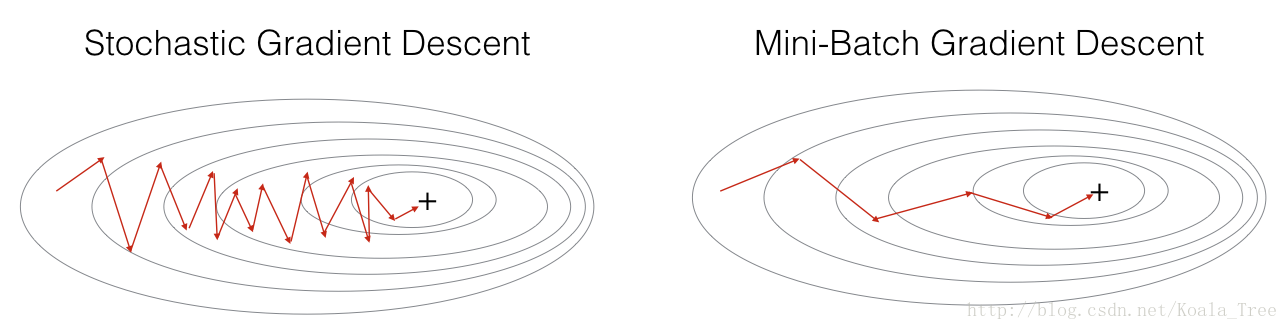

In practice, you’ll often get faster results if you do not use neither the whole training set, nor only one training example, to perform each update. Mini-batch gradient descent uses an intermediate number of examples for each step. With mini-batch gradient descent, you loop over the mini-batches instead of looping over individual training examples.

“+” denotes a minimum of the cost. Using mini-batches in your optimization algorithm often leads to faster optimization.

What you should remember:

- The difference between gradient descent, mini-batch gradient descent and stochastic gradient descent is the number of examples you use to perform one update step.

- You have to tune a learning rate hyperparameter $\alpha$.

- With a well-tuned mini-batch size, mini-batch gradient descent usually outperforms both gradient descent and stochastic gradient descent (particularly when the training set is large).
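As a small worked example of the first point, the number of parameter updates in one pass over the data depends only on the mini-batch size. The helper name `updates_per_epoch` is an assumption made for this illustration; m = 300 matches the dataset built by load_dataset above.

```python
import math

def updates_per_epoch(m, mini_batch_size):
    """Number of parameter updates in one pass over m examples (illustrative helper)."""
    return math.ceil(m / mini_batch_size)

m = 300  # number of examples produced by load_dataset()
print(updates_per_epoch(m, m))    # batch gradient descent: 1 update per pass
print(updates_per_epoch(m, 64))   # mini-batch gradient descent: 5 updates per pass
print(updates_per_epoch(m, 1))    # stochastic gradient descent: 300 updates per pass
```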

2 - Mini-Batch Gradient descent

Let’s learn how to build mini-batches from the training set (X, Y).

There are two steps:

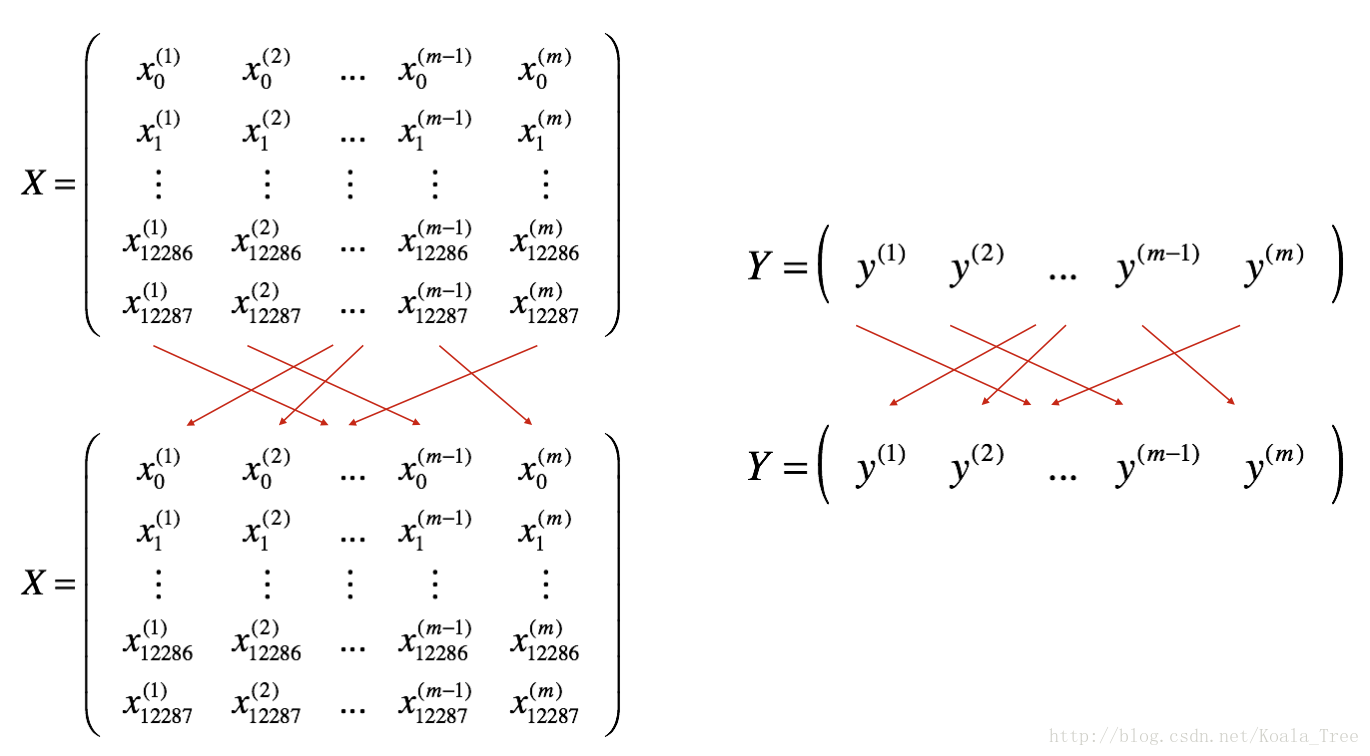

- Shuffle: Create a shuffled version of the training set (X, Y) as shown below. Each column of X and Y represents a training example. Note that the random shuffling is done synchronously between X and Y. Such that after the shuffling the ith i t h column of X is the example corresponding to the ith i t h label in Y. The shuffling step ensures that examples will be split randomly into different mini-batches.

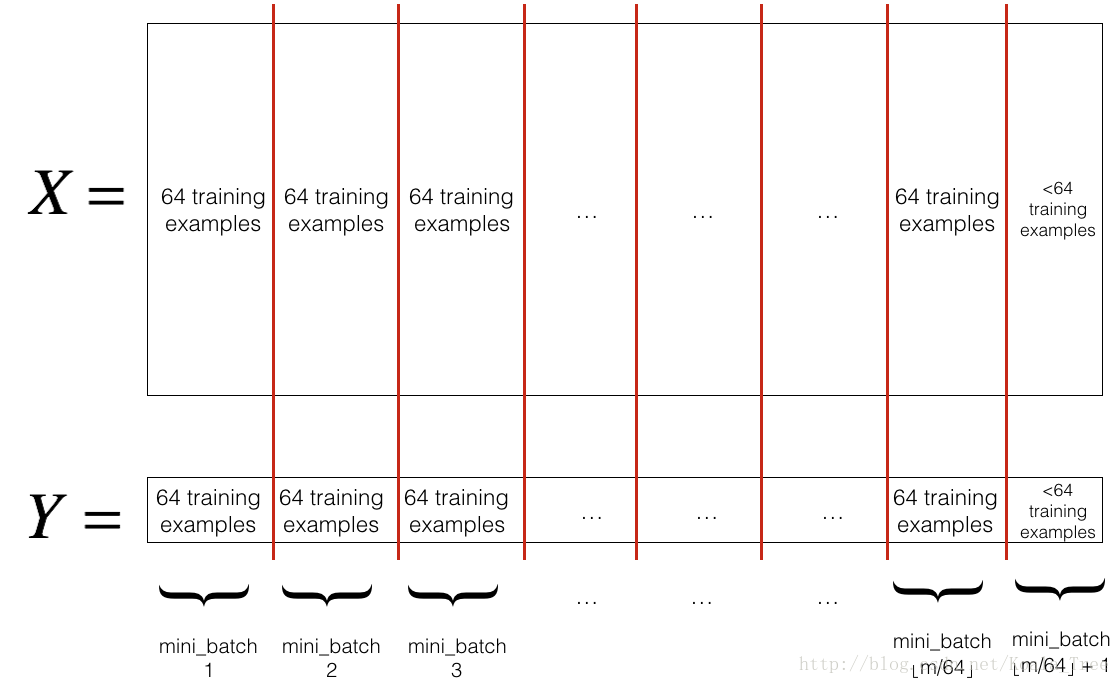

- Partition: Partition the shuffled (X, Y) into mini-batches of size

mini_batch_size(here 64). Note that the number of training examples is not always divisible bymini_batch_size. The last mini batch might be smaller, but you don’t need to worry about this. When the final mini-batch is smaller than the fullmini_batch_size, it will look like this:

Exercise: Implement random_mini_batches. We coded the shuffling part for you. To help you with the partitioning step, we give you the following code that selects the indexes for the $1^{st}$ and $2^{nd}$ mini-batches.
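A minimal sketch of the shuffle and partition steps described above, assuming examples are stored as columns. It is not the graded solution: the function name `random_mini_batches_sketch`, the seed handling and the toy data are assumptions made for illustration.

```python
import math
import numpy as np

def random_mini_batches_sketch(X, Y, mini_batch_size=64, seed=0):
    """Shuffle the columns of (X, Y) together, then cut them into mini-batches."""
    np.random.seed(seed)
    m = X.shape[1]

    # Step 1: shuffle. One permutation indexes both X and Y, so pairs stay aligned.
    permutation = np.random.permutation(m)
    shuffled_X = X[:, permutation]
    shuffled_Y = Y[:, permutation]

    # Step 2: partition. Slices that run past the end are truncated,
    # so the last mini-batch is simply smaller when m is not divisible.
    mini_batches = []
    for k in range(math.ceil(m / mini_batch_size)):
        start = k * mini_batch_size
        end = start + mini_batch_size
        mini_batches.append((shuffled_X[:, start:end], shuffled_Y[:, start:end]))
    return mini_batches

# Toy data: 148 examples with 2 features; each label is derived from its own
# column so the alignment between X and Y can be checked after shuffling.
X = np.arange(2 * 148).reshape(2, 148)
Y = X[0:1, :] % 2
batches = random_mini_batches_sketch(X, Y, mini_batch_size=64)
print([batch_X.shape[1] for batch_X, _ in batches])  # [64, 64, 20]
```

With 148 examples and a mini-batch size of 64, the first two mini-batches are full and the last one holds the remaining 20 examples.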
