EfficientNetv1主要是用NAS(Neural Architecture Search)技术来搜索网络的图像输入分辨率r,网络的深度depth以及channel的宽度width三个参数的合理化配置。在之前的一些论文中,基本都是通过改变上述3个参数中的一个来提升网络的性能,而EfficientNetv1就是同时来探索这三个参数的影响。

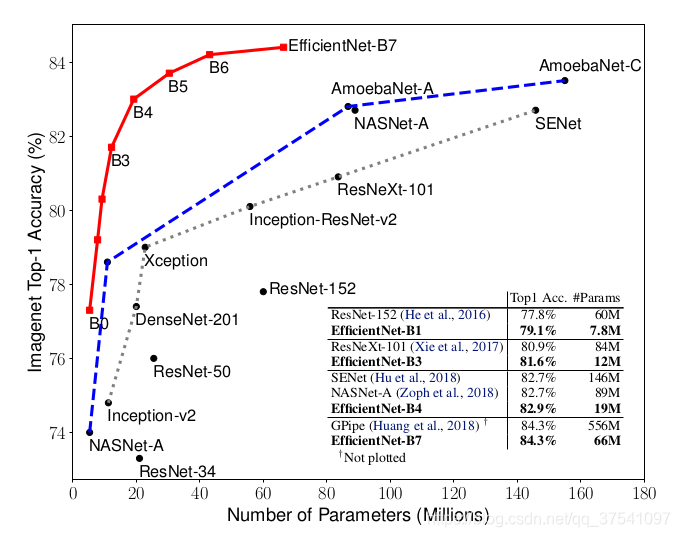

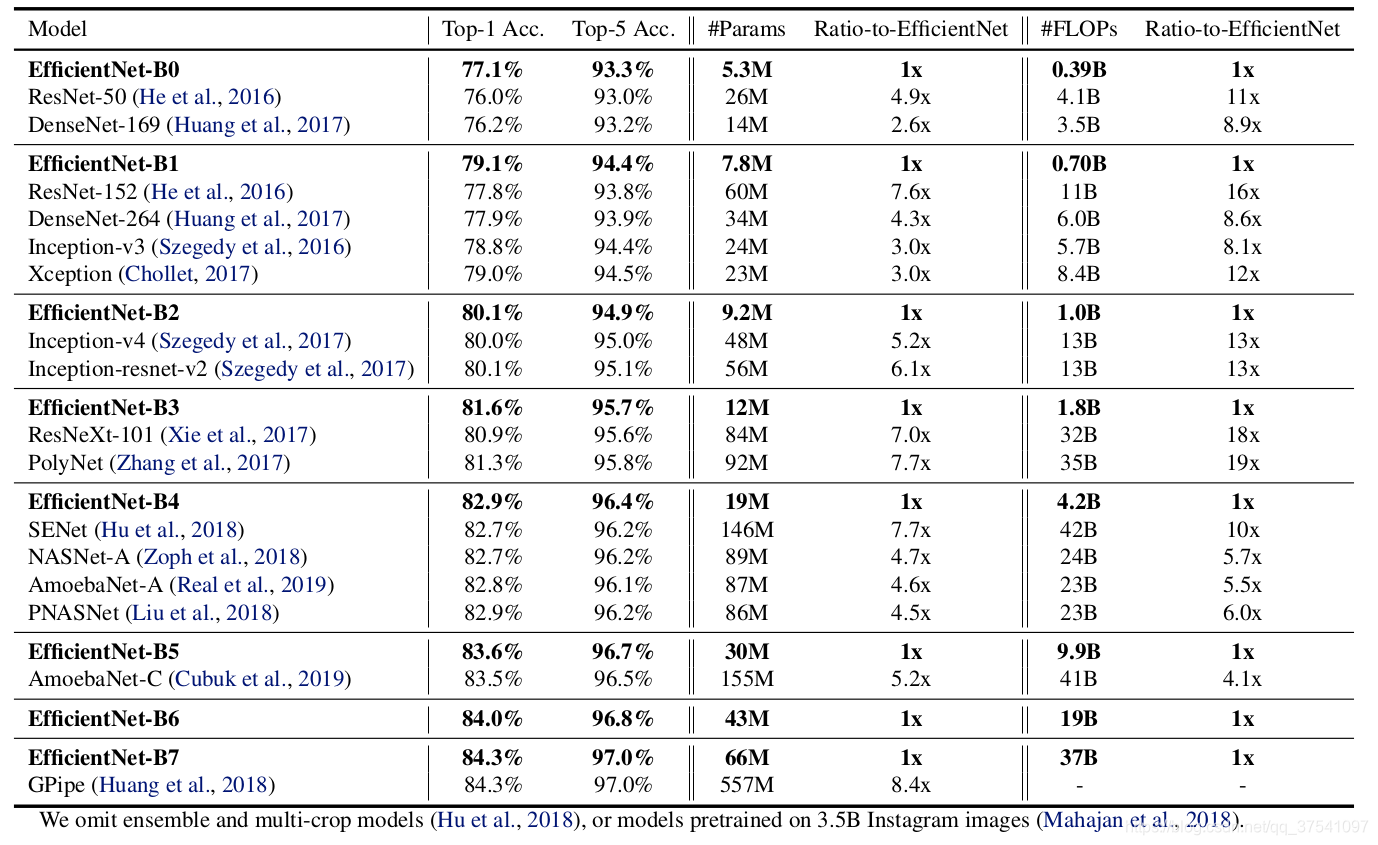

可以看到,EfficientNetv1网络系列的精度和参数量都比之前的模型好,精度高,参数量小,但是参数量小并不意味着模型训练速度和推理速度更快,EfficientNetv1由于输入图片的分辨率大小而非常吃显存。

1.EfficientNetv1的网络结构的调整

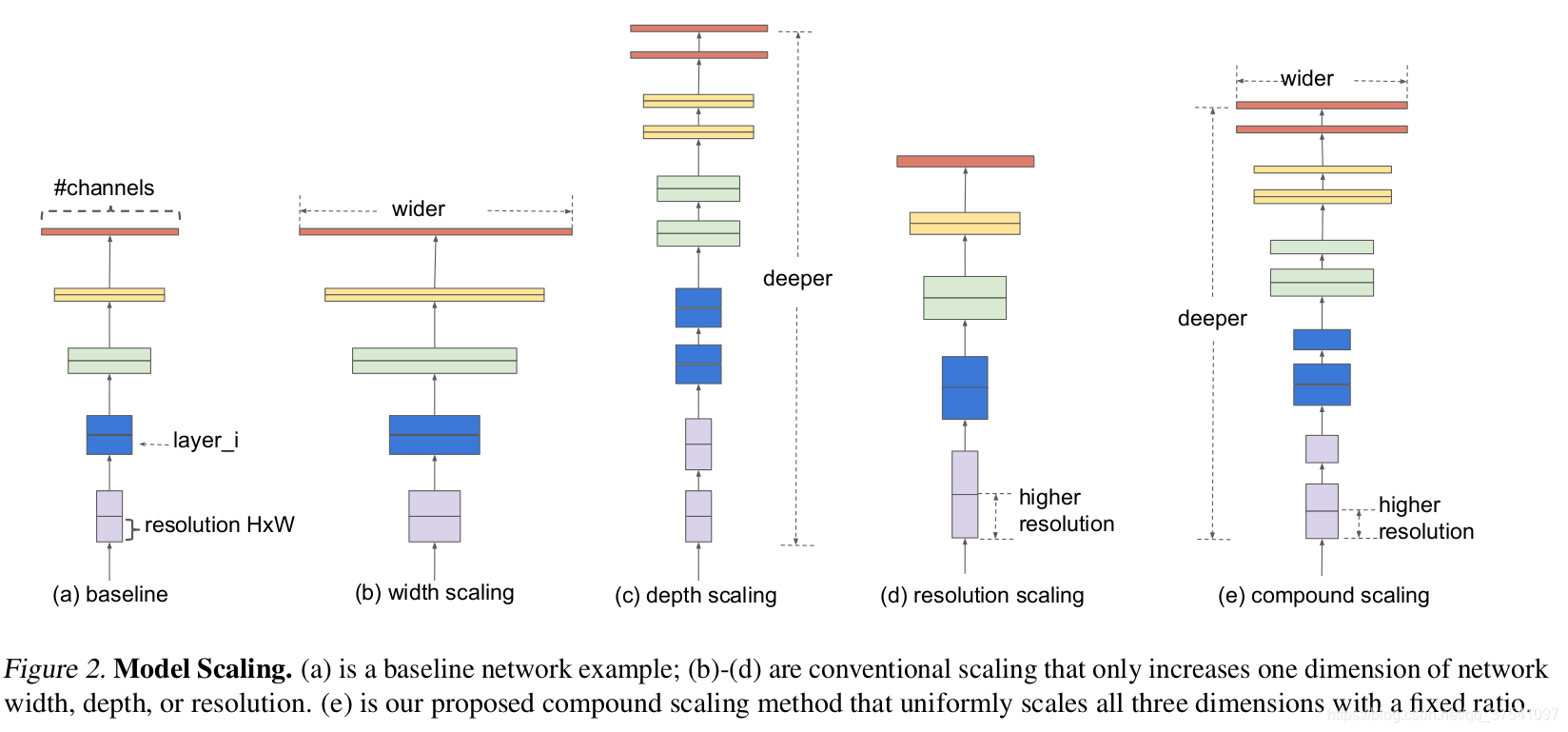

在之前的一些论文中,有的会通过增加网络的width即增加卷积核的个数(增加特征矩阵的channels)来提升网络的性能如图(b)所示,有的会通过增加网络的深度即使用更多的层结构来提升网络的性能如图(c)所示,有的会通过增加输入网络的分辨率来提升网络的性能如图(d)所示。而在本篇论文中会同时增加网络的width、网络的深度以及输入网络的分辨率来提升网络的性能如图(e)所示:

1)根据以往的经验,增加网络的深度depth能够得到更加丰富、复杂的特征并且能够很好的应用到其它任务中。但网络的深度过深会面临梯度消失,训练困难的问题。

2)增加网络的width能够获得更高细粒度的特征并且也更容易训练,但对于width很大而深度较浅的网络往往很难学习到更深层次的特征。

3)增加输入网络的图像分辨率能够潜在得获得更高细粒度的特征模板,但对于非常高的输入分辨率,准确率的增益也会减小。并且大分辨率图像会增加计算量。

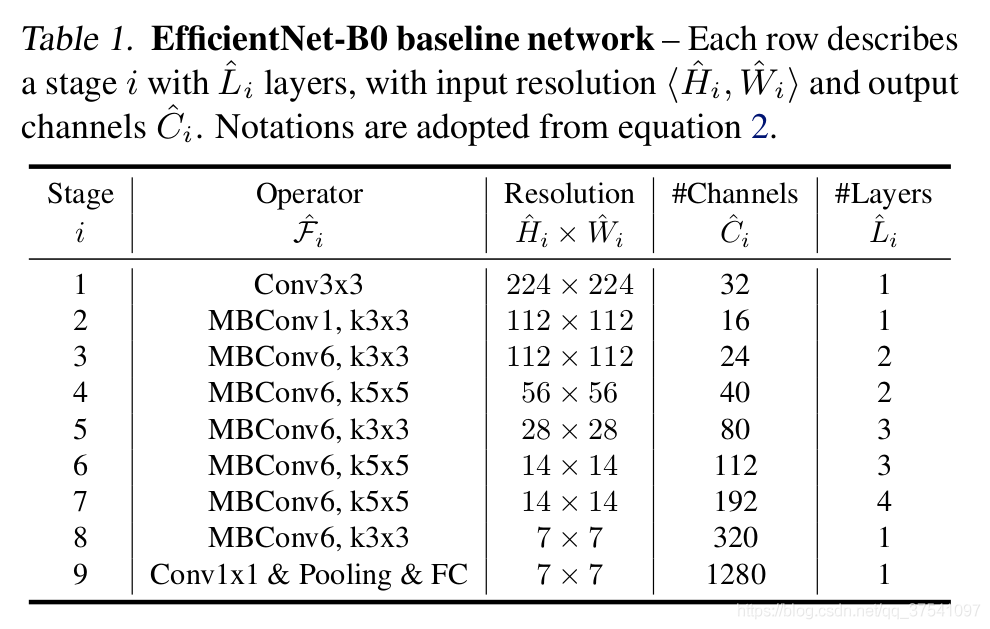

2.EfficientNetv1的网络结构

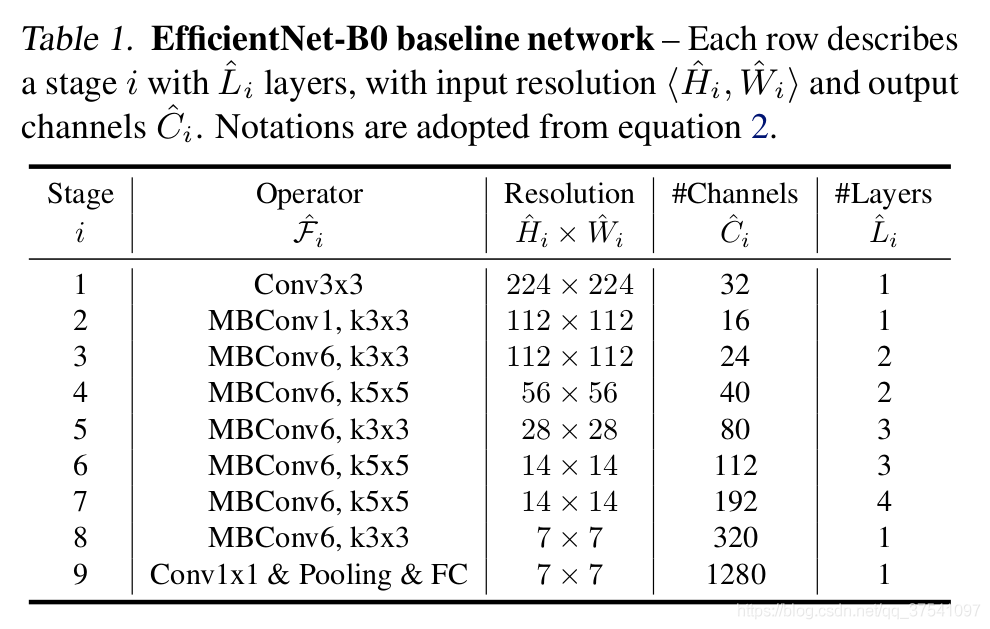

下表为EfficientNet-B0的网络框架(B1-B7就是在B0的基础上修改Resolution,Channels以及Layers),可以看出网络总共分成了9个Stage,第一个Stage就是一个卷积核大小为3x3步距为2的普通卷积层(包含BN和激活函数Swish),Stage2~Stage8都是在重复堆叠MBConv结构(最后一列的Layers表示该Stage重复MBConv结构多少次),而Stage9由一个普通的1x1的卷积层(包含BN和激活函数Swish)一个平均池化层和一个全连接层组成。表格中每个MBConv后会跟一个数字1或6,这里的1或6就是倍率因子n即MBConv中第一个1x1的卷积层会将输入特征矩阵的channels扩充为n倍,其中k3x3或k5x5表示MBConv中Depthwise Conv所采用的卷积核大小。Channels表示通过该Stage后输出特征矩阵的Channels。Layers表示每个stage中Operator block的数量。

2.1MBConv

MBConv其实就是MobileNetV3网络中的InvertedResidualBlock,但也有些许区别。一个是采用的激活函数不一样(EfficientNet的MBConv中使用的都是Swish激活函数),在每个MBConv中都加入了SE(Squeeze-and-Excitation)模块。另一个是当MBConv具有shortcut时,都会有Dropout结构。

如图所示,MBConv结构主要由一个1x1的普通卷积(升维作用,包含BN和Swish),一个kxk的Depthwise Conv卷积(包含BN和Swish)k的具体值可看EfficientNet-B0的网络框架主要有3x3和5x5两种情况,一个SE模块,一个1x1的普通卷积(降维作用,包含BN),一个Droupout层构成。搭建过程中还需要注意几点:

1)第一个升维的1x1卷积层,它的卷积核个数是输入特征矩阵channel的n倍,n∈{1,6}。当n = 1 n=1n=1时,不要第一个升维的1x1卷积层,即Stage2中的MBConv结构都没有第一个升维的1x1卷积层(这和MobileNetV3网络类似)。

2)关于shortcut连接,仅当输入MBConv结构的特征矩阵与输出的特征矩阵shape相同时才存在(代码中可通过stride==1 and inputc_channels==output_channels条件来判断)。

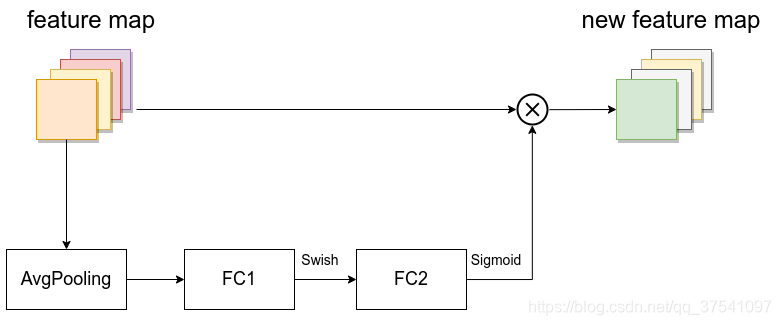

3)SE模块如下所示,由一个全局平均池化,两个全连接层组成。第一个全连接层的节点个数是输入该MBConv特征矩阵channels的1/4,且使用Swish激活函数。第二个全连接层的节点个数等于Depthwise Conv层输出的特征矩阵channels,且使用Sigmoid激活函数。

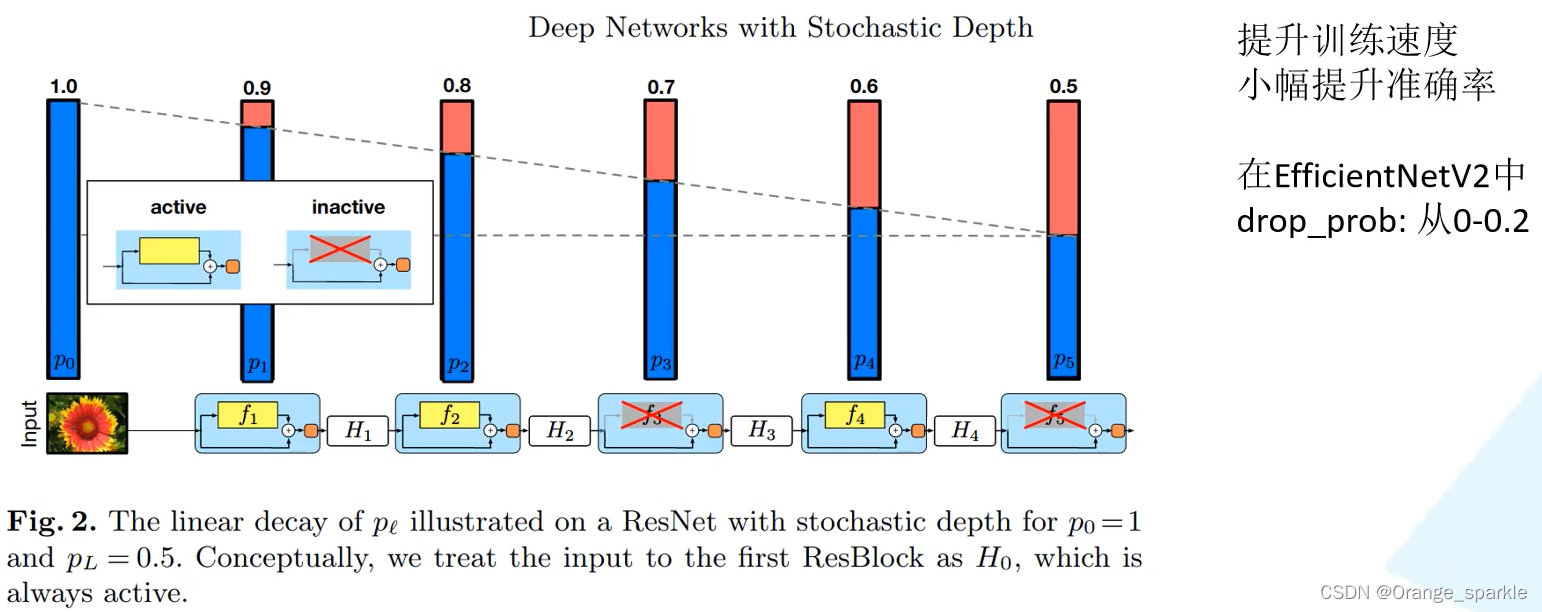

4)Dropout

需要注意的是,这里的Dropout层是Stochastic Depth,即会随机丢掉整个block的主分支(只剩捷径分支,相当于直接跳过了这个block)也可以理解为减少了网络的深度。具体可参考Deep Networks with Stochastic Depth这篇文章。

2.2 EfficientNet(B0-B7)

2.2.1EfficientNetB0

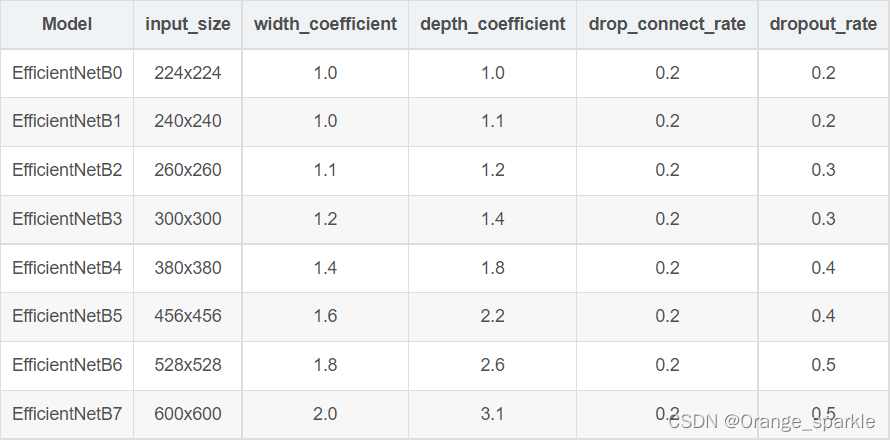

2.2.2 EfficientNet(B0-B7)

1)input_size代表训练网络时输入网络的图像大小

2)width_coefficient代表channel维度上的倍率因子,比如在 EfficientNetB0中Stage1的3x3卷积层所使用的卷积核个数是32,那么在B6中就是32 × 1.8 = 57.6 接着取整到离它最近的8的整数倍即56,其它Stage同理。

3)depth_coefficient代表depth维度上的倍率因子(仅针对Stage2到Stage8),比如在EfficientNetB0中Stage7的L=4,那么在B6中就是4 × 2.6 = 10.4接着向上取整即11.

4)drop_connect_rate是在MBConv结构中dropout层使用的drop_rate(注意,在源码实现中只有使用shortcut的时候才有Dropout层)。

5)dropout_rate是最后一个全连接层前的dropout层(在stage9的Pooling与FC之间)的dropout_rate。

3.EfficientNetv1网络性能

4.EfficientNetv1模型代码

import math

import copy

from functools import partial

from collections import OrderedDict

from typing import Optional, Callable

import torch

import torch.nn as nn

from torch import Tensor

from torch.nn import functional as F

def _make_divisible(ch, divisor=8, min_ch=None):

"""

This function is taken from the original tf repo.

It ensures that all layers have a channel number that is divisible by 8

It can be seen here:

https://github.com/tensorflow/models/blob/master/research/slim/nets/mobilenet/mobilenet.py

"""

if min_ch is None:

min_ch = divisor

new_ch = max(min_ch, int(ch + divisor / 2) // divisor * divisor)

# Make sure that round down does not go down by more than 10%.

if new_ch < 0.9 * ch:

new_ch += divisor

return new_ch

def drop_path(x, drop_prob: float = 0., training: bool = False):

"""

Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

"Deep Networks with Stochastic Depth", https://arxiv.org/pdf/1603.09382.pdf

This function is taken from the rwightman.

It can be seen here:

https://github.com/rwightman/pytorch-image-models/blob/master/timm/models/layers/drop.py#L140

"""

if drop_prob == 0. or not training:

return x

keep_prob = 1 - drop_prob

shape = (x.shape[0],) + (1,) * (x.ndim - 1) # work with diff dim tensors, not just 2D ConvNets

random_tensor = keep_prob + torch.rand(shape, dtype=x.dtype, device=x.device)

random_tensor.floor_() # binarize

output = x.div(keep_prob) * random_tensor

return output

class DropPath(nn.Module):

"""

Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

"Deep Networks with Stochastic Depth", https://arxiv.org/pdf/1603.09382.pdf

"""

def __init__(self, drop_prob=None):

super(DropPath, self).__init__()

self.drop_prob = drop_prob

def forward(self, x):

return drop_path(x, self.drop_prob, self.training)

class ConvBNActivation(nn.Sequential):

def __init__(self,

in_planes: int,

out_planes: int,

kernel_size: int = 3,

stride: int = 1,

groups: int = 1,

norm_layer: Optional[Callable[..., nn.Module]] = None,

activation_layer: Optional[Callable[..., nn.Module]] = None):

padding = (kernel_size - 1) // 2

if norm_layer is None:

norm_layer = nn.BatchNorm2d

if activation_layer is None:

activation_layer = nn.SiLU # alias Swish (torch>=1.7)

super(ConvBNActivation, self).__init__(nn.Conv2d(in_channels=in_planes,

out_channels=out_planes,

kernel_size=kernel_size,

stride=stride,

padding=padding,

groups=groups,

bias=False),

norm_layer(out_planes),

activation_layer())

class SqueezeExcitation(nn.Module):

def __init__(self,

input_c: int, # block input channel

expand_c: int, # block expand channel

squeeze_factor: int = 4):

super(SqueezeExcitation, self).__init__()

squeeze_c = input_c // squeeze_factor

self.fc1 = nn.Conv2d(expand_c, squeeze_c, 1)

self.ac1 = nn.SiLU() # alias Swish

self.fc2 = nn.Conv2d(squeeze_c, expand_c, 1)

self.ac2 = nn.Sigmoid()

def forward(self, x: Tensor) -> Tensor:

scale = F.adaptive_avg_pool2d(x, output_size=(1, 1))

scale = self.fc1(scale)

scale = self.ac1(scale)

scale = self.fc2(scale)

scale = self.ac2(scale)

return scale * x

class InvertedResidualConfig:

# kernel_size, in_channel, out_channel, exp_ratio, strides, use_SE, drop_connect_rate

def __init__(self,

kernel: int, # 3 or 5

input_c: int,

out_c: int,

expanded_ratio: int, # 1 or 6

stride: int, # 1 or 2

use_se: bool, # True

drop_rate: float,

index: str, # 1a, 2a, 2b, ...

width_coefficient: float):

self.input_c = self.adjust_channels(input_c, width_coefficient)

self.kernel = kernel

self.expanded_c = self.input_c * expanded_ratio

self.out_c = self.adjust_channels(out_c, width_coefficient)

self.use_se = use_se

self.stride = stride

self.drop_rate = drop_rate

self.index = index

@staticmethod

def adjust_channels(channels: int, width_coefficient: float):

return _make_divisible(channels * width_coefficient, 8)

class InvertedResidual(nn.Module):

def __init__(self,

cnf: InvertedResidualConfig,

norm_layer: Callable[..., nn.Module]):

super(InvertedResidual, self).__init__()

if cnf.stride not in [1, 2]:

raise ValueError("illegal stride value.")

self.use_res_connect = (cnf.stride == 1 and cnf.input_c == cnf.out_c)

layers = OrderedDict()

activation_layer = nn.SiLU # alias Swish

# expand

if cnf.expanded_c != cnf.input_c:

layers.update({"expand_conv": ConvBNActivation(cnf.input_c,

cnf.expanded_c,

kernel_size=1,

norm_layer=norm_layer,

activation_layer=activation_layer)})

# depthwise

layers.update({"dwconv": ConvBNActivation(cnf.expanded_c,

cnf.expanded_c,

kernel_size=cnf.kernel,

stride=cnf.stride,

groups=cnf.expanded_c,

norm_layer=norm_layer,

activation_layer=activation_layer)})

if cnf.use_se:

layers.update({"se": SqueezeExcitation(cnf.input_c,

cnf.expanded_c)})

# project

layers.update({"project_conv": ConvBNActivation(cnf.expanded_c,

cnf.out_c,

kernel_size=1,

norm_layer=norm_layer,

activation_layer=nn.Identity)})

self.block = nn.Sequential(layers)

self.out_channels = cnf.out_c

self.is_strided = cnf.stride > 1

# 只有在使用shortcut连接时才使用dropout层

if self.use_res_connect and cnf.drop_rate > 0:

self.dropout = DropPath(cnf.drop_rate)

else:

self.dropout = nn.Identity()

def forward(self, x: Tensor) -> Tensor:

result = self.block(x)

result = self.dropout(result)

if self.use_res_connect:

result += x

return result

class EfficientNet(nn.Module):

def __init__(self,

width_coefficient: float,

depth_coefficient: float,

num_classes: int = 1000,

dropout_rate: float = 0.2,

drop_connect_rate: float = 0.2,

block: Optional[Callable[..., nn.Module]] = None,

norm_layer: Optional[Callable[..., nn.Module]] = None

):

super(EfficientNet, self).__init__()

# kernel_size, in_channel, out_channel, exp_ratio, strides, use_SE, drop_connect_rate, repeats

default_cnf = [[3, 32, 16, 1, 1, True, drop_connect_rate, 1],

[3, 16, 24, 6, 2, True, drop_connect_rate, 2],

[5, 24, 40, 6, 2, True, drop_connect_rate, 2],

[3, 40, 80, 6, 2, True, drop_connect_rate, 3],

[5, 80, 112, 6, 1, True, drop_connect_rate, 3],

[5, 112, 192, 6, 2, True, drop_connect_rate, 4],

[3, 192, 320, 6, 1, True, drop_connect_rate, 1]]

def round_repeats(repeats):

"""Round number of repeats based on depth multiplier."""

return int(math.ceil(depth_coefficient * repeats))

if block is None:

block = InvertedResidual

if norm_layer is None:

norm_layer = partial(nn.BatchNorm2d, eps=1e-3, momentum=0.1)

adjust_channels = partial(InvertedResidualConfig.adjust_channels,

width_coefficient=width_coefficient)

# build inverted_residual_setting

bneck_conf = partial(InvertedResidualConfig,

width_coefficient=width_coefficient)

b = 0

num_blocks = float(sum(round_repeats(i[-1]) for i in default_cnf))

inverted_residual_setting = []

for stage, args in enumerate(default_cnf):

cnf = copy.copy(args)

for i in range(round_repeats(cnf.pop(-1))):

if i > 0:

# strides equal 1 except first cnf

cnf[-3] = 1 # strides

cnf[1] = cnf[2] # input_channel equal output_channel

cnf[-1] = args[-2] * b / num_blocks # update dropout ratio

index = str(stage + 1) + chr(i + 97) # 1a, 2a, 2b, ...

inverted_residual_setting.append(bneck_conf(*cnf, index))

b += 1

# create layers

layers = OrderedDict()

# first conv

layers.update({"stem_conv": ConvBNActivation(in_planes=3,

out_planes=adjust_channels(32),

kernel_size=3,

stride=2,

norm_layer=norm_layer)})

# building inverted residual blocks

for cnf in inverted_residual_setting:

layers.update({cnf.index: block(cnf, norm_layer)})

# build top

last_conv_input_c = inverted_residual_setting[-1].out_c

last_conv_output_c = adjust_channels(1280)

layers.update({"top": ConvBNActivation(in_planes=last_conv_input_c,

out_planes=last_conv_output_c,

kernel_size=1,

norm_layer=norm_layer)})

self.features = nn.Sequential(layers)

self.avgpool = nn.AdaptiveAvgPool2d(1)

classifier = []

if dropout_rate > 0:

classifier.append(nn.Dropout(p=dropout_rate, inplace=True))

classifier.append(nn.Linear(last_conv_output_c, num_classes))

self.classifier = nn.Sequential(*classifier)

# initial weights

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode="fan_out")

if m.bias is not None:

nn.init.zeros_(m.bias)

elif isinstance(m, nn.BatchNorm2d):

nn.init.ones_(m.weight)

nn.init.zeros_(m.bias)

elif isinstance(m, nn.Linear):

nn.init.normal_(m.weight, 0, 0.01)

nn.init.zeros_(m.bias)

def _forward_impl(self, x: Tensor) -> Tensor:

x = self.features(x)

x = self.avgpool(x)

x = torch.flatten(x, 1)

x = self.classifier(x)

return x

def forward(self, x: Tensor) -> Tensor:

return self._forward_impl(x)

def efficientnet_b0(num_classes=1000):

# input image size 224x224

return EfficientNet(width_coefficient=1.0,

depth_coefficient=1.0,

dropout_rate=0.2,

num_classes=num_classes)

def efficientnet_b1(num_classes=1000):

# input image size 240x240

return EfficientNet(width_coefficient=1.0,

depth_coefficient=1.1,

dropout_rate=0.2,

num_classes=num_classes)

def efficientnet_b2(num_classes=1000):

# input image size 260x260

return EfficientNet(width_coefficient=1.1,

depth_coefficient=1.2,

dropout_rate=0.3,

num_classes=num_classes)

def efficientnet_b3(num_classes=1000):

# input image size 300x300

return EfficientNet(width_coefficient=1.2,

depth_coefficient=1.4,

dropout_rate=0.3,

num_classes=num_classes)

def efficientnet_b4(num_classes=1000):

# input image size 380x380

return EfficientNet(width_coefficient=1.4,

depth_coefficient=1.8,

dropout_rate=0.4,

num_classes=num_classes)

def efficientnet_b5(num_classes=1000):

# input image size 456x456

return EfficientNet(width_coefficient=1.6,

depth_coefficient=2.2,

dropout_rate=0.4,

num_classes=num_classes)

def efficientnet_b6(num_classes=1000):

# input image size 528x528

return EfficientNet(width_coefficient=1.8,

depth_coefficient=2.6,

dropout_rate=0.5,

num_classes=num_classes)

def efficientnet_b7(num_classes=1000):

# input image size 600x600

return EfficientNet(width_coefficient=2.0,

depth_coefficient=3.1,

dropout_rate=0.5,

num_classes=num_classes)

1254

1254

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?