Surname Classification with MLP and CNN


1. About MLP and CNN

1.1 What is an MLP?

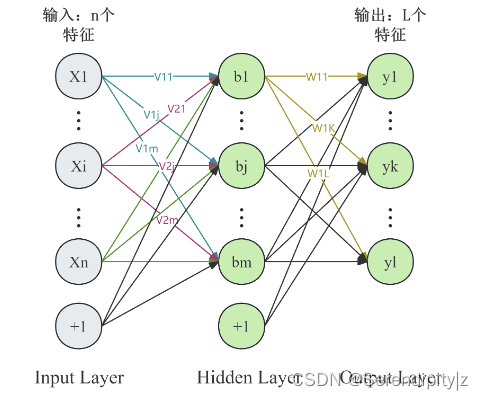

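An MLP (Multilayer Perceptron) is a feedforward artificial neural network (ANN) and one of the most basic and important neural network models. Because every hidden layer applies a nonlinear activation, an MLP can model nonlinear relationships, and adding hidden layers (depth) increases its capacity to learn and represent complex features.

The defining feature of an MLP is its use of fully connected layers. In a fully connected layer, every neuron is connected to every neuron in the previous layer, so the layer's parameters form a dense weight matrix. This global connectivity lets each output depend on all of the input features.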
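
To make the dense weight matrix concrete, here is a minimal pure-Python sketch of a single fully connected layer. The function name `dense_forward` and the numbers are illustrative assumptions, not part of the article's code, which uses `nn.Linear` for the same computation.

```python
def dense_forward(x, weights, biases):
    """One fully connected layer: y_j = sum_i W[j][i] * x[i] + b[j]."""
    return [sum(w_i * x_i for w_i, x_i in zip(row, x)) + b
            for row, b in zip(weights, biases)]


x = [1.0, 2.0, 3.0]                 # three input features
weights = [[0.5, -1.0, 0.0],        # one row of weights per output neuron
           [1.0, 1.0, 1.0]]
biases = [0.0, -1.0]

y = dense_forward(x, weights, biases)
print(y)  # [-1.5, 5.0]
```

Every output neuron reads all three inputs, which is what "fully connected" means here.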

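1.2 MLP architecture

1.2.1 Main components

An MLP consists of an input layer, hidden layers, activation functions, an output layer, and weights and biases. Training it additionally requires a loss function and an optimization algorithm.

Input layer: receives the raw data, with one neuron per input feature. It performs no computation and simply passes the data to the next layer.

Hidden layers: the layers between the input and output layers; there can be one or more. Each hidden layer contains a number of neurons that transform their input with a weighted sum followed by an activation function, which lets the network learn complex feature representations. Hidden layers are what allow an MLP to solve nonlinear problems.

Activation function: applied to each neuron's output to introduce nonlinearity, so that the network can learn and represent complex decision boundaries. Common choices are sigmoid, tanh, and ReLU.

Output layer: the last layer of the network, which produces the final prediction or classification from the hidden-layer output. The number of output neurons depends on the task; for classification it usually equals the number of classes.

Weights and biases: the weights on the connections between layers determine how information is propagated and transformed through the network. The bias terms add flexibility by letting a neuron produce a nonzero output even when all of its inputs are zero.

Loss function: measures the difference between the model's predictions and the true labels. Mean squared error is common for regression tasks and cross-entropy loss for classification tasks.

Optimization algorithm: gradient descent and its variants update the weights and biases during training to minimize the loss function and thereby improve predictive performance.

An MLP learns its weights and biases with the backpropagation algorithm, which computes the gradient of the loss with respect to every parameter so that the optimizer can reduce the loss. The architecture (number of hidden layers, neurons per layer, choice of activation function, and so on) can be adjusted to suit the task at hand.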
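
The loop of "compute the loss, compute its gradient, update the parameter" can be sketched on the smallest possible model. The example below fits a single weight `w` in `y = w * x` by gradient descent on the mean squared error. It is a simplified illustration with made-up data and a hypothetical function name `fit_slope`; backpropagation performs the same kind of gradient computation for every layer of an MLP by applying the chain rule.

```python
def fit_slope(xs, ys, learning_rate=0.05, steps=50):
    """Fit y = w * x by gradient descent on the mean squared error."""
    w = 0.0
    losses = []
    for _ in range(steps):
        errors = [w * x - y for x, y in zip(xs, ys)]
        losses.append(sum(e * e for e in errors) / len(xs))
        # Gradient of the loss with respect to w: mean(2 * error * x)
        gradient = sum(2 * e * x for e, x in zip(errors, xs)) / len(xs)
        # Step against the gradient to reduce the loss
        w -= learning_rate * gradient
    return w, losses


# Toy data generated by y = 2 * x
w, losses = fit_slope([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
print(round(w, 4))             # close to 2.0
print(losses[0] > losses[-1])  # True: the loss decreased during training
```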

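1.2.2 Key hyperparameters

1) Number of hidden layers

In theory, an MLP with even a single, sufficiently wide hidden layer can approximate any continuous function on a bounded domain. Adding hidden layers can let the model represent complex nonlinear functions more compactly, so a deeper network may adapt better to nonlinear problems.

In practice, however, more hidden layers do not always give better results. Extra layers increase model complexity and therefore training time, computational cost, and the risk of overfitting. They also enlarge the hyperparameter search space.

2) Number of neurons in a hidden layer

In an MLP, each neuron in a hidden layer receives the output of the previous layer, computes a weighted sum, and passes it through an activation function to produce the hidden layer's output. That output is passed on to the next layer, and so on until the output layer is reached.

The output size of a hidden layer is therefore an important hyperparameter that directly affects the model's representational and learning capacity. It is tuned according to the complexity of the task and the characteristics of the dataset. A larger hidden size increases representational capacity but also the risk of overfitting. A smaller hidden size reduces the number of parameters and the model's complexity, but may limit its expressiveness. Choosing a suitable hidden size is a trade-off that usually has to be settled empirically.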
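
As a rough illustration of how the hidden size drives model size, the sketch below counts the parameters of a two-layer MLP like the one defined later in section 2.1.4. The value `hidden_dim=300` is taken from the article's configuration; the input size of 80 and output size of 18 are assumed placeholder values, since the real sizes depend on the vocabularies built from the dataset.

```python
def mlp_parameter_count(input_dim, hidden_dim, output_dim):
    """Count weights and biases of Linear(input, hidden) + Linear(hidden, output)."""
    first_layer = input_dim * hidden_dim + hidden_dim
    second_layer = hidden_dim * output_dim + output_dim
    return first_layer + second_layer


# input_dim=80 and output_dim=18 are assumptions for illustration only
small = mlp_parameter_count(input_dim=80, hidden_dim=100, output_dim=18)
large = mlp_parameter_count(input_dim=80, hidden_dim=300, output_dim=18)
print(small)  # 9918
print(large)  # 29718
```

Tripling the hidden size roughly triples the number of trainable parameters, which is why a larger hidden layer both increases capacity and makes overfitting more likely.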

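1.3 What is a CNN?

A CNN (Convolutional Neural Network) is a deep learning model loosely inspired by how the brain processes visual information. It performs well in areas such as image recognition because it learns features directly from the data instead of relying on hand-crafted ones. A CNN is built by stacking convolutional and pooling layers, which extract features and progressively build more complex and abstract representations.

Although CNNs originated in image processing, their local feature extraction and hierarchical feature learning also apply to text. Once text has been converted into vectors (embeddings or, as in the code in this article, one-hot character vectors), convolutions can capture patterns and structure in it, which makes a CNN suitable for classifying surnames.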

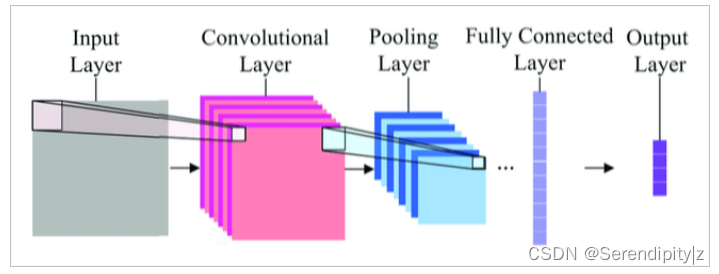

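1.4 CNN architecture

A CNN consists of an input layer, convolutional layers, pooling layers, fully connected layers, and an output layer. The convolutional, pooling, and fully connected layers are the most important.

Convolutional layer

Convolution kernels: multiple convolution kernels (filters), possibly of different sizes, are applied to the input sequence of vectors. Each kernel slides along the sequence and performs a convolution to capture local features, such as specific character combinations or patterns.

Feature maps and channels: each kernel produces one feature map, which indicates where a particular feature occurs and how strongly. Multiple kernels yield multiple channels that together extract a variety of features.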

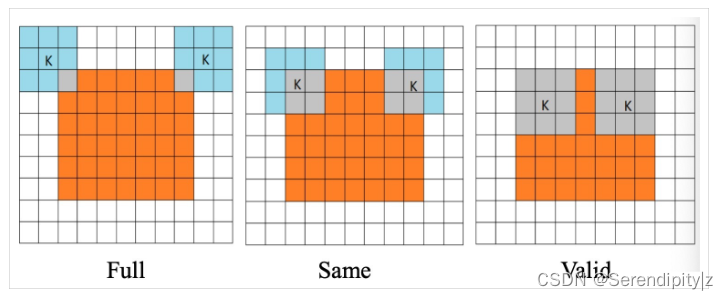

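There are three convolution modes: Full, Same, and Valid. They differ in how much zero padding is added to the input and therefore in the length of the output.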

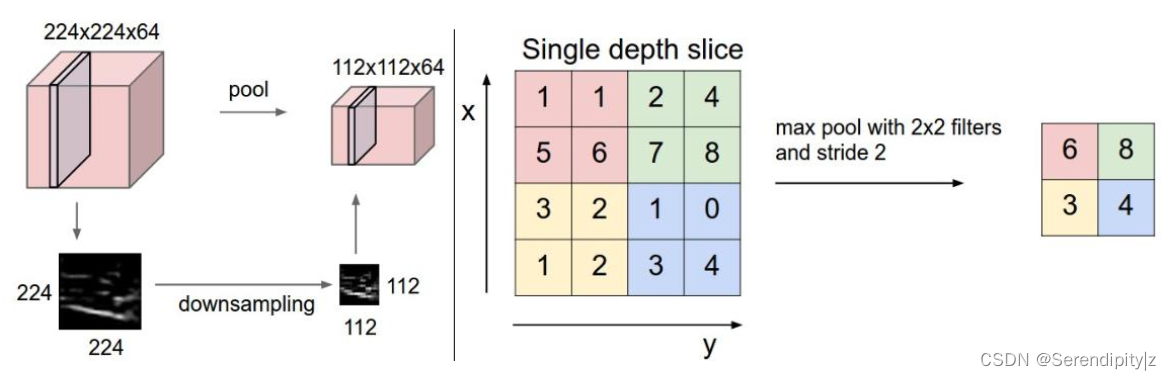
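
The three modes can be compared with a small pure-Python sketch. The helper `conv1d` below is written for illustration only and is not part of the article's code. Like deep learning libraries, it slides the kernel without flipping it (strictly speaking a cross-correlation), and it assumes stride 1.

```python
def conv1d(signal, kernel, mode="valid"):
    """Slide `kernel` over `signal` with the zero padding implied by `mode`."""
    k = len(kernel)
    if mode == "full":        # pad so that every partial overlap is included
        pad_left = pad_right = k - 1
    elif mode == "same":      # pad so that the output is as long as the input
        pad_left = (k - 1) // 2
        pad_right = k - 1 - pad_left
    elif mode == "valid":     # no padding: only complete overlaps
        pad_left = pad_right = 0
    else:
        raise ValueError("unknown mode: %s" % mode)
    padded = [0] * pad_left + list(signal) + [0] * pad_right
    return [sum(padded[i + j] * kernel[j] for j in range(k))
            for i in range(len(padded) - k + 1)]


signal = [1, 2, 3, 4, 5]
kernel = [1, 0, -1]

for mode in ("full", "same", "valid"):
    print(mode, len(conv1d(signal, kernel, mode)))
# Output lengths for input length n=5 and kernel size k=3:
#   full  -> n + k - 1 = 7
#   same  -> n         = 5
#   valid -> n - k + 1 = 3
```

The `nn.Conv1d` layers used later in this article apply no padding by default, which corresponds to the Valid mode.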

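Pooling layer

Pooling reduces the size of the feature maps while keeping the most salient feature information. This reduces computation and helps to limit overfitting.

Fully connected layer

After convolution and pooling, the features are flattened into a one-dimensional vector and passed to one or more fully connected layers, which combine the features and make the final classification decision.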
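
As a small illustration, the sketch below applies max pooling, one common pooling operation, to a one-dimensional feature map. The function name `max_pool1d` and the numbers are assumptions made for this example.

```python
def max_pool1d(values, window=2, stride=2):
    """Keep the largest value in each window of the feature map."""
    return [max(values[i:i + window])
            for i in range(0, len(values) - window + 1, stride)]


feature_map = [1, 3, 2, 5, 4, 0]
pooled = max_pool1d(feature_map)
print(pooled)  # [3, 5, 4]
```

The output is half as long as the input, yet the strongest response in each window is preserved.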

2. Code

2.1 MLP

2.1.1 Processing text data and building the vocabulary

    from argparse import Namespace
    from collections import Counter
    import json
    import os
    import string

    import numpy as np
    import pandas as pd
    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    import torch.optim as optim
    from torch.utils.data import Dataset, DataLoader
    from tqdm import tqdm_notebook


    class Vocabulary(object):
        """Class to process text and extract vocabulary for mapping"""

        def __init__(self, token_to_idx=None, add_unk=True, unk_token="<UNK>"):
            """
            Args:
                token_to_idx (dict): a pre-existing map of tokens to indices
                add_unk (bool): a flag that indicates whether to add the UNK token
                unk_token (str): the UNK token to add into the Vocabulary
            """
            if token_to_idx is None:
                token_to_idx = {}
            self._token_to_idx = token_to_idx
            self._idx_to_token = {idx: token
                                  for token, idx in self._token_to_idx.items()}

            self._add_unk = add_unk
            self._unk_token = unk_token

            self.unk_index = -1
            if add_unk:
                self.unk_index = self.add_token(unk_token)

        def to_serializable(self):
            """ returns a dictionary that can be serialized """
            return {'token_to_idx': self._token_to_idx,
                    'add_unk': self._add_unk,
                    'unk_token': self._unk_token}

        @classmethod
        def from_serializable(cls, contents):
            """ instantiates the Vocabulary from a serialized dictionary """
            return cls(**contents)

        def add_token(self, token):
            """Update mapping dicts based on the token.

            Args:
                token (str): the item to add into the Vocabulary
            Returns:
                index (int): the integer corresponding to the token
            """
            try:
                index = self._token_to_idx[token]
            except KeyError:
                index = len(self._token_to_idx)
                self._token_to_idx[token] = index
                self._idx_to_token[index] = token
            return index

        def add_many(self, tokens):
            """Add a list of tokens into the Vocabulary

            Args:
                tokens (list): a list of string tokens
            Returns:
                indices (list): a list of indices corresponding to the tokens
            """
            return [self.add_token(token) for token in tokens]

        # Look up the index of a token
        def lookup_token(self, token):
            """Retrieve the index associated with the token
              or the UNK index if token isn't present.

            Args:
                token (str): the token to look up
            Returns:
                index (int): the index corresponding to the token
            Notes:
                `unk_index` needs to be >=0 (having been added into the Vocabulary)
                  for the UNK functionality
            """
            if self.unk_index >= 0:
                return self._token_to_idx.get(token, self.unk_index)
            else:
                return self._token_to_idx[token]

        # Look up the token stored at an index
        def lookup_index(self, index):
            """Return the token associated with the index

            Args:
                index (int): the index to look up
            Returns:
                token (str): the token corresponding to the index
            Raises:
                KeyError: if the index is not in the Vocabulary
            """
            if index not in self._idx_to_token:
                raise KeyError("the index (%d) is not in the Vocabulary" % index)
            return self._idx_to_token[index]

        def __str__(self):
            return "<Vocabulary(size=%d)>" % len(self)

        def __len__(self):
            return len(self._token_to_idx)

2.1.2 Converting text data into numerical vectors

    class SurnameVectorizer(object):
        """ The Vectorizer which coordinates the Vocabularies and puts them to use"""

        def __init__(self, surname_vocab, nationality_vocab):
            """
            Args:
                surname_vocab (Vocabulary): maps characters to integers
                nationality_vocab (Vocabulary): maps nationalities to integers
            """
            self.surname_vocab = surname_vocab
            self.nationality_vocab = nationality_vocab

        # Build a collapsed one-hot encoding of the surname
        def vectorize(self, surname):
            """
            Args:
                surname (str): the surname

            Returns:
                one_hot (np.ndarray): a collapsed one-hot encoding
            """
            vocab = self.surname_vocab
            one_hot = np.zeros(len(vocab), dtype=np.float32)
            for token in surname:
                one_hot[vocab.lookup_token(token)] = 1

            return one_hot

        # Build both vocabularies from the dataframe; "@" serves as the UNK token
        @classmethod
        def from_dataframe(cls, surname_df):
            """Instantiate the vectorizer from the dataset dataframe

            Args:
                surname_df (pandas.DataFrame): the surnames dataset
            Returns:
                an instance of the SurnameVectorizer
            """
            surname_vocab = Vocabulary(unk_token="@")
            nationality_vocab = Vocabulary(add_unk=False)

            for index, row in surname_df.iterrows():
                for letter in row.surname:
                    surname_vocab.add_token(letter)
                nationality_vocab.add_token(row.nationality)

            return cls(surname_vocab, nationality_vocab)

        @classmethod
        def from_serializable(cls, contents):
            surname_vocab = Vocabulary.from_serializable(contents['surname_vocab'])
            nationality_vocab = Vocabulary.from_serializable(contents['nationality_vocab'])
            return cls(surname_vocab=surname_vocab, nationality_vocab=nationality_vocab)

        def to_serializable(self):
            return {'surname_vocab': self.surname_vocab.to_serializable(),
                    'nationality_vocab': self.nationality_vocab.to_serializable()}

2.1.3 Processing the dataset and splitting it into training, validation, and test sets

    # Wrap the dataset and expose its training, validation, and test splits
    class SurnameDataset(Dataset):
        def __init__(self, surname_df, vectorizer):
            """
            Args:
                surname_df (pandas.DataFrame): the dataset
                vectorizer (SurnameVectorizer): vectorizer instantiated from dataset
            """
            self.surname_df = surname_df
            self._vectorizer = vectorizer

            self.train_df = self.surname_df[self.surname_df.split=='train']
            self.train_size = len(self.train_df)

            self.val_df = self.surname_df[self.surname_df.split=='val']
            self.validation_size = len(self.val_df)

            self.test_df = self.surname_df[self.surname_df.split=='test']
            self.test_size = len(self.test_df)

            self._lookup_dict = {'train': (self.train_df, self.train_size),
                                 'val': (self.val_df, self.validation_size),
                                 'test': (self.test_df, self.test_size)}

            self.set_split('train')

            # Class weights
            class_counts = surname_df.nationality.value_counts().to_dict()

            def sort_key(item):
                return self._vectorizer.nationality_vocab.lookup_token(item[0])

            sorted_counts = sorted(class_counts.items(), key=sort_key)
            frequencies = [count for _, count in sorted_counts]
            self.class_weights = 1.0 / torch.tensor(frequencies, dtype=torch.float32)

        @classmethod
        def load_dataset_and_make_vectorizer(cls, surname_csv):
            """Load dataset and make a new vectorizer from scratch

            Args:
                surname_csv (str): location of the dataset
            Returns:
                an instance of SurnameDataset
            """
            surname_df = pd.read_csv(surname_csv)
            train_surname_df = surname_df[surname_df.split=='train']
            return cls(surname_df, SurnameVectorizer.from_dataframe(train_surname_df))

        @classmethod
        def load_dataset_and_load_vectorizer(cls, surname_csv, vectorizer_filepath):
            """Load dataset and the corresponding vectorizer.
            Used in the case that the vectorizer has been cached for re-use

            Args:
                surname_csv (str): location of the dataset
                vectorizer_filepath (str): location of the saved vectorizer
            Returns:
                an instance of SurnameDataset
            """
            surname_df = pd.read_csv(surname_csv)
            vectorizer = cls.load_vectorizer_only(vectorizer_filepath)
            return cls(surname_df, vectorizer)

        @staticmethod
        def load_vectorizer_only(vectorizer_filepath):
            """a static method for loading the vectorizer from file

            Args:
                vectorizer_filepath (str): the location of the serialized vectorizer
            Returns:
                an instance of SurnameVectorizer
            """
            with open(vectorizer_filepath) as fp:
                return SurnameVectorizer.from_serializable(json.load(fp))

        def save_vectorizer(self, vectorizer_filepath):
            """saves the vectorizer to disk using json

            Args:
                vectorizer_filepath (str): the location to save the vectorizer
            """
            with open(vectorizer_filepath, "w") as fp:
                json.dump(self._vectorizer.to_serializable(), fp)

        def get_vectorizer(self):
            """ returns the vectorizer """
            return self._vectorizer

        def set_split(self, split="train"):
            """ selects the splits in the dataset using a column in the dataframe """
            self._target_split = split
            self._target_df, self._target_size = self._lookup_dict[split]

        def __len__(self):
            return self._target_size

        def __getitem__(self, index):
            """the primary entry point method for PyTorch datasets

            Args:
                index (int): the index to the data point
            Returns:
                a dictionary holding the data point's:
                    features (x_surname)
                    label (y_nationality)
            """
            row = self._target_df.iloc[index]

            surname_vector = \
                self._vectorizer.vectorize(row.surname)

            nationality_index = \
                self._vectorizer.nationality_vocab.lookup_token(row.nationality)

            return {'x_surname': surname_vector,
                    'y_nationality': nationality_index}

        def get_num_batches(self, batch_size):
            """Given a batch size, return the number of batches in the dataset

            Args:
                batch_size (int)
            Returns:
                number of batches in the dataset
            """
            return len(self) // batch_size


    def generate_batches(dataset, batch_size, shuffle=True,
                         drop_last=True, device="cpu"):
        """
        A generator function which wraps the PyTorch DataLoader. It will
          ensure each tensor is on the right device location.
        """
        dataloader = DataLoader(dataset=dataset, batch_size=batch_size,
                                shuffle=shuffle, drop_last=drop_last)

        for data_dict in dataloader:
            out_data_dict = {}
            for name, tensor in data_dict.items():
                out_data_dict[name] = data_dict[name].to(device)
            yield out_data_dict

2.1.4 Defining the MLP model

    # The model: two Linear layers with a nonlinearity in between
    class SurnameClassifier(nn.Module):
        """ A 2-layer Multilayer Perceptron for classifying surnames """

        def __init__(self, input_dim, hidden_dim, output_dim):
            """
            Args:
                input_dim (int): the size of the input vectors
                hidden_dim (int): the output size of the first Linear layer
                output_dim (int): the output size of the second Linear layer
            """
            super(SurnameClassifier, self).__init__()
            self.fc1 = nn.Linear(input_dim, hidden_dim)
            self.fc2 = nn.Linear(hidden_dim, output_dim)

        def forward(self, x_in, apply_softmax=False):
            """The forward pass of the classifier

            Args:
                x_in (torch.Tensor): an input data tensor.
                    x_in.shape should be (batch, input_dim)
                apply_softmax (bool): a flag for the softmax activation
                    should be false if used with the Cross Entropy losses
            Returns:
                the resulting tensor. tensor.shape should be (batch, output_dim)
            """
            # ReLU provides the nonlinearity
            intermediate_vector = F.relu(self.fc1(x_in))
            prediction_vector = self.fc2(intermediate_vector)

            if apply_softmax:
                prediction_vector = F.softmax(prediction_vector, dim=1)

            return prediction_vector

2.1.5 Managing the training state

    def make_train_state(args):
        return {'stop_early': False,
                'early_stopping_step': 0,
                'early_stopping_best_val': 1e8,
                'learning_rate': args.learning_rate,
                'epoch_index': 0,
                'train_loss': [],
                'train_acc': [],
                'val_loss': [],
                'val_acc': [],
                'test_loss': -1,
                'test_acc': -1,
                'model_filename': args.model_state_file}


    # Save the model whenever the validation loss improves
    def update_train_state(args, model, train_state):
        """Handle the training state updates.

        Components:
         - Early Stopping: Prevent overfitting.
         - Model Checkpoint: Model is saved if the model is better

        :param args: main arguments
        :param model: model to train
        :param train_state: a dictionary representing the training state values
        :returns:
            a new train_state
        """

        # Save one model at least
        if train_state['epoch_index'] == 0:
            torch.save(model.state_dict(), train_state['model_filename'])
            train_state['stop_early'] = False

        # Save model if performance improved
        elif train_state['epoch_index'] >= 1:
            loss_tm1, loss_t = train_state['val_loss'][-2:]

            # If loss worsened
            if loss_t >= train_state['early_stopping_best_val']:
                # Update step
                train_state['early_stopping_step'] += 1
            # Loss decreased
            else:
                # Save the best model and record its validation loss;
                # without this update the best value would stay at 1e8
                # and early stopping could never trigger
                if loss_t < train_state['early_stopping_best_val']:
                    torch.save(model.state_dict(), train_state['model_filename'])
                    train_state['early_stopping_best_val'] = loss_t

                # Reset early stopping step
                train_state['early_stopping_step'] = 0

            # Stop early ?
            train_state['stop_early'] = \
                train_state['early_stopping_step'] >= args.early_stopping_criteria

        return train_state


    # Compute the accuracy as a percentage
    def compute_accuracy(y_pred, y_target):
        _, y_pred_indices = y_pred.max(dim=1)
        n_correct = torch.eq(y_pred_indices, y_target).sum().item()
        return n_correct / len(y_pred_indices) * 100


    def set_seed_everywhere(seed, cuda):
        np.random.seed(seed)
        torch.manual_seed(seed)
        if cuda:
            torch.cuda.manual_seed_all(seed)


    def handle_dirs(dirpath):
        if not os.path.exists(dirpath):
            os.makedirs(dirpath)

2.1.6 Initializing parameters and configuring the environment

    args = Namespace(
        # Data and path information
        surname_csv="surnames_with_splits.csv",
        vectorizer_file="vectorizer.json",
        model_state_file="model.pth",
        save_dir="surname_mlp",
        # Model hyper parameters
        hidden_dim=300,
        # Training hyper parameters
        seed=1337,
        num_epochs=100,
        early_stopping_criteria=5,
        learning_rate=0.001,
        batch_size=64,
        # Runtime options
        cuda=False,
        reload_from_files=False,
        expand_filepaths_to_save_dir=True,
    )

    if args.expand_filepaths_to_save_dir:
        args.vectorizer_file = os.path.join(args.save_dir,
                                            args.vectorizer_file)

        args.model_state_file = os.path.join(args.save_dir,
                                             args.model_state_file)

        print("Expanded filepaths: ")
        print("\t{}".format(args.vectorizer_file))
        print("\t{}".format(args.model_state_file))

    # Check CUDA
    if not torch.cuda.is_available():
        args.cuda = False

    args.device = torch.device("cuda" if args.cuda else "cpu")

    print("Using CUDA: {}".format(args.cuda))

    # Set seed for reproducibility
    set_seed_everywhere(args.seed, args.cuda)

    # handle dirs
    handle_dirs(args.save_dir)

    if args.reload_from_files:
        # training from a checkpoint
        print("Reloading!")
        dataset = SurnameDataset.load_dataset_and_load_vectorizer(args.surname_csv,
                                                                  args.vectorizer_file)
    else:
        # create dataset and vectorizer
        print("Creating fresh!")
        dataset = SurnameDataset.load_dataset_and_make_vectorizer(args.surname_csv)
        dataset.save_vectorizer(args.vectorizer_file)

    vectorizer = dataset.get_vectorizer()
    classifier = SurnameClassifier(input_dim=len(vectorizer.surname_vocab),
                                   hidden_dim=args.hidden_dim,
                                   output_dim=len(vectorizer.nationality_vocab))

2.1.7 Training

    # Training routine
    classifier = classifier.to(args.device)
    dataset.class_weights = dataset.class_weights.to(args.device)

    loss_func = nn.CrossEntropyLoss(dataset.class_weights)
    optimizer = optim.Adam(classifier.parameters(), lr=args.learning_rate)
    scheduler = optim.lr_scheduler.ReduceLROnPlateau(optimizer=optimizer,
                                                     mode='min', factor=0.5,
                                                     patience=1)

    train_state = make_train_state(args)

    epoch_bar = tqdm_notebook(desc='training routine',
                              total=args.num_epochs,
                              position=0)

    dataset.set_split('train')
    train_bar = tqdm_notebook(desc='split=train',
                              total=dataset.get_num_batches(args.batch_size),
                              position=1,
                              leave=True)
    dataset.set_split('val')
    val_bar = tqdm_notebook(desc='split=val',
                            total=dataset.get_num_batches(args.batch_size),
                            position=1,
                            leave=True)

    try:
        for epoch_index in range(args.num_epochs):
            train_state['epoch_index'] = epoch_index

            # Iterate over training dataset

            # setup: batch generator, set loss and acc to 0, set train mode on
            dataset.set_split('train')
            batch_generator = generate_batches(dataset,
                                               batch_size=args.batch_size,
                                               device=args.device)
            running_loss = 0.0
            running_acc = 0.0
            classifier.train()

            for batch_index, batch_dict in enumerate(batch_generator):
                # the training routine is these 5 steps:

                # --------------------------------------
                # step 1. zero the gradients
                optimizer.zero_grad()

                # step 2. compute the output
                y_pred = classifier(batch_dict['x_surname'])

                # step 3. compute the loss
                loss = loss_func(y_pred, batch_dict['y_nationality'])
                loss_t = loss.item()
                running_loss += (loss_t - running_loss) / (batch_index + 1)

                # step 4. use loss to produce gradients
                loss.backward()

                # step 5. use optimizer to take gradient step
                optimizer.step()
                # -----------------------------------------
                # compute the accuracy
                acc_t = compute_accuracy(y_pred, batch_dict['y_nationality'])
                running_acc += (acc_t - running_acc) / (batch_index + 1)

                # update bar
                train_bar.set_postfix(loss=running_loss, acc=running_acc,
                                      epoch=epoch_index)
                train_bar.update()

            train_state['train_loss'].append(running_loss)
            train_state['train_acc'].append(running_acc)

            # Iterate over val dataset

            # setup: batch generator, set loss and acc to 0; set eval mode on
            dataset.set_split('val')
            batch_generator = generate_batches(dataset,
                                               batch_size=args.batch_size,
                                               device=args.device)
            running_loss = 0.
            running_acc = 0.
            classifier.eval()

            # Compute the running loss and accuracy on the validation split
            for batch_index, batch_dict in enumerate(batch_generator):

                # compute the output
                y_pred = classifier(batch_dict['x_surname'])

                # step 3. compute the loss
                loss = loss_func(y_pred, batch_dict['y_nationality'])
                loss_t = loss.to("cpu").item()
                running_loss += (loss_t - running_loss) / (batch_index + 1)

                # compute the accuracy
                acc_t = compute_accuracy(y_pred, batch_dict['y_nationality'])
                running_acc += (acc_t - running_acc) / (batch_index + 1)
                val_bar.set_postfix(loss=running_loss, acc=running_acc,
                                    epoch=epoch_index)
                val_bar.update()

            train_state['val_loss'].append(running_loss)
            train_state['val_acc'].append(running_acc)

            train_state = update_train_state(args=args, model=classifier,
                                             train_state=train_state)

            scheduler.step(train_state['val_loss'][-1])

            if train_state['stop_early']:
                break

            train_bar.n = 0
            val_bar.n = 0
            epoch_bar.update()
    except KeyboardInterrupt:
        print("Exiting loop")
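
The expression `running_loss += (loss_t - running_loss) / (batch_index + 1)` in the loop above is an incremental mean: after each batch, `running_loss` equals the average of all batch losses seen so far in the epoch. The short check below uses made-up loss values to show that it matches the ordinary arithmetic mean.

```python
batch_losses = [0.9, 0.7, 0.5, 0.3]  # made-up per-batch losses

running_loss = 0.0
for batch_index, loss_t in enumerate(batch_losses):
    # Move the running value toward the new loss by 1 / (number of batches seen)
    running_loss += (loss_t - running_loss) / (batch_index + 1)

arithmetic_mean = sum(batch_losses) / len(batch_losses)
print(abs(running_loss - arithmetic_mean) < 1e-12)  # True
```

Updating the mean this way avoids storing every batch loss just to average them at the end of the epoch.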

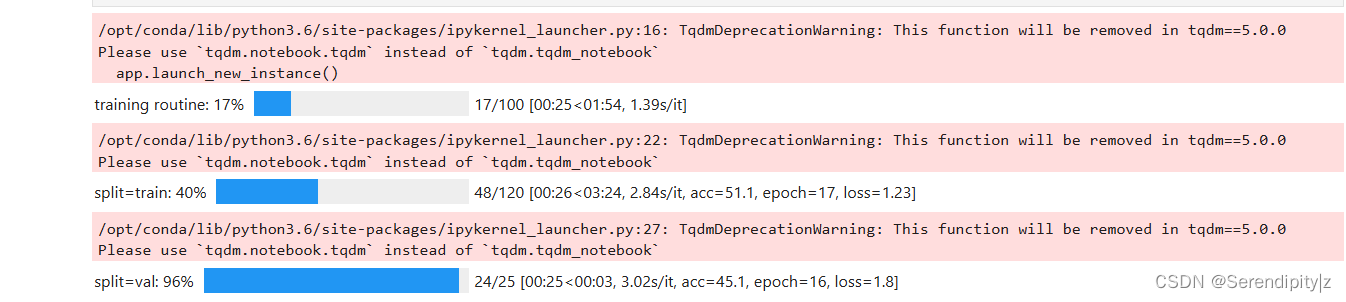

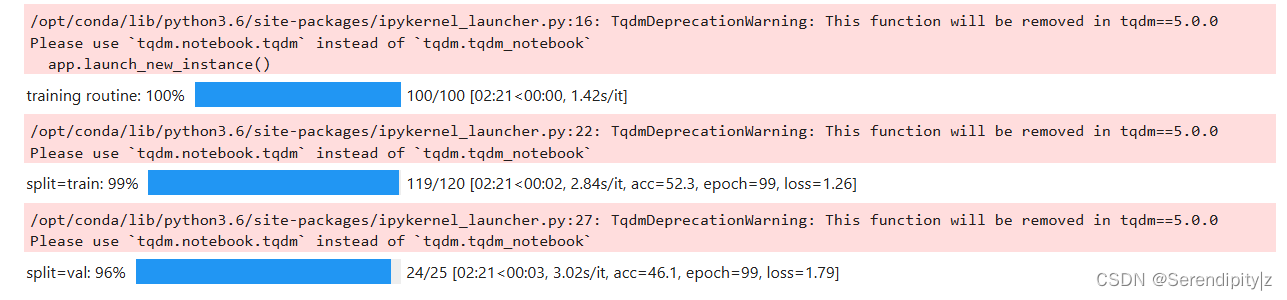

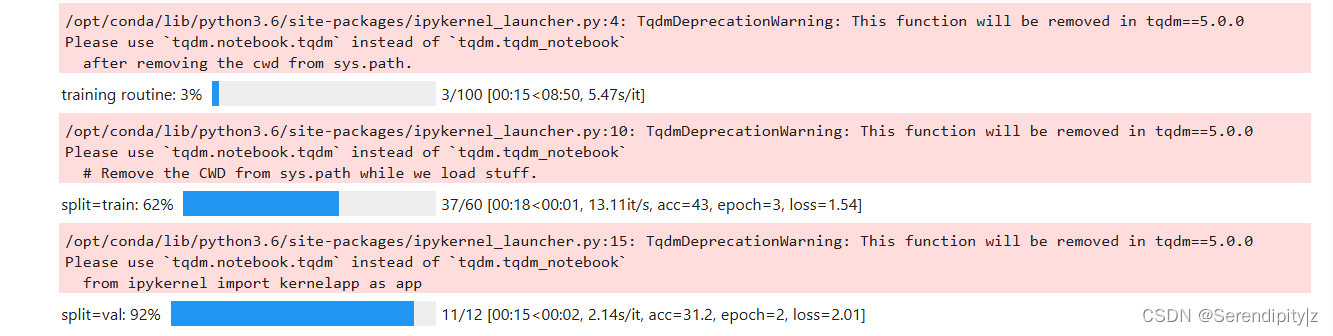

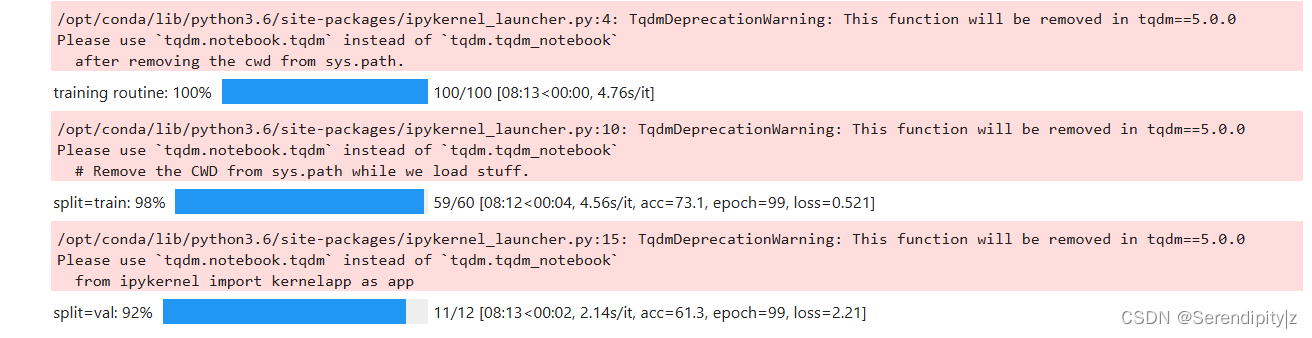

我的训练过程及结果:

2.1.8 Test loss and accuracy

    # compute the loss & accuracy on the test set using the best available model
    classifier.load_state_dict(torch.load(train_state['model_filename']))

    classifier = classifier.to(args.device)
    dataset.class_weights = dataset.class_weights.to(args.device)
    loss_func = nn.CrossEntropyLoss(dataset.class_weights)

    dataset.set_split('test')
    batch_generator = generate_batches(dataset,
                                       batch_size=args.batch_size,
                                       device=args.device)
    running_loss = 0.
    running_acc = 0.
    classifier.eval()

    for batch_index, batch_dict in enumerate(batch_generator):
        # compute the output
        y_pred = classifier(batch_dict['x_surname'])

        # compute the loss
        loss = loss_func(y_pred, batch_dict['y_nationality'])
        loss_t = loss.item()
        running_loss += (loss_t - running_loss) / (batch_index + 1)

        # compute the accuracy
        acc_t = compute_accuracy(y_pred, batch_dict['y_nationality'])
        running_acc += (acc_t - running_acc) / (batch_index + 1)

    train_state['test_loss'] = running_loss
    train_state['test_acc'] = running_acc

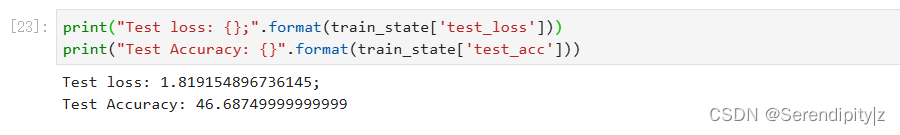

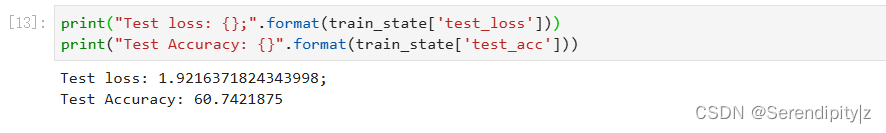

    print("Test loss: {};".format(train_state['test_loss']))
    print("Test Accuracy: {}".format(train_state['test_acc']))

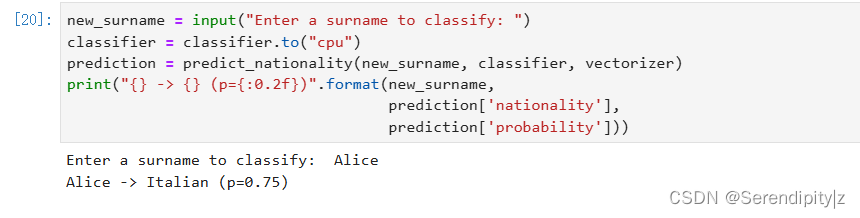

2.1.9 Predicting the nationality of an input surname

    # Predict the nationality of an input surname
    def predict_nationality(surname, classifier, vectorizer):
        """Predict the nationality from a new surname

        Args:
            surname (str): the surname to classify
            classifier (SurnameClassifier): an instance of the classifier
            vectorizer (SurnameVectorizer): the corresponding vectorizer
        Returns:
            a dictionary with the most likely nationality and its probability
        """
        vectorized_surname = vectorizer.vectorize(surname)
        vectorized_surname = torch.tensor(vectorized_surname).view(1, -1)
        result = classifier(vectorized_surname, apply_softmax=True)

        probability_values, indices = result.max(dim=1)
        index = indices.item()

        predicted_nationality = vectorizer.nationality_vocab.lookup_index(index)
        probability_value = probability_values.item()

        return {'nationality': predicted_nationality, 'probability': probability_value}


    new_surname = input("Enter a surname to classify: ")
    classifier = classifier.to("cpu")
    prediction = predict_nationality(new_surname, classifier, vectorizer)
    print("{} -> {} (p={:0.2f})".format(new_surname,
                                        prediction['nationality'],
                                        prediction['probability']))

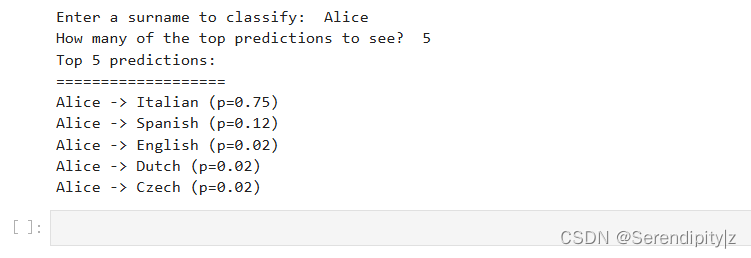

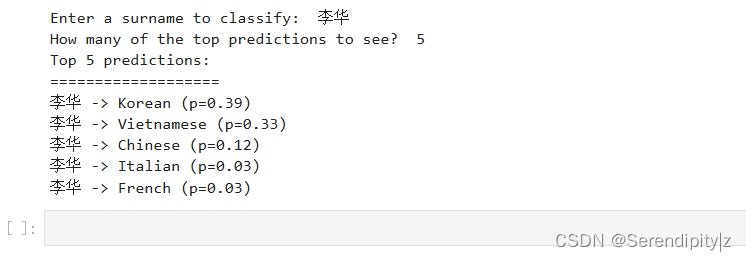

2.1.10 Top-k predictions

    # Return the k most likely classes (nationalities) with their probabilities
    def predict_topk_nationality(name, classifier, vectorizer, k=5):
        vectorized_name = vectorizer.vectorize(name)
        vectorized_name = torch.tensor(vectorized_name).view(1, -1)
        prediction_vector = classifier(vectorized_name, apply_softmax=True)
        probability_values, indices = torch.topk(prediction_vector, k=k)

        # returned size is 1,k
        probability_values = probability_values.detach().numpy()[0]
        indices = indices.detach().numpy()[0]

        results = []
        for prob_value, index in zip(probability_values, indices):
            nationality = vectorizer.nationality_vocab.lookup_index(index)
            results.append({'nationality': nationality,
                            'probability': prob_value})

        return results


    new_surname = input("Enter a surname to classify: ")
    classifier = classifier.to("cpu")

    k = int(input("How many of the top predictions to see? "))
    if k > len(vectorizer.nationality_vocab):
        print("Sorry! That's more than the # of nationalities we have.. defaulting you to max size :)")
        k = len(vectorizer.nationality_vocab)

    predictions = predict_topk_nationality(new_surname, classifier, vectorizer, k=k)

    print("Top {} predictions:".format(k))
    print("===================")
    for prediction in predictions:
        print("{} -> {} (p={:0.2f})".format(new_surname,
                                            prediction['nationality'],
                                            prediction['probability']))
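
What the prediction functions do with the network output can be shown without PyTorch: softmax turns the raw scores (logits) into probabilities that sum to 1, and top-k keeps the k largest. The pure-Python sketch below uses made-up logits and labels; `softmax` and `top_k` are illustrative helpers, whereas the article's code relies on `F.softmax` and `torch.topk`.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    largest = max(logits)  # subtracting the maximum keeps exp() numerically stable
    exps = [math.exp(z - largest) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(probabilities, labels, k):
    """Return the k (label, probability) pairs with the highest probability."""
    ranked = sorted(zip(probabilities, labels), reverse=True)
    return [(label, p) for p, label in ranked[:k]]


labels = ["English", "Irish", "German"]  # hypothetical class names
logits = [2.0, 1.0, 0.1]                 # made-up network outputs

probs = softmax(logits)
best = top_k(probs, labels, k=2)
for label, p in best:
    print("{} (p={:0.2f})".format(label, p))
```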

2.2 CNN

2.2.1 Building the vocabulary

    from argparse import Namespace
    from collections import Counter
    import json
    import os
    import string

    import numpy as np
    import pandas as pd
    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    import torch.optim as optim
    from torch.utils.data import Dataset, DataLoader
    from tqdm import tqdm_notebook


    class Vocabulary(object):
        """Class to process text and extract vocabulary for mapping"""

        def __init__(self, token_to_idx=None, add_unk=True, unk_token="<UNK>"):
            """
            Args:
                token_to_idx (dict): a pre-existing map of tokens to indices
                add_unk (bool): a flag that indicates whether to add the UNK token
                unk_token (str): the UNK token to add into the Vocabulary
            """
            if token_to_idx is None:
                token_to_idx = {}
            self._token_to_idx = token_to_idx
            self._idx_to_token = {idx: token
                                  for token, idx in self._token_to_idx.items()}

            self._add_unk = add_unk
            self._unk_token = unk_token

            self.unk_index = -1
            if add_unk:
                self.unk_index = self.add_token(unk_token)

        def to_serializable(self):
            """ returns a dictionary that can be serialized """
            return {'token_to_idx': self._token_to_idx,
                    'add_unk': self._add_unk,
                    'unk_token': self._unk_token}

        @classmethod
        def from_serializable(cls, contents):
            """ instantiates the Vocabulary from a serialized dictionary """
            return cls(**contents)

        def add_token(self, token):
            """Update mapping dicts based on the token.

            Args:
                token (str): the item to add into the Vocabulary
            Returns:
                index (int): the integer corresponding to the token
            """
            try:
                index = self._token_to_idx[token]
            except KeyError:
                index = len(self._token_to_idx)
                self._token_to_idx[token] = index
                self._idx_to_token[index] = token
            return index

        def add_many(self, tokens):
            """Add a list of tokens into the Vocabulary

            Args:
                tokens (list): a list of string tokens
            Returns:
                indices (list): a list of indices corresponding to the tokens
            """
            return [self.add_token(token) for token in tokens]

        def lookup_token(self, token):
            """Retrieve the index associated with the token
              or the UNK index if token isn't present.

            Args:
                token (str): the token to look up
            Returns:
                index (int): the index corresponding to the token
            Notes:
                `unk_index` needs to be >=0 (having been added into the Vocabulary)
                  for the UNK functionality
            """
            if self.unk_index >= 0:
                return self._token_to_idx.get(token, self.unk_index)
            else:
                return self._token_to_idx[token]

        def lookup_index(self, index):
            """Return the token associated with the index

            Args:
                index (int): the index to look up
            Returns:
                token (str): the token corresponding to the index
            Raises:
                KeyError: if the index is not in the Vocabulary
            """
            if index not in self._idx_to_token:
                raise KeyError("the index (%d) is not in the Vocabulary" % index)
            return self._idx_to_token[index]

        def __str__(self):
            return "<Vocabulary(size=%d)>" % len(self)

        def __len__(self):
            return len(self._token_to_idx)

2.2.2 Converting text data into numerical form

    class SurnameVectorizer(object):
        """ The Vectorizer which coordinates the Vocabularies and puts them to use"""

        def __init__(self, surname_vocab, nationality_vocab, max_surname_length):
            """
            Args:
                surname_vocab (Vocabulary): maps characters to integers
                nationality_vocab (Vocabulary): maps nationalities to integers
                max_surname_length (int): the length of the longest surname
            """
            self.surname_vocab = surname_vocab
            self.nationality_vocab = nationality_vocab
            self._max_surname_length = max_surname_length

        def vectorize(self, surname):
            """
            Args:
                surname (str): the surname
            Returns:
                one_hot_matrix (np.ndarray): a matrix of one-hot vectors
            """
            one_hot_matrix_size = (len(self.surname_vocab), self._max_surname_length)
            one_hot_matrix = np.zeros(one_hot_matrix_size, dtype=np.float32)

            for position_index, character in enumerate(surname):
                character_index = self.surname_vocab.lookup_token(character)
                one_hot_matrix[character_index][position_index] = 1

            return one_hot_matrix

        @classmethod
        def from_dataframe(cls, surname_df):
            """Instantiate the vectorizer from the dataset dataframe

            Args:
                surname_df (pandas.DataFrame): the surnames dataset
            Returns:
                an instance of the SurnameVectorizer
            """
            surname_vocab = Vocabulary(unk_token="@")
            nationality_vocab = Vocabulary(add_unk=False)
            max_surname_length = 0

            for index, row in surname_df.iterrows():
                max_surname_length = max(max_surname_length, len(row.surname))
                for letter in row.surname:
                    surname_vocab.add_token(letter)
                nationality_vocab.add_token(row.nationality)

            return cls(surname_vocab, nationality_vocab, max_surname_length)

        @classmethod
        def from_serializable(cls, contents):
            surname_vocab = Vocabulary.from_serializable(contents['surname_vocab'])
            nationality_vocab = Vocabulary.from_serializable(contents['nationality_vocab'])
            return cls(surname_vocab=surname_vocab, nationality_vocab=nationality_vocab,
                       max_surname_length=contents['max_surname_length'])

        def to_serializable(self):
            return {'surname_vocab': self.surname_vocab.to_serializable(),
                    'nationality_vocab': self.nationality_vocab.to_serializable(),
                    'max_surname_length': self._max_surname_length}

2.2.3 Building SurnameDataset for structured access to the dataset

    class SurnameDataset(Dataset):
        def __init__(self, surname_df, vectorizer):
            """
            Args:
                surname_df (pandas.DataFrame): the dataset
                vectorizer (SurnameVectorizer): vectorizer instantiated from dataset
            """
            self.surname_df = surname_df
            self._vectorizer = vectorizer

            self.train_df = self.surname_df[self.surname_df.split=='train']
            self.train_size = len(self.train_df)

            self.val_df = self.surname_df[self.surname_df.split=='val']
            self.validation_size = len(self.val_df)

            self.test_df = self.surname_df[self.surname_df.split=='test']
            self.test_size = len(self.test_df)

            self._lookup_dict = {'train': (self.train_df, self.train_size),
                                 'val': (self.val_df, self.validation_size),
                                 'test': (self.test_df, self.test_size)}

            self.set_split('train')

            # Class weights
            class_counts = surname_df.nationality.value_counts().to_dict()

            def sort_key(item):
                return self._vectorizer.nationality_vocab.lookup_token(item[0])

            sorted_counts = sorted(class_counts.items(), key=sort_key)
            frequencies = [count for _, count in sorted_counts]
            self.class_weights = 1.0 / torch.tensor(frequencies, dtype=torch.float32)

        @classmethod
        def load_dataset_and_make_vectorizer(cls, surname_csv):
            """Load dataset and make a new vectorizer from scratch

            Args:
                surname_csv (str): location of the dataset
            Returns:
                an instance of SurnameDataset
            """
            surname_df = pd.read_csv(surname_csv)
            train_surname_df = surname_df[surname_df.split=='train']
            return cls(surname_df, SurnameVectorizer.from_dataframe(train_surname_df))

        @classmethod
        def load_dataset_and_load_vectorizer(cls, surname_csv, vectorizer_filepath):
            """Load dataset and the corresponding vectorizer.
            Used in the case that the vectorizer has been cached for re-use

            Args:
                surname_csv (str): location of the dataset
                vectorizer_filepath (str): location of the saved vectorizer
            Returns:
                an instance of SurnameDataset
            """
            surname_df = pd.read_csv(surname_csv)
            vectorizer = cls.load_vectorizer_only(vectorizer_filepath)
            return cls(surname_df, vectorizer)

        @staticmethod
        def load_vectorizer_only(vectorizer_filepath):
            """a static method for loading the vectorizer from file

            Args:
                vectorizer_filepath (str): the location of the serialized vectorizer
            Returns:
                an instance of SurnameVectorizer
            """
            with open(vectorizer_filepath) as fp:
                return SurnameVectorizer.from_serializable(json.load(fp))

        def save_vectorizer(self, vectorizer_filepath):
            """saves the vectorizer to disk using json

            Args:
                vectorizer_filepath (str): the location to save the vectorizer
            """
            with open(vectorizer_filepath, "w") as fp:
                json.dump(self._vectorizer.to_serializable(), fp)

        def get_vectorizer(self):
            """ returns the vectorizer """
            return self._vectorizer

        def set_split(self, split="train"):
            """ selects the splits in the dataset using a column in the dataframe """
            self._target_split = split
            self._target_df, self._target_size = self._lookup_dict[split]

        def __len__(self):
            return self._target_size

        def __getitem__(self, index):
            """the primary entry point method for PyTorch datasets

            Args:
                index (int): the index to the data point
            Returns:
                a dictionary holding the data point's features (x_surname)
                and label (y_nationality)
            """
            row = self._target_df.iloc[index]

            surname_matrix = \
                self._vectorizer.vectorize(row.surname)

            nationality_index = \
                self._vectorizer.nationality_vocab.lookup_token(row.nationality)

            return {'x_surname': surname_matrix,
                    'y_nationality': nationality_index}

        def get_num_batches(self, batch_size):
            """Given a batch size, return the number of batches in the dataset

            Args:
                batch_size (int)
            Returns:
                number of batches in the dataset
            """
            return len(self) // batch_size


    def generate_batches(dataset, batch_size, shuffle=True,
                         drop_last=True, device="cpu"):
        """
        A generator function which wraps the PyTorch DataLoader. It will
          ensure each tensor is on the right device location.
        """
        dataloader = DataLoader(dataset=dataset, batch_size=batch_size,
                                shuffle=shuffle, drop_last=drop_last)

        for data_dict in dataloader:
            out_data_dict = {}
            for name, tensor in data_dict.items():
                out_data_dict[name] = data_dict[name].to(device)
            yield out_data_dict

2.2.4 Defining the convolutional neural network

# Define the convolutional neural network

class SurnameClassifier(nn.Module):
    def __init__(self, initial_num_channels, num_classes, num_channels):
        """
        Args:
            initial_num_channels (int): size of the incoming feature vector
            num_classes (int): size of the output prediction vector
            num_channels (int): constant channel size to use throughout network
        """
        super(SurnameClassifier, self).__init__()

        # ELU is used as the nonlinearity after every convolution
        self.convnet = nn.Sequential(
            nn.Conv1d(in_channels=initial_num_channels,
                      out_channels=num_channels, kernel_size=3),
            nn.ELU(),
            nn.Conv1d(in_channels=num_channels, out_channels=num_channels,
                      kernel_size=3, stride=2),
            nn.ELU(),
            nn.Conv1d(in_channels=num_channels, out_channels=num_channels,
                      kernel_size=3, stride=2),
            nn.ELU(),
            nn.Conv1d(in_channels=num_channels, out_channels=num_channels,
                      kernel_size=3),
            nn.ELU()
        )
        self.fc = nn.Linear(num_channels, num_classes)

    def forward(self, x_surname, apply_softmax=False):
        """The forward pass of the classifier

        Args:
            x_surname (torch.Tensor): an input data tensor.
                x_surname.shape should be (batch, initial_num_channels, max_surname_length)
            apply_softmax (bool): a flag for the softmax activation
                should be false if used with the Cross Entropy losses
        Returns:
            the resulting tensor. tensor.shape should be (batch, num_classes)
        """
        # The convolutions must reduce the length dimension to 1 for squeeze to remove it
        features = self.convnet(x_surname).squeeze(dim=2)

        prediction_vector = self.fc(features)

        # Normalize the scores into probabilities with softmax
        if apply_softmax:
            prediction_vector = F.softmax(prediction_vector, dim=1)

        return prediction_vector
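The `squeeze(dim=2)` call in `forward` only removes the length dimension if the four convolutions have shrunk it to exactly 1; otherwise the `fc` layer receives a tensor of the wrong shape. The sketch below traces the sequence length through the stack using the output-length formula from the PyTorch `Conv1d` documentation. The starting length of 17 is an assumption for illustration; the real value is the maximum surname length found in the dataset.

```python
def conv1d_out_length(length, kernel_size, stride=1, padding=0, dilation=1):
    """Output length of a 1-D convolution (formula from the Conv1d docs)."""
    return (length + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1


# (kernel_size, stride) for the four Conv1d layers in SurnameClassifier.
layers = [(3, 1), (3, 2), (3, 2), (3, 1)]

# Assumed maximum surname length; the real value depends on the dataset.
length = 17
lengths = [length]
for kernel_size, stride in layers:
    length = conv1d_out_length(length, kernel_size, stride)
    lengths.append(length)

print(lengths)  # [17, 15, 7, 3, 1]
```

With a noticeably longer or shorter maximum length the final value would not be 1, and the kernel sizes or strides would need to be adjusted accordingly.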

2.2.5 Environment setup, data processing, model construction, and training management

def make_train_state(args):
    return {'stop_early': False,
            'early_stopping_step': 0,
            'early_stopping_best_val': 1e8,
            'learning_rate': args.learning_rate,
            'epoch_index': 0,
            'train_loss': [],
            'train_acc': [],
            'val_loss': [],
            'val_acc': [],
            'test_loss': -1,
            'test_acc': -1,
            'model_filename': args.model_state_file}

def update_train_state(args, model, train_state):
    """Handle the training state updates.

    Components:
     - Early Stopping: Prevent overfitting.
     - Model Checkpoint: Model is saved if the model is better

    :param args: main arguments
    :param model: model to train
    :param train_state: a dictionary representing the training state values
    :returns:
        a new train_state
    """

    # Save one model at least
    if train_state['epoch_index'] == 0:
        torch.save(model.state_dict(), train_state['model_filename'])
        train_state['stop_early'] = False

    # Save model if performance improved
    elif train_state['epoch_index'] >= 1:
        loss_t = train_state['val_loss'][-1]

        # If loss worsened
        if loss_t >= train_state['early_stopping_best_val']:
            # Update step
            train_state['early_stopping_step'] += 1
        # Loss decreased
        else:
            # Save the best model and record its validation loss;
            # without this update the best value stays at 1e8 and
            # early stopping can never trigger
            torch.save(model.state_dict(), train_state['model_filename'])
            train_state['early_stopping_best_val'] = loss_t

            # Reset early stopping step
            train_state['early_stopping_step'] = 0

        # Stop early ?
        train_state['stop_early'] = \
            train_state['early_stopping_step'] >= args.early_stopping_criteria

    return train_state
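The patience-based early-stopping idea can be looked at in isolation from PyTorch. The sketch below is a simplified stand-in rather than the function above: `run_early_stopping` is a hypothetical helper, it does no checkpointing, and the validation losses are made-up numbers. It tracks the best loss seen so far and stops once `patience` consecutive epochs fail to improve on it.

```python
def run_early_stopping(val_losses, patience):
    """Return (stop_epoch, best_loss) for a sequence of validation losses.

    stop_epoch is the index of the epoch at which training would stop,
    or None if the patience limit is never reached.
    """
    best_loss = float("inf")
    bad_epochs = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            # Improvement: remember the new best and reset the counter.
            best_loss = loss
            bad_epochs = 0
        else:
            # No improvement: count one more "bad" epoch.
            bad_epochs += 1
            if bad_epochs >= patience:
                return epoch, best_loss
    return None, best_loss


# Made-up validation losses, for illustration only.
losses = [1.00, 0.80, 0.75, 0.78, 0.77, 0.79, 0.76]
stop_epoch, best = run_early_stopping(losses, patience=3)
print(stop_epoch, best)
```

Here the loss stops improving after the third epoch, so with a patience of 3 the loop ends at epoch index 5 and the best recorded loss is 0.75.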

# Compute the accuracy (as a percentage)

def compute_accuracy(y_pred, y_target):
    # Index of the highest-scoring class for each example
    y_pred_indices = y_pred.max(dim=1)[1]
    n_correct = torch.eq(y_pred_indices, y_target).sum().item()
    return n_correct / len(y_pred_indices) * 100
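To see what `compute_accuracy` measures without needing tensors, here is a plain-Python sketch of the same argmax-and-compare computation. The helper name `accuracy_percent`, the scores, and the targets are all invented for illustration.

```python
def accuracy_percent(scores, targets):
    """Percentage of rows whose highest-scoring class equals the target."""
    predicted = [max(range(len(row)), key=row.__getitem__) for row in scores]
    n_correct = sum(p == t for p, t in zip(predicted, targets))
    return n_correct / len(targets) * 100


# Made-up scores for 4 examples and 3 classes.
scores = [
    [2.0, 0.1, -1.0],   # highest score at class 0
    [0.3, 0.9, 0.2],    # highest score at class 1
    [-0.5, 0.0, 1.5],   # highest score at class 2
    [1.2, 0.4, 0.8],    # highest score at class 0
]
targets = [0, 1, 1, 0]
print(accuracy_percent(scores, targets))  # 75.0
```

Three of the four predictions match their targets, so the result is 75.0. Note that the scores do not need to be normalized with softmax first, because softmax does not change which class has the highest value.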

args = Namespace(
    # Data and Path information
    surname_csv="surnames_with_splits.csv",
    vectorizer_file="vectorizer.json",
    model_state_file="model.pth",
    save_dir="cnn",
    # Model hyper parameters
    hidden_dim=100,
    num_channels=256,
    # Training hyper parameters
    seed=1337,
    learning_rate=0.001,
    batch_size=128,
    num_epochs=100,
    early_stopping_criteria=5,
    dropout_p=0.1,
    # Runtime options
    cuda=False,
    reload_from_files=False,
    expand_filepaths_to_save_dir=True,
    catch_keyboard_interrupt=True
)

if args.expand_filepaths_to_save_dir:
    args.vectorizer_file = os.path.join(args.save_dir,
                                        args.vectorizer_file)

    args.model_state_file = os.path.join(args.save_dir,
                                         args.model_state_file)

    print("Expanded filepaths: ")
    print("\t{}".format(args.vectorizer_file))
    print("\t{}".format(args.model_state_file))

# Check CUDA
if not torch.cuda.is_available():
    args.cuda = False

args.device = torch.device("cuda" if args.cuda else "cpu")
print("Using CUDA: {}".format(args.cuda))

def set_seed_everywhere(seed, cuda):
    np.random.seed(seed)
    torch.manual_seed(seed)
    if cuda:
        torch.cuda.manual_seed_all(seed)

def handle_dirs(dirpath):
    if not os.path.exists(dirpath):
        os.makedirs(dirpath)

# Set seed for reproducibility
set_seed_everywhere(args.seed, args.cuda)

# handle dirs
handle_dirs(args.save_dir)

if args.reload_from_files:
    # training from a checkpoint
    dataset = SurnameDataset.load_dataset_and_load_vectorizer(args.surname_csv,
                                                              args.vectorizer_file)
else:
    # create dataset and vectorizer
    dataset = SurnameDataset.load_dataset_and_make_vectorizer(args.surname_csv)
    dataset.save_vectorizer(args.vectorizer_file)

vectorizer = dataset.get_vectorizer()

classifier = SurnameClassifier(initial_num_channels=len(vectorizer.surname_vocab),
                               num_classes=len(vectorizer.nationality_vocab),
                               num_channels=args.num_channels)

classifier = classifier.to(args.device)
dataset.class_weights = dataset.class_weights.to(args.device)

loss_func = nn.CrossEntropyLoss(weight=dataset.class_weights)
optimizer = optim.Adam(classifier.parameters(), lr=args.learning_rate)
scheduler = optim.lr_scheduler.ReduceLROnPlateau(optimizer=optimizer,
                                                 mode='min', factor=0.5,
                                                 patience=1)

train_state = make_train_state(args)

2.2.6 Training loop

# Training loop

epoch_bar = tqdm_notebook(desc='training routine',
                          total=args.num_epochs,
                          position=0)

dataset.set_split('train')
train_bar = tqdm_notebook(desc='split=train',
                          total=dataset.get_num_batches(args.batch_size),
                          position=1,
                          leave=True)
dataset.set_split('val')
val_bar = tqdm_notebook(desc='split=val',
                        total=dataset.get_num_batches(args.batch_size),
                        position=1,
                        leave=True)

try:
    for epoch_index in range(args.num_epochs):
        train_state['epoch_index'] = epoch_index

        # Iterate over training dataset

        # setup: batch generator, set loss and acc to 0, set train mode on
        dataset.set_split('train')
        batch_generator = generate_batches(dataset,
                                           batch_size=args.batch_size,
                                           device=args.device)
        running_loss = 0.0
        running_acc = 0.0
        classifier.train()

        for batch_index, batch_dict in enumerate(batch_generator):
            # the training routine is these 5 steps:

            # --------------------------------------
            # step 1. zero the gradients
            optimizer.zero_grad()

            # step 2. compute the output
            y_pred = classifier(batch_dict['x_surname'])

            # step 3. compute the loss
            loss = loss_func(y_pred, batch_dict['y_nationality'])
            loss_t = loss.item()
            running_loss += (loss_t - running_loss) / (batch_index + 1)

            # step 4. use loss to produce gradients
            loss.backward()

            # step 5. use optimizer to take gradient step
            optimizer.step()
            # -----------------------------------------

            # compute the accuracy
            acc_t = compute_accuracy(y_pred, batch_dict['y_nationality'])
            running_acc += (acc_t - running_acc) / (batch_index + 1)

            # update bar
            train_bar.set_postfix(loss=running_loss, acc=running_acc,
                                  epoch=epoch_index)
            train_bar.update()

        train_state['train_loss'].append(running_loss)
        train_state['train_acc'].append(running_acc)

        # Iterate over val dataset

        # setup: batch generator, set loss and acc to 0; set eval mode on
        dataset.set_split('val')
        batch_generator = generate_batches(dataset,
                                           batch_size=args.batch_size,
                                           device=args.device)
        running_loss = 0.
        running_acc = 0.
        classifier.eval()

        for batch_index, batch_dict in enumerate(batch_generator):
            # compute the output
            y_pred = classifier(batch_dict['x_surname'])

            # compute the loss
            loss = loss_func(y_pred, batch_dict['y_nationality'])
            loss_t = loss.item()
            running_loss += (loss_t - running_loss) / (batch_index + 1)

            # compute the accuracy
            acc_t = compute_accuracy(y_pred, batch_dict['y_nationality'])
            running_acc += (acc_t - running_acc) / (batch_index + 1)

            val_bar.set_postfix(loss=running_loss, acc=running_acc,
                                epoch=epoch_index)
            val_bar.update()

        train_state['val_loss'].append(running_loss)
        train_state['val_acc'].append(running_acc)

        train_state = update_train_state(args=args, model=classifier,
                                         train_state=train_state)

        # Halve the learning rate when the validation loss plateaus
        scheduler.step(train_state['val_loss'][-1])

        if train_state['stop_early']:
            break

        train_bar.n = 0
        val_bar.n = 0
        epoch_bar.update()
except KeyboardInterrupt:
    print("Exiting loop")
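The update `running_loss += (loss_t - running_loss) / (batch_index + 1)` used throughout the loop is an incremental mean: after each batch, `running_loss` holds the average of all batch losses seen so far, without storing them. A minimal sketch, with a hypothetical helper `running_mean` and made-up batch losses, shows that it agrees with the ordinary average:

```python
def running_mean(values):
    """Incrementally average values the same way the training loop does."""
    mean = 0.0
    for index, value in enumerate(values):
        # Move the current mean toward the new value by 1 / (count so far).
        mean += (value - mean) / (index + 1)
    return mean


# Made-up per-batch losses, for illustration only.
batch_losses = [0.9, 0.7, 0.8, 0.6]
incremental = running_mean(batch_losses)
direct = sum(batch_losses) / len(batch_losses)
print(abs(incremental - direct) < 1e-12)  # True
```

The same pattern is applied to `running_acc`, so the values appended to `train_state` at the end of each epoch are averages over that epoch's batches.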

2.2.7 Loss and accuracy

# Evaluate on the test set and report the loss and accuracy

classifier.load_state_dict(torch.load(train_state['model_filename']))

classifier = classifier.to(args.device)
dataset.class_weights = dataset.class_weights.to(args.device)
loss_func = nn.CrossEntropyLoss(dataset.class_weights)

dataset.set_split('test')
batch_generator = generate_batches(dataset,
                                   batch_size=args.batch_size,
                                   device=args.device)
running_loss = 0.
running_acc = 0.
classifier.eval()

for batch_index, batch_dict in enumerate(batch_generator):
    # compute the output
    y_pred = classifier(batch_dict['x_surname'])

    # compute the loss
    loss = loss_func(y_pred, batch_dict['y_nationality'])
    loss_t = loss.item()
    running_loss += (loss_t - running_loss) / (batch_index + 1)

    # compute the accuracy
    acc_t = compute_accuracy(y_pred, batch_dict['y_nationality'])
    running_acc += (acc_t - running_acc) / (batch_index + 1)

train_state['test_loss'] = running_loss
train_state['test_acc'] = running_acc

print("Test loss: {};".format(train_state['test_loss']))
print("Test Accuracy: {}".format(train_state['test_acc']))

2.2.8 Predicting the nationality of an input surname

# Output the prediction for a new sample

def predict_nationality(surname, classifier, vectorizer):
    """Predict the nationality from a new surname

    Args:
        surname (str): the surname to classify
        classifier (SurnameClassifier): an instance of the classifier
        vectorizer (SurnameVectorizer): the corresponding vectorizer
    Returns:
        a dictionary with the most likely nationality and its probability
    """
    vectorized_surname = vectorizer.vectorize(surname)
    # Add a batch dimension of size 1
    vectorized_surname = torch.tensor(vectorized_surname).unsqueeze(0)
    result = classifier(vectorized_surname, apply_softmax=True)

    probability_values, indices = result.max(dim=1)
    index = indices.item()

    predicted_nationality = vectorizer.nationality_vocab.lookup_index(index)
    probability_value = probability_values.item()

    return {'nationality': predicted_nationality, 'probability': probability_value}

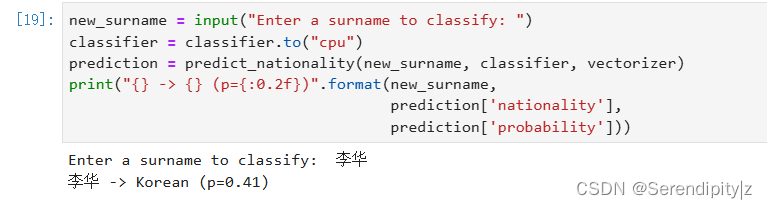

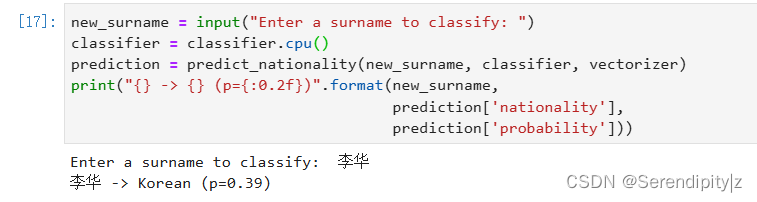

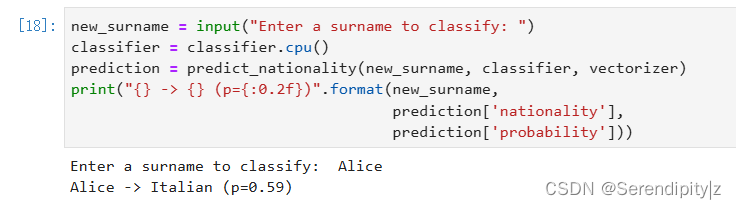

new_surname = input("Enter a surname to classify: ")
classifier = classifier.cpu()
prediction = predict_nationality(new_surname, classifier, vectorizer)
print("{} -> {} (p={:0.2f})".format(new_surname,
                                    prediction['nationality'],
                                    prediction['probability']))

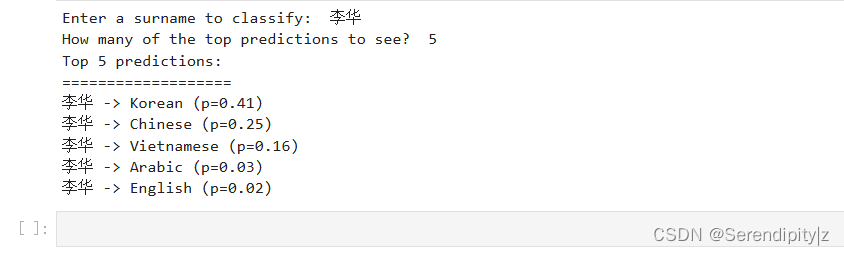

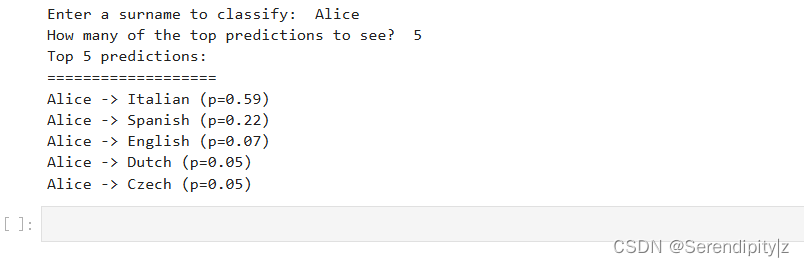

2.2.9 Top-k predictions

# Output the k most likely predictions

def predict_topk_nationality(surname, classifier, vectorizer, k=5):
    """Predict the top K nationalities from a new surname

    Args:
        surname (str): the surname to classify
        classifier (SurnameClassifier): an instance of the classifier
        vectorizer (SurnameVectorizer): the corresponding vectorizer
        k (int): the number of top nationalities to return
    Returns:
        list of dictionaries, each dictionary is a nationality and a probability
    """
    vectorized_surname = vectorizer.vectorize(surname)
    vectorized_surname = torch.tensor(vectorized_surname).unsqueeze(dim=0)
    prediction_vector = classifier(vectorized_surname, apply_softmax=True)
    probability_values, indices = torch.topk(prediction_vector, k=k)

    # returned size is 1,k
    probability_values = probability_values[0].detach().numpy()
    indices = indices[0].detach().numpy()

    results = []
    for kth_index in range(k):
        nationality = vectorizer.nationality_vocab.lookup_index(indices[kth_index])
        probability_value = probability_values[kth_index]
        results.append({'nationality': nationality,
                        'probability': probability_value})
    return results

new_surname = input("Enter a surname to classify: ")

k = int(input("How many of the top predictions to see? "))
if k > len(vectorizer.nationality_vocab):
    print("Sorry! That's more than the # of nationalities we have.. defaulting you to max size :)")
    k = len(vectorizer.nationality_vocab)

predictions = predict_topk_nationality(new_surname, classifier, vectorizer, k=k)

print("Top {} predictions:".format(k))
print("===================")
for prediction in predictions:
    print("{} -> {} (p={:0.2f})".format(new_surname,
                                        prediction['nationality'],
                                        prediction['probability']))
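The two prediction helpers rely on the same pair of operations: softmax turns the classifier's raw scores into probabilities, and a top-k selection picks the most likely classes. The sketch below reproduces both in plain Python so the mechanics can be inspected without a trained model. The function names, the labels "A" to "D", and the scores are all hypothetical.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    shift = max(scores)  # subtracting the max improves numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(probabilities, labels, k):
    """Return the k (label, probability) pairs with the highest probability."""
    ranked = sorted(zip(labels, probabilities),
                    key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


# Hypothetical labels and raw scores, for illustration only.
labels = ["A", "B", "C", "D"]
scores = [1.0, 3.0, 0.5, 2.0]
probs = softmax(scores)
for label, p in top_k(probs, labels, k=2):
    print("{} (p={:0.2f})".format(label, p))
```

Because softmax preserves the ordering of the scores, the top-k labels are the same whether the selection is done before or after normalization; applying softmax first simply makes the reported values readable as probabilities.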

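3. Summary

In this post we built surname classifiers based on an MLP and a CNN, and in the process gained a better understanding of how MLPs and CNNs work and how they are constructed. I hope it is useful to you. If you spot any shortcomings or errors, please point them out; corrections are much appreciated.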
