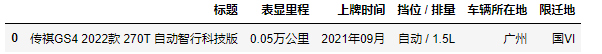

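Open the data source page in Chrome, use Chrome's Inspect tool, and refresh the page several times to work out how the target data is laid out. The fields of interest are vehicle category, manufacturer, brand, model, mileage, registration date, body type, fuel type, transmission, engine power, whether the car has unrepaired damage, region, quote type, time on sale, and the used-car transaction price. Then fetch the required pages, parse them, and store the data in a database or another file format.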
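As a rough sketch of the "parse, then store" step, one scraped listing can be held in a small record type before it is written out. The class name `CarRecord`, its field names, and the sample values are assumptions made for illustration; the fields mirror the seven values this post collects from each detail page, not the site's own schema.

```python
from dataclasses import dataclass, asdict


@dataclass
class CarRecord:
    """One listing. Field names are illustrative, not taken from the site."""
    title: str
    price: str
    mileage: str
    registration_date: str
    gear_displacement: str
    location: str
    transfer_restriction: str


# Made-up placeholder values, only to show the shape of a record
record = CarRecord(
    title="sample title",
    price="12.58万",
    mileage="3.2万公里",
    registration_date="2018-06",
    gear_displacement="自动 / 1.5T",
    location="广州",
    transfer_restriction="sample region",
)
row = asdict(record)  # plain dict, ready for a CSV writer or a DataFrame
```

Keeping each listing as one record makes it harder for the per-field values to drift out of step with each other.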

1. Open the page with selenium

# Open a used-car site and go to the page listing all used cars for sale
from selenium import webdriver
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.common.by import By

driver = webdriver.Chrome()
driver.get('https://www.che168.com/guangzhou/')
wait = WebDriverWait(driver, 10)
before = driver.current_window_handle
confirm_btn = wait.until(
    EC.element_to_be_clickable(
        (By.CSS_SELECTOR, '#tab2-2 > div.btn-center > a')
    )
)
confirm_btn.click()  # click "View more"
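The later steps switch to `driver.window_handles[1]` right after this click, which suggests the list opens in a new window. A minimal sketch of waiting for that window instead of assuming it is already there is shown below. The helper name `wait_for_new_window` and its parameters are hypothetical; it relies only on the driver's `window_handles` attribute.

```python
import time


def wait_for_new_window(driver, known_handles, timeout=10.0, poll=0.5):
    """Return the first window handle not in known_handles, or None on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        for handle in driver.window_handles:
            if handle not in known_handles:
                return handle
        if time.monotonic() >= deadline:
            return None
        time.sleep(poll)


# Usage with a real driver (not run here):
# known = set(driver.window_handles)
# confirm_btn.click()
# new_handle = wait_for_new_window(driver, known)
# if new_handle is not None:
#     driver.switch_to.window(new_handle)
```

Polling for a handle that was not there before avoids depending on the position of the new window in the handle list.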

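2. Open a detail page and extract the required information

Full XPath: /html/body/div[12]/div[1]/ul/li[1]. There are 56 listings in total, li[1] through li[56].

driver.find_element(By.XPATH, '/html/body/div[12]/div[1]/ul/li[1]').click()  # click to open the detail page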
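Since the 56 listings differ only in the `li` index, their XPaths can be generated rather than typed out. This is a small sketch; the names `LIST_ITEM_XPATH` and `listing_xpaths` are made up for illustration, and the absolute path is the one quoted above, which may change whenever the site's layout does.

```python
LIST_ITEM_XPATH = '/html/body/div[12]/div[1]/ul/li[{}]'


def listing_xpaths(count=56):
    """Build the XPath of each listing, li[1] through li[count] (XPath is 1-based)."""
    return [LIST_ITEM_XPATH.format(n) for n in range(1, count + 1)]


paths = listing_xpaths()
```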

Use etree to extract the title and the other fields:

from lxml import etree

html = etree.HTML(driver.page_source)
list_title = html.xpath('/html/body/div[5]/div[2]/h3//text()')  # title

# One list per field, filled from the five <li> entries under the title
licheng, time, cangshu, location, xianding = [], [], [], [], []
base = '/html/body/div[5]/div[2]/ul/li[{}]/h4/text()'
licheng.append(html.xpath(base.format(1))[0])           # mileage
time.append(html.xpath(base.format(2))[0])              # registration date
cangshu.append(html.xpath(base.format(3))[0])           # gear / displacement
location.append(html.xpath(base.format(4))[0])          # vehicle location
xianding.append(html.xpath(base.format(5))[0].strip())  # transfer-restricted region
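Each lookup above indexes `[0]` directly, which raises `IndexError` when a detail page lacks that element. One possible guard is sketched below. The helper `first_text` is hypothetical, and unlike the code above it strips whitespace from every field, not only the last one.

```python
def first_text(nodes, default=''):
    """Return the first XPath text result, stripped, or default if nothing matched."""
    return nodes[0].strip() if nodes else default


# Usage with an lxml tree (not run here):
# mileage = first_text(html.xpath('/html/body/div[5]/div[2]/ul/li[1]/h4/text()'))
```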

3. Loop over the 56 listings

from time import sleep

for i in range(1, 57):  # 56 listings on the page
    driver.switch_to.window(driver.window_handles[1])  # switch to the list window
    while True:  # retry so we never switch before the new window has opened
        sleep(1)
        driver.find_element(By.XPATH, '//*[@class="viewlist_ul"]/li[{}]'.format(i)).click()
        driver.refresh()  # refresh the page
        sleep(2)
        if len(driver.window_handles) == 3:  # the detail window is open
            driver.switch_to.window(driver.window_handles[2])
            break
    print('Scraping item {}'.format(i))
    html = etree.HTML(driver.page_source)
    # Title
    list_title = html.xpath('/html/body/div[5]/div[2]/h3//text()')
    title = [list_title[0].strip()]
    # Price: try each known page layout and strip the currency symbol
    span = html.xpath('/html/body/div[5]/div[2]/div[2]/span//text()')
    overlay = html.xpath('//*[@id="overlayPrice"]//text()')
    if span:
        price = [(span[0] + '万').strip('¥')]  # 万 = ten thousand yuan
    elif overlay:
        price = [(overlay[0] + '万').strip('¥')]
    else:
        price = [html.xpath('/html/body/div[5]/div[2]/div[2]/div[1]/div/text()')[0].strip(' ¥')]
    # print(price)
    # Other fields
    base = '/html/body/div[5]/div[2]/ul/li[{}]/h4/text()'
    licheng = [html.xpath(base.format(1))[0]]           # mileage
    time = [html.xpath(base.format(2))[0]]              # registration date
    cangshu = [html.xpath(base.format(3))[0]]           # gear / displacement
    location = [html.xpath(base.format(4))[0]]          # vehicle location
    xianding = [html.xpath(base.format(5))[0].strip()]  # transfer-restricted region
    driver.close()  # close the detail window so the next item sees three windows again
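The price above is kept as a string with the unit `万` appended. For later analysis a numeric value is usually easier to work with. The sketch below pulls the number out of such a string. The function name `price_in_wan` is hypothetical, and it assumes every price is quoted in units of 万 (ten thousand yuan), which the `+ '万'` in the code above suggests but the page itself would have to confirm.

```python
import re


def price_in_wan(text):
    """Return the first number found in a price string as a float, or None."""
    match = re.search(r'\d+(?:\.\d+)?', text)
    return float(match.group()) if match else None
```

Returning `None` for text without digits leaves it to the caller to decide how to record listings that show no numeric price.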

4. Scrape every listing from page 1 through page 100

from selenium import webdriver
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait
from selenium.webdriver.common.by import By
from lxml import etree
import pandas as pd
from time import sleep

# Optional proxy setup
# proxy = ['--proxy-server=http://125.119.16.94:9000']
# chrome_options = webdriver.ChromeOptions()
# chrome_options.add_argument(proxy[0])
# driver = webdriver.Chrome(options=chrome_options)
driver = webdriver.Chrome()
driver.get('https://www.che168.com/guangzhou/')  # open the site
wait = WebDriverWait(driver, 10)
before = driver.current_window_handle
confirm_btn = wait.until(  # wait until the button is clickable
    EC.element_to_be_clickable(
        (By.CSS_SELECTOR, '#tab2-2 > div.btn-center > a')
    )
)
confirm_btn.click()  # click "View more"
driver.switch_to.window(driver.window_handles[1])
driver.find_element(By.XPATH, '//*[@id="currengpostion"]/div/div/ul[2]/li[1]/a').click()  # click "Default sort"
b = 0  # stays 0 until the first CSV write, which also writes the header
for l in range(2, 101):  # pagination loop
    driver.switch_to.window(driver.window_handles[1])  # switch to the list window
    sleep(1)
    if l <= 7:
        driver.find_element(By.XPATH, '//*[@id="listpagination"]/a[{}]'.format(l)).click()  # click the page link
    else:  # past the sixth page the link to click stays at a[6]
        driver.find_element(By.XPATH, '//*[@id="listpagination"]/a[6]').click()
    sleep(1)
    print('Scraping page {}'.format(l - 1))
    for i in range(1, 57):  # 56 listings per page
        driver.switch_to.window(driver.window_handles[1])  # switch to the list window
        while True:  # retry so we never switch before the new window has opened
            sleep(1)
            driver.find_element(By.XPATH, '//*[@class="viewlist_ul"]/li[{}]'.format(i)).click()
            driver.refresh()  # refresh the page
            sleep(2)
            if len(driver.window_handles) == 3:  # the detail window is open
                driver.switch_to.window(driver.window_handles[2])
                break
        print('Scraping item {}'.format(i))
        html = etree.HTML(driver.page_source)
        # Title
        list_title = html.xpath('/html/body/div[5]/div[2]/h3//text()')
        title = [list_title[0].strip()]
        # Price: try each known page layout and strip the currency symbol
        span = html.xpath('/html/body/div[5]/div[2]/div[2]/span//text()')
        overlay = html.xpath('//*[@id="overlayPrice"]//text()')
        if span:
            price = [(span[0] + '万').strip('¥')]  # 万 = ten thousand yuan
        elif overlay:
            price = [(overlay[0] + '万').strip('¥')]
        else:
            price = [html.xpath('/html/body/div[5]/div[2]/div[2]/div[1]/div/text()')[0].strip(' ¥')]
        # print(price)
        # Other fields
        base = '/html/body/div[5]/div[2]/ul/li[{}]/h4/text()'
        licheng = [html.xpath(base.format(1))[0]]           # mileage
        time = [html.xpath(base.format(2))[0]]              # registration date
        cangshu = [html.xpath(base.format(3))[0]]           # gear / displacement
        location = [html.xpath(base.format(4))[0]]          # vehicle location
        xianding = [html.xpath(base.format(5))[0].strip()]  # transfer-restricted region
        # Columns: name, price, displayed mileage, registration date,
        # gear / displacement, vehicle location, transfer-restricted region
        data = pd.DataFrame({'名称': title, '价格': price, '表显里程': licheng, '上牌时间': time, "挡位 / 排量": cangshu, '车辆所在地': location, '限迁地': xianding})
        # print(data)
        # Append to the CSV without the index; only the first write includes the header.
        # GB18030 keeps the Chinese text from being garbled.
        data.to_csv(r"C:\Users\Crown\Desktop\1.csv", index=False, na_rep='0', header=(b == 0), encoding='GB18030', mode='a')
        b = 1
        sleep(2)
        driver.close()  # close the detail window
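The header flag `b` only works within a single run: restarting the script appends a second header to the same file. A hedged alternative is to decide from the file itself whether a header is needed, sketched below with the standard `csv` module instead of pandas. The function name `append_row` and the `FIELDS` constant are hypothetical; the column names and the GB18030 encoding are the ones used in the script above.

```python
import csv
import os

FIELDS = ['名称', '价格', '表显里程', '上牌时间', '挡位 / 排量', '车辆所在地', '限迁地']


def append_row(path, row, fields=FIELDS, encoding='GB18030'):
    """Append one dict to a CSV file, writing the header only if the file is new or empty."""
    need_header = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, 'a', newline='', encoding=encoding) as f:
        # restval fills missing columns with '0', similar to na_rep='0' above
        writer = csv.DictWriter(f, fieldnames=fields, restval='0')
        if need_header:
            writer.writeheader()
        writer.writerow(row)
```

Checking the file size rather than a counter means an interrupted run can be resumed without duplicating the header row.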
