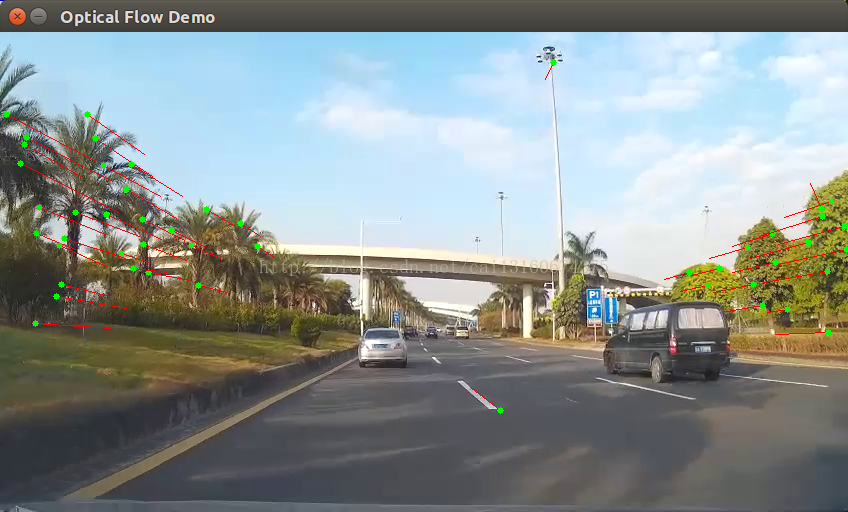

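First, an implementation of an optical flow algorithm; the complete listing is given below. The key function is `goodFeaturesToTrack`, which detects corners using either the Harris or the Shi-Tomasi criterion. Roughly speaking, it measures how much the content of a small window changes when the window is shifted in each direction; a large change in every direction indicates a corner.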
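To make the "window change in every direction" idea concrete, here is a minimal, dependency-free sketch of the two corner scores. It is not how OpenCV implements `goodFeaturesToTrack`; the names `CornerScores` and `cornerScores`, the central-difference gradients, and the defaults `r = 1`, `k = 0.04` are assumptions made for illustration. The sketch sums gradient products over a window into a 2x2 structure tensor and derives the Shi-Tomasi score (its smaller eigenvalue) and the Harris score from it.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical helper for illustration only; this is not OpenCV API.
struct CornerScores {
    double shiTomasi;  // smaller eigenvalue of the structure tensor M
    double harris;     // det(M) - k * trace(M)^2
};

// Scores the (2r+1)x(2r+1) window centred at (cx, cy) of a row-major
// grayscale image. Gradients are central differences, so the window must
// stay at least one pixel away from the image border.
CornerScores cornerScores(const std::vector<float>& img, int width, int height,
                          int cx, int cy, int r = 1, double k = 0.04)
{
    assert(static_cast<int>(img.size()) == width * height);
    assert(cx - r >= 1 && cx + r <= width - 2);
    assert(cy - r >= 1 && cy + r <= height - 2);

    // Structure tensor M = [a b; b c], summed over the window.
    double a = 0.0, b = 0.0, c = 0.0;
    for (int y = cy - r; y <= cy + r; ++y) {
        for (int x = cx - r; x <= cx + r; ++x) {
            const double ix = 0.5 * (img[y * width + x + 1] - img[y * width + x - 1]);
            const double iy = 0.5 * (img[(y + 1) * width + x] - img[(y - 1) * width + x]);
            a += ix * ix;
            b += ix * iy;
            c += iy * iy;
        }
    }

    const double tr = a + c;
    const double det = a * c - b * b;
    // Eigenvalues of a symmetric 2x2 matrix: tr/2 +- sqrt(tr^2/4 - det).
    const double disc = std::sqrt(std::max(0.0, tr * tr / 4.0 - det));
    return CornerScores{tr / 2.0 - disc, det - k * tr * tr};
}
```

On a flat region both scores are zero, on a straight edge the smaller eigenvalue stays near zero (the window only changes when shifted across the edge), and at a corner both eigenvalues are large. A detector would keep the points whose score exceeds some fraction of the best score in the image, which is the role the quality-level argument of `goodFeaturesToTrack` plays.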

    #include <iostream>
    #include <vector>
    #include <cstdio>
    #include <opencv2/opencv.hpp>

    using namespace cv;
    using namespace std;

    void OpticalFlow(Mat &frame, Mat &result);
    bool addNewPoints();
    bool acceptTrackedPoint(int i);

    Mat curgray;                // current frame (grayscale)
    Mat pregray;                // previous frame (grayscale)
    vector<Point2f> point[2];   // point[0]: previous feature positions, point[1]: new positions
    vector<Point2f> initPoint;  // positions where the tracked points were first detected
    vector<Point2f> features;   // newly detected features
    int maxCount = 300;         // maximum number of features to detect
    double qLevel = 0.1;        // quality level for feature detection
    double minDist = 20.0;      // minimum distance between two features
    vector<uchar> status;       // per-feature tracking status: 1 if the flow was found, 0 otherwise
    vector<float> err;          // per-feature tracking error

    // Build: g++ pyrLK.cpp -o pyrLK `pkg-config --cflags --libs opencv`

    int main()
    {
        Mat matSrc;
        Mat matRst;

        VideoCapture cap("/home/caixing/Videos/car2.mov");
        //cap.open(0);
        if (!cap.isOpened())
        {
            cout << "Error : cannot open the video!" << endl;
            return -1;
        }
        int totalFrameNumber = (int)cap.get(CAP_PROP_FRAME_COUNT);

        // perform the tracking process
        printf("Start the tracking process, press ESC to quit.\n");
        for (int nFrmNum = 0; nFrmNum < totalFrameNumber; nFrmNum++) {
        // while(1){
            // get frame from the video
            double t = (double)getTickCount();
            cap >> matSrc;
            if (matSrc.empty())
            {
                cout << "Error : Get picture is empty!" << endl;
                break;
            }
            OpticalFlow(matSrc, matRst);
            //cout << "This picture is " << nFrmNum << endl;
            t = (double)getTickCount() - t;
            //cout << "cost time: " << t * 1000. / getTickFrequency() << " ms" << endl;

            // Any key advances to the next frame; ESC quits.
            if (waitKey(0) == 27) break;
            //if (waitKey(1) == 27) break;  // use this line instead for continuous playback
        }
        return 0;
    }

    // Ask for new feature points once 30 or fewer are still being tracked.
    bool addNewPoints()
    {
        return point[0].size() <= 30;
    }

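    // Track the feature points from the previous frame into `frame` and draw the tracks on `result`.
    void OpticalFlow(Mat &frame, Mat &result)
    {
        cvtColor(frame, curgray, COLOR_BGR2GRAY);
        frame.copyTo(result);

        // Top up the tracked set with freshly detected corners when too few remain.
        if (addNewPoints())
        // if (1)
        {
            goodFeaturesToTrack(curgray, features, maxCount, qLevel, minDist);
            // point[0]=features;
            // initPoint=features;
            point[0].insert(point[0].end(), features.begin(), features.end());
            initPoint.insert(initPoint.end(), features.begin(), features.end());
        }

        // First frame: there is no previous image yet, so track against the frame itself.
        if (pregray.empty())
        {
            curgray.copyTo(pregray);
        }

        // Nothing to track, e.g. no corners were found in a blank frame.
        if (point[0].empty())
        {
            swap(pregray, curgray);
            imshow("Optical Flow Demo", result);
            return;
        }

        calcOpticalFlowPyrLK(pregray, curgray, point[0], point[1], status, err, Size(7, 7));
        //cout<<point[0].size()<<" "<<point[1].size()<<" "<<status.size()<<" "<<err.size()<<endl;

        // Compact both arrays in place, keeping only the accepted points.
        int k = 0;
        for (size_t i = 0; i < point[1].size(); i++)
        {
            if (acceptTrackedPoint(i))
            {
                initPoint[k] = initPoint[i];
                point[1][k++] = point[1][i];
            }
        }
        point[1].resize(k);
        initPoint.resize(k);

        // Draw a line from each point's initial position to its current position.
        for (size_t i = 0; i < point[1].size(); i++)
        {
            line(result, initPoint[i], point[1][i], Scalar(0, 0, 255));
            circle(result, point[1][i], 3, Scalar(0, 255, 0), -1);
        }

        // The current points and image become the "previous" ones for the next frame.
        swap(point[1], point[0]);
        swap(pregray, curgray);
        imshow("Optical Flow Demo", result);
        //waitKey(50);
    }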

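    // Keep a point only if it was tracked successfully and moved by more than
    // 2 pixels (sum of |dx| and |dy|), which discards points that stand still.
    bool acceptTrackedPoint(int i)
    {
        return status[i] && ((abs(point[0][i].x - point[1][i].x) + abs(point[0][i].y - point[1][i].y)) > 2);
    }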
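The tracking itself is delegated to `calcOpticalFlowPyrLK`, which runs an iterative Lucas-Kanade tracker over an image pyramid. As a rough illustration of the core idea only, the sketch below performs a single Lucas-Kanade step on one window at one scale, with no pyramid and no iteration. It is not OpenCV's implementation; the names `Flow` and `lucasKanadeStep`, the central-difference gradients, and the `1e-9` determinant threshold are assumptions made for the example.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical result type for illustration only; this is not OpenCV API.
struct Flow {
    double u;  // estimated displacement in x
    double v;  // estimated displacement in y
    bool ok;   // false when the window does not constrain the motion
};

// One Lucas-Kanade step for the (2r+1)x(2r+1) window centred at (cx, cy).
// It solves, in the least-squares sense over the window,
//     Ix * u + Iy * v + It = 0
// which leads to the 2x2 system [a b; b c] [u v]^T = -[p q]^T.
Flow lucasKanadeStep(const std::vector<float>& prev, const std::vector<float>& cur,
                     int width, int height, int cx, int cy, int r)
{
    assert(prev.size() == cur.size());
    assert(static_cast<int>(prev.size()) == width * height);
    assert(cx - r >= 1 && cx + r <= width - 2);
    assert(cy - r >= 1 && cy + r <= height - 2);

    double a = 0.0, b = 0.0, c = 0.0, p = 0.0, q = 0.0;
    for (int y = cy - r; y <= cy + r; ++y) {
        for (int x = cx - r; x <= cx + r; ++x) {
            const int i = y * width + x;
            const double ix = 0.5 * (prev[i + 1] - prev[i - 1]);          // spatial gradient in x
            const double iy = 0.5 * (prev[i + width] - prev[i - width]);  // spatial gradient in y
            const double it = cur[i] - prev[i];                           // temporal difference
            a += ix * ix;
            b += ix * iy;
            c += iy * iy;
            p += ix * it;
            q += iy * it;
        }
    }

    // A (near-)singular matrix means the window is flat or contains a single
    // edge direction, so the motion cannot be determined (aperture problem).
    const double det = a * c - b * b;
    if (det < 1e-9) {
        return Flow{0.0, 0.0, false};
    }
    return Flow{(-c * p + b * q) / det, (b * p - a * q) / det, true};
}
```

The 2x2 matrix here is the same structure tensor that corner detection scores, which is why the listing above feeds `goodFeaturesToTrack` output into the tracker: corners are the points where this system is well conditioned. The `ok` flag plays a role loosely comparable to the `status` vector in the listing. A single step like this is only valid for small displacements, which is the limitation the pyramid and the iterations in the real function address.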

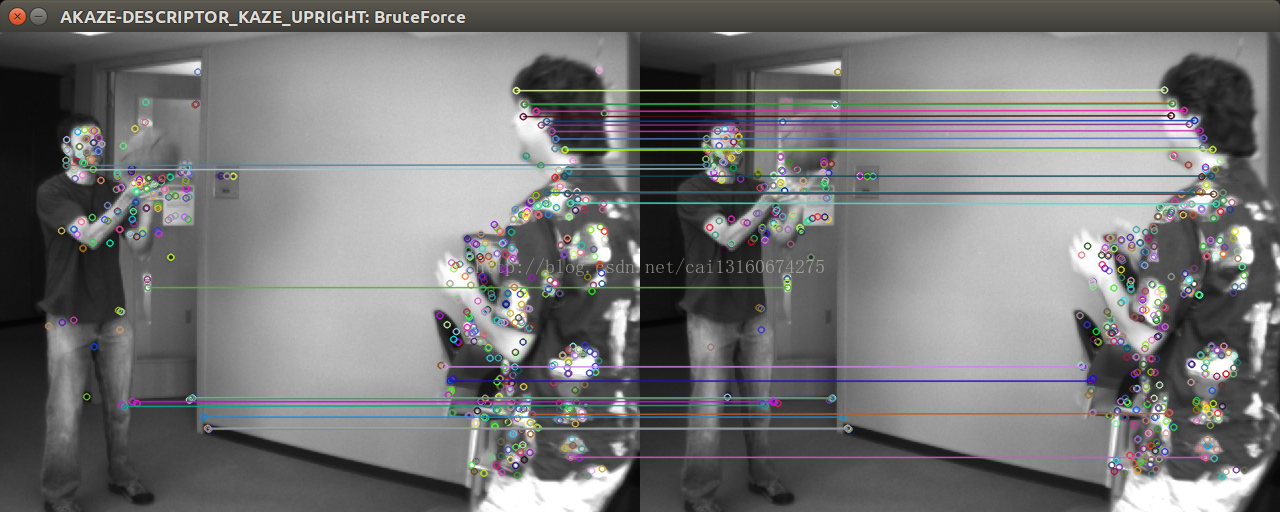

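The OpenCV samples also include code that matches feature points between two images. opencv-3.2.0/samples/cpp/matchmethod_orb_akaze_brisk.cpp compares several feature detection algorithms and then matches the results. The main interface is as follows: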

    Ptr<Feature2D> b;
    if (*itDesc == "AKAZE-DESCRIPTOR_KAZE_UPRIGHT"){
        b = AKAZE::create(AKAZE::DESCRIPTOR_KAZE_UPRIGHT);
    }
    if (*itDesc == "AKAZE"){
        b = AKAZE::create();
    }
    if (*itDesc == "ORB"){
        b = ORB::create();
    }
    else if (*itDesc == "BRISK"){
        b = BRISK::create();
    }
    try
    {
        // We can detect keypoint with detect method
        b->detect(img1, keyImg1, Mat());
        // and compute their descriptors with method compute
        b->compute(img1, keyImg1, descImg1);
        // or detect and compute descriptors in one step
        b->detectAndCompute(img2, Mat(), keyImg2, descImg2, false);
        // ... (the excerpt stops here; the sample then matches the descriptors)
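ORB, BRISK, and AKAZE with its default descriptor type all produce binary descriptors, which are usually compared by Hamming distance (in OpenCV, `BFMatcher` with `NORM_HAMMING` does this). The following dependency-free sketch shows what such a brute-force match amounts to. The names `Descriptor`, `Match`, `hammingDistance`, and `matchBruteForce` are hypothetical and only loosely mirror OpenCV's `DMatch` fields; it is an illustration under those assumptions, not the sample's code.

```cpp
#include <bitset>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

// A binary descriptor stored as bytes (hypothetical type for illustration).
using Descriptor = std::vector<std::uint8_t>;

// Number of differing bits between two descriptors of equal length.
int hammingDistance(const Descriptor& a, const Descriptor& b)
{
    assert(a.size() == b.size());
    int distance = 0;
    for (std::size_t i = 0; i < a.size(); ++i) {
        distance += static_cast<int>(std::bitset<8>(a[i] ^ b[i]).count());
    }
    return distance;
}

// Hypothetical match record, loosely modelled on the fields of cv::DMatch.
struct Match {
    int queryIdx;  // index into the first descriptor set
    int trainIdx;  // index of its nearest neighbour in the second set
    int distance;  // Hamming distance between the two
};

// For every query descriptor, find the closest train descriptor by
// exhaustive search. Ties are resolved in favour of the lower train index.
std::vector<Match> matchBruteForce(const std::vector<Descriptor>& query,
                                   const std::vector<Descriptor>& train)
{
    std::vector<Match> matches;
    if (train.empty()) {
        return matches;
    }
    for (std::size_t q = 0; q < query.size(); ++q) {
        int best = std::numeric_limits<int>::max();
        int bestIdx = -1;
        for (std::size_t t = 0; t < train.size(); ++t) {
            const int d = hammingDistance(query[q], train[t]);
            if (d < best) {
                best = d;
                bestIdx = static_cast<int>(t);
            }
        }
        matches.push_back(Match{static_cast<int>(q), bestIdx, best});
    }
    return matches;
}
```

In the snippet above, the rows of `descImg1` and `descImg2` would take the place of `query` and `train`. Note that `AKAZE::DESCRIPTOR_KAZE_UPRIGHT` yields floating-point descriptors, for which an L2 distance is the appropriate choice instead of Hamming distance.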
