A collection of 25 classic machine learning papers. If you need them, feel free to download them with points.

The paper "Efficient sparse coding algorithms" comes with code.

[1] Zheng S, Kwok J T. Follow the moving leader in deep learning[C]//Proceedings of the 34th International Conference on Machine Learning-Volume 70. JMLR. org, 2017: 4110-4119.

[2] Kalai A, Vempala S. Efficient algorithms for online decision problems[J]. Journal of Computer and System Sciences, 2005, 71(3): 291-307.

[3] Kingma, D. and Ba, J. Adam: A method for stochastic optimization. In Proceedings of the International Conference for Learning Representations, 2015.

[4] Lee H, Battle A, Raina R, et al. Efficient sparse coding algorithms[C]//Advances in neural information processing systems. 2007: 801-808.

[5] Fan J, Ding L, Chen Y, et al. Factor Group-Sparse Regularization for Efficient Low-Rank Matrix Recovery[J]. 2019.

[6] Z. Lai, Y. Chen, J. Wu, W. W. Keung, and F. Shen, “Jointly sparse hashing for image retrieval,” IEEE Transactions on Image Processing, vol. 27, no. 12, pp. 6147–6158, 2018.

[7] Z. Zhang, Y. Chen, and V. Saligrama, “Efficient training of very deep neural networks for supervised hashing,” in Proc. IEEE Conf. Computer Vision and Pattern Recognition, 2016, pp. 1487–1495.

[8] Wei-Shi Zheng, Shaogang Gong, Tao Xiang. Person re-identification by probabilistic relative distance comparison[C]// CVPR 2011. IEEE, 2011.

[9] Liao S, Hu Y, Zhu X, et al. Person re-identification by local maximal occurrence representation and metric learning[C]//Proceedings of the IEEE conference on computer vision and pattern recognition. 2015: 2197-2206.

[10] Liu X, Li H, Shao J, et al. Show, tell and discriminate: Image captioning by self-retrieval with partially labeled data[C]//Proceedings of the European Conference on Computer Vision (ECCV). 2018: 338-354.

[11] Yao T, Pan Y, Li Y, et al. Exploring visual relationship for image captioning[C]//Proceedings of the European conference on computer vision (ECCV). 2018: 684-699.

[12] Chao Dong, Chen Change Loy, Kaiming He, and Xiaoou Tang, "Image Super-Resolution Using Deep Convolutional Networks," IEEE Transactions on Pattern Analysis and Machine Intelligence, Preprint, 2015.

[13] M. D. Zeiler, D. Krishnan, G. W. Taylor, and R. Fergus, "Deconvolutional networks," in Proc. IEEE Comput. Soc. Conf. Comput. Vision Pattern Recog., 2010, pp. 2528-2535.

[14] Girshick R, Donahue J, Darrell T, et al. Rich feature hierarchies for accurate object detection and semantic segmentation[C]//Proceedings of the IEEE conference on computer vision and pattern recognition. 2014: 580-587.

[15] Girshick R. Fast R-CNN[C]//Proceedings of the IEEE international conference on computer vision. 2015: 1440-1448.

[16] Joseph Redmon, Santosh Divvala, Ross Girshick, et al. You Only Look Once: Unified, Real-Time Object Detection[C]// 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR). IEEE, 2016.

[17] LeCun Y, Bottou L, Bengio Y, et al. Gradient-based learning applied to document recognition[J]. Proceedings of the IEEE, 1998, 86(11): 2278-2324.

[18] Hinton G E, Salakhutdinov R R. Reducing the dimensionality of data with neural networks[J]. Science, 2006, 313(5786): 504-507.

[19] Krizhevsky A, Sutskever I, Hinton G E. Imagenet classification with deep convolutional neural networks[C]//Advances in neural information processing systems. 2012: 1097-1105.

[20] Zeiler M D, Fergus R. Visualizing and understanding convolutional networks[C]//European conference on computer vision. Springer, Cham, 2014: 818-833.

[21] Szegedy C, Liu W, Jia Y, et al. Going deeper with convolutions[C]//Proceedings of the IEEE conference on computer vision and pattern recognition. 2015: 1-9.

[22] Wu, Y., & He, K. (2018). Group normalization. In Proceedings of the European Conference on Computer Vision (ECCV) (pp. 3-19).

[23] Goodfellow I, Pouget-Abadie J, Mirza M, et al. Generative adversarial nets[C]//Advances in Neural Information Processing Systems. 2014: 2672-2680.

[24] Tran, L., Yin, X., & Liu, X. (2017). Disentangled representation learning gan for pose-invariant face recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 1415-1424).

[25] Pu, Y., Gan, Z., Henao, R., Yuan, X., Li, C., Stevens, A., & Carin, L. (2016). Variational autoencoder for deep learning of images, labels and captions. In Advances in neural information processing systems (pp. 2352-2360).

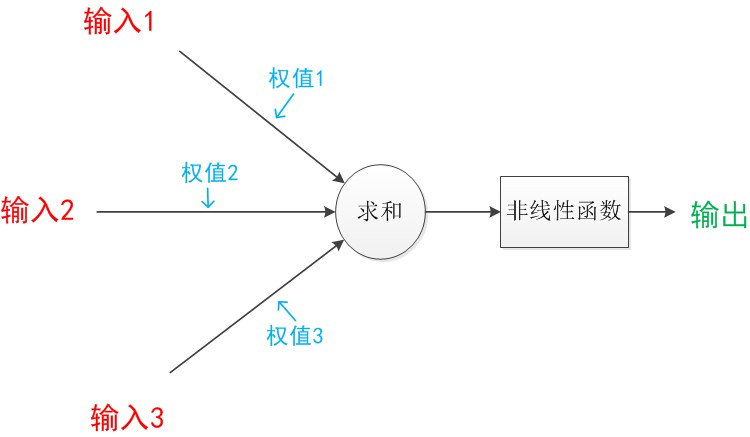

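This blog post introduces the concept of end-to-end deep learning, explains how neural networks work, and examines convolutional neural networks (CNNs) in detail, including the roles of convolutional, pooling, and dense layers in image processing. It also covers the importance of the loss function in model training and the process of image convolution. Finally, it discusses how depth maps and stereo-vision depth estimation are applied in computer vision.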
