推荐新版安装教程

http://blog.csdn.net/chenhaifeng2016/article/details/78874883

在ubuntu 16.04上安装cuda8.0和cudnn 5.1,请参考以下内容

http://blog.csdn.net/chenhaifeng2016/article/details/68957732

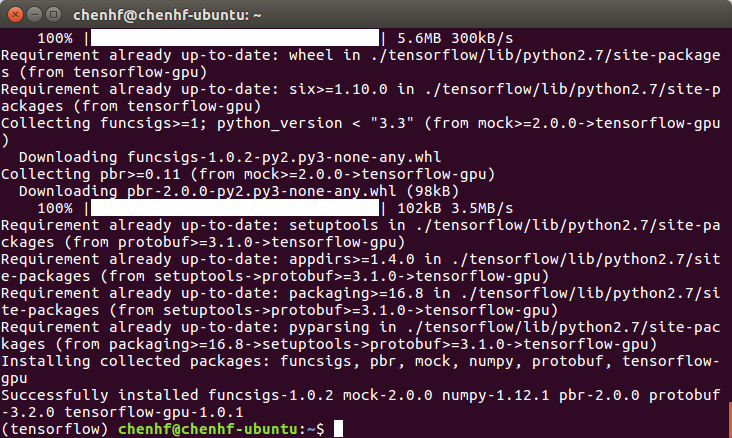

安装TensorFlow

sudo apt-get install libcupti-dev

sudo apt-get install python-pip python-dev python-virtualenv

virtualenv --system-site-packages ~/tensorflow

source ~/tensorflow/bin/activate

pip install --upgrade tensorflow-gpu

测试TensorFlow

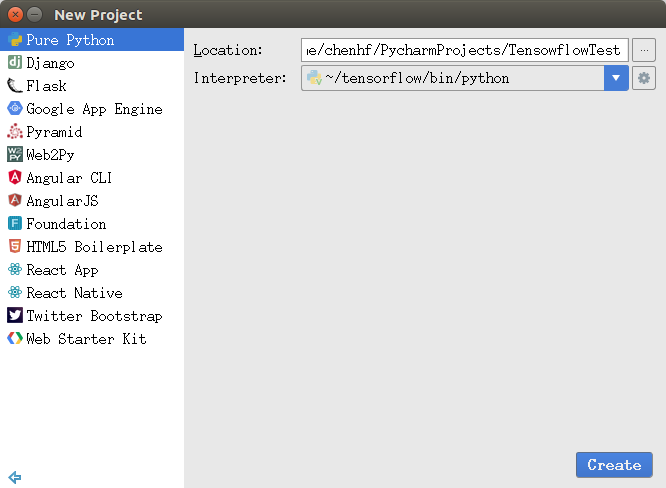

通过Pycharm创建测试工程

from tensorflow.examples.tutorials.mnist import input_data import tensorflow as tf global mnist #远程下载MNIST数据,建议先下载好并保存在MNIST_data目录下 def DownloadData(): global mnist mnist = input_data.read_data_sets("MNIST_data/", one_hot=True) #编码格式:one-hot print(mnist.train.images.shape, mnist.train.labels.shape) print(mnist.test.images.shape, mnist.test.labels.shape) print(mnist.validation.images.shape, mnist.validation.labels.shape) def Train(): sess = tf.InteractiveSession() #Step 1 #定义算法公式Softmax Regression x = tf.placeholder(tf.float32, [None, 784]) #构建占位符,代表输入的图像,None表示样本的数量可以是任意的 W = tf.Variable(tf.zeros([784,10])) #构建一个变量,代表训练目标weights,初始化为0 b = tf.Variable(tf.zeros([10])) #构建一个变量,代表训练目标biases,初始化为0 y = tf.nn.softmax(tf.matmul(x, W) + b) #构建了一个softmax的模型:y = softmax(Wx + b),y指样本标签的预测值 #Step 2 #定义损失函数,选定优化器,并指定优化器优化损失函数 y_ = tf.placeholder(tf.float32, [None, 10]) # 构建占位符,代表样本标签的真实值 # 交叉熵损失函数 cross_entropy = -tf.reduce_sum(y_ * tf.log(y)) #y = tf.matmul(x, W) + b #cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y)) # 使用梯度下降法(0.01的学习率)来最小化这个交叉熵损失函数 train_step = tf.train.GradientDescentOptimizer(0.01).minimize(cross_entropy) #Step 3 #使用随机梯度下降训练数据 tf.global_variables_initializer().run() for i in range(1000): #迭代次数为1000 batch_xs, batch_ys = mnist.train.next_batch(100) #使用minibatch的训练数据,一个batch的大小为100 sess.run(train_step, feed_dict={x: batch_xs, y_: batch_ys}) #用训练数据替代占位符来执行训练 #Step 4 #在测试集上对准确率进行评测 correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1)) #tf.argmax()返回的是某一维度上其数据最大所在的索引值,在这里即代表预测值和真值 accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32)) #用平均值来统计测试准确率 print(sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels})) #打印测试信息 sess.close() if __name__ == '__main__': DownloadData(); Train();

运行效果

/home/chenhf/tensorflow/bin/python /home/chenhf/PycharmProjects/TensowflowTest/MnistDemo.py

I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcublas.so.8.0 locally

I tensorflow/stream_executor/dso_loader.cc:126] Couldn't open CUDA library libcudnn.so.5. LD_LIBRARY_PATH: /home/chenhf/pycharm-2017.1/bin:/usr/local/cuda-8.0/lib64:

I tensorflow/stream_executor/cuda/cuda_dnn.cc:3517] Unable to load cuDNN DSO

I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcufft.so.8.0 locally

I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcuda.so.1 locally

I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcurand.so.8.0 locally

Extracting MNIST_data/train-images-idx3-ubyte.gz

Extracting MNIST_data/train-labels-idx1-ubyte.gz

Extracting MNIST_data/t10k-images-idx3-ubyte.gz

Extracting MNIST_data/t10k-labels-idx1-ubyte.gz

((55000, 784), (55000, 10))

W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE3 instructions, but these are available on your machine and could speed up CPU computations.

((10000, 784), (10000, 10))

((5000, 784), (5000, 10))

W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.1 instructions, but these are available on your machine and could speed up CPU computations.

W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.2 instructions, but these are available on your machine and could speed up CPU computations.

W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX instructions, but these are available on your machine and could speed up CPU computations.

W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX2 instructions, but these are available on your machine and could speed up CPU computations.

W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use FMA instructions, but these are available on your machine and could speed up CPU computations.

I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:910] successful NUMA node read from SysFS had negative value (-1), but there must be at least one NUMA node, so returning NUMA node zero

I tensorflow/core/common_runtime/gpu/gpu_device.cc:885] Found device 0 with properties:

name: GeForce GTX 765M

major: 3 minor: 0 memoryClockRate (GHz) 0.8625

pciBusID 0000:01:00.0

Total memory: 1.95GiB

Free memory: 1.93GiB

I tensorflow/core/common_runtime/gpu/gpu_device.cc:906] DMA: 0

I tensorflow/core/common_runtime/gpu/gpu_device.cc:916] 0: Y

I tensorflow/core/common_runtime/gpu/gpu_device.cc:975] Creating TensorFlow device (/gpu:0) -> (device: 0, name: GeForce GTX 765M, pci bus id: 0000:01:00.0)

0.9162

Process finished with exit code 0

准确率在91.6%

--结束--

本文介绍了如何在Ubuntu 16.04上安装CUDA 8.0、cuDNN 5.1以及TensorFlow,并提供了一段使用MNIST数据集测试TensorFlow的示例代码。测试结果显示,在GeForce GTX 765M上运行该模型,准确率达到了91.6%。

本文介绍了如何在Ubuntu 16.04上安装CUDA 8.0、cuDNN 5.1以及TensorFlow,并提供了一段使用MNIST数据集测试TensorFlow的示例代码。测试结果显示,在GeForce GTX 765M上运行该模型,准确率达到了91.6%。

2036

2036

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?