RNN Classifier – Name Classification

Dataset used in the experiment:

Link: https://pan.baidu.com/s/1EXEX3349JrWSuI-Bwp4Ywg Extraction code: vzk5

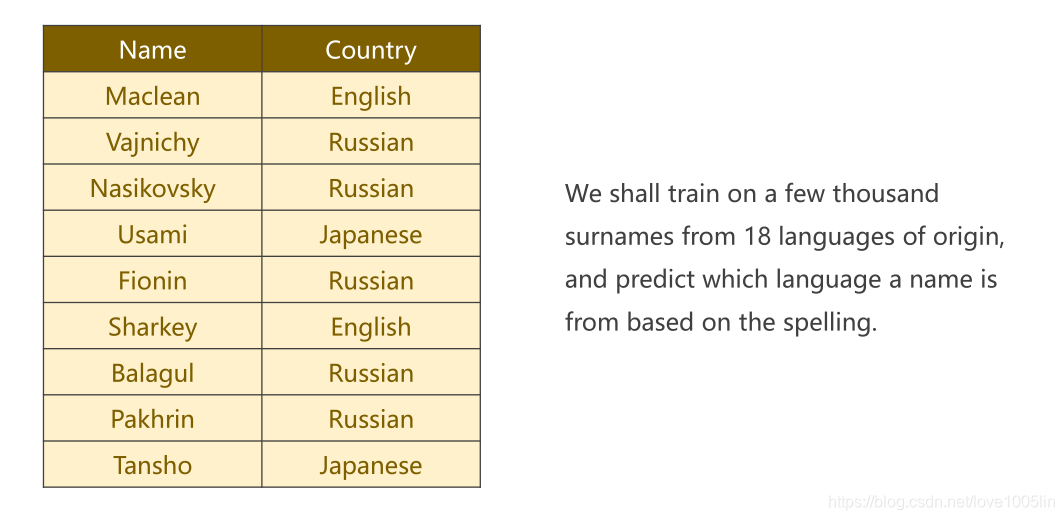

Goal: predict which language a given name belongs to (there are 18 languages in total). Examples are shown below:

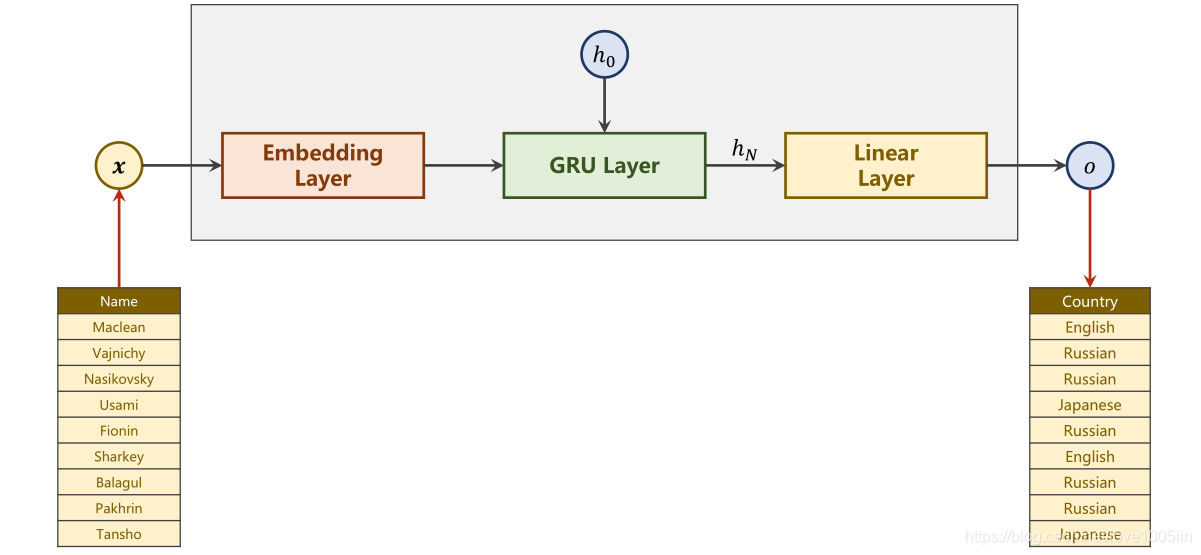

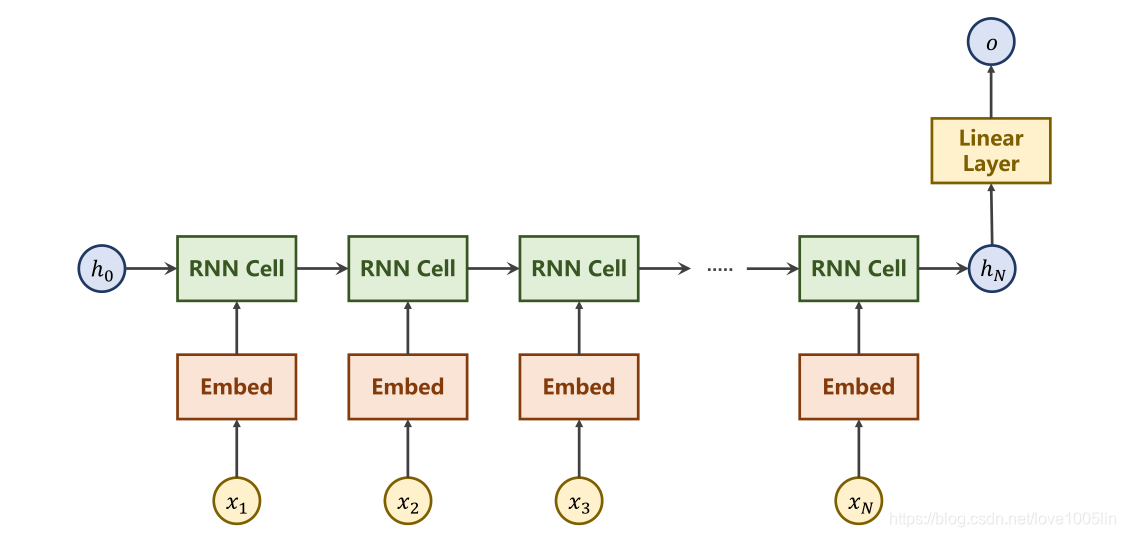

Model:

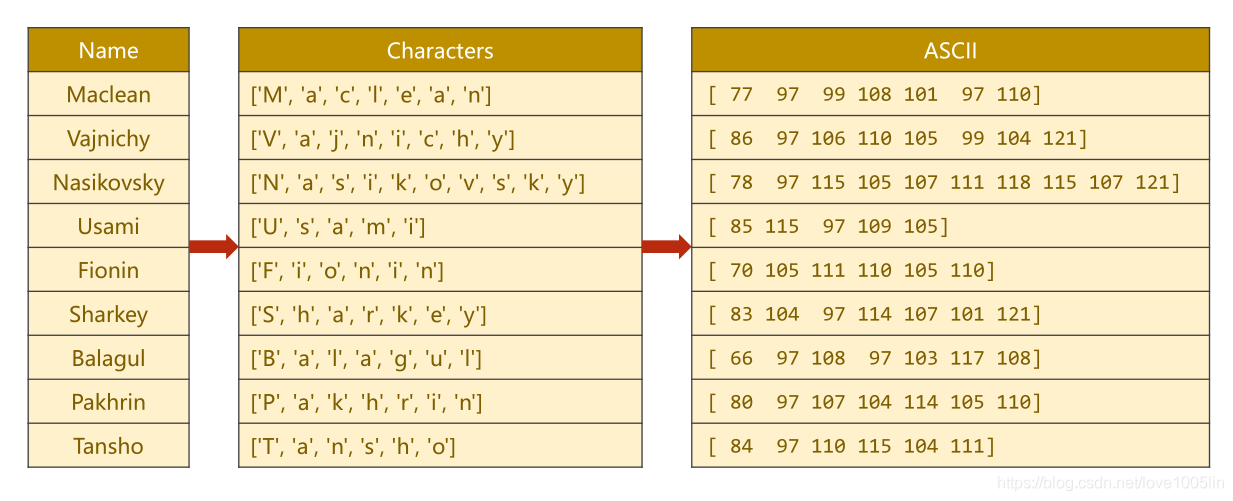

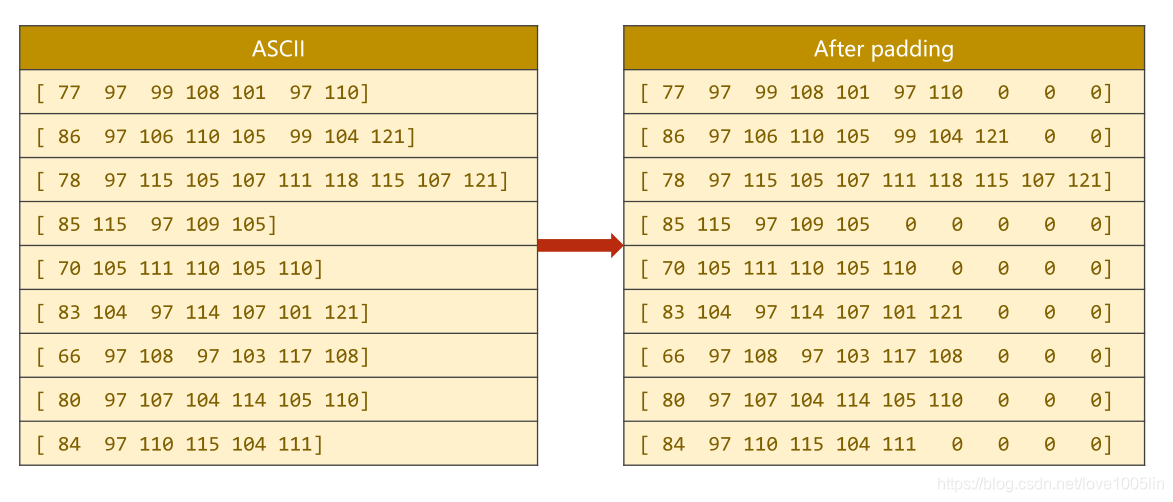

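1. Convert each name into its sequence of characters (stored as integer codes) so that a recurrent neural network can process it.

2. Use an embedding layer to map each character index to a dense vector.

3. Feed the embedded sequence into a GRU (a bidirectional recurrent network is used here).

4. Use a linear layer to produce an 18-dimensional output, one score per language, which determines the predicted class.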
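The four steps above can be traced as tensor shapes in a minimal PyTorch sketch. The batch size, sequence length, and hidden size below, as well as the variable names, are illustrative assumptions rather than values from the experiment; the 128-entry character vocabulary and the 18 output classes match the settings used later in this post.

```python
import torch

# Illustrative sizes (assumptions for this sketch only).
batch_size, seq_len, hidden_size = 3, 5, 8
n_chars, n_classes = 128, 18

# Step 1: names as integer character codes, shape (seq_len, batch_size).
chars = torch.randint(0, n_chars, (seq_len, batch_size))

# Step 2: the embedding adds a feature dimension -> (seq_len, batch_size, hidden_size).
embedding = torch.nn.Embedding(n_chars, hidden_size)
embedded = embedding(chars)

# Step 3: a one-layer bidirectional GRU returns one final hidden state per
# direction, shape (2, batch_size, hidden_size).
gru = torch.nn.GRU(hidden_size, hidden_size, num_layers=1, bidirectional=True)
_, hidden = gru(embedded)

# Step 4: concatenate both directions and project to one score per language.
fc = torch.nn.Linear(hidden_size * 2, n_classes)
logits = fc(torch.cat([hidden[-1], hidden[-2]], dim=1))

print(embedded.shape, hidden.shape, logits.shape)
```

The predicted language for each name is then the index of the largest of its 18 scores.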

Model flow chart:

Network model:

class RNNClassifier(torch.nn.Module):

def __init__(self, input_size, hidden_size, output_size, n_layers=1, bidirectional=True):

super(RNNClassifier, self).__init__()

self.hidden_size = hidden_size

self.n_layers = n_layers

self.n_directions = 2 if bidirectional else 1

self.embedding = torch.nn.Embedding(input_size, hidden_size)

self.gru = torch.nn.GRU(hidden_size, hidden_size, n_layers, bidirectional=bidirectional)

self.fc = torch.nn.Linear(hidden_size * self.n_directions, output_size)

def _init_hidden(self, batch_size):

hidden = torch.zeros(self.n_layers * self.n_directions, batch_size, self.hidden_size)

return create_tensor(hidden)

def forward(self, inputs, seq_lengths):

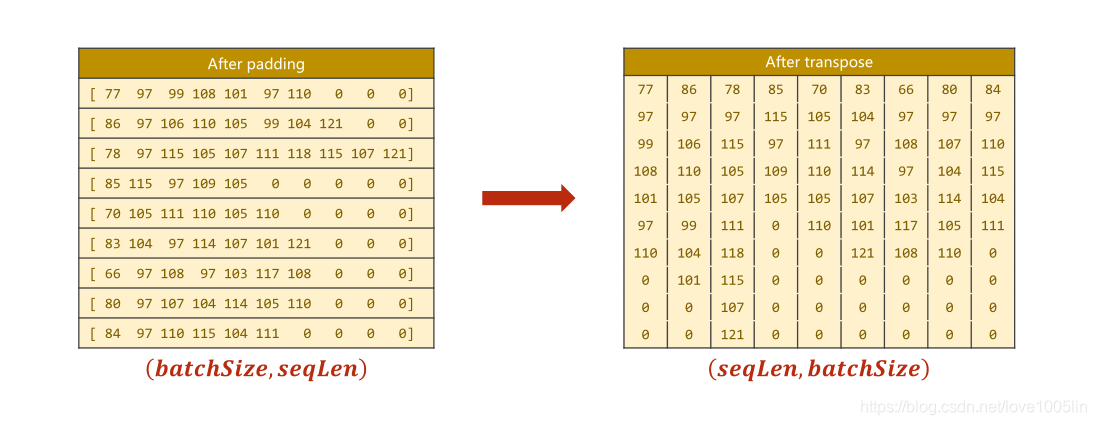

# input shape : B x S -> S x B

# print(type(inputs))

# print(inputs)

inputs = inputs.t()

# Save batch-size for make initial hidden.

batch_size = inputs.size(1)

# 构建 h0-> (nlayer*nDirections,batchSize,hiddenSize)

hidden = self._init_hidden(batch_size)

# embedding 的形状 (seqlen,batchsize,hiddensize)

embedding = self.embedding(inputs)

# pack them up 目的:快速进行整合 整体快速运算 It returns a PackedSquence object.

gru_input = pack_padded_sequence(embedding, seq_lengths)

# print(len(gru_input))

# 返回hidden (nlayer*nDirection,batchsize,hiddensize)

output, hidden = self.gru(gru_input, hidden)

# f we use bidirectional GRU, the forward hidden and backward hidden should be concatenate.

if self.n_directions == 2:

hidden_cat = torch.cat([hidden[-1], hidden[-2]], dim=1)

else:

hidden_cat = hidden[-1]

fc_output = self.fc(hidden_cat)

return fc_output
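To see what packing buys, the following pure-Python sketch counts how many sequences are still active at each time step. `pack_padded_sequence` stores an equivalent per-step count in the `batch_sizes` field of the `PackedSequence` it returns, which is how the GRU avoids processing padded positions. The helper name `packed_batch_sizes` and the example lengths are assumptions made for illustration.

```python
def packed_batch_sizes(lengths):
    """Return the number of still-active sequences at each time step.

    `lengths` must be sorted in descending order, which pack_padded_sequence
    requires by default (enforce_sorted=True).
    """
    if any(a < b for a, b in zip(lengths, lengths[1:])):
        raise ValueError("lengths must be sorted in descending order")
    max_len = lengths[0] if lengths else 0
    return [sum(1 for n in lengths if n > t) for t in range(max_len)]


lengths = [5, 3, 2]
sizes = packed_batch_sizes(lengths)
print(sizes)       # [3, 3, 2, 1, 1]
print(sum(sizes))  # 10 real time steps instead of 3 * 5 = 15 padded ones
```

This is also why the batch is sorted by length before it reaches `forward`: with descending lengths, the active sequences at every step form a prefix of the batch.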

Convert name to tensor:

# Convert name to tensor

def make_tensors(names, countries):

"""

:param names: 人名

:param countries: 人名->国家

:return:

"""

# 构建姓名字母列表

sequences_and_lengths = [name2list(name) for name in names]

name_sequences = [sl[0] for sl in sequences_and_lengths]

seq_lengths = torch.LongTensor([sl[1] for sl in sequences_and_lengths])

countries = countries.long()

# 做填充padding

# make tensor of name, BatchSize x SeqLen

seq_tensor = torch.zeros(len(name_sequences), seq_lengths.max()).long()

for idx, (seq, seq_len) in enumerate(zip(name_sequences, seq_lengths), 0):

seq_tensor[idx, :seq_len] = torch.LongTensor(seq)

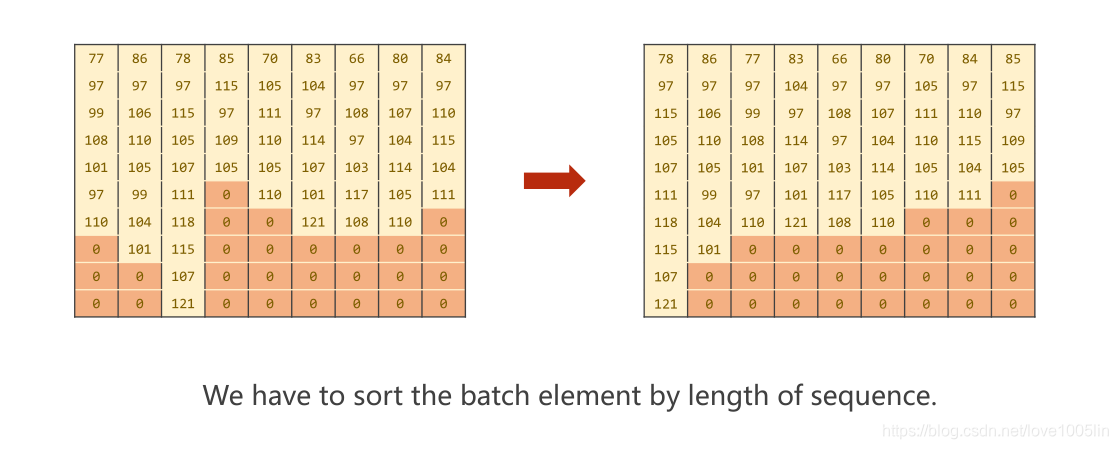

# sort by length to use pack_padded_sequence

seq_lengths, perm_idx = seq_lengths.sort(dim=0, descending=True)

seq_tensor = seq_tensor[perm_idx]

countries = countries[perm_idx]

return create_tensor(seq_tensor), create_tensor(seq_lengths), create_tensor(countries)
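The padding and sorting steps above can be followed on a tiny batch without PyTorch. The sketch below mirrors the logic of `make_tensors` with plain lists; the three names and their class indices are hypothetical and chosen only to make the reordering visible.

```python
def name2list(name):
    # Map each character to its code point and also return the length.
    codes = [ord(c) for c in name]
    return codes, len(codes)


names = ["Li", "Smith", "Kato"]   # hypothetical batch
labels = [0, 1, 2]                # hypothetical class indices

sequences = [name2list(n)[0] for n in names]
lengths = [len(s) for s in sequences]
max_len = max(lengths)

# Pad every sequence with zeros up to the longest name in the batch.
padded = [s + [0] * (max_len - len(s)) for s in sequences]

# Sort by length (descending) and apply the same permutation to the labels.
perm = sorted(range(len(names)), key=lambda i: lengths[i], reverse=True)
padded = [padded[i] for i in perm]
lengths = [lengths[i] for i in perm]
labels = [labels[i] for i in perm]

print(padded)   # [[83, 109, 105, 116, 104], [75, 97, 116, 111, 0], [76, 105, 0, 0, 0]]
print(lengths)  # [5, 4, 2]
print(labels)   # [1, 2, 0]
```

Applying one permutation to the names, the lengths, and the labels keeps every name paired with its own label after the sort.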

Dataset handling:

class NameDataset(Dataset):
    def __init__(self, is_train_set=True):
        super(NameDataset, self).__init__()
        filename = './names_train.csv.gz' if is_train_set else './names_test.csv.gz'
        # Each row of the gzip-compressed CSV is (name, country).
        with gzip.open(filename, 'rt') as f:
            reader = csv.reader(f)
            rows = list(reader)
        self.names = [row[0] for row in rows]
        self.len = len(self.names)
        self.countries = [row[1] for row in rows]
        self.country_list = list(sorted(set(self.countries)))  # set() removes duplicates
        self.country_dict = self.getCountryDict()
        self.country_num = len(self.country_list)

    def __getitem__(self, index):
        # Return the name string and the integer index of its country.
        return self.names[index], self.country_dict[self.countries[index]]

    def __len__(self):
        return self.len

    def getCountryDict(self):
        # Convert the country list into a dictionary: country name -> index.
        country_dict = dict()
        for idx, country_name in enumerate(self.country_list, 0):
            country_dict[country_name] = idx
        return country_dict

    def idx2country(self, index):
        return self.country_list[index]

    def getCountriesNum(self):
        return self.country_num


trainset = NameDataset(is_train_set=True)
trainloader = DataLoader(trainset, batch_size=BATCH_SIZE, shuffle=True)
testset = NameDataset(is_train_set=False)
testloader = DataLoader(testset, batch_size=BATCH_SIZE, shuffle=False)
N_COUNTRY = trainset.getCountriesNum()
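The reading and label-indexing logic of `NameDataset` can be tried without the real files. The sketch below writes a few hypothetical `name,country` rows into an in-memory gzip stream, reads them back with `gzip.open(..., 'rt')` and `csv.reader`, and builds the same country-to-index mapping. The rows are made up for illustration and are not taken from the dataset.

```python
import csv
import gzip
import io

# Hypothetical rows in the same "name,country" layout as the dataset files.
rows_in = [["Smith", "English"], ["Kato", "Japanese"],
           ["Li", "Chinese"], ["Jones", "English"]]

# Write a small gzip-compressed CSV into memory instead of onto disk.
buffer = io.BytesIO()
with gzip.open(buffer, "wt", newline="") as f:
    csv.writer(f).writerows(rows_in)

# Read it back the same way NameDataset does.
buffer.seek(0)
with gzip.open(buffer, "rt", newline="") as f:
    rows = list(csv.reader(f))

names = [row[0] for row in rows]
countries = [row[1] for row in rows]

# Sorting the de-duplicated labels makes the index assignment deterministic.
country_list = sorted(set(countries))
country_dict = {name: idx for idx, name in enumerate(country_list)}
targets = [country_dict[c] for c in countries]

print(country_dict)  # {'Chinese': 0, 'English': 1, 'Japanese': 2}
print(targets)       # [1, 2, 0, 1]
```

Because each `NameDataset` instance derives its mapping from its own file, the training and test indices agree only when both files contain the same set of countries.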

Complete experiment code:

import csv
import gzip
import math
import time

import matplotlib.pyplot as plt
import numpy as np
import torch
from torch.nn.utils.rnn import pack_padded_sequence
from torch.utils.data import DataLoader, Dataset

# Data preparation
# Parameters
HIDDEN_SIZE = 100
BATCH_SIZE = 256
N_LAYER = 2
N_EPOCHS = 100
N_CHARS = 128
USE_GPU = False

class NameDataset(Dataset):
    def __init__(self, is_train_set=True):
        super(NameDataset, self).__init__()
        filename = './names_train.csv.gz' if is_train_set else './names_test.csv.gz'
        with gzip.open(filename, 'rt') as f:
            reader = csv.reader(f)
            rows = list(reader)
        self.names = [row[0] for row in rows]
        self.len = len(self.names)
        self.countries = [row[1] for row in rows]
        self.country_list = list(sorted(set(self.countries)))  # set() removes duplicates
        self.country_dict = self.getCountryDict()
        self.country_num = len(self.country_list)

    def __getitem__(self, index):
        return self.names[index], self.country_dict[self.countries[index]]

    def __len__(self):
        return self.len

    def getCountryDict(self):
        country_dict = dict()
        for idx, country_name in enumerate(self.country_list, 0):
            country_dict[country_name] = idx
        return country_dict

    def idx2country(self, index):
        return self.country_list[index]

    def getCountriesNum(self):
        return self.country_num


trainset = NameDataset(is_train_set=True)
trainloader = DataLoader(trainset, batch_size=BATCH_SIZE, shuffle=True)
testset = NameDataset(is_train_set=False)
testloader = DataLoader(testset, batch_size=BATCH_SIZE, shuffle=False)
N_COUNTRY = trainset.getCountriesNum()

# Move a tensor to the GPU only when USE_GPU is set and CUDA is available,
# so that tensors and the model always live on the same device.
def create_tensor(tensor):
    device = torch.device('cuda:0' if USE_GPU and torch.cuda.is_available() else 'cpu')
    return tensor.to(device)


# Convert the characters of a name into their ASCII values.
def name2list(name):
    arr = [ord(c) for c in name]
    return arr, len(arr)


# Convert name to tensor
def make_tensors(names, countries):
    """
    :param names: names of people
    :param countries: the country (class index) of each name
    :return: padded name tensor, sequence lengths, and country indices,
             all sorted by sequence length in descending order
    """
    sequences_and_lengths = [name2list(name) for name in names]
    name_sequences = [sl[0] for sl in sequences_and_lengths]
    seq_lengths = torch.LongTensor([sl[1] for sl in sequences_and_lengths])
    countries = countries.long()

    # Padding: make a zero-filled tensor of names, BatchSize x SeqLen.
    seq_tensor = torch.zeros(len(name_sequences), seq_lengths.max()).long()
    for idx, (seq, seq_len) in enumerate(zip(name_sequences, seq_lengths), 0):
        seq_tensor[idx, :seq_len] = torch.LongTensor(seq)

    # Sort by length (descending) so that pack_padded_sequence can be used.
    seq_lengths, perm_idx = seq_lengths.sort(dim=0, descending=True)
    seq_tensor = seq_tensor[perm_idx]
    countries = countries[perm_idx]
    return create_tensor(seq_tensor), create_tensor(seq_lengths), create_tensor(countries)

class RNNClassifier(torch.nn.Module):
    def __init__(self, input_size, hidden_size, output_size, n_layers=1, bidirectional=True):
        super(RNNClassifier, self).__init__()
        self.hidden_size = hidden_size
        self.n_layers = n_layers
        self.n_directions = 2 if bidirectional else 1
        self.embedding = torch.nn.Embedding(input_size, hidden_size)
        self.gru = torch.nn.GRU(hidden_size, hidden_size, n_layers, bidirectional=bidirectional)
        self.fc = torch.nn.Linear(hidden_size * self.n_directions, output_size)

    def _init_hidden(self, batch_size):
        hidden = torch.zeros(self.n_layers * self.n_directions, batch_size, self.hidden_size)
        return create_tensor(hidden)

    def forward(self, inputs, seq_lengths):
        # Input shape: B x S -> S x B
        inputs = inputs.t()
        batch_size = inputs.size(1)
        hidden = self._init_hidden(batch_size)
        embedding = self.embedding(inputs)
        # Pack the padded batch so the GRU skips the padding.
        # pack_padded_sequence expects the lengths on the CPU.
        gru_input = pack_padded_sequence(embedding, seq_lengths.cpu())
        output, hidden = self.gru(gru_input, hidden)
        # Concatenate the final hidden states of both directions when bidirectional.
        if self.n_directions == 2:
            hidden_cat = torch.cat([hidden[-1], hidden[-2]], dim=1)
        else:
            hidden_cat = hidden[-1]
        fc_output = self.fc(hidden_cat)
        return fc_output

# Model training
def trainModel():
    """
    :return: the total loss accumulated over the epoch
    """
    total_loss = 0
    for i, (names, countries) in enumerate(trainloader, 1):
        inputs, seq_lengths, target = make_tensors(names, countries)
        output = classifier(inputs, seq_lengths)
        loss = criterion(output, target)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        total_loss += loss.item()
        # Report progress every 10 batches.
        if i % 10 == 0:
            print(f'[{time_since(start)}] Epoch {epoch} ', end='')
            print(f'[{i * len(inputs)}/{len(trainset)}] ', end='')
            print(f'loss={total_loss / (i * len(inputs))}')
    return total_loss


# Testing
def testModel():
    """
    :return: the accuracy on the test set
    """
    correct = 0
    total = len(testset)
    print("evaluating trained model ...")
    with torch.no_grad():
        for i, (names, countries) in enumerate(testloader, 1):
            inputs, seq_lengths, target = make_tensors(names, countries)
            output = classifier(inputs, seq_lengths)
            # The predicted class is the index of the largest score.
            pred = output.max(dim=1, keepdim=True)[1]
            correct += pred.eq(target.view_as(pred)).sum().item()

    percent = '%.2f' % (100 * correct / total)
    print(f'Test set: Accuracy {correct}/{total} {percent}%')
    return correct / total


def time_since(since):
    """
    :param since: start time as returned by time.time()
    :return: the time elapsed since `since`, formatted as minutes and seconds
    """
    s = time.time() - since
    m = math.floor(s / 60)
    s -= m * 60
    return '%dm %ds' % (m, s)

if __name__ == '__main__':
    classifier = RNNClassifier(N_CHARS, HIDDEN_SIZE, N_COUNTRY, N_LAYER)
    # Move the model to the GPU only when requested and available.
    if USE_GPU and torch.cuda.is_available():
        device = torch.device("cuda:0")
        classifier.to(device)

    criterion = torch.nn.CrossEntropyLoss()
    optimizer = torch.optim.Adam(classifier.parameters(), lr=0.001)

    start = time.time()
    print("Training for %d epochs..." % N_EPOCHS)
    acc_list = []
    for epoch in range(1, N_EPOCHS + 1):
        # Train cycle
        trainModel()
        acc = testModel()
        acc_list.append(acc)

    # Plot test accuracy against the epoch number.
    epochs = np.arange(1, len(acc_list) + 1, 1)
    acc_list = np.array(acc_list)
    plt.plot(epochs, acc_list)
    plt.xlabel('Epoch')
    plt.ylabel('Accuracy')
    plt.grid()
    plt.show()

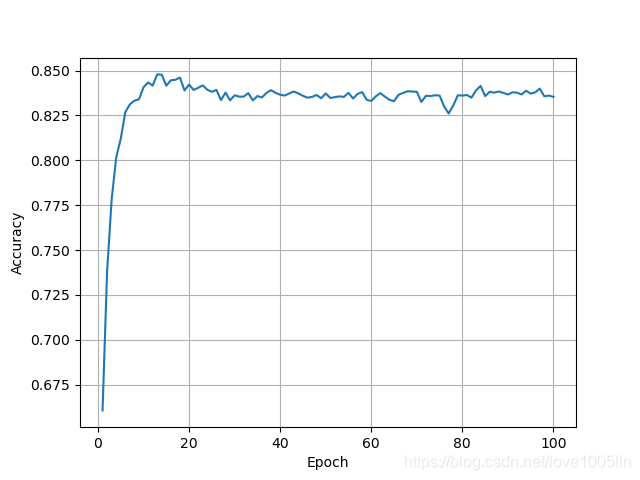

Results:

The test accuracy reached its maximum within the first 20 epochs, at close to 85%.
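One caveat about the complete code above: `name2list` passes `ord(c)` straight to an embedding that has only `N_CHARS = 128` rows, so any character with a code point of 128 or more would make the embedding lookup fail with an index error. Whether the dataset contains such characters is not stated in this post. As a hedged sketch, a helper (the name `to_ascii` is an assumption, and the sample names are made up) can strip accents with the standard `unicodedata` module before the conversion:

```python
import unicodedata


def to_ascii(name):
    # Decompose accented characters (NFD), then drop the combining marks
    # and anything else that is not plain ASCII.
    decomposed = unicodedata.normalize("NFD", name)
    return "".join(
        c for c in decomposed
        if unicodedata.category(c) != "Mn" and ord(c) < 128
    )


def name2list(name):
    codes = [ord(c) for c in to_ascii(name)]
    return codes, len(codes)


print(to_ascii("Ślusàrski"))  # Slusarski
print(name2list("José"))      # ([74, 111, 115, 101], 4)
```

Characters that have no ASCII decomposition are dropped entirely by this helper, so it trades some information for a guarantee that every index stays below 128.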
