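Preface: Adding an attention mechanism is reported to improve object detection, so this post starts a series that tries out different attention modules. This time I try CBAM (Convolutional Block Attention Module), a basic, lightweight module made of two submodules, CAM (Channel Attention Module) and SAM (Spatial Attention Module), which apply attention along the channel and spatial dimensions respectively. This design keeps the extra parameters and computation small, and it lets CBAM be integrated into an existing network architecture as a plug-and-play module. YOLOv8 already ships with a CBAM implementation.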
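
For reference, the two attention steps can be written as in the original CBAM paper (Woo et al., 2018). Here F is the input feature map, σ is the sigmoid, ⊗ is element-wise multiplication with broadcasting, and f^{7×7} is a convolution with a 7×7 kernel. This is the paper's formulation; the CBAM class bundled with YOLOv8 may simplify parts of it, so read the formulas as a description of the idea rather than of that code.

```latex
\begin{aligned}
M_c(F)  &= \sigma\big(\mathrm{MLP}(\mathrm{AvgPool}(F)) + \mathrm{MLP}(\mathrm{MaxPool}(F))\big) \\
F'      &= M_c(F) \otimes F \\
M_s(F') &= \sigma\big(f^{7\times 7}([\mathrm{AvgPool}(F');\ \mathrm{MaxPool}(F')])\big) \\
F''     &= M_s(F') \otimes F'
\end{aligned}
```

The channel map M_c has one weight per channel and the spatial map M_s has one weight per pixel, which is why the module adds so few parameters.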

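Note: The common advice is that modifying the code requires installing from source, so I start over here with a source install.

1. Install YOLOv8 from source (Ubuntu terminal commands)

# Create a virtual environment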

conda create -n yolov8_cbam python=3.10

conda activate yolov8_cbam

# Install PyTorch

pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118

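# Download the YOLOv8 v8.1.0 source package from GitHub (it is installed below)

# After extracting it, change to the source folder

cd /home/bsuo/code/YOLOv8/ultralytics_CBAM/ ## the folder was renamed

pip install -e '.[dev]' ## editable (source) install

2. Add code: import CBAM in /ultralytics/nn/tasks.py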

from ultralytics.nn.modules import (

AIFI,

C1,

C2,

C3,

C3TR,

OBB,

SPP,

SPPF,

Bottleneck,

BottleneckCSP,

C2f,

C2fAttn,

ImagePoolingAttn,

C3Ghost,

C3x,

Classify,

Concat,

Conv,

Conv2,

ConvTranspose,

Detect,

DWConv,

DWConvTranspose2d,

Focus,

GhostBottleneck,

GhostConv,

HGBlock,

HGStem,

Pose,

RepC3,

RepConv,

ResNetLayer,

RTDETRDecoder,

Segment,

CBAM, ## newly added

WorldDetect,

)

3. Add code: register CBAM in the model parser in /ultralytics/nn/tasks.py (around line 896)

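elif m is RTDETRDecoder: # special case, channels arg must be passed in index 1

    args.insert(1, [ch[x] for x in f])

### added code starts here

elif m in {CBAM}:

    # c1 is the input channel count; args[0] is the channel value written in the YAML

    c1, c2 = ch[f], args[0]

    if c2 != nc:

        c2 = make_divisible(min(c2, max_channels) * width, 8)

    # CBAM takes its input channel count as first argument, followed by the kernel size

    args = [c1, *args[1:]]

### added code ends here

else:

    c2 = ch[f]

4. Replace the model file: /home/bsuo/code/YOLOv8/ultralytics_CBAM/ultralytics/cfg/models/v8/yolov8.yaml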

# YOLOv8.0n backbone

backbone:

# [from, repeats, module, args]

- [-1, 1, Conv, [64, 3, 2]] # 0-P1/2

- [-1, 1, Conv, [128, 3, 2]] # 1-P2/4

- [-1, 3, C2f, [128, True]]

- [-1, 1, Conv, [256, 3, 2]] # 3-P3/8

- [-1, 6, C2f, [256, True]]

- [-1, 1, CBAM, [256, 7]] ## 5: attention layer added here

- [-1, 1, Conv, [512, 3, 2]] # 6-P4/16

- [-1, 6, C2f, [512, True]]

- [-1, 1, Conv, [1024, 3, 2]] # 8-P5/32

- [-1, 3, C2f, [1024, True]]

- [-1, 1, SPPF, [1024, 5]] # 10

# YOLOv8.0n head

head:

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 7], 1, Concat, [1]] # cat backbone P4

- [-1, 3, C2f, [512]] # 13

- [-1, 1, nn.Upsample, [None, 2, "nearest"]]

- [[-1, 5], 1, Concat, [1]] # cat backbone P3

- [-1, 3, C2f, [256]] # 16 (P3/8-small)

- [-1, 1, Conv, [256, 3, 2]]

- [[-1, 13], 1, Concat, [1]] # cat head P4

- [-1, 3, C2f, [512]] # 19 (P4/16-medium)

- [-1, 1, Conv, [512, 3, 2]]

- [[-1, 10], 1, Concat, [1]] # cat head P5

- [-1, 3, C2f, [1024]] # 22 (P5/32-large)

- [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)
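
Inserting CBAM at index 5 shifts every later layer by one, so the `from` indices in the head are worth double-checking, as is the channel count CBAM receives after scaling. The sketch below is a small standalone check that does not import ultralytics. `LAYERS`, `resolve_sources` and `scaled_channels` are illustrative names of my own; `make_divisible` mirrors the rounding helper called in the tasks.py snippet, and the scale table is what I believe the stock yolov8.yaml lists, so verify it against your copy.

```python
import math

# (from, module) pairs of the modified yolov8.yaml, in file order.
LAYERS = [
    (-1, "Conv"), (-1, "Conv"), (-1, "C2f"), (-1, "Conv"), (-1, "C2f"),  # 0..4
    (-1, "CBAM"),                                                        # 5 (inserted)
    (-1, "Conv"), (-1, "C2f"), (-1, "Conv"), (-1, "C2f"), (-1, "SPPF"),  # 6..10
    (-1, "nn.Upsample"), ([-1, 7], "Concat"), (-1, "C2f"),               # 11..13
    (-1, "nn.Upsample"), ([-1, 5], "Concat"), (-1, "C2f"),               # 14..16
    (-1, "Conv"), ([-1, 13], "Concat"), (-1, "C2f"),                     # 17..19
    (-1, "Conv"), ([-1, 10], "Concat"), (-1, "C2f"),                     # 20..22
    ([16, 19, 22], "Detect"),                                            # 23
]


def resolve_sources(layers):
    """Map each multi-input layer index to the (index, module) pairs it reads."""
    resolved = {}
    for i, (frm, _name) in enumerate(layers):
        if isinstance(frm, int):
            continue  # single-input layers simply read the previous layer
        # Negative values are relative to the current layer, others are absolute.
        absolute = [i + f if f < 0 else f for f in frm]
        resolved[i] = [(j, layers[j][1]) for j in absolute]
    return resolved


def make_divisible(x, divisor):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor


# (depth, width, max_channels) per scale; assumed to match the stock yolov8.yaml.
SCALES = {"n": (0.33, 0.25, 1024), "x": (1.00, 1.25, 512)}


def scaled_channels(c2, scale):
    """Apply the same channel scaling as the CBAM branch added to tasks.py."""
    _depth, width, max_channels = SCALES[scale]
    return make_divisible(min(c2, max_channels) * width, 8)


sources = resolve_sources(LAYERS)
for idx, srcs in sources.items():
    print(idx, LAYERS[idx][1], "<-", srcs)

# The C2f before CBAM and CBAM itself are both declared with 256 channels,
# so they scale to the same value and CBAM's input and output widths agree.
print("CBAM channels at scale x:", scaled_channels(256, "x"))
```

If the P3 Concat (layer 15) resolves to the CBAM layer and Detect resolves to three C2f layers, the head is wired to the intended feature maps.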

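5. Modify the dataset config and model files:

Locate the dataset config file: /home/bsuo/code/YOLOv8/ultralytics_CBAM/ultralytics/cfg/datasets/coco128.yaml

Copy it to: /media/bsuo/Seagate/CT_image/LUNA_YOLOv8/training/subset0/

Rename the copy to config.yaml (this is the data config)

Edit its contents: mainly the paths to train.txt, val.txt and test.txt, and the class names to detect

Repeat these steps so that every subset subfolder has its own config.yaml (with the paths adjusted accordingly)

Locate the model file: /home/bsuo/code/YOLOv8/ultralytics_CBAM/ultralytics/cfg/models/v8/yolov8.yaml (this is the model config)

Then set the nc parameter (nc: number of classes) in yolov8.yaml to 1, because there is only one class (nodule)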
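
Copying and editing config.yaml by hand for every subset is easy to get wrong, so the per-subset files could also be generated. The sketch below is one way to do it, under assumptions: the helper names `render_config` and `write_configs` are hypothetical, the subset names are passed in by the caller, and train.txt, val.txt and test.txt are assumed to sit directly inside each subset folder. The demo writes into a temporary directory rather than the real dataset path.

```python
import tempfile
from pathlib import Path


def render_config(subset_dir: Path) -> str:
    """Return the text of a dataset config for one subset folder."""
    return "\n".join([
        f"path: {subset_dir}",   # dataset root for this subset
        "train: train.txt",      # image lists, relative to path (assumed layout)
        "val: val.txt",
        "test: test.txt",
        "names:",
        "  0: nodule",           # single class, matching nc: 1 in the model file
        "",
    ])


def write_configs(training_root: Path, subsets: list[str]) -> list[Path]:
    """Write one config.yaml per subset folder and return the written paths."""
    written = []
    for name in subsets:
        subset_dir = training_root / name
        subset_dir.mkdir(parents=True, exist_ok=True)
        cfg = subset_dir / "config.yaml"
        cfg.write_text(render_config(subset_dir), encoding="utf-8")
        written.append(cfg)
    return written


# Demo in a temporary directory with two example subset names.
with tempfile.TemporaryDirectory() as tmp:
    paths = write_configs(Path(tmp), ["subset0", "subset1"])
    print(paths[0].read_text(encoding="utf-8"))
```

For the real data, `training_root` would be the training folder used above and `subsets` the list of subset folders that actually exist on disk.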

6. Train on the dataset (continuing from the previously preprocessed images): train_subset.py

import os

import subprocess

# Dataset config and output directory for subset0

config_path = "/media/bsuo/Seagate/CT_image/LUNA_YOLOv8/training/subset0/config.yaml"

output_path = "/media/bsuo/Seagate/CT_image/LUNA_YOLOv8/results_CBAM/subset0"

os.makedirs(output_path, exist_ok=True)

# Training command and its parameters

command = f"yolo task=detect mode=train model=yolov8x.yaml pretrained=true data={config_path} epochs=150 batch=6 workers=12 device=0"

# Run training from the output directory; check=True raises an error if the command fails

subprocess.run(command, shell=True, cwd=output_path, check=True)

print("Training finished")

Training parameters: yolo task=detect mode=train model=yolov8x.yaml pretrained=true data=config.yaml epochs=150 batch=6 workers=12 device=0
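
The script above trains a single subset. If the other subsets are trained later, the same command could be built per subset instead of editing the paths by hand. The sketch below only plans and prints the runs; `build_train_command` and `plan_runs` are hypothetical helpers, the two root paths are taken from the script above, and the subset names other than subset0 are placeholders.

```python
import shlex

TRAINING_ROOT = "/media/bsuo/Seagate/CT_image/LUNA_YOLOv8/training"
RESULTS_ROOT = "/media/bsuo/Seagate/CT_image/LUNA_YOLOv8/results_CBAM"


def build_train_command(config_path, model="yolov8x.yaml", epochs=150,
                        batch=6, workers=12, device=0):
    """Build the yolo CLI call as an argument list, so no shell quoting is needed."""
    return [
        "yolo", "task=detect", "mode=train", f"model={model}", "pretrained=true",
        f"data={config_path}", f"epochs={epochs}", f"batch={batch}",
        f"workers={workers}", f"device={device}",
    ]


def plan_runs(subsets):
    """Return (command, working_directory) pairs, one per subset name."""
    runs = []
    for name in subsets:
        config_path = f"{TRAINING_ROOT}/{name}/config.yaml"
        output_path = f"{RESULTS_ROOT}/{name}"
        runs.append((build_train_command(config_path), output_path))
    return runs


# Dry run: print what would be executed, without starting any training.
for command, cwd in plan_runs(["subset0", "subset1"]):
    print(f"(cd {cwd} && {shlex.join(command)})")
    # To actually train: os.makedirs(cwd, exist_ok=True), then
    # subprocess.run(command, cwd=cwd, check=True)
```

Passing the command as a list avoids `shell=True`, and keeping the dry run separate makes it easy to inspect the paths before committing GPU time.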

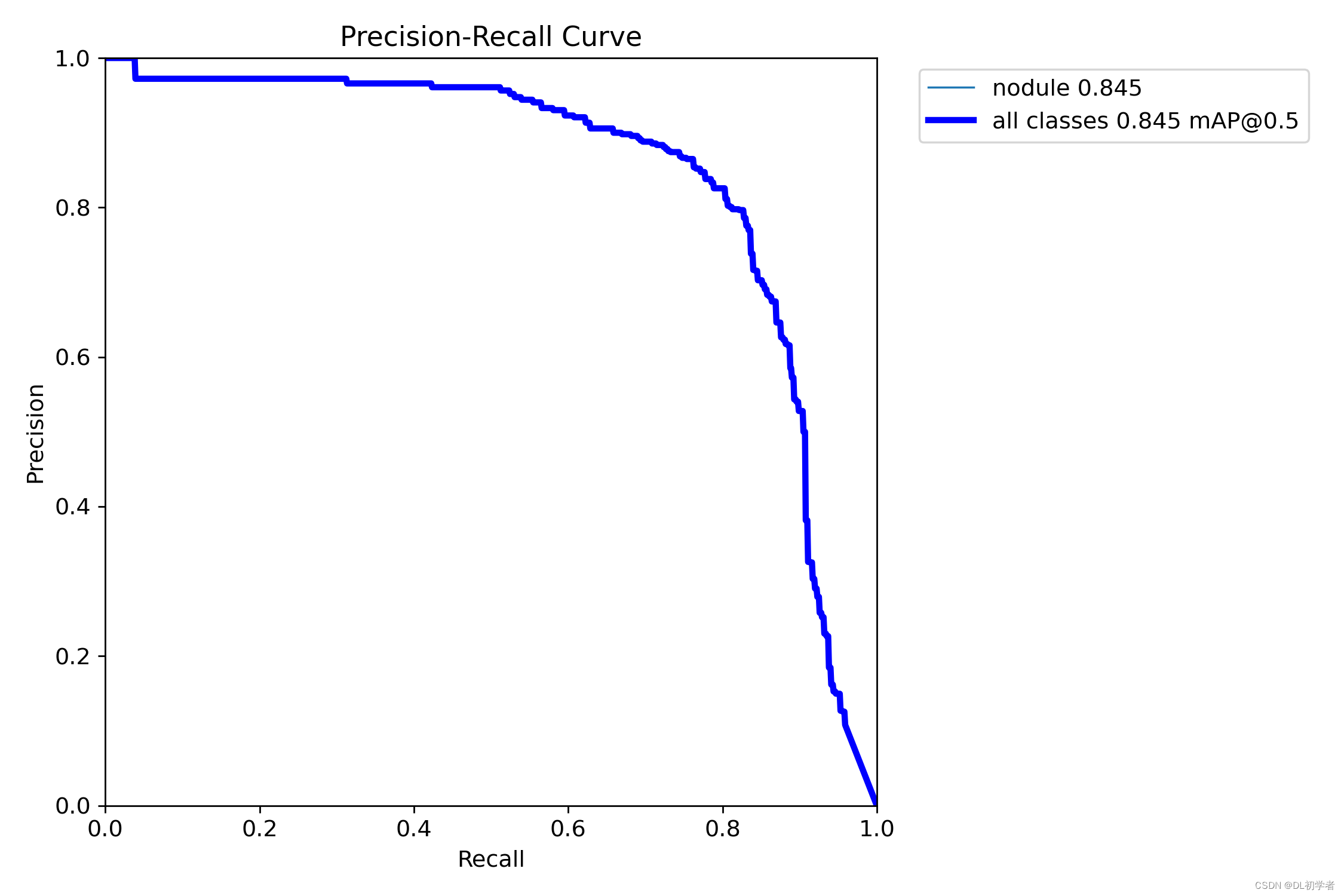

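7. Training results: only subset0 has been tried so far

Without an attention mechanism: mAP = 83.4%

With CBAM: mAP = 84.5% (1.1 percentage points higher, a small but encouraging gain)

Next, I will keep improving the code in the hope of getting a better training result.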
