Ran it for a whole day, and the values that come out have been odd the whole time.

| Dataset | Epoch | acc | nmi | ari |
|---|---|---|---|---|
| bbc_dwf | 10 | 21.9775 | 0.1616 | -0.0322 |
| bbc_pw | 10 | 22.9213 | 0.2278 | 0.0229 |
| cite_pw | 10 | 19.8978 | 0.1718 | 0.0040 |
| acm_pw | 10 | 22.2810 | 0.1085 | 0.0112 |
| abstract_pw | 10 | 22.4570 | 0.1977 | 0.1057 |

Log:

- Normal configuration

500, 500, 1000, 1000, 500, 500

n_z = 10,

n_latent = 5

lr = 0.005

n_clusters=5, n_init=20

batch_size=256,shuffle=False

Epoch 117: Train Loss: 0.9996

acc:22.5169, nmi:0.3532, ari:0.1177
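The acc values in this log are presumably clustering accuracy: the best agreement between predicted cluster ids and ground-truth labels over all one-to-one relabelings. Below is a minimal sketch under that assumption. `cluster_accuracy` is a hypothetical helper, not the code used for these runs. It brute-forces every relabeling, which is cheap for n_clusters=5 (120 permutations); a Hungarian solver is the usual choice for larger cluster counts.

```python
from itertools import permutations


def cluster_accuracy(y_true, y_pred, n_clusters):
    """Best-match accuracy (in percent) between cluster ids and labels.

    Hypothetical helper: assumes labels and cluster ids are both
    integers in range(n_clusters).
    """
    # counts[c][t] = number of samples in cluster c whose true label is t
    counts = [[0] * n_clusters for _ in range(n_clusters)]
    for t, c in zip(y_true, y_pred):
        counts[c][t] += 1

    # Try every one-to-one mapping cluster -> label and keep the best one.
    best = 0
    for perm in permutations(range(n_clusters)):
        matched = sum(counts[c][perm[c]] for c in range(n_clusters))
        best = max(best, matched)
    return 100.0 * best / len(y_true)


if __name__ == "__main__":
    y_true = [0, 0, 1, 1, 2, 2]
    y_pred = [2, 2, 0, 0, 1, 0]  # same grouping under other ids, one error
    print(cluster_accuracy(y_true, y_pred, n_clusters=3))
```

Under this definition the accuracy cannot fall below 100 / n_clusters, because the average over all relabelings is exactly that value. With n_clusters=5 the floor is 20, so an acc around 22 is barely above the minimum.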

- Clustering on z_mean

Epoch 12: Train Loss: 0.9996

acc:25.2135, nmi:8.4652, ari:0.0945

- Modified network structure: 500, 250, 100, 100, 250, 500, 5000

lr = 0.005

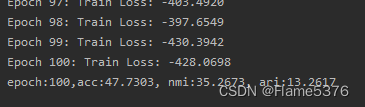

Epoch 100: Train Loss: 0.9997

acc:47.1011, nmi:28.1540, ari:18.9423

- lr=0.002

The loss goes up?

Epoch 100: Train Loss: 0.9996

epoch:0,acc:23.9551, nmi:1.3544, ari:0.5867

epoch:0,acc:26.6517, nmi:2.2213, ari:0.9771

epoch:0,acc:51.7303, nmi:33.4441, ari:23.8787

epoch:0,acc:33.1236, nmi:8.7497, ari:6.0201

shuffle=True, 50 epochs

epoch:0,acc:26.3820, nmi:1.8062, ari:1.0806

epoch:0,acc:24.9888, nmi:1.3512, ari:0.8620

epoch:0,acc:26.3371, nmi:2.8599, ari:1.1493

- lr=0.01

epoch:0,acc:38.8315, nmi:35.1708, ari:22.3065

epoch:0,acc:25.8876, nmi:11.3914, ari:0.6150

epoch:0,acc:29.9775, nmi:3.9909, ari:3.7405

epoch:0,acc:45.4382, nmi:28.5032, ari:18.9452

epoch:0,acc:35.2809, nmi:17.6080, ari:10.3822

epoch:0,acc:32.9888, nmi:8.1380, ari:4.4337

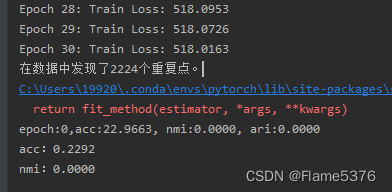

epoch:0,acc:22.9663, nmi:0.0000, ari:0.0000

C:\Users\19920.conda\envs\pytorch\lib\site-packages\sklearn\base.py:1151: ConvergenceWarning: Number of distinct clusters (1) found smaller than n_clusters (5). Possibly due to duplicate points in X.

return fit_method(estimator, *args, **kwargs)
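The warning says KMeans found only one distinct point, which would be consistent with the encoder mapping every document to the same latent vector (and with the nmi and ari of 0.0000 just above). Below is a quick check, as a sketch only. `count_distinct_rows` and `per_dim_variance` are hypothetical helpers, and `z` stands for whatever embedding is passed to KMeans, converted to a list of rows.

```python
def count_distinct_rows(z, decimals=6):
    """Count distinct rows of a 2-D list after rounding each entry."""
    return len({tuple(round(v, decimals) for v in row) for row in z})


def per_dim_variance(z):
    """Population variance of each column; all zeros means total collapse."""
    n = len(z)
    dims = len(z[0])
    means = [sum(row[d] for row in z) / n for d in range(dims)]
    return [sum((row[d] - means[d]) ** 2 for row in z) / n for d in range(dims)]


if __name__ == "__main__":
    collapsed = [[0.5, 0.5, 0.5]] * 4  # every sample has the same embedding
    healthy = [[0.1, 0.9, 0.3], [0.8, 0.2, 0.4], [0.5, 0.5, 0.5]]
    print(count_distinct_rows(collapsed), per_dim_variance(collapsed))
    print(count_distinct_rows(healthy), per_dim_variance(healthy))
```

If the number of distinct rows is smaller than n_clusters, KMeans cannot return the requested number of clusters no matter how n_init is set.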

epoch:0,acc:22.0674, nmi:0.7222, ari:-0.0831

epoch:0,acc:23.3258, nmi:1.1470, ari:-0.0626

- Loss: MSE -> CE

epoch:0,acc:34.1124, nmi:13.1627, ari:8.5426

epoch:0,acc:28.3596, nmi:6.3709, ari:3.9546
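For reference, the two reconstruction losses compared on a toy vector. This is a sketch that assumes "CE" means binary cross-entropy on inputs scaled to [0, 1]; the log does not say which variant was actually used, and `mse` and `bce` are illustrative helpers rather than the training code.

```python
import math


def mse(x, x_hat):
    """Mean squared error over the features of one sample."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def bce(x, x_hat, eps=1e-7):
    """Binary cross-entropy summed over features; expects values in [0, 1]."""
    total = 0.0
    for a, b in zip(x, x_hat):
        b = min(max(b, eps), 1.0 - eps)  # clamp so log() stays finite
        total -= a * math.log(b) + (1.0 - a) * math.log(1.0 - b)
    return total


if __name__ == "__main__":
    x = [1.0, 0.0, 0.0, 0.0]      # sparse target, e.g. a bag-of-words row
    flat = [0.5, 0.5, 0.5, 0.5]   # uninformative reconstruction
    good = [0.9, 0.1, 0.1, 0.1]   # reconstruction close to the target
    print(mse(x, flat), mse(x, good))
    print(bce(x, flat), bce(x, good))
```

Note that a mean-reduced loss and a sum-reduced loss live on different scales, so their raw values are not directly comparable across runs.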

500, 100, 250, 250, 100, 500, 5000 batch_size=256, shuffle=True

epoch:0,acc:25.9326, nmi:3.3499, ari:1.3552

- 500, 500, 1000, 500, 500, 1000

n_z = 10,

n_latent = 128

lr = 0.002

batch_size=256, shuffle=True

loss_CE

epoch:0,acc:23.9101, nmi:1.1215, ari:-0.0012

- bbc from rln

epoch:0,acc:23.6404, nmi:0.7112, ari:0.2215

- ???

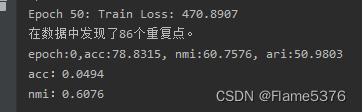

Success!

vae = VAE(128, 256, 256, 128, 9635, n_z=10, n_latent=3)

acc:59.0562, nmi:39.2102, ari:26.7944

acc:80.4944, nmi:62.3218, ari:58.1344

acc:81.0337, nmi:60.6005, ari:59.7550

acc:73.3933, nmi:51.2723, ari:44.0603

acc:83.3258, nmi:61.1844, ari:63.2995

acc:75.2360, nmi:56.2646, ari:53.6817

Epoch 300: Train Loss: 426.0915

vae = VAE(500, 1000, 1000, 500, 9635, n_z=10, n_latent=3)

Epoch 300: Train Loss: 416.8523

acc:63.2809, nmi:45.4132, ari:39.6262

acc:76.0000, nmi:54.7599, ari:50.3674

acc:60.4045, nmi:44.7804, ari:38.7348
