本文将阐述了视差图转换成深度图及点云生成的过程。首先,通过读取视差图像数据,将视差图转换为深度图。利用相机参数和摄像机基线,将视差值映射为对应的深度值并输出深度图。然后,利用深度图像和彩色图像中的像素信息,生成三维点云。对每个像素,从彩色图像中获取颜色信息,并利用深度值和相机内参,将像素位置转换为三维坐标。最后,将生成的点云数据保存到文件中。

原理部分:

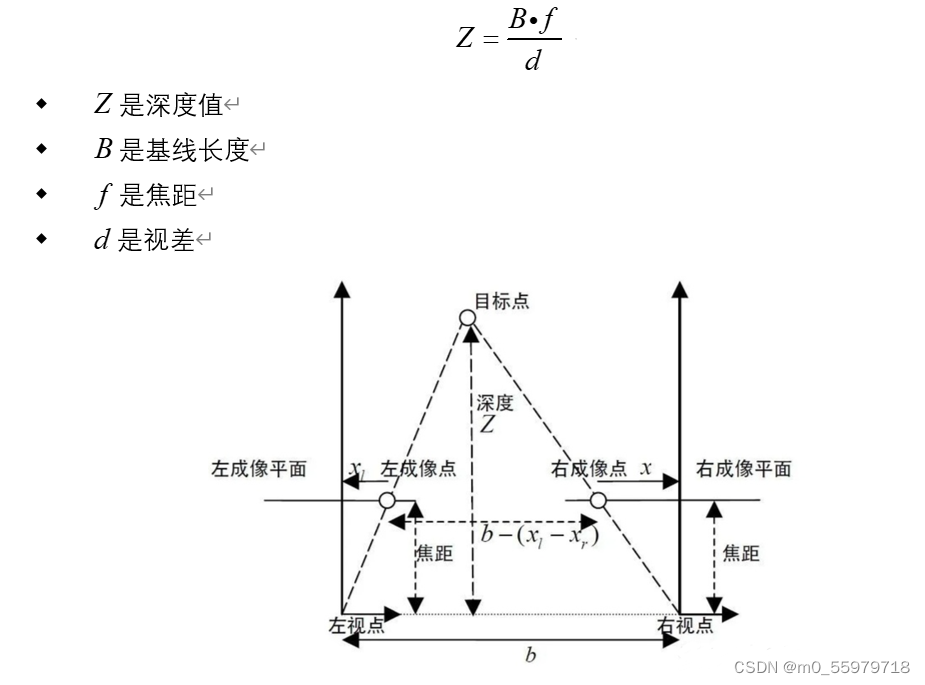

1.视差到深度的转换公式:

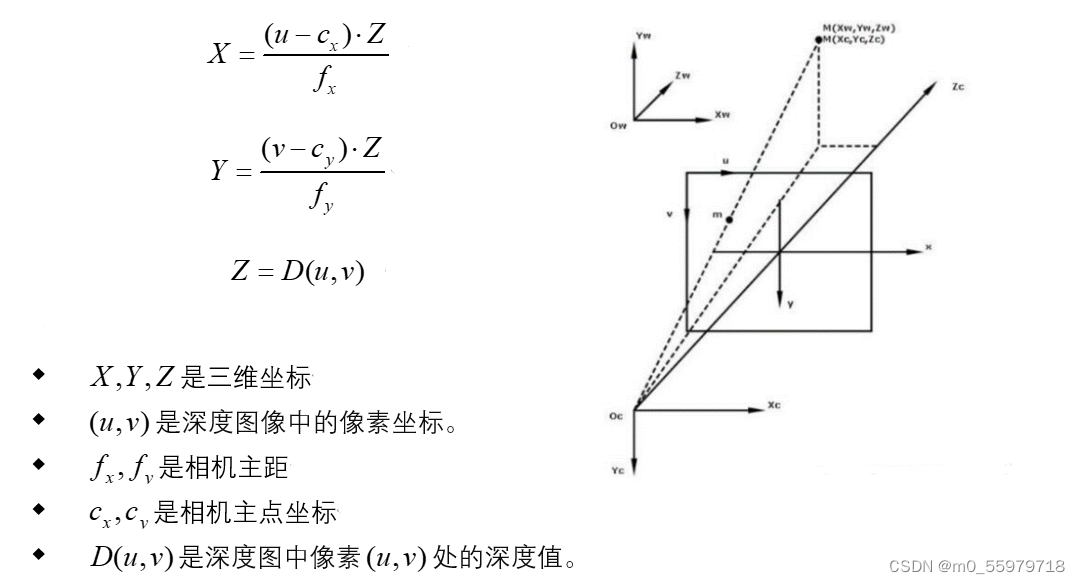

2.深度图中的像素转换为三维坐标:对于深度图中的每个像素,利用相机内参和深度值,可以将像素位置转换为相机坐标系下的三维坐标。这个过程可以使用以下公式进行

3.获取点云颜色信息:如果有彩色图像与深度图对应,可以根据深度图像中的像素坐标,从彩色图像中获取相应位置的像素颜色信息。

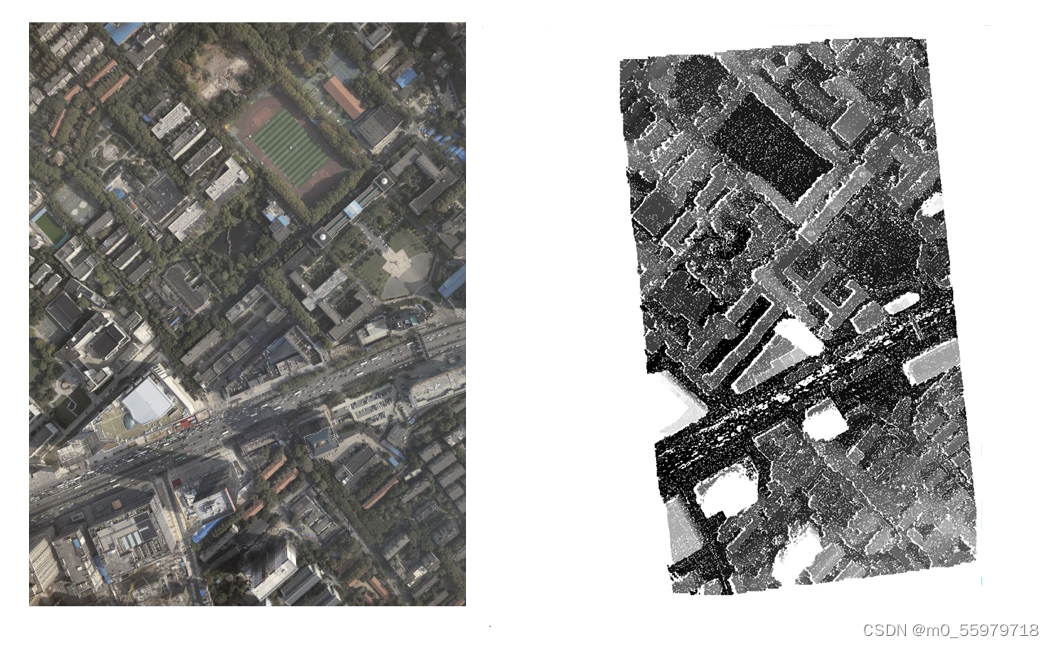

差图转深度图运行结果如下:

深度图转点云并赋色结果如下:

代码部分(Ubuntu系统下编译):

#include <iostream>

#include <fstream>

#include <opencv2/opencv.hpp>

#include "gdal/gdal_priv.h"

#pragma comment(lib, "gdal_i.lib")

#include <filesystem>

#include<tuple>

#include <gdal.h>

#include <gdal_priv.h>

#include <cpl_conv.h>

#include <boost/filesystem.hpp>

#include "gdal_priv.h"

#include "cpl_conv.h"

#include <pcl/point_cloud.h>

#include <pcl/point_types.h>

#include <pcl/io/ply_io.h>

#include <pcl/io/pcd_io.h>

using namespace std;

using namespace cv;

// Function to read data from a txt file and store it in a matrix

bool readDataFromFile(const string& filename, Mat& dataMat) {

ifstream file(filename);

if (!file.is_open()) {

cout << "Error: Could not open the file " << filename << endl;

return false;

}

dataMat.create(3, 4, CV_32FC1);

for (int i = 0; i < dataMat.rows; ++i) {

for (int j = 0; j < dataMat.cols; ++j) {

float value;

if (!(file >> value)) {

cout << "Error: Could not read data properly from the file " << filename << endl;

return false;

}

dataMat.at<float>(i, j) = value;

}

}

file.close();

return true;

}

// Function to convert disparity map to depth map

Mat disparityToDepth(cv::Mat disImg, float baselineLength, float focalLength) {

int width = disImg.cols;

int height = disImg.rows;

std::cout << "distance:" << width << height << std::endl;

Mat depthImage = Mat::zeros(height, width, CV_32FC1);

std::cout << "断点" << " 6 " << std::endl;

for (int row = 0; row < height; ++row)

{

// std::cout << "断点" << " 7 " << std::endl;

for (int col = 0; col < width; ++col)

{

float distance = 0;

distance = disImg.at<float>(row, col);

// std::cout << "distance:" << distance << std::endl;

if (distance != 0.0)

{

depthImage.at<float>(row, col) = (baselineLength * focalLength ) / distance;

}

else

{

continue;

}

}

}

return depthImage; // 返回深度图

}

std::vector<Mat> readEplimg(const std::string& folderPath)

{

GDALAllRegister();

CPLSetConfigOption("GDAL_FILENAME_IS_UTF8", "NO"); //设置支持中文路径和文件名

std::vector<Mat> Eplimg;

if (!boost::filesystem::exists(folderPath) || !boost::filesystem::is_directory(folderPath)) {

std::cerr << "Error: " << folderPath << " is not a valid directory." << std::endl;

return Eplimg;

}

// 遍历文件夹中的每个文件

boost::filesystem::directory_iterator end_itr;

for (boost::filesystem::directory_iterator itr(folderPath); itr != end_itr; ++itr) {

if (boost::filesystem::is_regular_file(itr->status()) &&

(itr->path().extension() == ".tiff" || itr->path().extension() == ".tif")) {

std::string filePath = itr->path().string();

// 打开tiff文件

GDALDataset* poDataset = (GDALDataset*)GDALOpenShared(filePath.c_str(), GA_ReadOnly);

if (poDataset == nullptr) {

std::cerr << "Error opening image " << filePath << std::endl;

continue;

}

// 获取图像宽度和高度

int width = poDataset->GetRasterXSize();

int height = poDataset->GetRasterYSize();

// 获取波段数量

int numBands = poDataset->GetRasterCount();

// 读取图像数据

cv::Mat image(height, width, CV_16U);

//poDataset->RasterIO(GF_Read, 0, 0, width, height, image.data, width, height, GDT_UInt16, 0, 0);

GDALRasterBand* poBand = poDataset->GetRasterBand(1);

poBand->RasterIO(GF_Read, 0, 0, width, height, image.data, width, height, GDT_UInt16, 0, 0);

// 关闭文件

GDALClose(poDataset);

// 将图像添加到向量中

Eplimg.push_back(image);

}

}

return Eplimg;

}

cv::Mat readDisparityImage(const std::string& filePath) {

GDALAllRegister();

// 打开 TIFF 文件

GDALDataset *disparityDataset = (GDALDataset *)GDALOpen(filePath.c_str(), GA_ReadOnly);

if (disparityDataset == NULL) {

std::cerr << "无法打开图像文件!" << std::endl;

return cv::Mat();

}

// 获取图像宽度和高度

int width = disparityDataset->GetRasterXSize();

int height = disparityDataset->GetRasterYSize();

std::cout << "图像尺寸:" << width << " x " << height << std::endl;

// 获取第一个波段

// GDALRasterBand *poBand = disparityDataset->GetRasterBand(1);

// 获取波段数量

int numBands = disparityDataset->GetRasterCount();

// 读取图像数据

cv::Mat image(height, width, CV_32FC1);

GDALRasterBand* poBand = disparityDataset->GetRasterBand(1);

GDALDataType g_type = poBand->GetRasterDataType();

// std::cout << "断点" << width << " 1 " << height << std::endl;

// float *data = new float [width * height];

// std::cout << "断点" << width << " 2 " << height << std::endl;

// poBand->RasterIO(GF_Read, 0, 0, width, height, data, width, height, GDT_Float64, 0, 0);

std::cout << "断点" << width << " 3 " << height << std::endl;

// 将数据转换为 OpenCV 的 cv::Mat 格式

// cv::Mat img(height, width, CV_64F, data);

disparityDataset->GetRasterBand(1)->RasterIO(GF_Read, 0, 0, width, height, image.data, width, height, g_type, 0, 0);

poBand->RasterIO(GF_Read, 0, 0, width, height, image.data, width, height, g_type, 0,0);

std::cout << "断点" << width << " 4 " << height << std::endl;

// 释放内存

// delete[] data;

GDALClose(disparityDataset);

return image;

}

std::vector<cv::Mat> readColorImages(const std::string& folderPath)

{

GDALAllRegister();

CPLSetConfigOption("GDAL_FILENAME_IS_UTF8", "NO"); //设置支持中文路径和文件名

std::vector<cv::Mat> colorImg;

if (!boost::filesystem::exists(folderPath) || !boost::filesystem::is_directory(folderPath)) {

std::cerr << "Error: " << folderPath << " is not a valid directory." << std::endl;

return colorImg;

}

boost::filesystem::directory_iterator end_itr;

for (boost::filesystem::directory_iterator itr(folderPath); itr != end_itr; ++itr) {

if (boost::filesystem::is_regular_file(itr->status()) &&

(itr->path().extension() == ".tiff" || itr->path().extension() == ".tif")) {

std::string filePath = itr->path().string();

GDALDataset* poDataset = (GDALDataset*)GDALOpenShared(filePath.c_str(), GA_ReadOnly);

if (poDataset == nullptr) {

std::cerr << "Error opening image " << filePath << std::endl;

continue;

}

int width = poDataset->GetRasterXSize();

int height = poDataset->GetRasterYSize();

int numBands = poDataset->GetRasterCount();

// 创建一个3通道的Mat矩阵

cv::Mat image(height, width, CV_8UC3);

// 逐波段读取数据并存储到Mat矩阵中

for (int b = 1; b <= std::min(numBands, 3); ++b) { // 限制最多读取前3个波段

GDALRasterBand* poBand = poDataset->GetRasterBand(b);

poBand->RasterIO(GF_Read, 0, 0, width, height, image.data + (b - 1), width, height, GDT_Byte, 3, width * 3);

}

// 关闭文件

GDALClose(poDataset);

// 将图像添加到向量中

cv::cvtColor(image, image, cv::COLOR_RGB2BGR); // 转换为BGR格式

colorImg.push_back(image);

}

}

return colorImg;

}

void saveDepthImageWithGDAL(const cv::Mat& depthImage, const std::string& outputPath) {

// 初始化 GDAL 库

GDALAllRegister();

// 获取深度图像的尺寸信息

int width = depthImage.cols;

int height = depthImage.rows;

// 创建输出文件

GDALDriver* driver = GetGDALDriverManager()->GetDriverByName("GTiff");

if (driver == nullptr) {

std::cerr << "Error: Could not get GDAL driver." << std::endl;

return;

}

// 创建输出数据集

GDALDataset* dataset = driver->Create(outputPath.c_str(), width, height, 1, GDT_Float32, NULL);

if (dataset == nullptr) {

std::cerr << "Error: Could not create GDAL dataset." << std::endl;

return;

}

// 将深度图像数据写入到数据集中

float* data = (float*)CPLMalloc(sizeof(float) * width * height);

for (int i = 0; i < height; ++i) {

for (int j = 0; j < width; ++j) {

data[i * width + j] = depthImage.at<float>(i, j);

}

}

GDALRasterBand* band = dataset->GetRasterBand(1);

band->RasterIO(GF_Write, 0, 0, width, height, data, width, height, GDT_Float32, 0, 0);

// 释放内存和关闭数据集

CPLFree(data);

GDALClose(dataset);

}

void depthToCloudPoint(std::vector<Mat>colorImage,Mat depthImage ,float focalLength_x,float focalLength_y)

{

pcl::PointCloud<pcl::PointXYZRGB>::Ptr cloud(new pcl::PointCloud<pcl::PointXYZRGB>);

std::cout << "size:" << depthImage.rows << "x" << depthImage.cols << std::endl;

for (int y = 0; y < depthImage.rows; ++y) {

for (int x = 0; x < depthImage.cols; ++x) {

if (y >= 0 && y < depthImage.rows && x >= 0 && x < depthImage.cols) {

// 获取深度值

float depth = depthImage.at <float>(y, x);

// 如果深度值为0(无效值),跳过

if (depth == 0.0f)

continue;

// 计算点云位置

pcl::PointXYZRGB point;

point.z = depth;

point.x = (x - focalLength_x) * depth / focalLength_x;

point.y = (y - focalLength_y) * depth / focalLength_y;

std::cout << "size:" << "9" << std::endl;

//std::cout << "color:" << colorImage << std::endl;

// 获取相应位置的彩色像素值作为颜色

cv::Vec3b color = colorImage[0].at<cv::Vec3b>(y, x);

std::cout << "color:" << color << std::endl;

point.r = color[2];

point.g = color[1];

point.b = color[0];

std::cout << "color:" << color << std::endl;

// 将点添加到点云中

cloud->push_back(point);

}

}

}

pcl::io::savePLYFile("pointcloud.ply", *cloud);

pcl::io::savePCDFileBinary("point_cloud.pcd", *cloud);

pcl::io::savePLYFileBinary("point_cloud.ply", *cloud);

std::cout << "Point cloud saved to point_cloud.ply" << std::endl;

}

int main() {

std::vector<Mat> Eplimg = readEplimg("/home/tl/3rdparty/homeworks/build/EpiImg");

std::vector<Mat> colorImage = readColorImages("/home/tl/3rdparty/homeworks/build/EpiImg");

cv::Mat disImg = readDisparityImage("/home/tl/3rdparty/homeworks/build/017ER030_017ER031_DISP_ET0.tif");

// 检查是否成功读取

if (disImg.empty()) {

std::cerr << "图像读取失败!" << std::endl;

}

Mat dataMatleft, dataMatright;

if (!readDataFromFile("017ER030_REC.txt", dataMatleft) || !readDataFromFile("017ER031_REC.txt", dataMatright)) {

return -1;

}

Mat K1, R1, t1, K2, R2, t2;

decomposeProjectionMatrix(dataMatleft, K1, R1, t1);

decomposeProjectionMatrix(dataMatright, K2, R2, t2);

Mat B = t1 - t2;

float baseline_length = norm(B);

float focalLength_x = K1.at<float>(0, 0); // 从相机内参矩阵K1中获取水平方向的焦距

cout << "基线长度: " << baseline_length << endl;

cout << "焦距: " << focalLength_x << endl;

std::cout << "断点" << " 5 " << std::endl;

// 或者

float focalLength_y = K1.at<float>(1, 1); // 从相机内参矩阵K1中获取垂直方向的焦距

Mat depthImage = disparityToDepth(disImg, baseline_length, focalLength_x); // 调用 disparityToDepth 函数获取深度图

// 设置输出路径

std::string outputPath = "output_depth_image.tif";

// 将深度图像保存为图片文件

saveDepthImageWithGDAL(depthImage, outputPath);

// 调用函数进行深度图像到点云的转换

depthToCloudPoint(colorImage, depthImage, focalLength_x, focalLength_y);

cout << "基线长度: " << baseline_length << endl;

cout << "相机内参矩阵 K:" << endl << K1 << endl;

cout << "旋转矩阵 R:" << endl << R1 << endl;

cout << "平移向量 t:" << endl << t1 << endl;

cout << "Data matrix 1:" << endl << dataMatleft << endl;

cout << "Data matrix 2:" << endl << dataMatright << endl;

return 0;

}

1万+

1万+

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?