Introduction to MJPG-Streamer

MJPG-Streamer is a JPEG streaming program for Linux. It reads camera images through V4L2 and sends them over HTTP to the client's browser.

Advantages of MJPG

Many cameras support JPEG or MJPG output natively, so the processor has little work to do.

Even a low-performance processor can transmit an MJPG video stream.

Disadvantages of MJPG

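MJPG is simply a sequence of JPEG images. It ignores what changes between consecutive frames and always transmits every frame in full, so it needs a lot of bandwidth.

H.264 and similar video formats take the differences between consecutive frames into account and transmit only the data that changed, so they need less bandwidth.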

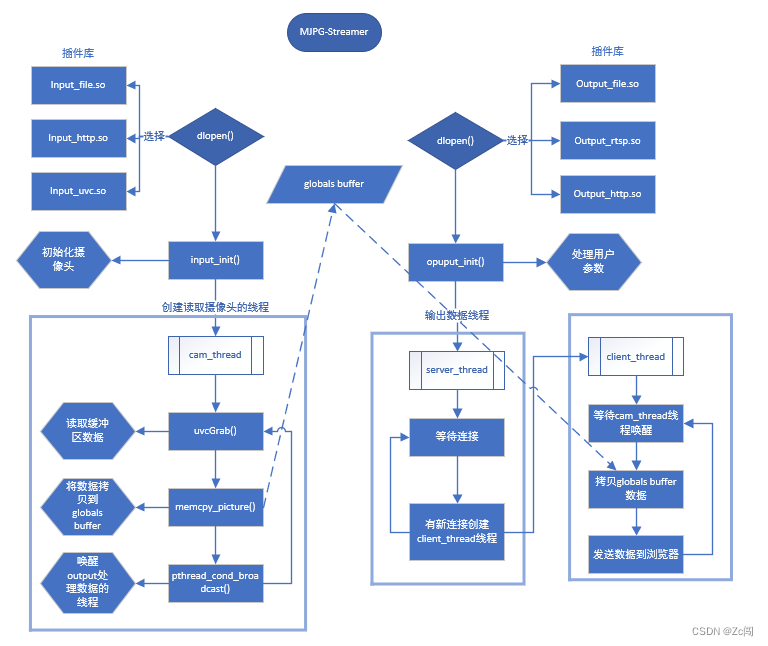

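Overall architecture of MJPG-Streamer

MJPG-Streamer extends its functionality through plugins: input plugins obtain frames and output plugins deliver them. Any plugin that is not needed is simply not loaded.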

Detailed code analysis

Important data structures

Global data, which stores the abstracted input and output objects:

```c
struct _globals {
    int stop;

    /* input plugin */
    input in[MAX_INPUT_PLUGINS];
    int incnt;

    /* output plugin */
    output out[MAX_OUTPUT_PLUGINS];
    int outcnt;

    /* pointer to control functions */
    //int (*control)(int command, char *details);
};
```

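The input struct abstracts the data-reading side into a single object.

buf holds the most recent frame, context points to a plugin-specific private struct, and init, stop, run and cmd are the operation functions. The db mutex and db_update condition variable signal that a fresh frame is available.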

```c
typedef struct _input input;

struct _input {
    char *plugin;
    char *name;
    void *handle;

    input_parameter param; // this holds the command line arguments

    // input plugin parameters
    struct _control *in_parameters;
    int parametercount;

    struct v4l2_jpegcompression jpegcomp;

    /* signal fresh frames */
    pthread_mutex_t db;
    pthread_cond_t db_update;

    /* global JPG frame, this is more or less the "database" */
    unsigned char *buf;
    int size;

    /* v4l2_buffer timestamp */
    struct timeval timestamp;

    input_format *in_formats;
    int formatCount;
    int currentFormat; // holds the current format number

    void *context; // private data for the plugin

    int (*init)(input_parameter *, int id);
    int (*stop)(int);
    int (*run)(int);
    int (*cmd)(int plugin, unsigned int control_id, unsigned int group, int value, char *value_str);
};
```

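The output struct abstracts the data-sending side in the same way.

It has no frame buffer of its own, because output plugins read frames from the input's buf. plugin, name and handle describe the loaded shared library, and init, stop, run and cmd are the operation functions.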

```c
typedef struct _output output;

struct _output {
    char *plugin;
    char *name;
    void *handle;

    output_parameter param;

    // output plugin parameters
    struct _control *out_parameters;
    int parametercount;

    int (*init)(output_parameter *param, int id);
    int (*stop)(int);
    int (*run)(int);
    int (*cmd)(int plugin, unsigned int control_id, unsigned int group, int value, char *value_str);
};
```
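To make the function-pointer abstraction concrete, here is a minimal sketch of the idea in isolation. The plugin_ops type, the dummy plugin and start_plugin are hypothetical names for illustration only; they keep just the init/run/stop pointers of struct _input and replace input_parameter with a plain string.

```c
#include <stdio.h>

/* Hypothetical, trimmed-down plugin interface: only the operation pointers,
 * shaped like init/run/stop in struct _input (param reduced to a string). */
typedef struct {
    const char *name;
    int (*init)(const char *args, int id);
    int (*run)(int id);
    int (*stop)(int id);
} plugin_ops;

/* A dummy "plugin" that only counts how often it is called. */
static int dummy_calls = 0;

static int dummy_init(const char *args, int id) { (void)args; (void)id; dummy_calls++; return 0; }
static int dummy_run(int id)  { (void)id; dummy_calls++; return 0; }
static int dummy_stop(int id) { (void)id; dummy_calls++; return 0; }

static const plugin_ops dummy_plugin = { "dummy", dummy_init, dummy_run, dummy_stop };

/* The core only calls through the pointers, so it needs no knowledge of
 * what a concrete plugin does. Returns 0 on success, -1 if init fails. */
static int start_plugin(const plugin_ops *p, const char *args, int id)
{
    if (p->init(args, id) != 0) {
        fprintf(stderr, "%s: init failed\n", p->name);
        return -1;
    }
    return p->run(id);
}
```

In MJPG-Streamer itself the pointers are not filled by a static initializer but looked up at run time with dlsym, as the plugin-loading code shows.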

Plugin loading

Example command line that loads one input plugin and two output plugins:

```shell
./mjpg_streamer -i "./input_uvc.so -y" -o "./output_http.so -w ./www" -o "./output_file.so -f /www/pice -d 15000"
```

How the code processes it

All supported options:

```c
static struct option long_options[] = {
    {"help",       no_argument,       NULL, 'h'},
    {"input",      required_argument, NULL, 'i'},
    {"output",     required_argument, NULL, 'o'},
    {"version",    no_argument,       NULL, 'v'},
    {"background", no_argument,       NULL, 'b'},
    {NULL, 0, NULL, 0}
};
```

getopt_long returns the option character, which tells the program whether it is looking at -i, -o, or another option:

```c
c = getopt_long(argc, argv, "hi:o:vb", long_options, NULL);
```

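Each option is handled differently. optarg holds the option's argument; in the example above, the argument of -i is "./input_uvc.so -y". Every -i and -o string is saved with strdup for later processing:

```c
switch(c) {
case 'i':
    input[global.incnt++] = strdup(optarg);
    break;

case 'o':
    output[global.outcnt++] = strdup(optarg);
    break;

case 'v':
    printf("MJPG Streamer Version: %s\n",
#ifdef GIT_HASH
           GIT_HASH
#else
           SOURCE_VERSION
#endif
          );
    return 0;

/* ... */
}
```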
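The excerpt increments global.incnt and global.outcnt without a visible upper bound, so too many -i or -o options would write past the end of the arrays. A minimal sketch of the same collection loop with a limit check, assuming a hypothetical MAX_PLUGINS of 10 and a hypothetical plugin_args struct in place of the real globals:

```c
#define _GNU_SOURCE
#include <getopt.h>
#include <string.h>

#define MAX_PLUGINS 10   /* hypothetical stand-in for MAX_INPUT_PLUGINS / MAX_OUTPUT_PLUGINS */

struct plugin_args {     /* hypothetical container for the raw -i / -o strings */
    char *input[MAX_PLUGINS];
    int incnt;
    char *output[MAX_PLUGINS];
    int outcnt;
};

/* Collects every -i/--input and -o/--output argument, refusing more than
 * MAX_PLUGINS of each. Returns 0 on success, -1 on a usage error. */
static int collect_plugins(int argc, char *argv[], struct plugin_args *pa)
{
    static const struct option long_options[] = {
        {"input",  required_argument, NULL, 'i'},
        {"output", required_argument, NULL, 'o'},
        {NULL, 0, NULL, 0}
    };
    int c;

    memset(pa, 0, sizeof(*pa));
    optind = 1;   /* start scanning at argv[1] */
    while ((c = getopt_long(argc, argv, "i:o:", long_options, NULL)) != -1) {
        switch (c) {
        case 'i':
            if (pa->incnt >= MAX_PLUGINS)
                return -1;                   /* too many input plugins */
            pa->input[pa->incnt++] = strdup(optarg);
            break;
        case 'o':
            if (pa->outcnt >= MAX_PLUGINS)
                return -1;                   /* too many output plugins */
            pa->output[pa->outcnt++] = strdup(optarg);
            break;
        default:
            return -1;                       /* unknown option */
        }
    }
    return 0;
}
```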

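The name of the plugin to load (a shared library) is the part of the -i string before the first space. The library is then loaded into memory with dlopen, which returns a handle:

```c
tmp = (size_t)(strchr(input[i], ' ') - input[i]);
/* ... */
global.in[i].plugin = (tmp > 0) ? strndup(input[i], tmp) : strdup(input[i]);
global.in[i].handle = dlopen(global.in[i].plugin, RTLD_LAZY);
```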
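The excerpt computes the name length by subtracting input[i] from the result of strchr. When the string contains no space, strchr returns NULL and that subtraction is undefined behaviour, even if it often appears to work. A small sketch that handles both cases explicitly; split_plugin_spec is a hypothetical helper, and skipping extra blanks before the arguments is an added assumption:

```c
#define _GNU_SOURCE
#include <stdlib.h>
#include <string.h>

/* Splits "./input_uvc.so -y" into the library path "./input_uvc.so" and the
 * argument string "-y". A spec without a space yields an empty argument string.
 * Both outputs are heap-allocated; returns 0 on success, -1 on allocation failure. */
static int split_plugin_spec(const char *spec, char **path, char **args)
{
    const char *space = strchr(spec, ' ');

    if (space == NULL) {                         /* no arguments given */
        *path = strdup(spec);
        *args = strdup("");
    } else {
        *path = strndup(spec, (size_t)(space - spec));
        *args = strdup(space + strspn(space, " "));  /* skip the separating blanks */
    }
    if (*path == NULL || *args == NULL) {
        free(*path);
        free(*args);
        return -1;
    }
    return 0;
}
```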

dlsym then looks up the function named "input_init" in the freshly loaded plugin and stores its address in global.in[i].init; stop, run and cmd are resolved the same way:

```c
global.in[i].init = dlsym(global.in[i].handle, "input_init");
/* ... */
global.in[i].stop = dlsym(global.in[i].handle, "input_stop");
/* ... */
global.in[i].run = dlsym(global.in[i].handle, "input_run");
/* ... */
global.in[i].cmd = dlsym(global.in[i].handle, "input_cmd");
```
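The excerpt elides the error handling around dlopen and dlsym. A sketch of what that handling could look like, using only the standard `<dlfcn.h>` calls; struct loaded_plugin, the function-pointer typedefs and load_input_plugin are hypothetical, and the parameter type is simplified to `void *`. On glibc older than 2.34, link with `-ldl`.

```c
#define _POSIX_C_SOURCE 200809L
#include <dlfcn.h>
#include <stdio.h>

/* Hypothetical pointer types shaped like init/run/stop in struct _input. */
typedef int (*plugin_init_fn)(void *param, int id);
typedef int (*plugin_int_fn)(int id);

struct loaded_plugin {
    void *handle;
    plugin_init_fn init;
    plugin_int_fn run;
    plugin_int_fn stop;
};

/* Looks up one symbol. dlerror() is cleared first so that any error reported
 * afterwards belongs to this dlsym() call. Returns NULL on failure. */
static void *lookup(void *handle, const char *name)
{
    void *sym;
    const char *err;

    dlerror();
    sym = dlsym(handle, name);
    err = dlerror();
    if (err != NULL) {
        fprintf(stderr, "dlsym(%s): %s\n", name, err);
        return NULL;
    }
    return sym;
}

/* Returns 0 on success, -1 if the library or a required symbol is missing. */
static int load_input_plugin(const char *path, struct loaded_plugin *p)
{
    p->handle = dlopen(path, RTLD_LAZY);
    if (p->handle == NULL) {
        fprintf(stderr, "dlopen(%s): %s\n", path, dlerror());
        return -1;
    }
    /* Casting void * to a function pointer is not ISO C, but POSIX
     * requires it to work for dlsym() results. */
    p->init = (plugin_init_fn)lookup(p->handle, "input_init");
    p->run  = (plugin_int_fn)lookup(p->handle, "input_run");
    p->stop = (plugin_int_fn)lookup(p->handle, "input_stop");
    if (p->init == NULL || p->run == NULL || p->stop == NULL) {
        dlclose(p->handle);
        p->handle = NULL;
        return -1;
    }
    return 0;
}
```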

Initialize and start the input plugin:

```c
global.in[i].init(&global.in[i].param, i);
/* ... */
global.in[i].run(i);
```

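input_uvc analysis

The cam_thread thread calls uvcGrab to obtain a frame from the camera driver, then copies the frame (compressing it first if necessary) into input.buf.

In init_v4l2, each of the camera driver's buffers is mapped into user space and its address is stored in the mem array:

```c
vd->buf.index = i;
/* ... */
vd->mem[i] = mmap(0 /* start anywhere */ ,
                  vd->buf.length, PROT_READ | PROT_WRITE, MAP_SHARED, vd->fd,
                  vd->buf.m.offset);
```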

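In uvcGrab, a filled buffer is dequeued:

```c
ret = xioctl(vd->fd, VIDIOC_DQBUF, &vd->buf);
```

Its contents are copied into vd->tmpbuffer:

```c
memcpy(vd->tmpbuffer, vd->mem[vd->buf.index], vd->buf.bytesused);
```

The buffer is then queued again so the driver can refill it:

```c
ret = xioctl(vd->fd, VIDIOC_QBUF, &vd->buf);
```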

Back in cam_thread, the frame is moved into pglobal->in[pcontext->id].buf. If libjpeg support is compiled in (NO_LIBJPEG is not defined) and the camera delivers raw frames (YUYV, UYVY, RGB24 or RGB565), compress_image_to_jpeg compresses the frame to JPEG and writes the result into that buffer. Otherwise, that is, when the camera already delivers JPEG/MJPEG or when NO_LIBJPEG is defined, memcpy_picture copies the data in vd->tmpbuffer into the same buffer without recompressing it:

```c
#ifndef NO_LIBJPEG
if ((pcontext->videoIn->formatIn == V4L2_PIX_FMT_YUYV) ||
    (pcontext->videoIn->formatIn == V4L2_PIX_FMT_UYVY) ||
    (pcontext->videoIn->formatIn == V4L2_PIX_FMT_RGB24) ||
    (pcontext->videoIn->formatIn == V4L2_PIX_FMT_RGB565) ) {
    DBG("compressing frame from input: %d\n", (int)pcontext->id);
    pglobal->in[pcontext->id].size = compress_image_to_jpeg(pcontext->videoIn, pglobal->in[pcontext->id].buf, pcontext->videoIn->framesizeIn, quality);
    /* copy this frame's timestamp to user space */
    pglobal->in[pcontext->id].timestamp = pcontext->videoIn->tmptimestamp;
} else {
#endif
    /* frame is already JPEG/MJPEG (or libjpeg is not available): copy it as-is */
    pglobal->in[pcontext->id].size = memcpy_picture(pglobal->in[pcontext->id].buf, pcontext->videoIn->tmpbuffer, pcontext->videoIn->tmpbytesused);
#ifndef NO_LIBJPEG
}
#endif
```
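The db mutex and db_update condition variable in struct _input connect this producer (cam_thread) with consumers in output_http such as send_stream. The sketch below shows that pattern in isolation. It is a simplification under stated assumptions, not the project's code: struct frame_db is hypothetical, and its seq counter does not exist in struct _input. The counter is added so the waiting side has a condition to re-check, which is the usual way to cope with spurious wakeups and with a signal sent before anyone was waiting.

```c
#include <pthread.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical stand-in for db/db_update/buf/size in struct _input,
 * plus a frame counter that the original struct does not have. */
struct frame_db {
    pthread_mutex_t db;
    pthread_cond_t db_update;
    unsigned char *buf;
    size_t capacity;
    size_t size;
    unsigned long seq;      /* incremented once per published frame */
};

static int frame_db_init(struct frame_db *f, size_t capacity)
{
    f->buf = malloc(capacity);
    if (f->buf == NULL)
        return -1;
    f->capacity = capacity;
    f->size = 0;
    f->seq = 0;
    pthread_mutex_init(&f->db, NULL);
    pthread_cond_init(&f->db_update, NULL);
    return 0;
}

/* Producer side (the role cam_thread plays): copy the frame in under the
 * lock, then wake every waiting client. Returns -1 if the frame is too big. */
static int publish_frame(struct frame_db *f, const unsigned char *data, size_t len)
{
    if (len > f->capacity)
        return -1;
    pthread_mutex_lock(&f->db);
    memcpy(f->buf, data, len);
    f->size = len;
    f->seq++;
    pthread_cond_broadcast(&f->db_update);
    pthread_mutex_unlock(&f->db);
    return 0;
}

/* Consumer side (the role send_stream plays): wait until a frame newer than
 * *last_seq exists, copy it out, and release the lock before any slow I/O.
 * Returns the frame size, or -1 if out is too small (that frame is skipped). */
static long wait_frame(struct frame_db *f, unsigned char *out, size_t out_len,
                       unsigned long *last_seq)
{
    long n;

    pthread_mutex_lock(&f->db);
    while (f->seq == *last_seq)          /* re-check guards against spurious wakeups */
        pthread_cond_wait(&f->db_update, &f->db);
    if (f->size > out_len) {
        n = -1;
    } else {
        memcpy(out, f->buf, f->size);
        n = (long)f->size;
    }
    *last_seq = f->seq;
    pthread_mutex_unlock(&f->db);
    return n;
}
```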

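The compress_image_to_jpeg function

It compresses the image with the libjpeg library.

Core structure:

```c
struct jpeg_compress_struct cinfo;
```

Compression steps:

- dest_buffer: points cinfo->dest at the output buffer (through its outbuffer and outbuffer_cursor fields), so the compressed data is written into buffer
- jpeg_set_defaults and jpeg_set_quality: fill in default parameters, then set the quality
- jpeg_start_compress: start compression
- line_buffer: array holding the R, G and B values of one image row
- jpeg_write_scanlines: write one row of RGB data
- jpeg_finish_compress: finish compression and flush any remaining data to the destination
- jpeg_destroy_compress: release the resources held by cinfo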

```c
int compress_image_to_jpeg(struct vdIn *vd, unsigned char *buffer, int size, int quality)
{
    /* ... */
    dest_buffer(&cinfo, buffer, size, &written);
    /* ... */
    jpeg_set_defaults(&cinfo);
    jpeg_set_quality(&cinfo, quality, TRUE);
    jpeg_start_compress(&cinfo, TRUE);
    /* ... */
    row_pointer[0] = line_buffer;
    jpeg_write_scanlines(&cinfo, row_pointer, 1);
    /* ... */
    jpeg_finish_compress(&cinfo);
    jpeg_destroy_compress(&cinfo);
    free(line_buffer);

    return (written);
}
```
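The elided part of the loop fills line_buffer with one row of RGB values converted from the camera's pixel format. For YUYV input, where every 4 bytes (Y0, U, Y1, V) describe two pixels that share one U and one V sample, the conversion could look like the sketch below. The fixed-point coefficients are a common approximation of full-range BT.601 (1.402, 0.344, 0.714 and 1.772 scaled by 256), and yuyv_line_to_rgb is a hypothetical name; the project's own conversion may differ in detail.

```c
#include <stdint.h>

static uint8_t clamp_u8(int v)
{
    return (uint8_t)(v < 0 ? 0 : (v > 255 ? 255 : v));
}

/* Converts one row of packed YUYV into RGB24 (3 bytes per pixel).
 * width is the number of pixels and must be even. */
static void yuyv_line_to_rgb(const uint8_t *yuyv, uint8_t *rgb, int width)
{
    int x;

    for (x = 0; x < width; x += 2) {
        int u = yuyv[1] - 128;           /* chroma shared by both pixels */
        int v = yuyv[3] - 128;
        int k;

        for (k = 0; k < 2; k++) {
            int y = yuyv[k * 2] << 8;    /* Y0 at offset 0, Y1 at offset 2 */
            *rgb++ = clamp_u8((y + 359 * v) >> 8);            /* R */
            *rgb++ = clamp_u8((y - 88 * u - 183 * v) >> 8);   /* G */
            *rgb++ = clamp_u8((y + 454 * u) >> 8);            /* B */
        }
        yuyv += 4;
    }
}
```

Each converted row would then be handed to jpeg_write_scanlines through row_pointer, one row per call.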

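output_http analysis

The server_thread thread

server_thread binds the IP address and port, starts listening, and adds the listening sockets to a select() set. Whenever a connection arrives, it creates a client_thread to handle it.

- getaddrinfo: resolves the configured host name and port into a list of candidate addresses, stored in aip
- pcontext->sd[i]: a listening socket descriptor
- setsockopt: sets SO_REUSEADDR so the port can be reused, for example rebound immediately after a restart
- bind: binds the IP address and port
- listen: starts listening for connections
- select: I/O multiplexing; it blocks until a listening socket has a pending connection, and on return selectfds contains the sockets that are ready
- accept: accepts the connection and returns a new socket for communicating with the client

Finally, a thread is created for the connection, and the parameters it needs for communication are passed to it.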

```c
getaddrinfo(pcontext->conf.hostname, name, &hints, &aip);
/* ... */
pcontext->sd[i] = socket(aip2->ai_family, aip2->ai_socktype, 0);
setsockopt(pcontext->sd[i], SOL_SOCKET, SO_REUSEADDR, &on, sizeof(on));
/* ... */
bind(pcontext->sd[i], aip2->ai_addr, aip2->ai_addrlen);
/* ... */
listen(pcontext->sd[i], 10);
/* ... */
FD_ZERO(&selectfds);
/* ... */
FD_SET(pcontext->sd[i], &selectfds);
/* ... */
err = select(max_fds + 1, &selectfds, NULL, NULL, NULL);
/* ... */
pcfd->fd = accept(pcontext->sd[i], (struct sockaddr *)&client_addr, &addr_len);
pcfd->pc = pcontext;
/* ... */
pthread_create(&client, NULL, &client_thread, pcfd);
/* ... */
pthread_detach(client);
```
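A condensed, self-contained sketch of the socket setup steps listed above. Unlike server_thread, which keeps one listening socket per address in pcontext->sd[] and waits on all of them with select, this hypothetical open_listener simply returns the first address that binds and listens successfully.

```c
#define _POSIX_C_SOURCE 200809L
#include <netdb.h>
#include <string.h>
#include <sys/socket.h>
#include <sys/types.h>
#include <unistd.h>

/* Opens a listening TCP socket on host:port (host may be NULL for "any").
 * Tries each address getaddrinfo() returns until one binds and listens.
 * Returns the socket descriptor, or -1 on failure. */
static int open_listener(const char *host, const char *port)
{
    struct addrinfo hints, *res, *ai;
    int fd = -1;
    int on = 1;

    memset(&hints, 0, sizeof(hints));
    hints.ai_family = AF_UNSPEC;      /* IPv4 or IPv6 */
    hints.ai_socktype = SOCK_STREAM;
    hints.ai_flags = AI_PASSIVE;      /* wildcard address when host is NULL */

    if (getaddrinfo(host, port, &hints, &res) != 0)
        return -1;

    for (ai = res; ai != NULL; ai = ai->ai_next) {
        fd = socket(ai->ai_family, ai->ai_socktype, ai->ai_protocol);
        if (fd < 0)
            continue;
        /* allow the port to be rebound right after a restart */
        setsockopt(fd, SOL_SOCKET, SO_REUSEADDR, &on, sizeof(on));
        if (bind(fd, ai->ai_addr, ai->ai_addrlen) == 0 && listen(fd, 10) == 0)
            break;
        close(fd);
        fd = -1;
    }
    freeaddrinfo(res);
    return fd;
}
```

For example, open_listener(NULL, "8080") listens on all interfaces on port 8080; the returned descriptor can then be added to an fd_set and passed to select() as in the excerpt.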

The client_thread thread

client_thread first reads the HTTP request, then examines it and performs a different action depending on what was requested:

```c
cnt = _readline(lcfd.fd, &iobuf, buffer, sizeof(buffer) - 1, 5);
/* ... */
if(strstr(buffer, "GET /?action=snapshot") != NULL) {
    req.type = A_SNAPSHOT;
/* ... */

switch(req.type) {
case A_SNAPSHOT_WXP:
case A_SNAPSHOT:
    DBG("Request for snapshot from input: %d\n", input_number);
    send_snapshot(&lcfd, input_number);
    break;
/* ... */
```
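The request dispatch can be illustrated with a small classifier. The enum values and classify_request are hypothetical stand-ins for req.type and its A_* constants; only the "?action=snapshot" and "?action=stream" query strings come from MJPG-Streamer's URL scheme. Plain strstr matching, as in the excerpt, accepts the pattern anywhere in the line, so this sketch additionally requires the line to start with GET.

```c
#include <string.h>

/* Hypothetical request kinds, loosely modelled on req.type values such as A_SNAPSHOT. */
enum request_kind { REQ_UNKNOWN, REQ_SNAPSHOT, REQ_STREAM, REQ_FILE };

/* Classifies an HTTP request line such as "GET /?action=stream HTTP/1.1". */
static enum request_kind classify_request(const char *line)
{
    if (strncmp(line, "GET ", 4) != 0)
        return REQ_UNKNOWN;                 /* only GET is handled in this sketch */
    if (strstr(line, "GET /?action=snapshot") != NULL)
        return REQ_SNAPSHOT;                /* a single JPEG image */
    if (strstr(line, "GET /?action=stream") != NULL)
        return REQ_STREAM;                  /* the multipart MJPG stream */
    if (strncmp(line, "GET /", 5) == 0)
        return REQ_FILE;                    /* e.g. a static file from the -w folder */
    return REQ_UNKNOWN;
}
```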

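The send_stream function

First, the HTTP response header is sent. Its Content-Type, multipart/x-mixed-replace, tells the browser that each following part replaces the previous one, which is what turns a sequence of JPEG images into a moving picture:

```c
sprintf(buffer, "HTTP/1.0 200 OK\r\n" \
        "Access-Control-Allow-Origin: *\r\n" \
        STD_HEADER \
        "Content-Type: multipart/x-mixed-replace;boundary=" BOUNDARY "\r\n" \
        "\r\n" \
        "--" BOUNDARY "\r\n");
write(context_fd->fd, buffer, strlen(buffer));
```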

Then, for every frame, the data in pglobal->in[input_number].buf is copied into the local frame buffer while the input's db mutex is held, and the mutex is released before any network I/O.

Each frame is sent as one part: a part header, then the JPEG data, and finally the boundary that separates it from the next part:

```c
memcpy(frame, pglobal->in[input_number].buf, frame_size);
pthread_mutex_unlock(&pglobal->in[input_number].db);

// send the part header
sprintf(buffer, "Content-Type: image/jpeg\r\n" \
        "Content-Length: %d\r\n" \
        "X-Timestamp: %d.%06d\r\n" \
        "\r\n", frame_size, (int)timestamp.tv_sec, (int)timestamp.tv_usec);
DBG("sending intermediate header\n");
if(write(context_fd->fd, buffer, strlen(buffer)) < 0) break;

// send the frame data
DBG("sending frame\n");
if(write(context_fd->fd, frame, frame_size) < 0) break;

// send the boundary that ends this part
DBG("sending boundary\n");
sprintf(buffer, "\r\n--" BOUNDARY "\r\n");
if(write(context_fd->fd, buffer, strlen(buffer)) < 0) break;
```
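The loop above treats only a negative return from write as an error, but write on a socket can also return a short count (for example after being interrupted by a signal), leaving part of a frame unsent. The sketch below shows a write_all helper that loops until everything is written, plus an snprintf-based version of the part header that cannot overflow its buffer. Both function names are hypothetical, and printing the timestamp as long rather than int is a deliberate change from the excerpt.

```c
#define _POSIX_C_SOURCE 200809L
#include <errno.h>
#include <stdio.h>
#include <string.h>
#include <sys/time.h>
#include <unistd.h>

/* Keeps calling write() until all len bytes are out.
 * Returns 0 on success, -1 on error. */
static int write_all(int fd, const void *data, size_t len)
{
    const unsigned char *p = data;

    while (len > 0) {
        ssize_t n = write(fd, p, len);
        if (n < 0) {
            if (errno == EINTR)
                continue;            /* interrupted before writing: retry */
            return -1;
        }
        p += n;
        len -= (size_t)n;
    }
    return 0;
}

/* Formats the per-frame part header. Returns its length, or -1 if buf is too small. */
static int format_part_header(char *buf, size_t buflen, int frame_size, struct timeval ts)
{
    int n = snprintf(buf, buflen,
                     "Content-Type: image/jpeg\r\n"
                     "Content-Length: %d\r\n"
                     "X-Timestamp: %ld.%06ld\r\n"
                     "\r\n",
                     frame_size, (long)ts.tv_sec, (long)ts.tv_usec);
    return (n < 0 || (size_t)n >= buflen) ? -1 : n;
}
```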
