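Logistic Regression

Logistic regression is a generalized linear model used for classification in machine learning, mainly for binary classification problems. Its basic idea is to pass the output of a linear regression model through a logistic function, which maps the predicted value to a probability between 0 and 1. That probability is then used to model and predict the two classes.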

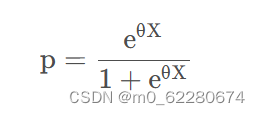
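To make the mapping from a linear score to a probability concrete, here is a minimal sketch. The function name `predict_proba` and the weight and sample values are hypothetical, chosen only for illustration; they do not come from the original post.

```python
import numpy as np

def predict_proba(x, w):
    """Return the estimated P(y = 1 | x) for one sample x under weights w."""
    z = np.dot(w, x)                  # linear score, as in linear regression
    return 1.0 / (1.0 + np.exp(-z))   # logistic function squashes z into (0, 1)

w = np.array([0.5, -1.0, 2.0])   # hypothetical weights
x = np.array([1.0, 2.0, 1.0])    # hypothetical sample; the first entry acts as a bias term
p = predict_proba(x, w)
label = 1 if p > 0.5 else 0      # threshold the probability to get a class
print(p, label)
```

A score of zero corresponds to a probability of exactly 0.5, which is why 0.5 is the usual decision threshold.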

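The logistic mathematical model

In practice, the model can be expressed with a sigmoid function:

$$\sigma(z) = \frac{1}{1 + e^{-z}}$$

Worked example: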

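Sigmoid function implementation:

    from numpy import exp

    def sigmoid(inX):
        # Map any real input (scalar, array or matrix) into the interval (0, 1).
        return 1.0 / (1 + exp(-inX))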
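For inputs that are large and negative, `exp(-inX)` can overflow and NumPy then emits a runtime warning. The following is a minimal sketch of a numerically safer variant, assuming array input. The name `stable_sigmoid` is hypothetical and is not part of the original code.

```python
import numpy as np

def stable_sigmoid(z):
    """Sigmoid that only ever exponentiates non-positive numbers."""
    z = np.atleast_1d(np.asarray(z, dtype=float))
    out = np.empty_like(z)
    pos = z >= 0
    # For z >= 0, exp(-z) is at most 1, so it cannot overflow.
    out[pos] = 1.0 / (1.0 + np.exp(-z[pos]))
    # For z < 0, use the equivalent form exp(z) / (1 + exp(z)).
    ez = np.exp(z[~pos])
    out[~pos] = ez / (1.0 + ez)
    return out

print(stable_sigmoid([-1000.0, 0.0, 1000.0]))
```

Note that this sketch always returns an array, even for a single input value.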

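Classification function:

    from numpy import asarray, dot

    def classifyVector(inX, weights):
        # Flatten the weights so that both an (n, 1) matrix and a 1-D array work.
        w = asarray(weights, dtype=float).ravel()
        prob = sigmoid(dot(inX, w))
        # Threshold the predicted probability at 0.5.
        if prob > 0.5:
            return 1.0
        else:
            return 0.0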

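Finding the best-fit coefficients:

    from numpy import asmatrix, ones, shape

    def gradAscent(dataMatIn, classLabels):
        # asmatrix is used in place of the mat alias, which NumPy 2.0 removed.
        dataMatrix = asmatrix(dataMatIn)                # m x n feature matrix
        labelMat = asmatrix(classLabels).transpose()    # m x 1 column of labels
        m, n = shape(dataMatrix)
        alpha = 0.001       # step size
        maxCycles = 500     # number of iterations
        weights = ones((n, 1))
        for k in range(maxCycles):
            h = sigmoid(dataMatrix * weights)           # predicted probabilities, m x 1
            error = (labelMat - h)                      # labels minus predictions
            # Step in the direction that increases the log-likelihood.
            weights = weights + alpha * dataMatrix.transpose() * error
        return weights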
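The update inside the loop of `gradAscent` can be read as gradient ascent on the log-likelihood. A brief derivation follows, where the symbols $X$, $y$, $w$, $h$ and $\alpha$ are notation introduced here to mirror `dataMatrix`, `labelMat`, `weights`, `h` and `alpha`:

$$
\ell(w) = \sum_{i=1}^{m} \left[ y_i \log h_i + (1 - y_i) \log (1 - h_i) \right], \qquad h_i = \sigma(x_i^{\top} w)
$$

$$
\sigma'(z) = \sigma(z)\,\bigl(1 - \sigma(z)\bigr)
\;\;\Longrightarrow\;\;
\frac{\partial \ell}{\partial w} = \sum_{i=1}^{m} (y_i - h_i)\, x_i = X^{\top} (y - h)
$$

$$
w \leftarrow w + \alpha\, X^{\top} (y - h)
$$

The term $y - h$ is the `error` vector in the code, which is why the weights are increased by `alpha * dataMatrix.transpose() * error` in each cycle.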

Data processing:

    from numpy import array

    def logisticTest1():
        trainingSet = []
        trainingLabels = []
        # Each line holds three tab-separated features followed by the class label.
        with open(r'G:\logistic\test\Train.txt') as frTrain:
            for line in frTrain:
                currLine = line.strip().split('\t')
                lineArr = [float(currLine[i]) for i in range(3)]
                trainingSet.append(lineArr)
                trainingLabels.append(float(currLine[3]))
        trainWeights = gradAscent(array(trainingSet), trainingLabels)
        errorCount = 0
        numTestVec = 0.0
        with open(r'G:\logistic\test\test.txt') as frTest:
            for line in frTest:
                numTestVec += 1.0
                currLine = line.strip().split('\t')
                lineArr = [float(currLine[i]) for i in range(3)]
                # Parse the label via float so that both "1" and "1.0" are accepted.
                if int(classifyVector(array(lineArr), trainWeights)) != int(float(currLine[3])):
                    errorCount += 1
        errorRate = (float(errorCount) / numTestVec)
        print("Test error rate: %f" % errorRate)
        return errorRate
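The data files above live on the author's machine, so the following self-contained sketch runs the same train-then-test procedure on synthetic data instead. Everything in it is an assumption made for illustration: the helper names (`grad_ascent`, `error_rate`, `make_data`), the random seed, and the rule that generates the labels. It uses plain NumPy arrays rather than matrices.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def grad_ascent(X, y, alpha=0.001, max_cycles=500):
    """Batch gradient ascent on the log-likelihood; returns a 1-D weight vector."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    w = np.ones(X.shape[1])
    for _ in range(max_cycles):
        h = sigmoid(X @ w)                 # predicted probabilities
        w = w + alpha * X.T @ (y - h)      # same update rule as gradAscent
    return w

def error_rate(X, y, w):
    """Fraction of samples whose thresholded prediction differs from the label."""
    pred = (sigmoid(X @ w) > 0.5).astype(float)
    return float(np.mean(pred != y))

def make_data(n, rng):
    # Hypothetical data: a bias column plus two features; label is 1 when x1 + x2 > 0.
    feats = rng.normal(size=(n, 2))
    X = np.hstack([np.ones((n, 1)), feats])
    y = (feats[:, 0] + feats[:, 1] > 0).astype(float)
    return X, y

rng = np.random.default_rng(0)
X_train, y_train = make_data(200, rng)
X_test, y_test = make_data(100, rng)

w = grad_ascent(X_train, y_train)
err = error_rate(X_test, y_test, w)
print("Test error rate on synthetic data: %f" % err)
```

Because the synthetic classes are linearly separable, the error rate here should be low. It says nothing about the error rate on the author's own data set.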

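Run results:

Summary:

Logistic regression has several advantages: it is easy to implement and easy to understand. However, it is also prone to underfitting in some situations, so whether it suits a given problem should be judged case by case.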
