Download the Qwen1.5 source code from GitHub.

Modify finetune.sh

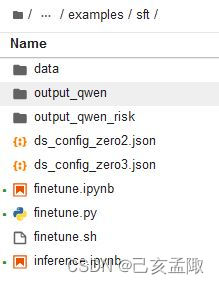

Then, under qwen1_5/examples/sft, edit finetune.sh so that its contents are as follows:

#!/bin/bash
export CUDA_DEVICE_MAX_CONNECTIONS=1
DIR=`pwd`

# Guide:
# This script supports distributed training on multi-gpu workers (as well as single-worker training).
# Please set the options below according to the comments.
# For multi-gpu workers training, these options should be manually set for each worker.
# After setting the options, please run the script on each worker.

# Number of GPUs per GPU worker
GPUS_PER_NODE=$(python -c 'import torch; print(torch.cuda.device_count())')

# Number of GPU workers, for single-worker training, please set to 1
NNODES=${NNODES:-1}

# The rank of this worker, should be in {0, ..., WORKER_CNT-1}, for single-worker training, please set to 0
NODE_RANK=${NODE_RANK:-0}

# The ip address of the rank-0 worker, for single-worker training, please set to localhost
MASTER_ADDR=${MASTER_ADDR:-localhost}

# The port for communication
MASTER_PORT=${MASTER_PORT:-6010}

MODEL="Qwen/Qwen1.5-7B" # Set the path if you do not want to load from huggingface directly
# ATTENTION: specify the path to your training data, which should be a json file consisting of a list of conversations.
# See the section for finetuning in README for more information.
DATA="path_to_data"
DS_CONFIG_PATH="finetune/ds_config_zero3.json"
USE_LORA=False
Q_LORA=False

function usage() {
    echo '
Usage: bash finetune/finetune_lora_ds.sh [-m MODEL_PATH] [-d DATA_PATH] [--deepspeed DS_CONFIG_PATH] [--use_lora USE_LORA] [--q_lora Q_LORA]
'
}

while [[ "$1" != "" ]]; do
    case $1 in
        -m | --model )
            shift
            MODEL=$1
            ;;
        -d | --data )
            shift
            DATA=$1
            ;;
        --deepspeed )
            shift
            DS_CONFIG_PATH=$1
            ;;
        --use_lora )
            shift
            USE_LORA=$1
            ;;
        --q_lora )
            shift
            Q_LORA=$1
            ;;
        -h | --help )
            usage
            exit 0
            ;;
        * )
            echo "Unknown argument ${1}"
            exit 1
            ;;
    esac
    shift
done

DISTRIBUTED_ARGS="
    --nproc_per_node $GPUS_PER_NODE \
    --nnodes $NNODES \
    --node_rank $NODE_RANK \
    --master_addr $MASTER_ADDR \
    --master_port $MASTER_PORT
"

torchrun $DISTRIBUTED_ARGS finetune.py \
    --model_name_or_path $MODEL \
    --data_path $DATA \
    --bf16 True \
    --output_dir output_qwen \
    --num_train_epochs 5 \
    --per_device_train_batch_size 2 \
    --per_device_eval_batch_size 1 \
    --gradient_accumulation_steps 8 \
    --evaluation_strategy "no" \
    --save_strategy "steps" \
    --save_steps 10 \
    --save_total_limit 10 \
    --learning_rate 3e-4 \
    --weight_decay 0.01 \
    --adam_beta2 0.95 \
    --warmup_ratio 0.01 \
    --lr_scheduler_type "cosine" \
    --logging_steps 1 \
    --report_to "none" \
    --model_max_length 512 \
    --lazy_preprocess True \
    --use_lora ${USE_LORA} \
    --q_lora ${Q_LORA} \
    --gradient_checkpointing \
    --deepspeed ${DS_CONFIG_PATH}
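
As a rough sanity check on the hyperparameters in the script, the sketch below works out the effective global batch size and which checkpoint steps `--save_steps 10` would produce. The helper name `training_schedule`, the GPU count, and the dataset size of 224 samples are illustrative assumptions, not values from this post. The step count follows the usual rule of one optimizer step per `per_device_train_batch_size × gradient_accumulation_steps × num_gpus` samples; the trainer's exact rounding on a partial final batch may differ.

```python
import math


def training_schedule(num_samples, num_gpus, per_device_batch=2,
                      grad_accum=8, epochs=5, save_steps=10):
    """Estimate the effective batch size, total optimizer steps and
    checkpoint steps for the settings used in finetune.sh."""
    # Samples consumed per optimizer step across all GPUs.
    effective_batch = per_device_batch * grad_accum * num_gpus
    # Approximate optimizer steps per epoch (rounding is an assumption).
    steps_per_epoch = math.ceil(num_samples / effective_batch)
    total_steps = steps_per_epoch * epochs
    # A checkpoint is written every `save_steps` optimizer steps.
    checkpoints = list(range(save_steps, total_steps + 1, save_steps))
    return effective_batch, total_steps, checkpoints


if __name__ == "__main__":
    # Hypothetical run: 224 training samples on a single GPU.
    batch, steps, ckpts = training_schedule(num_samples=224, num_gpus=1)
    print("effective batch size:", batch)
    print("total optimizer steps:", steps)
    print("checkpoint steps:", ckpts)
```

With `--save_total_limit 10`, only the ten most recent of these checkpoint directories are kept under `output_qwen`.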

Training

(Open a bash shell in qwen1_5/examples/sft and run finetune.sh there; do not run it from Jupyter.)

pip install transformers==4.37.0

# Run this from the command line.

# To avoid multi-GPU training, first run: export CUDA_VISIBLE_DEVICES=0

bash finetune.sh -m "/opt/app-root/src/Qwen1.5-14B-Chat" -d "./data/traindata.jsonl" --deepspeed "ds_config_zero3.json" --use_lora True

Inference

(Create inference.py in qwen1_5/examples/sft.)
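
The inference script hard-codes a checkpoint directory such as `output_qwen/checkpoint-70`. If the final step number is not known in advance, the most recent checkpoint could be looked up along these lines. This is a minimal sketch: `latest_checkpoint` is a hypothetical helper, and it assumes only that checkpoints are saved as `checkpoint-<step>` subdirectories of the output directory.

```python
import re
from pathlib import Path


def latest_checkpoint(output_dir):
    """Return the checkpoint-<step> subdirectory with the highest step,
    or None if the directory is missing or holds no checkpoints."""
    root = Path(output_dir)
    if not root.is_dir():
        return None
    pattern = re.compile(r"^checkpoint-(\d+)$")
    candidates = []
    for child in root.iterdir():
        match = pattern.match(child.name)
        if match and child.is_dir():
            # Compare steps numerically so checkpoint-70 beats checkpoint-9.
            candidates.append((int(match.group(1)), child))
    if not candidates:
        return None
    return max(candidates, key=lambda item: item[0])[1]


if __name__ == "__main__":
    print(latest_checkpoint("output_qwen"))
```

The returned path can then be used as the value of `path` in inference.py.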

pip install transformers==4.37.0

import os

# Select the GPU before transformers (and torch) are imported.
os.environ["CUDA_VISIBLE_DEVICES"] = "0"

from transformers import AutoModelForCausalLM, AutoTokenizer

device = "cuda"  # the device to load the model onto
path = "output_qwen/checkpoint-70"

model = AutoModelForCausalLM.from_pretrained(
    path,
    torch_dtype="auto",
    device_map="cuda:0"
)
tokenizer = AutoTokenizer.from_pretrained(path)


def predict_answer(messages):
    # Render the chat messages into a single prompt string.
    text = tokenizer.apply_chat_template(
        messages,
        tokenize=False,
        add_generation_prompt=True
    )
    model_inputs = tokenizer([text], return_tensors="pt").to(device)
    generated_ids = model.generate(
        model_inputs.input_ids,
        max_new_tokens=512,
    )
    # Drop the prompt tokens so that only the newly generated tokens remain.
    generated_ids = [
        output_ids[len(input_ids):] for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)
    ]
    response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
    return response


text = "xxxx"
# The prompt is kept in Chinese. It asks the model to split a chapter of a bid
# document into subsections, one per information point, forming an outline that
# covers every point, avoids elisions such as "same as above" or "omitted",
# and keeps the original wording where possible.
messages = [{"role": "user", "content": "我需要起草投标文件中的一个章节,章节内容为:\n\n\n{}\n\n\n\n请将章节内容拆分成多个小节,每个小节覆盖一个信息点,形成一份本章节的提纲。注意,要覆盖所有信息点,不要使用‘同上、略’等省略表述,尽可能保持原文的措词。".format(text)}]
response = predict_answer(messages)
print(response)

Training data format

The data is in JSONL format, one JSON object per line, stored under qwen1_5/examples/sft/data; the file can be named traindata.jsonl, for example.

{"type": "chatml", "messages": [{"role": "user", "content": "PROMPT"}, {"role": "assistant", "content": "ANSWER"}], "source": "self-made"}
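
To catch formatting mistakes before launching a run, a small checker along these lines could be run over the file. This is a minimal sketch: `validate_line` and `validate_file` are hypothetical helpers. The rules they enforce (a non-empty `messages` list whose entries carry a known `role` and a string `content`) are assumptions inferred from the sample record above, not a schema taken from the Qwen code.

```python
import json

# Assumed set of roles; the sample record only shows "user" and "assistant".
ALLOWED_ROLES = {"system", "user", "assistant"}


def validate_line(line):
    """Return a list of problems found in one JSONL record (empty if OK)."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError as exc:
        return ["invalid JSON: " + exc.msg]
    if not isinstance(record, dict):
        return ["record is not a JSON object"]
    messages = record.get("messages")
    if not isinstance(messages, list) or not messages:
        return ["'messages' must be a non-empty list"]
    problems = []
    for i, msg in enumerate(messages):
        if not isinstance(msg, dict):
            problems.append("message %d is not an object" % i)
            continue
        role = msg.get("role")
        if not isinstance(role, str) or role not in ALLOWED_ROLES:
            problems.append("message %d has unexpected role %r" % (i, role))
        if not isinstance(msg.get("content"), str):
            problems.append("message %d has non-string content" % i)
    return problems


def validate_file(path):
    """Yield (line_number, problems) for every non-blank line with problems."""
    with open(path, encoding="utf-8") as handle:
        for number, line in enumerate(handle, start=1):
            if not line.strip():
                continue
            problems = validate_line(line)
            if problems:
                yield number, problems


if __name__ == "__main__":
    for number, problems in validate_file("data/traindata.jsonl"):
        print(number, problems)
```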
