Troubleshooting inference after merging a LoRA adapter fine-tuned with the peft library

First, merge the LoRA adapter into the base model:

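```python
from transformers import AutoTokenizer, Qwen2VLForConditionalGeneration
from peft import PeftModel
import torch

# Raw strings so the Windows backslashes are not treated as escape sequences
base_model_path = r"E:\ModelScopeModels\hub\Qwen\Qwen2-VL-2B-Instruct"
lora_model_path = r"E:\LLm_finetuning\Qwen2-VL-2B-LatexOCR\checkpoint-124"
merged_model_path = r"E:\ModelScopeModels\hub\Qwen\Qwen2-VL-2B-Instruct-LaTexOCR-merge"

# Load the base model in fp16
model = Qwen2VLForConditionalGeneration.from_pretrained(
    base_model_path, torch_dtype=torch.float16, trust_remote_code=True
)
tokenizer = AutoTokenizer.from_pretrained(base_model_path)
print(model)  # inspect the module names (q_proj, v_proj, ...) targeted by LoRA

# Attach the LoRA adapter from the checkpoint, then fold it into the base weights
model = PeftModel.from_pretrained(model, lora_model_path)
merged_model = model.merge_and_unload()

# These two calls write the merged weights/config and the tokenizer files only;
# processor-side files such as chat_template.json are not written here
merged_model.save_pretrained(merged_model_path)
tokenizer.save_pretrained(merged_model_path)

print(f"merged model saved to {merged_model_path}")
```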
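For a standard LoRA layer, what `merge_and_unload()` does can be sketched in plain Python: the low-rank update `(lora_alpha / r) * B @ A` is added to the frozen weight `W`, after which the adapter is no longer needed and the merged layer produces the same output as base plus adapter. This is a minimal illustration assuming plain LoRA (no rsLoRA or DoRA) and tiny made-up matrices; the helper names (`merge_lora`, `forward_with_adapter`) are hypothetical and this is not PEFT's own code.

```python
# Minimal sketch of LoRA weight merging (plain LoRA; not PEFT's implementation).
# Shapes: W is (out x in), A is (r x in), B is (out x r); matrices are lists of rows.

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def add(X, Y):
    """Element-wise sum of two equally shaped matrices."""
    return [[x + y for x, y in zip(rx, ry)] for rx, ry in zip(X, Y)]

def scale(X, s):
    """Multiply every entry of X by the scalar s."""
    return [[s * x for x in row] for row in X]

def merge_lora(W, A, B, lora_alpha, r):
    """Return W + (lora_alpha / r) * B @ A, i.e. the adapter folded into W."""
    return add(W, scale(matmul(B, A), lora_alpha / r))

def forward_with_adapter(W, A, B, lora_alpha, r, x):
    """Unmerged forward pass W x + (lora_alpha / r) * B (A x); x is a column vector."""
    base = matmul(W, x)
    delta = scale(matmul(B, matmul(A, x)), lora_alpha / r)
    return add(base, delta)

# Hypothetical toy values with rank r = 1
W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 2.0]]      # r x in
B = [[0.5], [-1.0]]   # out x r
x = [[3.0], [4.0]]    # input column vector
lora_alpha, r = 2, 1

W_merged = merge_lora(W, A, B, lora_alpha, r)
print(W_merged)                                                           # [[2.0, 2.0], [-2.0, -3.0]]
print(matmul(W_merged, x) == forward_with_adapter(W, A, B, lora_alpha, r, x))  # True
```

The equality check is the reason merging is safe for inference: once the update is baked into `W`, the extra `A`/`B` matmuls disappear without changing the layer's output (up to floating-point rounding in fp16).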

Then run inference with the merged model:

```python
from transformers import Qwen2VLForConditionalGeneration, AutoProcessor
from qwen_vl_utils import process_vision_info

# Prompt (English): "You are a LaTeX OCR assistant; your goal is to read the
# user's input image and convert it into a LaTeX formula."
prompt = "你是一个LaText OCR助手,目标是读取用户输入的照片,转换成LaTex公式。"
merged_model_path = r"xxxxxx\Qwen\Qwen2-VL-2B-Instruct-merge"
test_image_path = "997.jpg"

# The LoRA weights are already merged into the model, so no PeftModel or
# LoraConfig is needed at inference time
model = Qwen2VLForConditionalGeneration.from_pretrained(
    merged_model_path, torch_dtype="auto", device_map="auto"
)
processor = AutoProcessor.from_pretrained(merged_model_path)

messages = [
    {
        "role": "user",
        "content": [
            {
                "type": "image",
                "image": test_image_path,
                "resized_height": 100,
                "resized_width": 500,
            },
            {"type": "text", "text": prompt},
        ],
    }
]

# Build the chat-formatted prompt; this call fails if the processor has no chat template
text = processor.apply_chat_template(
    messages, tokenize=False, add_generation_prompt=True
)
image_inputs, video_inputs = process_vision_info(messages)
inputs = processor(
    text=[text],
    images=image_inputs,
    videos=video_inputs,
    padding=True,
    return_tensors="pt",
)
# Move inputs to the device the model was placed on by device_map="auto"
inputs = inputs.to(model.device)

generated_ids = model.generate(**inputs, max_new_tokens=8192)
# generate() returns prompt + completion for each row; keep only the new tokens
generated_ids_trimmed = [
    out_ids[len(in_ids):] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)
]
output_text = processor.batch_decode(
    generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False
)
print(output_text[0])
```
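The trimming step works because, for a decoder-only model like Qwen2-VL, each row returned by `generate()` starts with the (padded) prompt tokens and continues with the newly generated ones. Since the processor pads every prompt in the batch to the same length, slicing each output at `len(in_ids)` removes exactly the prompt. A pure-Python sketch with hypothetical token ids (here `0` stands in for a left-padding id and `2` for an end-of-sequence id):

```python
def trim_prompt(input_ids, generated_ids):
    """Drop the prompt prefix from each generated sequence (lists of token ids)."""
    return [out_ids[len(in_ids):] for in_ids, out_ids in zip(input_ids, generated_ids)]

# Hypothetical ids: two prompts padded to length 4, each followed by new tokens
input_ids = [[0, 0, 11, 12], [21, 22, 23, 24]]
generated_ids = [[0, 0, 11, 12, 101, 102, 2], [21, 22, 23, 24, 201, 2, 2]]
print(trim_prompt(input_ids, generated_ids))  # [[101, 102, 2], [201, 2, 2]]
```

Any trailing end-of-sequence or padding ids left after trimming are dropped by `batch_decode(..., skip_special_tokens=True)`.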

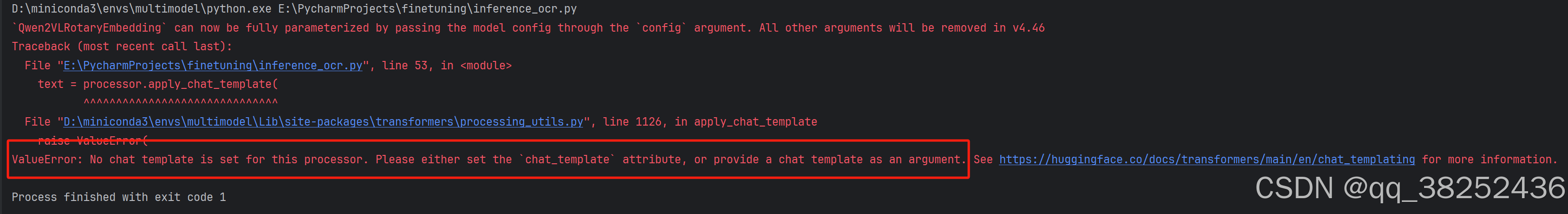

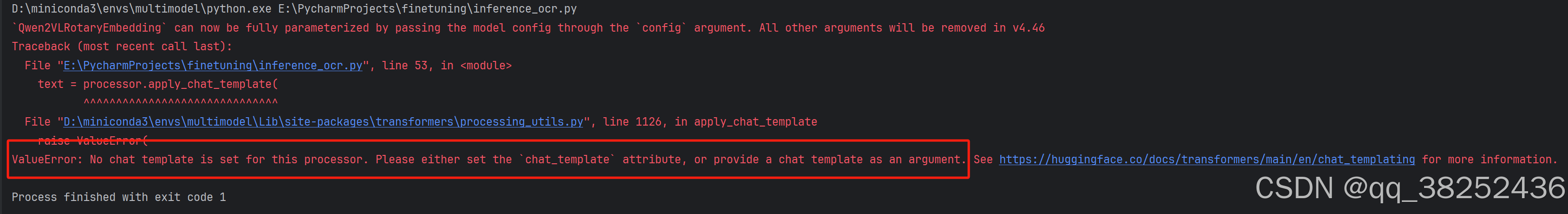

After merging, running inference with the merged model may fail with the following error: No chat template is set for this processor.

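The cause is that the merged model directory is missing the chat_template.json file. The merge script saves only the model (merged_model.save_pretrained) and the tokenizer (tokenizer.save_pretrained), and neither call writes the processor's chat_template.json. To fix it, copy chat_template.json from the original base model directory into the merged model directory.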
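The copy can be scripted so it is not forgotten after each re-merge. This is a minimal sketch using only the standard library; the helper names `missing_files` and `copy_missing_files` are hypothetical. By default it copies only chat_template.json, as described above. `missing_files` just lists other top-level files the base directory has and the merged one lacks; whether any of those are also needed is an assumption to check case by case.

```python
import shutil
from pathlib import Path

def missing_files(base_dir, merged_dir):
    """List top-level files present in base_dir but absent from merged_dir."""
    base = {p.name for p in Path(base_dir).iterdir() if p.is_file()}
    merged = {p.name for p in Path(merged_dir).iterdir() if p.is_file()}
    return sorted(base - merged)

def copy_missing_files(base_dir, merged_dir, filenames=("chat_template.json",)):
    """Copy each named file from base_dir to merged_dir if merged_dir lacks it.

    Returns the names of the files that were actually copied.
    """
    base_dir, merged_dir = Path(base_dir), Path(merged_dir)
    copied = []
    for name in filenames:
        src, dst = base_dir / name, merged_dir / name
        # Only copy files that exist in the base model and are absent from the merge
        if src.is_file() and not dst.exists():
            shutil.copy2(src, dst)
            copied.append(name)
    return copied
```

Typical use is to call `print(missing_files(base_model_path, merged_model_path))` and then `copy_missing_files(base_model_path, merged_model_path)` with the paths from the merge script, and rerun the inference script afterwards. Existing files in the merged directory are never overwritten.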
