1、logistic回归梯度上升优化算法

'''

logistic回归梯度上升优化算法

'''

def loadDataSet():

'''

读取testSet.txt文件

---------------

返回:

dataMat:list,特征

labelMat:list,标签

'''

dataMat = [] ; labelMat = []

fr = open('D:\\spyder_code\\machinelearning\\逻辑斯蒂回归和最大熵模型\\testSet.txt')

for line in fr.readlines():

lineArr = line.strip().split()

dataMat.append([1.0 ,float(lineArr[0]) ,float(lineArr[1])])

labelMat.append(int(lineArr[2]))

return dataMat,labelMat

def sigmoid(inX):

'''

sigmoid函数(一种阶跃函数)

'''

return 1.0/(1+np.exp(-inX))

def gradAscent(dataMatin, classLabelss):

'''

梯度上升算法

---------------

参数

dataMatin:list,特征

classLabelss:list,标签

---------------

返回

weights:numpy.matrix 3x1,权重

'''

dataMatrix = np.mat(dataMatin)

labelMat = np.mat(classLabelss).transpose()

m,n = np.shape(dataMatrix)

alpha = 0.001

maxCycles = 500

weights = np.ones((n,1))

for k in range(maxCycles):

h = sigmoid(dataMatrix*weights)

error = (labelMat - h)

weights = weights + alpha*dataMatrix.transpose()*error

return weights

dataMat,labelMat = loadDataSet()

weights = gradAscent(dataMat,labelMat)

#%%

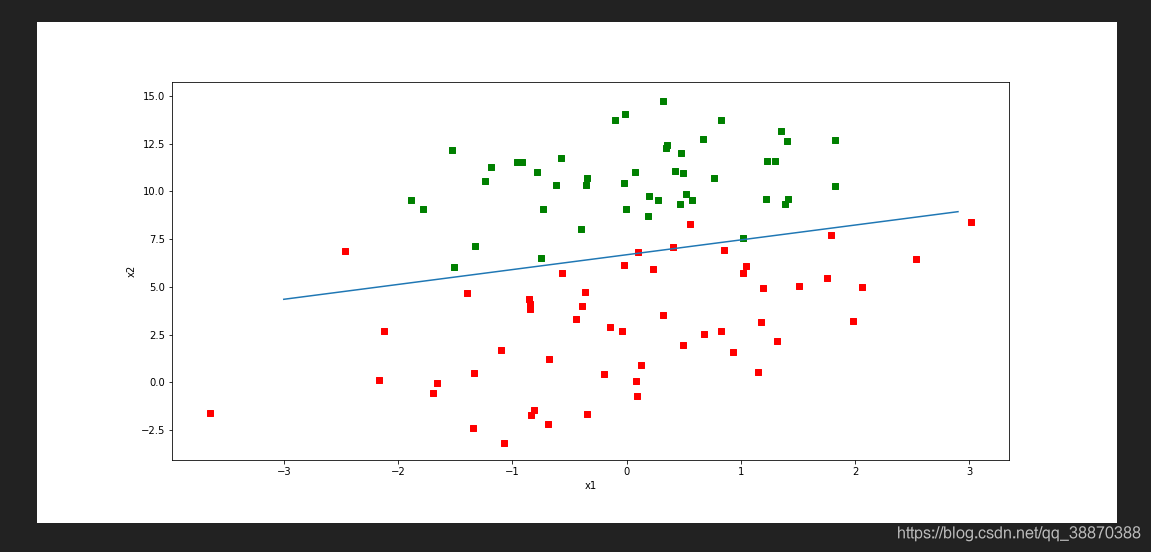

'''

画出数据集和Logistic回归最佳拟合直线函数

'''

def plotBestFit(wei):

matplotlib.use('Qt5Agg')

weights = wei.getA()

dataMat,labelMat = loadDataSet()

dataArr = np.array(dataMat)

n = np.shape(dataArr)[0]

xcord1 = [];ycord1 = []

xcord2 = [];ycord2 = []

for i in range(n):

if int(labelMat[i]) == 1:

xcord1.append(dataArr[i,1]);ycord1.append(dataArr[i,2])

else:

xcord2.append(dataArr[i,1]);ycord2.append(dataArr[i,2])

fig = plt.figure(figsize=(15,7))

ax = fig.add_subplot(111)

ax.scatter(xcord1,ycord1,s=30,c='red',marker='s')

ax.scatter(xcord2,ycord2,s=30,c='green',marker='s')

x = np.arange(-3,3,0.1)

y = (-weights[0] - weights[1]*x)/weights[2]

ax.plot(x,y)

plt.xlabel('x1');plt.ylabel('x2')

plt.show()

plotBestFit(weights)

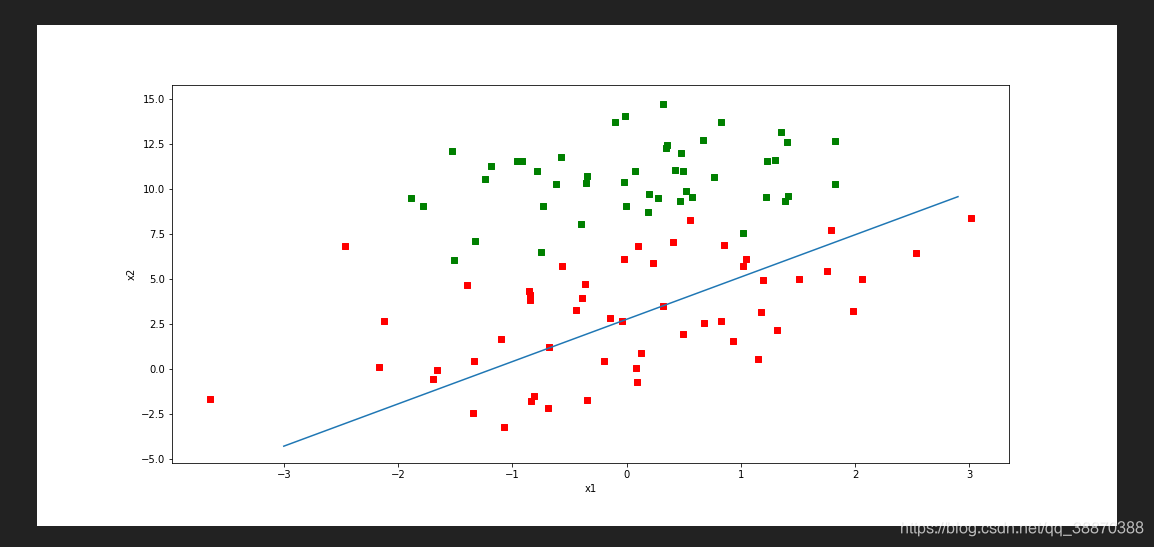

2、训练算法:随机梯度上升

'''

训练算法:随机梯度上升

'''

'''

所有回归系数初始化为1

对数据集中每个样本

计算该样本的梯度

使用alpha x gradient更新回归系数值

返回回归系数值

'''

def stocGraAscent(dataMatrix,classLabels):

'''

随机梯度上升算法

---------------

参数

dataMatin:list,特征

classLabelss:list,标签

---------------

返回

weights:numpy.matrix 3x1,权重

'''

dataMatrix = np.array(dataMatrix)

m,n = np.shape(dataMatrix)

alpha = 0.01

weights = np.ones(n)

for i in range(m):

h = sigmoid(sum(dataMatrix[i]*weights))

error = classLabels[i] - h

weights = weights + alpha * error * dataMatrix[i]

return np.mat(weights).transpose()

weights_01 = stocGraAscent(dataMat,labelMat)

plotBestFit(weights_01)

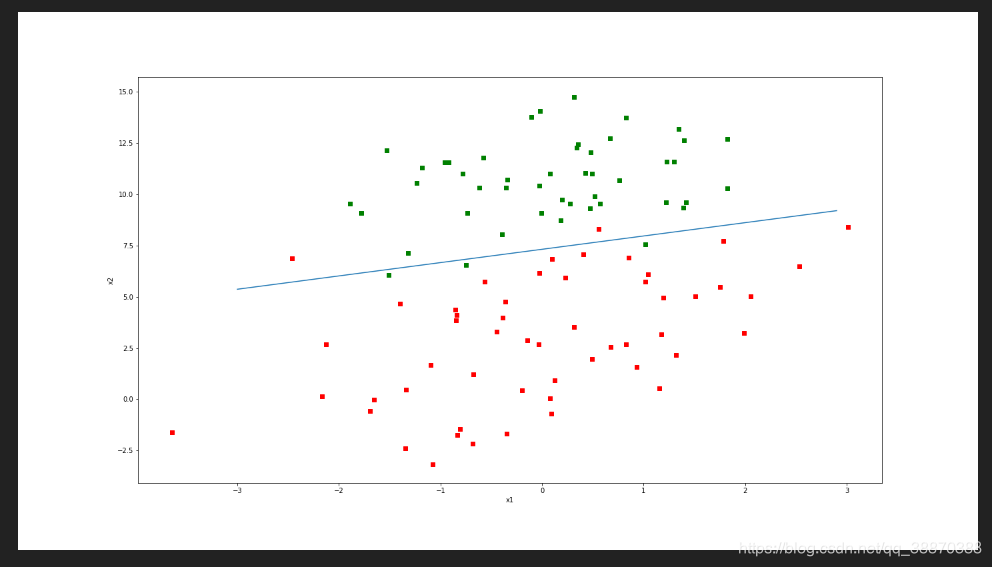

3、改进的随机梯度上升算法

def stocGradAscent1(dataMatrix,classLabels,numIter=150):

'''

随机梯度上升算法

---------------

参数

dataMatin:list,特征

classLabelss:list,标签

---------------

返回

weights:numpy.matrix 3x1,权重

'''

m,n = np.shape(dataMatrix)

weights = np.ones(n)

for j in range(numIter):

data = np.array(dataMatrix).copy()

label = classLabels.copy()

for i in range(m):

alpha = 4/(1.0+j+i)+0.0001

randIndex = int(np.random.uniform(0,len(data)))

h = sigmoid(sum(data[randIndex]*weights))

error = label[randIndex] - h

weights = weights + alpha * error * data[randIndex]

label.pop(randIndex)

data = np.delete(data,randIndex,axis=0)

return np.mat(weights).transpose()

weights_02 = stocGradAscent1(dataMat,labelMat,numIter=150)

plotBestFit(weights_02)

'''

为何说进行了改进:

1、alpha会随着迭代次数不断减小(不会为0)。步长alpha逐渐变小,这是符合正常逻辑的。当经过训练的样本越来越多

时,梯度应该逐渐变小,这时步长alpha应该也越来越小,防止weights变化过大,来回震荡

2、随机选取样本更新数据,将减少周期性波动

3、给定参数numIter表示迭代次数,相较于stocGraAscent(未改进的随机梯度上升算法)就是在原来的基础上迭代了

numIter次。

'''

821

821

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?