First, run the complete code and look at the result:

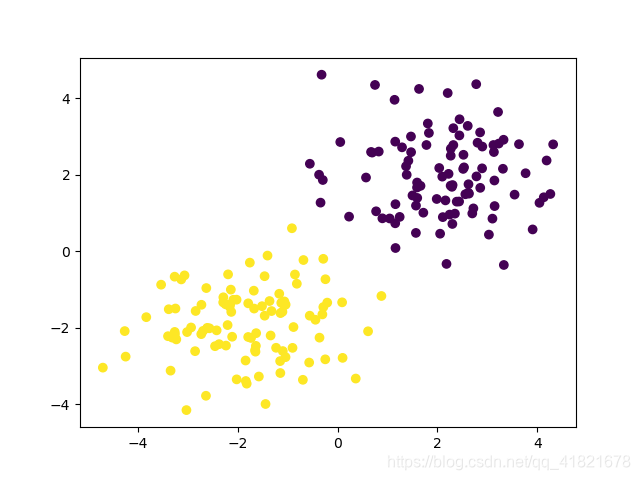

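The network separates the two classes, and the loss drops to about 0.33. Now let's walk through the code. The implementation consists of these steps: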

- Build a custom dataset;

- Define a neural network;

- Run the network and compute the loss;

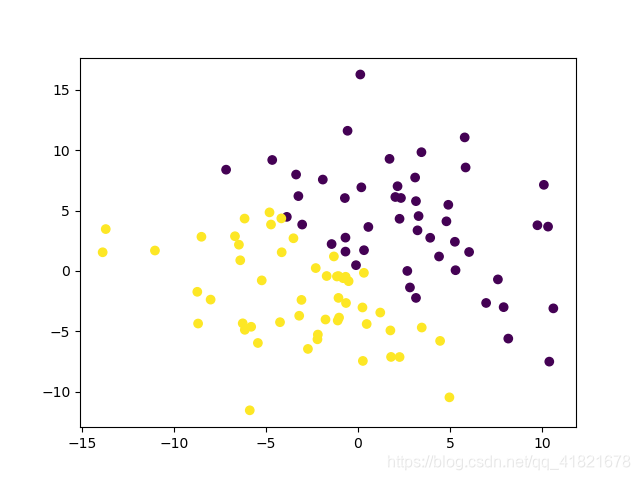

- Test on the training set and display the results;

- Test on a custom test set and display the results.

The main PyTorch functions covered in this article are:

- torch.ones()

- torch.normal()

- torch.zeros()

- torch.cat()

- torch.nn.Linear()

- torch.relu()

- torch.nn.functional.softmax()

- torch.optim.SGD()

- torch.nn.CrossEntropyLoss()

- torch.max()

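```python
# Create a 100x2 tensor whose elements are all 1
n_data = torch.ones(100, 2)

# x0 has the same shape as n_data; each element is drawn from a normal distribution
# with mean 2 and standard deviation 1 (first argument: mean, second argument: std)
x0 = torch.normal(2*n_data, 1)

# Create a 1-D tensor of 100 zeros (the labels of class 0); its shape is (100,), not 100x1
y0 = torch.zeros(100)
```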
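Printing the shapes is a quick way to confirm what the comments above describe. The following is a minimal sketch; the fixed seed `torch.manual_seed(0)` and the mean check are additions for illustration, not part of the original code:

```python
import torch

torch.manual_seed(0)  # fixed seed (an addition) so the run is reproducible

n_data = torch.ones(100, 2)        # 100 x 2 tensor of ones
x0 = torch.normal(2 * n_data, 1)   # class 0: every element ~ N(2, 1)
x1 = torch.normal(-2 * n_data, 1)  # class 1: every element ~ N(-2, 1)
y0 = torch.zeros(100)              # 1-D labels, shape (100,)

print(n_data.shape, x0.shape, y0.shape)
# torch.Size([100, 2]) torch.Size([100, 2]) torch.Size([100])

# The sample means should land close to 2 and -2
print(x0.mean().item(), x1.mean().item())
```

Because `torch.normal` receives a mean *tensor*, the output takes that tensor's shape, so no separate `size` argument is needed.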

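```python
# Concatenate x0 and x1 along dim 0 (rows), giving a 200x2 tensor
# (x1 is built like x0, but with mean -2; see the complete code)
x = torch.cat((x0, x1)).type(torch.FloatTensor)
```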
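`torch.cat` joins tensors along an existing dimension, and the default is `dim=0`, so two 100x2 blocks become one 200x2 block. The sketch below contrasts `dim=0` with `dim=1` and also casts the labels to int64, since `torch.nn.CrossEntropyLoss` expects class indices of that type. The tensors `a` and `b` are illustrative placeholders:

```python
import torch

a = torch.ones(100, 2)
b = torch.zeros(100, 2)

stacked = torch.cat((a, b))               # default dim=0: rows appended -> (200, 2)
side_by_side = torch.cat((a, b), dim=1)   # dim=1: columns appended -> (100, 4)

# Labels: 100 zeros followed by 100 ones, cast to int64 (same as .type(torch.LongTensor) on CPU)
labels = torch.cat((torch.zeros(100), torch.ones(100))).long()

print(stacked.shape, side_by_side.shape, labels.shape, labels.dtype)
# torch.Size([200, 2]) torch.Size([100, 4]) torch.Size([200]) torch.int64
```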

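```python
# Linear (fully connected) layer computing y = xW^T + b;
# first argument: number of input features, second argument: number of output features
self.n_hidden = torch.nn.Linear(n_feature, n_hidden)
```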
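To see what `torch.nn.Linear` actually computes, one can reproduce its output by hand from `layer.weight` and `layer.bias`. A minimal sketch; the batch of 5 random samples is an arbitrary choice for illustration:

```python
import torch

torch.manual_seed(0)
layer = torch.nn.Linear(2, 100)   # 2 input features -> 100 output features

# The weight is stored as (out_features, in_features)
print(layer.weight.shape, layer.bias.shape)
# torch.Size([100, 2]) torch.Size([100])

x = torch.randn(5, 2)                         # 5 samples, 2 features each
manual = x @ layer.weight.T + layer.bias      # y = x W^T + b
print(torch.allclose(layer(x), manual, atol=1e-6))  # True
print(layer(x).shape)                         # torch.Size([5, 100])
```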

```python
# ReLU activation, the usual choice for hidden-layer neurons; equivalent to torch.nn.functional.relu()
x_layer = torch.relu(self.n_hidden(x_layer))
```
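ReLU keeps positive values and replaces negative ones with 0, i.e. relu(z) = max(z, 0). The sketch below checks this on a hand-picked tensor and confirms that `torch.relu`, `torch.nn.functional.relu` and `torch.clamp(z, min=0)` agree:

```python
import torch
import torch.nn.functional as F

z = torch.tensor([[-2.0, -0.5, 0.0, 1.5, 3.0]])

print(torch.relu(z).tolist())                             # [[0.0, 0.0, 0.0, 1.5, 3.0]]
print(torch.equal(torch.relu(z), F.relu(z)))              # True
print(torch.equal(torch.relu(z), torch.clamp(z, min=0)))  # True
```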

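```python
# Softmax activation, used on the output of a multi-class network; dim=1 normalizes each row (sample).
# Note: torch.nn.CrossEntropyLoss applies log_softmax internally, so it normally receives raw outputs (logits)
x_layer = torch.nn.functional.softmax(x_layer, dim=1)
```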
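Softmax turns each row of scores into probabilities that sum to 1 without changing which class scores highest. Passing `dim=1` explicitly matters: calling `softmax` without `dim` triggers a deprecation warning, and for a 2-D input the implicit choice is `dim=1` anyway. A minimal sketch with made-up logits:

```python
import torch
import torch.nn.functional as F

logits = torch.tensor([[2.0, 0.0],
                       [-1.0, 1.0]])

probs = F.softmax(logits, dim=1)      # normalize across classes, row by row
print(probs.sum(dim=1))               # each row sums to 1 (up to float rounding)
print(probs.argmax(dim=1).tolist())   # [0, 1]: the ordering of the logits is preserved
```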

```python
# SGD optimizer, already implemented in PyTorch; just call it (lr is the learning rate)
optimizer = torch.optim.SGD(net.parameters(), lr=0.02)
```
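With its default settings (no momentum, no weight decay), `torch.optim.SGD` performs the update w ← w − lr · ∂loss/∂w. The sketch below checks that rule on a toy parameter; the loss `(w ** 2).sum()` is an arbitrary example whose gradient is `2 * w`:

```python
import torch

torch.manual_seed(0)
w = torch.nn.Parameter(torch.randn(3))
optimizer = torch.optim.SGD([w], lr=0.02)

loss = (w ** 2).sum()          # toy loss, gradient = 2 * w
optimizer.zero_grad()
loss.backward()

# Value predicted by the plain SGD rule: w - lr * grad
expected = (w - 0.02 * w.grad).detach().clone()
optimizer.step()
print(torch.allclose(w.detach(), expected))   # True
```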

```python
# Cross-entropy loss, already implemented in PyTorch; call it directly
loss_func = torch.nn.CrossEntropyLoss()
```
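`torch.nn.CrossEntropyLoss` takes raw scores plus int64 class indices and computes the mean of −log(softmax probability of the true class). The sketch reproduces it by hand with `log_softmax`. It also shows what happens when the input is already a softmax output, as in this article's network: for two classes, even a "perfect" probability vector `[1, 0]` gives log(1 + e^-1) ≈ 0.3133, which is consistent with the loss stalling around 0.33. The tensors here are illustrative values:

```python
import torch
import torch.nn.functional as F

logits = torch.tensor([[2.0, 0.0],
                       [-1.0, 1.0],
                       [0.5, 0.5]])
target = torch.tensor([0, 1, 1])        # class indices, dtype int64

loss_func = torch.nn.CrossEntropyLoss()
ce = loss_func(logits, target)

# Same value by hand: mean of -log(softmax probability of the true class)
manual = -F.log_softmax(logits, dim=1)[torch.arange(3), target].mean()
print(torch.allclose(ce, manual))       # True

# Feeding probabilities instead of logits applies softmax a second time.
# With two classes the loss cannot fall below log(1 + e^-1), about 0.3133.
floor = loss_func(torch.tensor([[1.0, 0.0]]), torch.tensor([0])).item()
print(floor)
```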

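```python
# Clear the gradients left over from the previous step
optimizer.zero_grad()

# Backpropagation: compute the gradient of the loss with respect to every parameter
loss.backward()

# Update the parameters using those gradients
optimizer.step()
```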
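The reason for `optimizer.zero_grad()` is that `backward()` *adds* new gradients to whatever is already stored in `.grad`. A minimal sketch on a single scalar parameter; here `w.grad.zero_()` stands in for what `optimizer.zero_grad()` does to every parameter (in recent PyTorch versions `zero_grad()` sets `.grad` to `None` by default, which has the same effect on the next backward pass):

```python
import torch

w = torch.tensor([1.0], requires_grad=True)

(3 * w).sum().backward()
first = w.grad.item()          # 3.0

(3 * w).sum().backward()       # not cleared: the new gradient is added to the old one
accumulated = w.grad.item()    # 6.0

w.grad.zero_()                 # manual reset
(3 * w).sum().backward()
after_reset = w.grad.item()    # 3.0

print(first, accumulated, after_reset)  # 3.0 6.0 3.0
```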

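```python
# torch.max(t, 1) returns (max values, indices of the max values) along dim 1;
# [1] takes the indices, i.e. the predicted class of each sample
train_predict = torch.max(train_result, 1)[1]
```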
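Called with a dimension, `torch.max` returns two tensors, the maximum values and their positions, which is why the code indexes `[1]`. A minimal sketch with made-up probabilities; the result matches `argmax(dim=1)`:

```python
import torch

probs = torch.tensor([[0.9, 0.1],
                      [0.2, 0.8],
                      [0.4, 0.6]])

values, indices = torch.max(probs, 1)   # max along dim 1 (across classes) for every row
print(indices.tolist())                 # [0, 1, 1]: predicted class of each sample
print(torch.equal(torch.max(probs, 1)[1], probs.argmax(dim=1)))  # True
```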

Further exploration

- Which activation functions does PyTorch provide?

- Which loss functions does PyTorch provide?

- Which optimizers does PyTorch provide?

Complete code:

```python
import torch
import matplotlib.pyplot as plt

# 1. Build the dataset
n_data = torch.ones(100, 2)
x0 = torch.normal(2*n_data, 1)       # class 0: points around (2, 2)
y0 = torch.zeros(100)
x1 = torch.normal(-2*n_data, 1)      # class 1: points around (-2, -2)
y1 = torch.ones(100)
x = torch.cat((x0, x1)).type(torch.FloatTensor)   # shape (200, 2)
y = torch.cat((y0, y1)).type(torch.LongTensor)    # shape (200,), class indices
print(x.shape)
print(y.shape)


# 2. Network structure
class Net(torch.nn.Module):
    def __init__(self, n_feature, n_hidden, n_output):
        super(Net, self).__init__()
        self.n_hidden = torch.nn.Linear(n_feature, n_hidden)
        self.out = torch.nn.Linear(n_hidden, n_output)

    def forward(self, x_layer):
        x_layer = torch.relu(self.n_hidden(x_layer))
        x_layer = self.out(x_layer)
        x_layer = torch.nn.functional.softmax(x_layer, dim=1)
        return x_layer


if __name__ == '__main__':
    # 3. Instantiate the network and compute the loss
    net = Net(n_feature=2, n_hidden=100, n_output=2)
    optimizer = torch.optim.SGD(net.parameters(), lr=0.02)
    loss_func = torch.nn.CrossEntropyLoss()

    for i in range(100):
        out = net(x)
        loss = loss_func(out, y)
        print("Loss is {}".format(loss.item()))
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

    # 4. Evaluate on the training set
    train_result = net(x)
    train_predict = torch.max(train_result, 1)[1]
    plt.scatter(x.data.numpy()[:, 0], x.data.numpy()[:, 1], c=train_predict.data.numpy())
    plt.show()

    # 5. Evaluate on a custom test set: 100 points around the origin with std 5
    t_data = torch.zeros(100, 2)
    test_data = torch.normal(t_data, 5)
    test_result = net(test_data)
    prediction = torch.max(test_result, 1)[1]
    plt.scatter(test_data.numpy()[:, 0], test_data.numpy()[:, 1], c=prediction.data.numpy())
    plt.show()
```
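Because `torch.nn.CrossEntropyLoss` already applies log_softmax, a common alternative is to let `forward` return raw logits and apply softmax only when turning outputs into predictions. The sketch below is that variant under stated assumptions: the class name `LogitNet`, the seed, and the accuracy printout are illustrative additions, plotting is omitted, and the data and hyperparameters match the code above:

```python
import torch

torch.manual_seed(0)

# Same synthetic data: two Gaussian blobs around (2, 2) and (-2, -2)
n_data = torch.ones(100, 2)
x = torch.cat((torch.normal(2 * n_data, 1), torch.normal(-2 * n_data, 1)))
y = torch.cat((torch.zeros(100), torch.ones(100))).long()


class LogitNet(torch.nn.Module):  # hypothetical name for this variant
    def __init__(self, n_feature, n_hidden, n_output):
        super().__init__()
        self.hidden = torch.nn.Linear(n_feature, n_hidden)
        self.out = torch.nn.Linear(n_hidden, n_output)

    def forward(self, x):
        # Return raw scores (logits); the loss function applies log_softmax itself
        return self.out(torch.relu(self.hidden(x)))


net = LogitNet(n_feature=2, n_hidden=100, n_output=2)
optimizer = torch.optim.SGD(net.parameters(), lr=0.02)
loss_func = torch.nn.CrossEntropyLoss()

for step in range(100):
    loss = loss_func(net(x), y)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

print("final loss: {:.4f}".format(loss.item()))

with torch.no_grad():
    probs = torch.nn.functional.softmax(net(x), dim=1)  # probabilities only at inference time
    predict = probs.argmax(dim=1)
accuracy = (predict == y).float().mean().item()
print("training accuracy: {:.3f}".format(accuracy))
```

Since softmax is no longer applied twice, the loss is not bounded below by roughly 0.31 and can keep decreasing toward 0 on well-separated data like this.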
