Life Long learning

连续学习的概念大概是在2016年以后才开始流行的,虽然今天的工业界中几乎都是使用一个或多个模型对应一个任务,但是为了让机器更像人,让机器能同时解决多个任务,同时把过去的知识运用到新的任务上,也是值得研究的课题。

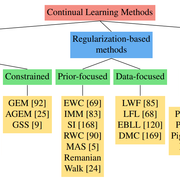

方法

- Regularization-based methods

- Parameter isolation methods

我们要实践的就是这种非常基础,但是又非常有效的方法,Elastic Weight Consolidation(EWC),基于正则化的模型长期学习方法。 这里我们实现一个能同时实现MNIST和USPS识别的长期学习模型。

我们要实践的就是这种非常基础,但是又非常有效的方法,Elastic Weight Consolidation(EWC),基于正则化的模型长期学习方法。 这里我们实现一个能同时实现MNIST和USPS识别的长期学习模型。

模型

使用Relu和线性层组成的全连接网络实现多个MNIST图像分类任务。

import torch

import torch.nn as nn

import torch.optim as optim

import torch.nn.functional as F

import torch.utils.data as data

import torch.utils.data.sampler as sampler

import torchvision

from torchvision import datasets, transforms

import numpy as np

import os

import random

from copy import deepcopy

import json

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

class Model(nn.Module):

def __init__(self):

super(Model, self).__init__()

self.fc1 = nn.Linear(28*28, 1024)

self.fc2 = nn.Linear(1024, 512)

self.fc3 = nn.Linear(512, 256)

self.fc4 = nn.Linear(256, 128)

self.fc5 = nn.Linear(128, 128)

self.fc6 = nn.Linear(128, 10)

self.relu = nn.ReLU()

def forward(self, x):

x = x.view(-1, 28*28)

x = self.fc1(x)

x = self.relu(x)

x = self.fc2(x)

x = self.relu(x)

x = self.fc3(x)

x = self.relu(x)

x = self.fc4(x)

x = self.relu(x)

x = self.fc5(x)

x = self.relu(x)

x = self.fc6(x)

return x

EWC

EWC的基础思想是把已经训练好的模型中的比较重要的参数用正则化项保护起来,让它们变得不那么容易被更新,从而旧的知识就不会被完全洗掉。把参数的损失函数写出则是下面的公式

L B = L ( θ ) + ∑ i λ 2 F i ( θ i − θ A , i ∗ ) 2 \mathcal{L}_B = \mathcal{L}(\theta) + \sum_{i} \frac{\lambda}{2} F_i (\theta_{i} - \theta_{A,i}^{*})^2 LB=L

本文探讨了Elastic Weight Consolidation (EWC) 方法在终身学习中的应用,旨在保护模型的重要参数免受新任务的影响,以实现连续学习。通过在全连接网络上进行MNIST和USPS手写数字识别任务,展示了EWC如何维持旧任务的性能。实验结果显示,EWC成功地防止了学习新任务时旧任务正确率的显著下降。

本文探讨了Elastic Weight Consolidation (EWC) 方法在终身学习中的应用,旨在保护模型的重要参数免受新任务的影响,以实现连续学习。通过在全连接网络上进行MNIST和USPS手写数字识别任务,展示了EWC如何维持旧任务的性能。实验结果显示,EWC成功地防止了学习新任务时旧任务正确率的显著下降。

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

860

860

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?