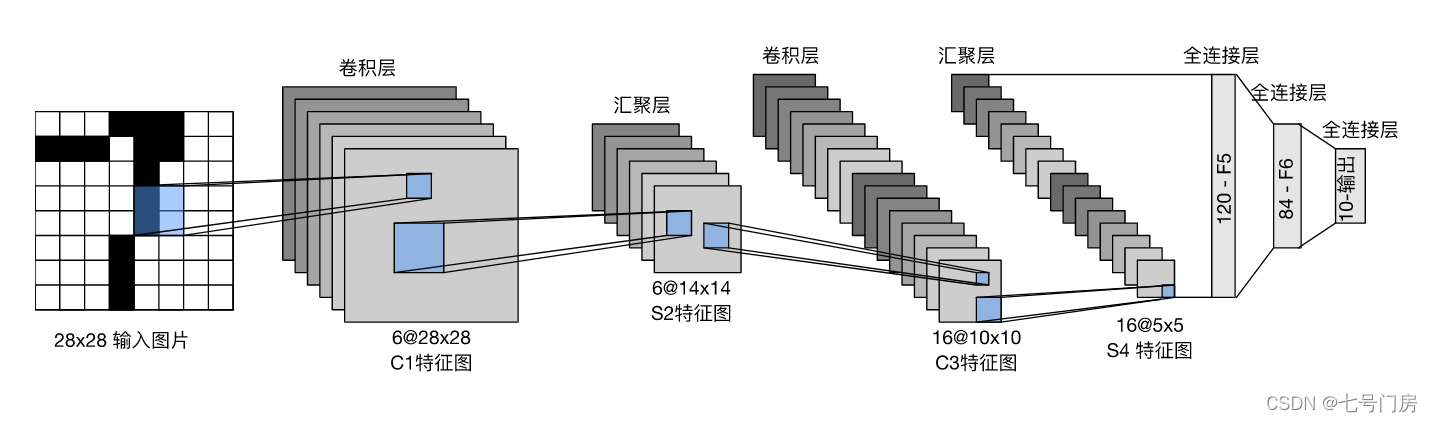

LeNet

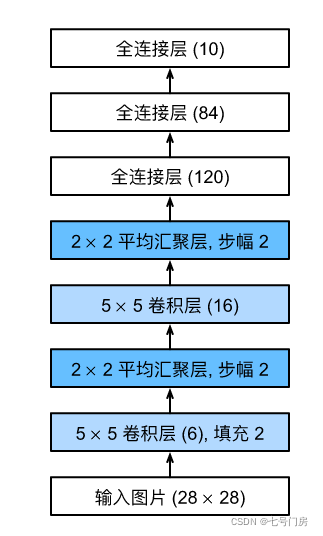

1. Network architecture diagram

2. Network implementation

from torch import nn

# Output shapes are given for an input batch of shape (N, 1, 28, 28).
net = nn.Sequential(
    nn.Conv2d(1, 6, kernel_size=5, padding=2),  # (N, 6, 28, 28)
    nn.Sigmoid(),                               # (N, 6, 28, 28)
    nn.AvgPool2d(kernel_size=2, stride=2),      # (N, 6, 14, 14), since (28 - 2 + 2) / 2 = 14
    nn.Conv2d(6, 16, kernel_size=5),            # (N, 16, 10, 10)
    nn.Sigmoid(),                               # (N, 16, 10, 10)
    nn.AvgPool2d(kernel_size=2, stride=2),      # (N, 16, 5, 5)
    nn.Flatten(),                               # (N, 400), since 16 * 5 * 5 = 400
    nn.Linear(400, 120), nn.Sigmoid(),
    nn.Linear(120, 84), nn.Sigmoid(),
    nn.Linear(84, 10),
)
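The shape comments in the network above can be checked by hand with the usual output-size formula for convolution and pooling layers, floor((n + 2p - k) / s) + 1. The snippet below is a minimal sketch in plain Python, not part of the original post; the helper `conv_out` and the `layers` table are hypothetical names introduced only for this illustration, and the layer settings are copied from the `nn.Sequential` definition.

```python
def conv_out(size, kernel, padding=0, stride=1):
    """Output side length of a convolution or pooling layer on a square input."""
    return (size + 2 * padding - kernel) // stride + 1

# (name, kernel, padding, stride, out_channels); None keeps the channel count.
# Values mirror the Conv2d / AvgPool2d layers of the network above.
layers = [
    ("conv1", 5, 2, 1, 6),
    ("pool1", 2, 0, 2, None),
    ("conv2", 5, 0, 1, 16),
    ("pool2", 2, 0, 2, None),
]

size, channels = 28, 1  # one 28x28 grayscale Fashion-MNIST image
shapes = []
for name, kernel, padding, stride, out_channels in layers:
    size = conv_out(size, kernel, padding, stride)
    if out_channels is not None:
        channels = out_channels
    shapes.append((name, channels, size, size))
    print(f"{name}: ({channels}, {size}, {size})")

# Number of inputs the first nn.Linear layer must accept
flat_features = channels * size * size
print("flattened features:", flat_features)
```

The final value is the reason the first fully connected layer is declared as `nn.Linear(400, 120)`: changing the input resolution or any kernel size changes this number, and the linear layer has to be updated to match.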

Full code

import torch
from torch import nn
from torch.utils import data
from torchvision import datasets, transforms

batch_size = 256
lr = 0.9

# Prepare the datasets
train_data = datasets.FashionMNIST(root='./train_Fashiondata', train=True, transform=transforms.ToTensor(), download=True)
test_data = datasets.FashionMNIST(root='./test_Fashiondata', train=False, transform=transforms.ToTensor(), download=True)
train_iter = data.DataLoader(train_data, batch_size=batch_size, shuffle=True)
test_iter = data.DataLoader(test_data, batch_size=batch_size, shuffle=False)

# Build the model (output shapes are given for an input batch of shape (N, 1, 28, 28))
net = nn.Sequential(
    nn.Conv2d(1, 6, kernel_size=5, padding=2),  # (N, 6, 28, 28)
    nn.Sigmoid(),                               # (N, 6, 28, 28)
    nn.AvgPool2d(kernel_size=2, stride=2),      # (N, 6, 14, 14), since (28 - 2 + 2) / 2 = 14
    nn.Conv2d(6, 16, kernel_size=5),            # (N, 16, 10, 10)
    nn.Sigmoid(),                               # (N, 16, 10, 10)
    nn.AvgPool2d(kernel_size=2, stride=2),      # (N, 16, 5, 5)
    nn.Flatten(),                               # (N, 400), since 16 * 5 * 5 = 400
    nn.Linear(400, 120), nn.Sigmoid(),
    nn.Linear(120, 84), nn.Sigmoid(),
    nn.Linear(84, 10),
)

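# Initialize the parameters
def init_weights(m):
    # Xavier-uniform initialization for the weights of every linear and convolutional layer
    if isinstance(m, (nn.Linear, nn.Conv2d)):
        nn.init.xavier_uniform_(m.weight)

net.apply(init_weights)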

# Use the GPU if one is available
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
net.to(device)

# Build the optimizer
optimizer = torch.optim.SGD(net.parameters(), lr=lr)

# Build the loss function
loss = nn.CrossEntropyLoss()

# Training code: one pass over the training set
def train(epoch):
    running_loss = 0.0
    for i, (X, y) in enumerate(train_iter):
        X, y = X.to(device), y.to(device)
        y_hat = net(X)
        l = loss(y_hat, y)
        optimizer.zero_grad()
        l.backward()
        optimizer.step()
        running_loss += l.item()
        if i % 100 == 99:
            # Report the mean loss over the last 100 mini-batches
            print('[%d, %5d] loss: %.3f' % (epoch + 1, i + 1, running_loss / 100))
            running_loss = 0.0

# Test code: classification accuracy on the test set
def test():
    correct = 0
    total = 0
    with torch.no_grad():
        for images, labels in test_iter:
            images, labels = images.to(device), labels.to(device)
            outputs = net(images)
            _, predicted = torch.max(outputs, dim=1)  # index of the largest logit per sample
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print("Accuracy: %d %%" % (100 * correct / total))

# Train for 20 epochs, evaluating on the test set after each one
for k in range(20):
    train(k)
    test()
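The network ends in a bare `nn.Linear(84, 10)` with no softmax because `nn.CrossEntropyLoss` takes raw logits and integer class labels, applies log-softmax internally, and by default averages over the batch. The following is a minimal plain-Python sketch of that computation, not PyTorch's actual implementation; `cross_entropy`, `batch_loss`, and `predict` are hypothetical helper names used only here.

```python
import math

def cross_entropy(logits, target):
    """Negative log-probability of the target class under softmax(logits)."""
    m = max(logits)  # subtract the max before exponentiating, for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]

def batch_loss(batch_logits, targets):
    """Mean cross-entropy over a mini-batch (the default 'mean' reduction)."""
    losses = [cross_entropy(z, t) for z, t in zip(batch_logits, targets)]
    return sum(losses) / len(losses)

def predict(logits):
    """Index of the largest logit, as torch.max(outputs, dim=1) does per row."""
    return max(range(len(logits)), key=lambda i: logits[i])

# A network that scores all 10 classes equally has loss ln(10), about 2.303.
uniform = [0.0] * 10
print(round(cross_entropy(uniform, 3), 3))

# Two-sample batch: one confident correct prediction, one uniform guess.
batch = [[5.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
print(round(batch_loss(batch, [0, 2]), 3))
print([predict(row) for row in batch])
```

This gives a useful sanity check for the training log: with 10 classes, a printed loss that stays near 2.30 means the model is still predicting close to uniformly and has not started to learn.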

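This post shows how to implement the classic LeNet network in PyTorch and train it on the FashionMNIST dataset. The code covers the network architecture, data loading, model construction, parameter initialization, GPU usage, optimizer setup, loss function definition, and the training and test loops.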
