# Code for plotting activation functions

import numpy as np

import matplotlib.pyplot as plt

# Define the activation functions

def logistic(x):
    # Logistic (sigmoid): maps any real input into (0, 1)
    return 1 / (1 + np.exp(-x))

def tanh(x):
    # Hyperbolic tangent: maps any real input into (-1, 1)
    return np.tanh(x)

def relu(x):
    # ReLU: zero for negative inputs, identity otherwise
    return np.maximum(0, x)

def leaky_relu(x, alpha=0.01):
    # Leaky ReLU: small slope alpha for negative inputs instead of zero
    return np.where(x >= 0, x, alpha * x)

def elu(x, alpha=1.0):
    # ELU: smooth exponential curve for negative inputs, approaching -alpha
    return np.where(x >= 0, x, alpha * (np.exp(x) - 1))
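
Before plotting, it can help to spot-check the definitions at a few points. The sketch below repeats the functions above so that it runs on its own; the sample points in `pts` are an arbitrary choice for illustration and are not part of the original post.

```python
import numpy as np

# Definitions repeated from the post so this check is self-contained.
def logistic(x):
    return 1 / (1 + np.exp(-x))

def relu(x):
    return np.maximum(0, x)

def leaky_relu(x, alpha=0.01):
    return np.where(x >= 0, x, alpha * x)

def elu(x, alpha=1.0):
    return np.where(x >= 0, x, alpha * (np.exp(x) - 1))

# Arbitrary sample points: one negative, zero, one positive.
pts = np.array([-2.0, 0.0, 2.0])

# Logistic is 0.5 at the origin and satisfies f(-x) = 1 - f(x).
assert np.isclose(logistic(0.0), 0.5)
assert np.allclose(logistic(-pts), 1 - logistic(pts))

# The three ReLU variants agree for x >= 0 and differ only for x < 0.
assert np.allclose(relu(pts), [0.0, 0.0, 2.0])
assert np.allclose(leaky_relu(pts), [-0.02, 0.0, 2.0])
assert np.allclose(elu(pts), [np.exp(-2.0) - 1, 0.0, 2.0])
```

The last three assertions show the only place the ReLU family differs: ReLU returns zero for negative inputs, Leaky ReLU keeps a small linear slope, and ELU follows an exponential curve.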

# Plot the activation functions

x = np.linspace(-10, 10, 1000)

# Logistic and Tanh on the same axes

plt.figure(figsize=(6, 4))

plt.plot(x, logistic(x), label='Logistic')

plt.plot(x, tanh(x), label='Tanh')

#plt.title('Logistic Activation Function')

plt.title('Logistic & Tanh')

plt.xlabel('x')

plt.ylabel('f(x)')

plt.legend()

plt.grid(True)

plt.show()

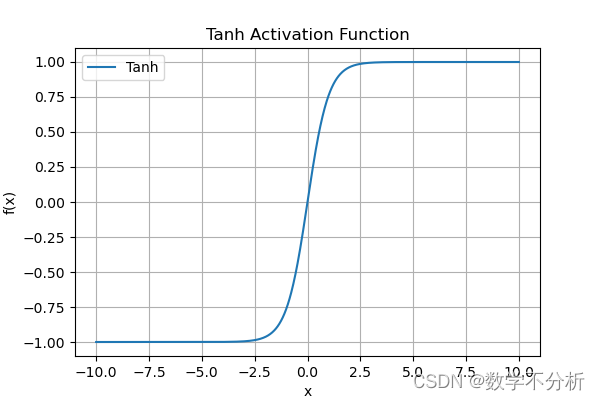

# Tanh

plt.figure(figsize=(6, 4))

plt.plot(x, tanh(x), label='Tanh')

plt.title('Tanh Activation Function')

plt.xlabel('x')

plt.ylabel('f(x)')

plt.legend()

plt.grid(True)

plt.show()

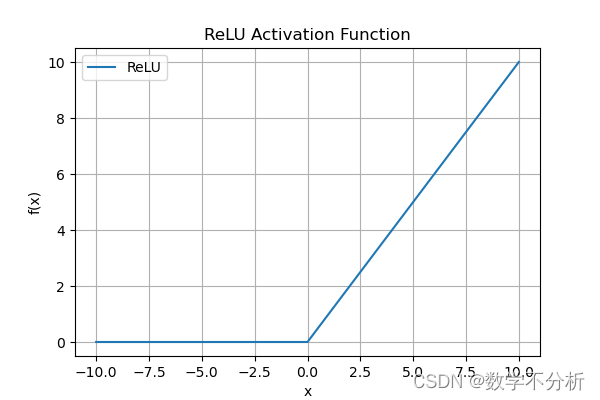

# ReLU

plt.figure(figsize=(6, 4))

plt.plot(x, relu(x), label='ReLU')

plt.title('ReLU Activation Function')

plt.xlabel('x')

plt.ylabel('f(x)')

plt.legend()

plt.grid(True)

plt.show()

# Leaky ReLU

plt.figure(figsize=(6, 4))

plt.plot(x, leaky_relu(x), label='Leaky ReLU')

plt.title('Leaky ReLU Activation Function')

plt.xlabel('x')

plt.ylabel('f(x)')

plt.legend()

plt.grid(True)

plt.show()

# ELU

plt.figure(figsize=(6, 4))

plt.plot(x, elu(x), label='ELU')

plt.title('ELU Activation Function')

plt.xlabel('x')

plt.ylabel('f(x)')

plt.legend()

plt.grid(True)

plt.show()
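
The five plotting blocks above differ only in the function and its label, so they could be folded into a single loop. The following is a minimal sketch of that idea, not the post's own method: `plot_activation_grid` is a hypothetical helper name, and the 2x3 subplot layout and figure size are assumptions chosen for illustration.

```python
import numpy as np
import matplotlib.pyplot as plt

# Definitions repeated from the post so this sketch is self-contained.
def logistic(x):
    return 1 / (1 + np.exp(-x))

def relu(x):
    return np.maximum(0, x)

def leaky_relu(x, alpha=0.01):
    return np.where(x >= 0, x, alpha * x)

def elu(x, alpha=1.0):
    return np.where(x >= 0, x, alpha * (np.exp(x) - 1))

def plot_activation_grid(x=None):
    """Hypothetical helper: draw every activation in one figure."""
    if x is None:
        x = np.linspace(-10, 10, 1000)
    activations = {
        'Logistic': logistic,
        'Tanh': np.tanh,
        'ReLU': relu,
        'Leaky ReLU': leaky_relu,
        'ELU': elu,
    }
    # Assumed layout: 2 rows x 3 columns, one panel per function.
    fig, axes = plt.subplots(2, 3, figsize=(12, 6))
    for ax, (name, fn) in zip(axes.flat, activations.items()):
        ax.plot(x, fn(x), label=name)
        ax.set_title(name)
        ax.set_xlabel('x')
        ax.set_ylabel('f(x)')
        ax.legend()
        ax.grid(True)
    # Five functions leave one unused panel in the grid; hide it.
    axes.flat[-1].set_visible(False)
    fig.tight_layout()
    return fig, axes

if __name__ == "__main__":
    plot_activation_grid()
    plt.show()
```

Keeping the functions in a dictionary means adding another activation only requires one new entry, at the cost of losing the separate figures the original script produces.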
