Source: 深度学习与图网络 (Deep Learning and Graph Networks)

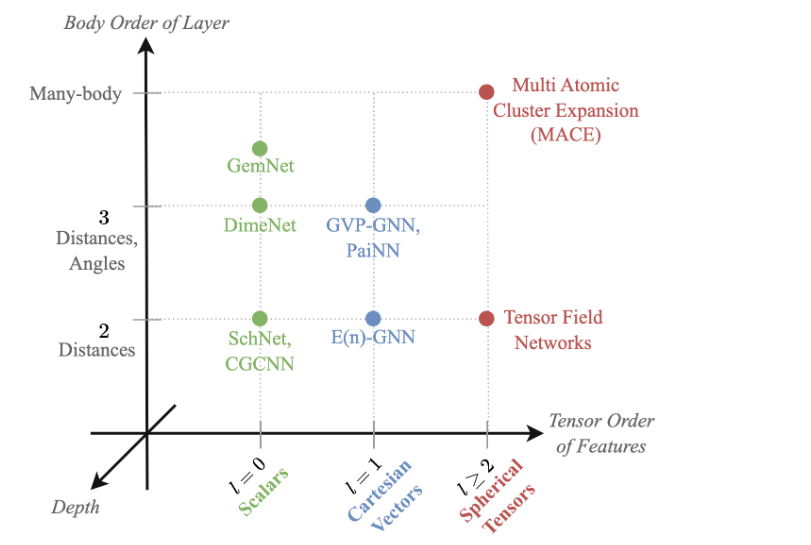

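This article is about 1,000 words; estimated reading time is 5 minutes.

Geometric GNNs are an emerging class of GNNs for spatially embedded graphs in science and engineering, for example SchNet (molecules), Tensor Field Networks (materials), GemNet (electrocatalysts), MACE (molecular dynamics), and E(n)-equivariant graph convolutional networks (macromolecules).

How powerful are geometric GNNs? How do key design choices affect expressivity, and how can maximally powerful geometric GNNs be built?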

See the recent paper by Chaitanya K. Joshi, Cristian Bodnar, Simon V. Mathis, Taco Cohen, and Pietro Liò:

📄 PDF: http://arxiv.org/abs/2301.09308

💻 Code: http://github.com/chaitjo/geometric-gnn-dojo

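❓Research gap: Standard theoretical tools for GNNs, such as the Weisfeiler-Leman (WL) graph isomorphism test, do not apply to geometric graphs, because additional physical symmetries (rotations and translations) must be taken into account.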
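To make the gap concrete, here is a minimal sketch of standard WL colour refinement on a toy three-node graph. The function `wl_colours`, the toy coordinates, and the "raw coordinates as initial colours" baseline are all illustrative assumptions, not code from the paper or its repository. The sketch shows two things: plain WL never looks at coordinates, and naively feeding coordinates in as colours makes the result change when the same shape is merely rotated.

```python
from collections import Counter

def wl_colours(num_nodes, edges, initial, rounds=3):
    """Standard WL colour refinement; returns the multiset of node colours."""
    nbrs = {i: [] for i in range(num_nodes)}
    for u, v in edges:
        nbrs[u].append(v)
        nbrs[v].append(u)
    colours = list(initial)
    for _ in range(rounds):
        # New colour = (own colour, sorted multiset of neighbour colours).
        # Nested tuples are used instead of hashing, which is fine for toy graphs.
        colours = [
            (colours[i], tuple(sorted(colours[j] for j in nbrs[i])))
            for i in range(num_nodes)
        ]
    return Counter(colours)

# Toy graph: a 3-node path 0-1-2 embedded in the plane (hypothetical coordinates).
EDGES = [(0, 1), (1, 2)]
BENT = [(1, 0), (0, 0), (0, 1)]
BENT_ROTATED = [(-y, x) for x, y in BENT]  # the same shape, rotated by 90 degrees

# (1) Plain WL takes no coordinates at all, so any two embeddings of this
#     path (bent, linear, ...) receive exactly this same answer.
plain = wl_colours(3, EDGES, [0, 0, 0])

# (2) Naive fix: use raw coordinates as initial colours. The output now
#     changes under rotation, although the geometric graph is the same.
naive_bent = wl_colours(3, EDGES, BENT)
naive_rotated = wl_colours(3, EDGES, BENT_ROTATED)
print(naive_bent == naive_rotated)  # False
```

Neither variant respects the symmetries in question, which is the motivation for a test defined directly on geometric graphs.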

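💡Key idea: a notion of geometric graph isomorphism plus a new geometric WL framework yields an upper bound on the expressivity of geometric GNNs.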

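The geometric WL framework formalises the roles of depth, invariance vs. equivariance, and body order in geometric GNNs.

Invariant GNNs cannot distinguish geometric graphs whose one-hop neighbourhoods are identical, so they fail to compute global properties.

Equivariant GNNs distinguish more graphs. How? With depth, they propagate local geometric information beyond one hop.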
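The difference between invariant and equivariant layers can be sketched on a toy four-node chain A-B-C-D in a "cis" and a "trans" arrangement. The two shapes have identical one-hop neighbourhoods up to rotation, but differ globally (the A-D distance changes). Both toy "GNNs" below are assumptions made for illustration, written in plain Python, and are not the architectures analysed in the paper: the invariant one passes around distances and angles, the equivariant one passes around a vector per node.

```python
import math
from collections import Counter
from itertools import combinations

EDGES = [(0, 1), (1, 2), (2, 3)]                        # chain A-B-C-D
CIS = [(0, 1, 0), (0, 0, 0), (1, 0, 0), (1, 1, 0)]      # both ends on one side
TRANS = [(0, 1, 0), (0, 0, 0), (1, 0, 0), (1, -1, 0)]   # ends on opposite sides

def neighbours(n, edges):
    nbrs = {i: [] for i in range(n)}
    for u, v in edges:
        nbrs[u].append(v)
        nbrs[v].append(u)
    return nbrs

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def local_invariants(pos, nbrs, i):
    """Rotation-invariant one-hop descriptor: neighbour distances and angles."""
    rel = [sub(pos[j], pos[i]) for j in nbrs[i]]
    dists = sorted(round(math.sqrt(dot(r, r)), 6) for r in rel)
    cosines = sorted(
        round(dot(a, b) / math.sqrt(dot(a, a) * dot(b, b)), 6)
        for a, b in combinations(rel, 2)
    )
    return (tuple(dists), tuple(cosines))

def invariant_gnn(pos, edges, rounds=3):
    """Message passing over invariant scalars only."""
    nbrs = neighbours(len(pos), edges)
    h = [local_invariants(pos, nbrs, i) for i in range(len(pos))]
    for _ in range(rounds):
        h = [(h[i], tuple(sorted(h[j] for j in nbrs[i]))) for i in range(len(pos))]
    return Counter(h)

def equivariant_gnn(pos, edges):
    """Layer 1 builds a vector per node; layer 2 compares neighbouring vectors."""
    nbrs = neighbours(len(pos), edges)
    # Equivariant feature: sum of relative position vectors to the neighbours.
    v = [
        tuple(sum(c) for c in zip(*(sub(pos[j], pos[i]) for j in nbrs[i])))
        for i in range(len(pos))
    ]
    # Invariant readout: dot products with the neighbours' vectors.
    s = [tuple(sorted(dot(v[i], v[j]) for j in nbrs[i])) for i in range(len(pos))]
    return Counter(s)

print(invariant_gnn(CIS, EDGES) == invariant_gnn(TRANS, EDGES))      # True
print(equivariant_gnn(CIS, EDGES) == equivariant_gnn(TRANS, EDGES))  # False
```

In this sketch the invariant scalars agree at every node in both shapes, so stacking more rounds does not help. The vector features carry each neighbourhood's orientation to the next node, which is what lets the second layer tell the two chains apart.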

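What are the practical implications? Synthetic experiments highlight challenges in building maximally powerful geometric GNNs:

Oversquashing of geometric information as depth increases.

The utility of higher-order spherical tensors over Cartesian vectors.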
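One intuition for why higher-order tensors can help, sketched under assumptions of my own (the toy neighbourhoods and the helper names `l1_feature` and `l2_feature` are hypothetical, and this is not the paper's experiment): in a symmetric neighbourhood, summed vector features (order 1) can cancel to zero and lose the orientation, while an order-2 feature, written here as a traceless symmetric matrix (the Cartesian form of an order-2 spherical tensor), still records it.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def l1_feature(dirs):
    """Order-1 (vector) feature: plain sum of neighbour directions."""
    return tuple(sum(d[k] for d in dirs) for k in range(3))

def l2_feature(dirs):
    """Order-2 feature: sum over neighbours of r r^T - |r|^2 I / 3."""
    return tuple(
        tuple(
            sum(d[a] * d[b] - (dot(d, d) / 3 if a == b else 0.0) for d in dirs)
            for b in range(3)
        )
        for a in range(3)
    )

# Two neighbours placed symmetrically around a central node,
# once along the x axis and once along the y axis.
ALONG_X = [(1, 0, 0), (-1, 0, 0)]
ALONG_Y = [(0, 1, 0), (0, -1, 0)]

# The vector sums cancel in both cases, so order 1 cannot see the axis.
print(l1_feature(ALONG_X) == l1_feature(ALONG_Y))  # True
# The order-2 feature differs between the two orientations.
print(l2_feature(ALONG_X) == l2_feature(ALONG_Y))  # False
```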

P.S. Are you new to Geometric GNNs, GDL, PyTorch Geometric, and so on? Want to see how the theory and equations connect to real code?

Try this Geometric GNN 101 notebook: https://github.com/chaitjo/geometric-gnn-dojo/blob/main/geometric_gnn_101.ipynb

Original text

Geometric GNNs are an emerging class of GNNs for spatially embedded graphs across science and engineering, e.g. SchNet for molecules, Tensor Field Networks for materials, GemNet for electrocatalysts, MACE for molecular dynamics, and E(n)-Equivariant Graph ConvNet for macromolecules.

How powerful are geometric GNNs? How do key design choices influence expressivity and how to build maximally powerful ones?

Check out this recent paper from Chaitanya K. Joshi, Cristian Bodnar, Simon V. Mathis, Taco Cohen, and Pietro Liò for more:

📄 PDF: http://arxiv.org/abs/2301.09308

💻 Code: http://github.com/chaitjo/geometric-gnn-dojo

❓Research gap: Standard theoretical tools for GNNs, such as the Weisfeiler-Leman graph isomorphism test, are inapplicable for geometric graphs. This is due to additional physical symmetries (roto-translation) that need to be accounted for.

💡Key idea: notion of geometric graph isomorphism + new geometric WL framework --> upper bound on geometric GNN expressivity.

The Geometric WL framework formalises the role of depth, invariance vs. equivariance, and body order in geometric GNNs.

Invariant GNNs cannot tell apart one-hop identical geometric graphs, and so fail to compute global properties.

Equivariant GNNs distinguish more graphs; how? Depth propagates local geometry beyond one-hop.

What about practical implications? Synthetic experiments highlight challenges in building maximally powerful geometric GNNs:

Oversquashing of geometric information with increased depth.

Utility of higher-order spherical tensors over Cartesian vectors.

P.S. Are you new to Geometric GNNs, GDL, PyTorch Geometric, etc.? Want to understand how theory/equations connect to real code?

Try this Geometric GNN 101 notebook before diving in:

https://github.com/chaitjo/geometric-gnn-dojo/blob/main/geometric_gnn_101.ipynb

Editor: 文婧

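The article discusses applications of geometric graph neural networks (GNNs) in science and engineering, such as molecular and materials modelling. Standard theoretical tools for GNNs do not account for the physical symmetries of geometric graphs. The authors propose a new geometric WL framework that gives an upper bound on the expressivity of geometric GNNs and clarifies the roles of depth, invariance, and equivariance in their design. Experiments show that geometric information can be oversquashed as depth increases, and an introductory notebook is provided to help connect the theory to an implementation.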
