Configuring CUDA 10.2, cuDNN 8.0.1, and TensorRT 7.1.3.4 on Ubuntu 18

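This comes from a real project. Brief overview: during the testing phase of an autonomous-driving project, our algorithms had to run on the vehicle, on an industrial PC equipped with an RTX 2080 Ti, and we used Tengine to deploy the models. Because the project is confidential, this article does not use the algorithms our team developed. It uses only the demos that ship with Tengine, which are entirely sufficient for the purpose.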

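The setup has two parts:

- Configuring CUDA and cuDNN on Ubuntu 18: see my other blog post, "Configuring CUDA 10.2 and cuDNN 8.0.1 on Ubuntu 18 (cuda_10.2.run format + cudnn-10.2-linux-x64-v8.0.1.13.tgz format)"
- Configuring TensorRT on Ubuntu 18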

https://zhuanlan.zhihu.com/p/371239130

I. Configuring CUDA and cuDNN on Ubuntu 18

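I won't go into detail here, since there are plenty of guides online. Note, however, that the different CUDA package formats differ considerably in how they are installed. My other blog post covers the combination of the CUDA .run installer and the cuDNN .tgz archive; the link is given above.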
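Whichever package format you use, it is worth confirming which CUDA release is actually installed before choosing a TensorRT package. The sketch below is a hypothetical helper (the name `cuda_release` is my own, not part of CUDA) that extracts the release number from `nvcc --version` output. It assumes the usual "Cuda compilation tools, release X.Y, ..." line and that `nvcc` is on your PATH (a default install places it in /usr/local/cuda/bin).

```bash
# Hypothetical helper: read `nvcc --version` output on stdin and print the
# CUDA release number, e.g. "10.2". Assumes the "release X.Y," line format.
cuda_release() {
    sed -n 's/.*release \([0-9][0-9]*\.[0-9][0-9]*\).*/\1/p'
}
```

Usage: `$ nvcc --version | cuda_release` should print `10.2` on the machine described in this article.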

II. Configuring TensorRT on Ubuntu 18

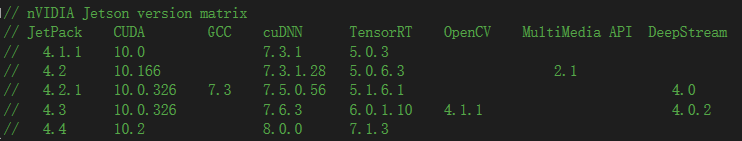

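Mind the versions. Mind the versions. Mind the versions. The versions of CUDA, cuDNN, and TensorRT must match exactly, and the Tengine source code clearly lists which versions go together: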

In practice, I have confirmed that CUDA 10.2 + cuDNN 8.0.1.13 + TensorRT 7.1.3.4 also works!


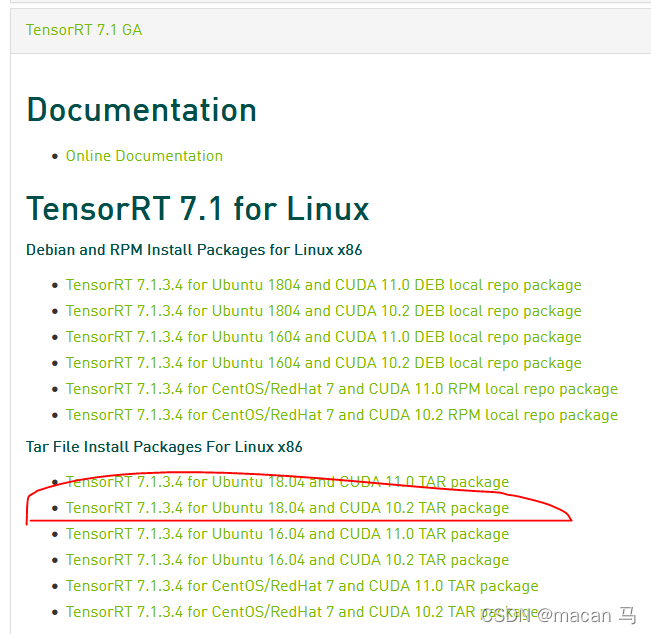
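To check your machine against these version requirements you also need the installed cuDNN version. cuDNN 8 keeps its version macros (`CUDNN_MAJOR`, `CUDNN_MINOR`, `CUDNN_PATCHLEVEL`) in `cudnn_version.h`. The function below is a hypothetical sketch (`header_version` is not part of any NVIDIA toolkit) that joins the values of the named `#define` lines of a header with dots. The header path in the usage example assumes cuDNN was copied into /usr/local/cuda/include, as with the .tgz install.

```bash
# Hypothetical helper: print the values of the given #define macros from a
# header, joined with dots, e.g. "8.0.1". Fails if any macro is missing.
header_version() {
    hv_file=$1; shift              # first argument: header file
    hv_out=""
    for hv_macro in "$@"; do       # remaining arguments: macro names, in order
        hv_val=$(awk -v m="$hv_macro" '$1 == "#define" && $2 == m { print $3; exit }' "$hv_file")
        if [ -z "$hv_val" ]; then
            echo "$hv_macro not found in $hv_file" >&2
            return 1
        fi
        hv_out="${hv_out:+$hv_out.}$hv_val"   # add a dot only between parts
    done
    printf '%s\n' "$hv_out"
}
```

Usage: `$ header_version /usr/local/cuda/include/cudnn_version.h CUDNN_MAJOR CUDNN_MINOR CUDNN_PATCHLEVEL` should print `8.0.1` for cuDNN 8.0.1.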

(1) Download the matching version of TensorRT

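Determine which version you need, then download it from NVIDIA's official TensorRT download page (an NVIDIA Developer account is required).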
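The TensorRT tar package name itself records the CUDA and cuDNN versions it was built for, as in the file name used in the extraction step. The sketch below is a hypothetical helper that splits such a name into its parts. It assumes the naming pattern `TensorRT-<version>.Ubuntu-<release>.<platform>.cuda-<x.y>.cudnn<x.y>.tar.gz` seen in this article and rejects anything else.

```bash
# Hypothetical helper: print the versions encoded in a TensorRT tar package
# name. Assumes the naming pattern used by the package in this article.
parse_trt_tarball() {
    trt_name=$(basename "$1")
    trt_info=$(printf '%s\n' "$trt_name" | sed -n \
        's/^TensorRT-\([0-9.]*\)\.Ubuntu-\([0-9]*\.[0-9]*\)\..*\.cuda-\([0-9.]*\)\.cudnn\([0-9.]*\)\.tar\.gz$/tensorrt=\1 ubuntu=\2 cuda=\3 cudnn=\4/p')
    if [ -z "$trt_info" ]; then
        echo "unrecognized file name: $trt_name" >&2
        return 1
    fi
    printf '%s\n' "$trt_info"
}
```

For example, `$ parse_trt_tarball TensorRT-7.1.3.4.Ubuntu-18.04.x86_64-gnu.cuda-10.2.cudnn8.0.tar.gz` prints `tensorrt=7.1.3.4 ubuntu=18.04 cuda=10.2 cudnn=8.0`, which you can compare with the CUDA and cuDNN versions already installed.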

(2) Extract TensorRT


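The archive unpacks into a top-level TensorRT-7.1.3.4 directory, and tar's -C option requires the target directory to exist already. Create the directory first, then use --strip-components=1 so that the libraries land directly in /usr/local/TensorRT/lib, the path used in the next step (writing to /usr/local requires sudo):

$ sudo mkdir -p /usr/local/TensorRT

$ sudo tar -zxvf TensorRT-7.1.3.4.Ubuntu-18.04.x86_64-gnu.cuda-10.2.cudnn8.0.tar.gz -C /usr/local/TensorRT --strip-components=1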
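After extracting, a quick check that the libraries ended up where the environment variables will point can save debugging later. The function below is a hypothetical sketch. It assumes the tar package's lib/ and include/ layout: `libnvinfer.so`, `NvInfer.h`, and the version macros `NV_TENSORRT_MAJOR`/`MINOR`/`PATCH`/`BUILD` in `NvInferVersion.h`.

```bash
# Hypothetical check: verify the extracted layout and print the TensorRT
# version recorded in include/NvInferVersion.h (assumed file location).
check_trt_dir() {
    trt_root=${1:-/usr/local/TensorRT}
    for f in lib/libnvinfer.so include/NvInfer.h include/NvInferVersion.h; do
        if [ ! -e "$trt_root/$f" ]; then
            echo "missing $trt_root/$f (extracted one level too deep?)" >&2
            return 1
        fi
    done
    # Collect only the four version macros and join them with dots.
    awk '$1 == "#define" && $2 ~ /^NV_TENSORRT_(MAJOR|MINOR|PATCH|BUILD)$/ { v[$2] = $3 }
         END { print v["NV_TENSORRT_MAJOR"] "." v["NV_TENSORRT_MINOR"] "." v["NV_TENSORRT_PATCH"] "." v["NV_TENSORRT_BUILD"] }' \
        "$trt_root/include/NvInferVersion.h"
}
```

Usage: `$ check_trt_dir /usr/local/TensorRT` should print `7.1.3.4` for this package. An error about a missing file usually means the archive was extracted into an extra TensorRT-7.1.3.4 subdirectory.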

(3) Add environment variables

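After extraction, add environment variables so that programs can find the TensorRT libraries: LD_LIBRARY_PATH is searched by the dynamic loader at run time, and LIBRARY_PATH is searched by GCC at link time.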

$ vim ~/.bashrc

Append the following lines to the end of the file:

export LD_LIBRARY_PATH=/usr/local/TensorRT/lib:$LD_LIBRARY_PATH

export LIBRARY_PATH=/usr/local/TensorRT/lib:$LIBRARY_PATH
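Editing ~/.bashrc by hand works, but a setup script run twice would add the same lines twice. A hypothetical helper like the one below (my own sketch, not part of TensorRT) appends the two export lines only if an identical line is not already present.

```bash
# Hypothetical helper: idempotently add the TensorRT library directory to
# LD_LIBRARY_PATH and LIBRARY_PATH in a shell rc file.
add_trt_env() {
    rc_file=$1
    trt_lib=${2:-/usr/local/TensorRT/lib}
    for var in LD_LIBRARY_PATH LIBRARY_PATH; do
        # "\$$var" writes a literal "$LD_LIBRARY_PATH" / "$LIBRARY_PATH".
        line="export $var=$trt_lib:\$$var"
        # Append only when no identical whole line exists yet.
        grep -qxF "$line" "$rc_file" 2>/dev/null || printf '%s\n' "$line" >> "$rc_file"
    done
}
```

Usage: `$ add_trt_env ~/.bashrc` appends the lines for the default /usr/local/TensorRT/lib; calling it a second time leaves the file unchanged.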

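Then reload the file so the changes take effect in the current shell (or simply open a new terminal):

$ source ~/.bashrc

TensorRT is now installed.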
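To confirm that the new variables are in effect, you can look for libnvinfer.so in each LD_LIBRARY_PATH entry. This is a hypothetical sketch. Note that it only inspects LD_LIBRARY_PATH, while the dynamic loader also consults the ldconfig cache and the default system directories, so it answers the narrower question of whether the export took effect.

```bash
# Hypothetical check: print the first LD_LIBRARY_PATH entry that contains
# the given library (default: libnvinfer.so); fail if none does.
find_in_ld_path() {
    lib=${1:-libnvinfer.so}
    old_ifs=$IFS
    IFS=:                                  # split the path on colons
    for dir in ${LD_LIBRARY_PATH-}; do
        if [ -n "$dir" ] && [ -e "$dir/$lib" ]; then
            IFS=$old_ifs
            printf '%s\n' "$dir"
            return 0
        fi
    done
    IFS=$old_ifs
    echo "$lib not found in LD_LIBRARY_PATH" >&2
    return 1
}
```

Usage: after the .bashrc changes are loaded, `$ find_in_ld_path` should print `/usr/local/TensorRT/lib`.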

For how to use TensorRT within Tengine, see my other blog post: [Running an object detection algorithm with TensorRT on the Tengine platform](https://blog.csdn.net/weixin_42211626/article/details/122425281)
