import numpy as np
import torch
import torch.nn as nn
import torch.optim as optim

sentences = ["i like dog",'i like animal',

"dog cat animal","apple cat dog like","cat like fish",

"dog like meat","i like apple","i hate apple",

"i like movie book music apple","dog like bak","dog friend cat"]

work_list = ' '.join(sentences).split()

work_list = list(set(work_list))

work_dict = {w:i for i,w in enumerate(work_list)}

number_dict = {i:w for i,w in enumerate(work_list)}

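# Data: build [target, context] index pairs with a window of one word per side.
# Iterate over the full token sequence, not the de-duplicated vocabulary,
# so that neighbours reflect the actual sentences.
word_sequence = ' '.join(sentences).split()
skip_grams = []
for i in range(1, len(word_sequence) - 1):
    target = work_dict[word_sequence[i]]
    context = [work_dict[word_sequence[i - 1]], work_dict[word_sequence[i + 1]]]
    for w in context:
        skip_grams.append([target, w])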

# Model

dtype = torch.FloatTensor

vec_size = len(work_list)

embedding_size = 2

batch_size = 2

class Word2Vec(nn.Module):
    def __init__(self):
        super(Word2Vec, self).__init__()
        # W maps a one-hot word vector to its embedding.
        self.W = nn.Parameter(torch.rand(vec_size, embedding_size).type(dtype))
        # WT maps an embedding back to scores over the vocabulary.
        self.WT = nn.Parameter(torch.rand(embedding_size, vec_size).type(dtype))

    def forward(self, x):
        hidden_layer = torch.matmul(x, self.W)            # [batch, embedding_size]
        out_layer = torch.matmul(hidden_layer, self.WT)   # [batch, vec_size]
        return out_layer

model = Word2Vec()

loss_fun = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(),lr=0.001)

# Training
def random_batch(data, size):
    random_inputs = []
    random_labels = []
    # Sample `size` distinct skip-gram pairs.
    random_index = np.random.choice(range(len(data)), size, replace=False)
    for i in random_index:
        random_inputs.append(np.eye(vec_size)[data[i][0]])  # target as one-hot
        random_labels.append(data[i][1])                    # context word index
    return random_inputs, random_labels

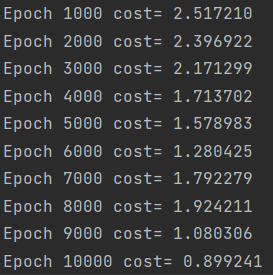

for epoch in range(10000):
    input_batch, target_batch = random_batch(skip_grams, batch_size)
    # Stack the one-hot rows into a single array before building the tensor.
    input_batch = torch.Tensor(np.array(input_batch))
    target_batch = torch.LongTensor(target_batch)

    optimizer.zero_grad()
    output = model(input_batch)
    # CrossEntropyLoss expects raw scores and class indices.
    loss = loss_fun(output, target_batch)
    if (epoch + 1) % 1000 == 0:
        print('Epoch', '%04d' % (epoch + 1), 'cost=', '{:.6f}'.format(loss.item()))

    loss.backward()
    optimizer.step()
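
The data step above pairs each word with its immediate left and right neighbours. As a rough sketch of the same idea with a configurable window, the helper below builds the pairs from a plain token list. The names `make_skip_grams` and `window` are hypothetical and not part of the original script. Unlike the loop above, this version also emits pairs for the first and last tokens, and it returns words rather than vocabulary indices.

```python
def make_skip_grams(tokens, window=1):
    """Return [target, context] pairs for each token and its neighbours.

    Hypothetical helper for illustration; `window` is the number of
    neighbours taken on each side of the target.
    """
    pairs = []
    for i, target in enumerate(tokens):
        lo = max(0, i - window)                  # clamp at the start of the sequence
        hi = min(len(tokens), i + window + 1)    # clamp at the end of the sequence
        for j in range(lo, hi):
            if j != i:                           # a word is not its own context
                pairs.append([target, tokens[j]])
    return pairs


tokens = "i like dog".split()
print(make_skip_grams(tokens, window=1))
# [['i', 'like'], ['like', 'i'], ['like', 'dog'], ['dog', 'like']]
```

To feed these pairs to the model, each word would still need to be mapped through `work_dict` to its index.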
