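Project Overview

Dataset: 5 classes of Pokémon in total, 1,165 images.

Framework used to build the models: PyTorch.

1. Build a custom dataset reader on top of torch.utils.data; see the code for details.

2. Train both the pretrained resnet18 provided by torchvision and a custom residual network, then compare their test-set results to see how much transfer learning helps on this task.

Code

First, the custom dataset reader.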

import torch
import os,glob
import random,csv
from torch.utils.data import Dataset
from torchvision import transforms
from PIL import Image

class Pokemon(Dataset):

    def __init__(self,root,resize,mode):
        super(Pokemon,self).__init__()
        self.root=root
        self.resize=resize
        #map each class folder name to an integer label, in sorted order
        self.name2label={}
        for name in sorted(os.listdir(os.path.join(root))):
            if not os.path.isdir(os.path.join(root,name)):
                continue
            self.name2label[name]=len(self.name2label.keys())
        #print(self.name2label)
        self.images,self.labels=self.load_csv('images.csv')
        #60% train / 20% validation / 20% test, taken from the shuffled list
        if mode=='train':
            self.images=self.images[:int(0.6*len(self.images))]
            self.labels=self.labels[:int(0.6*len(self.labels))]
        elif mode=='validation':
            self.images=self.images[int(0.6*len(self.images)):int(0.8*len(self.images))]
            self.labels=self.labels[int(0.6*len(self.labels)):int(0.8*len(self.labels))]
        else:
            self.images=self.images[int(0.8*len(self.images)):int(len(self.images))]
            self.labels=self.labels[int(0.8*len(self.labels)):int(len(self.labels))]

    def load_csv(self,filename):
        if not os.path.exists(os.path.join(self.root,filename)): #the csv file does not exist yet
            images=[]
            for name in self.name2label.keys():
                images+=glob.glob(os.path.join(self.root,name,'*.png')) #list of paths to the png files; same below
                images+=glob.glob(os.path.join(self.root,name,'*.jpg'))
                images+=glob.glob(os.path.join(self.root,name,'*.jpeg'))
            print(len(images))
            random.shuffle(images) #shuffle once; the order is then fixed by the csv file
            with open(os.path.join(self.root,filename),mode='w',newline='') as f:
                writer=csv.writer(f)
                for img in images:
                    name=img.split(os.sep)[-2] #the parent folder name is the class name
                    label=self.name2label[name]
                    writer.writerow([img,label])
                print('written into csv file:',filename)
        images,labels=[],[]
        with open(os.path.join(self.root,filename)) as f: #read the csv file
            reader=csv.reader(f)
            for row in reader:
                img,label=row
                label=int(label)
                images.append(img)
                labels.append(label)
        assert len(images)==len(labels)
        return images,labels

    def denormalize(self,x_hat):
        mean=[0.485,0.456,0.406]
        std=[0.229,0.224,0.225]
        #a single image x has shape [c,h,w],
        #so mean and std are expanded to 3 dimensions ([c,1,1]) to broadcast
        mean=torch.tensor(mean).unsqueeze(1).unsqueeze(1)
        std=torch.tensor(std).unsqueeze(1).unsqueeze(1)
        x=x_hat*std+mean
        return x

    def __len__(self):
        return len(self.images)

    def __getitem__(self,idx): #load one image and its label
        img,label=self.images[idx],self.labels[idx]
        tf=transforms.Compose([
            lambda x:Image.open(x).convert('RGB'), #convert the path to image data
            transforms.Resize((int(self.resize*1.25),int(self.resize*1.25))), #resize to a uniform size
            transforms.RandomRotation(15), #larger rotations can keep the network from converging
            transforms.CenterCrop(self.resize), #crop to the target size
            transforms.ToTensor(),
            transforms.Normalize(mean=[0.485,0.456,0.406],std=[0.229,0.224,0.225]) #follow the ImageNet statistics
        ])
        img=tf(img)
        label=torch.tensor(label)
        return img,label
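
The 60/20/20 split in `Pokemon.__init__` is plain index arithmetic on the shuffled file list. As a minimal sketch (the helper name `split_bounds` is hypothetical and not part of the original code), the slice boundaries for the 1,165 images can be checked without loading any data:

```python
def split_bounds(n):
    """Return (start, end) index pairs for each mode.

    Mirrors the slicing in Pokemon.__init__: the boundaries are
    int(0.6*n) and int(0.8*n), and the test slice takes the rest.
    """
    train_end = int(0.6 * n)
    val_end = int(0.8 * n)
    return {
        "train": (0, train_end),
        "validation": (train_end, val_end),
        "test": (val_end, n),
    }


bounds = split_bounds(1165)
# Number of images that each mode would see
sizes = {mode: end - start for mode, (start, end) in bounds.items()}
print(sizes)
```

For 1,165 images this gives 699 training, 233 validation, and 233 test images. Because the shuffled order is written to images.csv once and reused, the three slices stay disjoint across runs as long as that file is not regenerated.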

Next, define the custom residual network by subclassing nn.Module.

import torch
from torch import nn
from torch.nn import functional as F

class ResBlock(nn.Module): #residual block

    def __init__(self,ch_in,ch_out,stride=1):
        super(ResBlock,self).__init__()
        self.conv1=nn.Conv2d(ch_in,ch_out,kernel_size=3,stride=stride,padding=1)
        self.bn1=nn.BatchNorm2d(ch_out)
        self.conv2=nn.Conv2d(ch_out,ch_out,kernel_size=3,stride=1,padding=1) #no size change in this conv layer
        self.bn2=nn.BatchNorm2d(ch_out)
        #the shortcut must produce the same shape as the main branch,
        #so project it with a 1x1 conv whenever channels or spatial size change
        self.extra=nn.Sequential()
        if ch_out!=ch_in or stride!=1:
            self.extra=nn.Sequential(nn.Conv2d(ch_in,ch_out,kernel_size=1,stride=stride),
                                     nn.BatchNorm2d(ch_out))

    def forward(self,x):
        out=F.relu(self.bn1(self.conv1(x)))
        out=self.bn2(self.conv2(out))
        #kernel_size=3 with padding=1 keeps the feature-map size when stride=1;
        #with stride>1, conv1 downsamples it
        #x:[batch_size,ch_in,h,w] => out:[batch_size,ch_out,h',w']
        #residual connection
        out=self.extra(x)+out
        out=F.relu(out)
        return out

class ResNet18(nn.Module):

    def __init__(self,num_class):
        super(ResNet18,self).__init__()
        self.conv1=nn.Sequential(nn.Conv2d(3,16,kernel_size=3,stride=3,padding=0),nn.BatchNorm2d(16))
        self.blk1=ResBlock(16,32,stride=3)
        self.blk2=ResBlock(32,64,stride=3)
        self.blk3=ResBlock(64,128,stride=2)
        self.blk4=ResBlock(128,256,stride=2)
        self.outlayer=nn.Linear(256*3*3,num_class) #assumes 224x224 input, which ends as a 3x3 feature map

    def forward(self,x):
        x=F.relu(self.conv1(x))
        x=self.blk1(x)
        x=self.blk2(x)
        x=self.blk3(x)
        x=self.blk4(x)
        #flatten
        x=x.view(x.size(0),-1)
        x=self.outlayer(x)
        return x
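
The `256*3*3` input size of `outlayer` follows from the strides in the network. As a rough sketch, assuming the 224x224 input used later in training, the standard convolution output-size formula can be applied layer by layer (the helper names `conv_out` and `trace_resnet18` are hypothetical, introduced only for this illustration):

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_resnet18(size=224):
    """Follow one spatial dimension through the custom ResNet18."""
    sizes = [size]
    # Stem: Conv2d(3, 16, kernel_size=3, stride=3, padding=0)
    size = conv_out(size, kernel=3, stride=3, padding=0)
    sizes.append(size)
    # blk1..blk4: only the first 3x3 conv (padding=1) of each block is strided
    for stride in (3, 3, 2, 2):
        size = conv_out(size, kernel=3, stride=stride, padding=1)
        sizes.append(size)
    return sizes


trace = trace_resnet18(224)
print(trace)                # spatial size after each stage
print(256 * trace[-1] ** 2) # features fed to the final linear layer
```

The trace is 224, 74, 25, 9, 5, 3, so the last block outputs a [256, 3, 3] feature map and the linear layer receives 2,304 features. The 1x1 shortcut convolution (no padding) and the strided 3x3 convolution (padding 1) both reduce to `(size - 1) // stride + 1`, which is why the two branches can always be added. A different input resolution would change the final size and require a different `nn.Linear` input dimension.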

For training, the transfer_learning argument passed to main() selects whether to train the custom residual network or the model built on the pretrained resnet18.

import torch
from torch import optim,nn
import visdom
from torch.utils.data import DataLoader
from pokemon import Pokemon
from resnet import ResNet18
from torchvision.models import resnet18
from utils import Flatten

#parameters
batch_size=32
lr=1e-3
epochs=10
device=torch.device('cpu')
torch.manual_seed(1)

#data
train_set=Pokemon('./pokemon',224,mode='train')
validation_set=Pokemon('./pokemon',224,mode='validation')
test_set=Pokemon('./pokemon',224,mode='test')
train_loader=DataLoader(train_set,batch_size=batch_size,shuffle=True)
val_loader=DataLoader(validation_set,batch_size=32)
test_loader=DataLoader(test_set,batch_size=32)

def evaluate(model,loader):
    model.eval() #use the running BatchNorm statistics while evaluating
    correct=0
    size=len(loader.dataset)
    for x,y in loader:
        x,y=x.to(device),y.to(device)
        with torch.no_grad(): #no gradients are needed here: this pass only measures accuracy
                              #and its results are not used to update the network parameters
            output=model(x)
            pred=output.argmax(dim=1)
        correct+=torch.eq(pred,y).sum().float().item() #item() converts a one-element tensor to a Python number
    return correct/size

viz=visdom.Visdom() #visualization object; requires a running visdom server

def main(transfer_learning=True):
    if transfer_learning: #build on the pretrained resnet18
        trained_model=resnet18(pretrained=True) #newer torchvision versions use the weights= argument instead
        model=nn.Sequential(*list(trained_model.children())[:-1], #output of this part has size [b,512,1,1]
                            Flatten(),
                            nn.Linear(512,5)).to(device)
        print('Transfer Learning Model Loaded!')
    else:
        model=ResNet18(5).to(device)
    optimizer=optim.Adam(model.parameters(),lr=lr)
    metric=nn.CrossEntropyLoss()
    best_acc,best_epoch=0,0
    global_step=0
    viz.line([0],[-1],win='loss',opts=dict(title='loss'))
    viz.line([0],[-1],win='val_acc',opts=dict(title='val_acc'))
    for epoch in range(epochs):
        model.train() #switch back to training mode after each evaluation
        for step,(x,y) in enumerate(train_loader):
            x,y=x.to(device),y.to(device)
            output=model(x)
            loss=metric(output,y)
            optimizer.zero_grad() #clear the accumulated gradients
            loss.backward() #compute the gradients for this step
            optimizer.step() #update the parameters with these gradients
            viz.line([loss.item()],[global_step],win='loss',update='append')
            global_step+=1
        if epoch%1==0: #validate after every epoch
            val_acc=evaluate(model,val_loader)
            if val_acc>best_acc:
                best_epoch=epoch
                best_acc=val_acc
                torch.save(model.state_dict(),'bestmodel.para') #the file extension is arbitrary
            viz.line([val_acc],[global_step],win='val_acc',update='append')
    print('best acc:',best_acc,'best epoch:',best_epoch)
    model.load_state_dict(torch.load('bestmodel.para'))
    print('loaded from ckpt!')
    test_acc=evaluate(model,test_loader)
    print('test acc:',test_acc)

if __name__=='__main__':
    main(transfer_learning=False)
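
The bookkeeping in the training script (accuracy as correct predictions over dataset size, and keeping the checkpoint from the best validation epoch) can be isolated from PyTorch. The following is only an illustrative sketch on plain Python lists; `accuracy` and `pick_best` are hypothetical helper names, not functions from the original code:

```python
def accuracy(batches):
    """Fraction of correct predictions over all batches.

    batches: iterable of (predictions, labels) pairs, each a list of ints.
    """
    correct = 0
    total = 0
    for preds, labels in batches:
        correct += sum(int(p == y) for p, y in zip(preds, labels))
        total += len(labels)
    return correct / total


def pick_best(val_accs):
    """Return (best_acc, best_epoch) using the same strict '>' test as main()."""
    best_acc, best_epoch = 0, 0
    for epoch, acc in enumerate(val_accs):
        if acc > best_acc:  # ties keep the earlier epoch
            best_acc, best_epoch = acc, epoch
    return best_acc, best_epoch


print(accuracy([([0, 1, 2], [0, 1, 1]), ([3], [3])]))
print(pick_best([0.5, 0.8, 0.8, 0.7]))
```

Because the comparison is strict and `best_acc` starts at 0, a checkpoint is written only once validation accuracy rises above zero, and an epoch that merely ties the best result does not overwrite the saved file.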

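Older PyTorch versions do not provide a Flatten() layer, so the flattening operation is defined by hand here. Newer releases ship nn.Flatten, which can be used instead.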

import torch
from torch import nn

class Flatten(nn.Module):

    def __init__(self):
        super(Flatten,self).__init__()

    def forward(self,x):
        shape=torch.prod(torch.tensor(x.shape[1:])).item()
        return x.view(-1,shape)
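
The shape arithmetic inside `Flatten.forward` is just a product over every dimension except the batch dimension. A small sketch of that calculation in plain Python (the function name `flattened_shape` is hypothetical, used here for illustration only):

```python
import math


def flattened_shape(shape):
    """Shape after collapsing all non-batch dimensions into one."""
    batch, *rest = shape
    return (batch, math.prod(rest))


# Output of the truncated pretrained resnet18: [b, 512, 1, 1]
print(flattened_shape((32, 512, 1, 1)))
# Output of the last block in the custom network: [b, 256, 3, 3]
print(flattened_shape((32, 256, 3, 3)))
```

This is why the linear layer after the pretrained backbone takes 512 inputs, while the custom network's output layer takes 256*3*3.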

Finally, the training results.

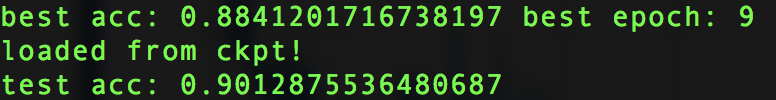

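First, the results for the custom network. It reaches an accuracy of about 0.9 on the training set, which is somewhat unexpected.

Looking more closely, the test-set accuracy is even about 2 percentage points higher than the validation-set accuracy, so, surprisingly, there is no sign of overfitting.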

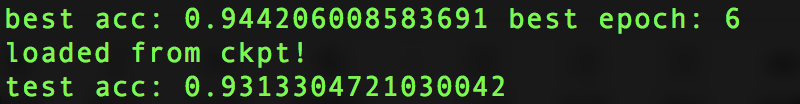

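Next, the results for the model built on the pretrained resnet18.

As expected, accuracy improves, but by less than anticipated: the test-set accuracy is 0.93. Transfer learning does not make a large difference on this task, partly because the custom network already performed better than expected.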
