Contents

16-NIPS-improved deep metric learning with multi-class n-pair loss objective

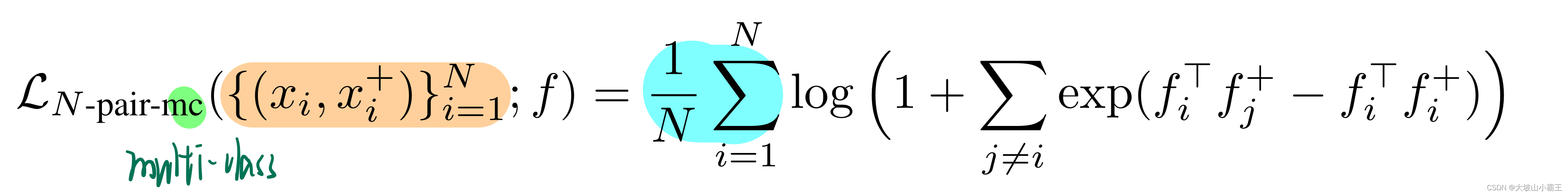

multi-class N-pair loss (N-pair-mc)

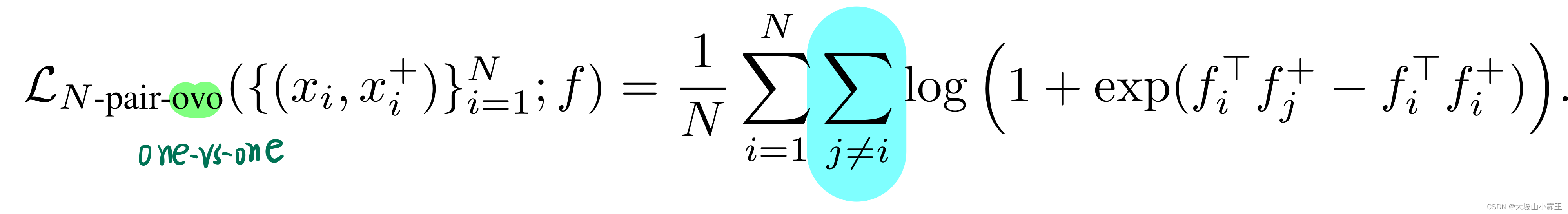

one-vs-one N-pair loss (N-pair-ovo)

L2 norm regularization of embedding vectors

16-NIPS-improved deep metric learning with multi-class n-pair loss objective

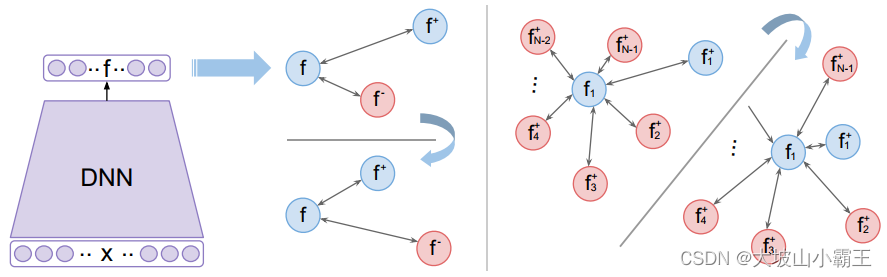

Multiple Negative Examples

(N+1)-tuplet loss

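Pushes away negative examples from multiple classes at the same time.

With N = 2, the (N+1)-tuplet loss resembles the triplet loss.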
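For reference, the losses being compared can be written out as below. This is a reconstruction from the paper's definitions rather than a copy of the image: $f$, $f^+$ and $f_i$ are assumed to denote the embeddings of the anchor, the positive example and the $i$-th negative example, and the numbers (4) and (5) are assumed to match the equation numbers used in these notes.

```latex
\begin{align}
\mathcal{L}_{(N+1)\text{-tuplet}}
  &= \log\Bigl(1 + \sum_{i=1}^{N-1} \exp\bigl(f^\top f_i - f^\top f^+\bigr)\Bigr) \notag \\
\mathcal{L}_{(2+1)\text{-tuplet}}
  &= \log\bigl(1 + \exp(f^\top f_i - f^\top f^+)\bigr) \tag{4} \\
\mathcal{L}_{\text{triplet}}
  &= \max\bigl(0,\; f^\top f_i - f^\top f^+\bigr) \tag{5}
\end{align}
```

With N = 2 the sum has a single term, so (4) is the softplus of the same quantity whose hinge appears in (5).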

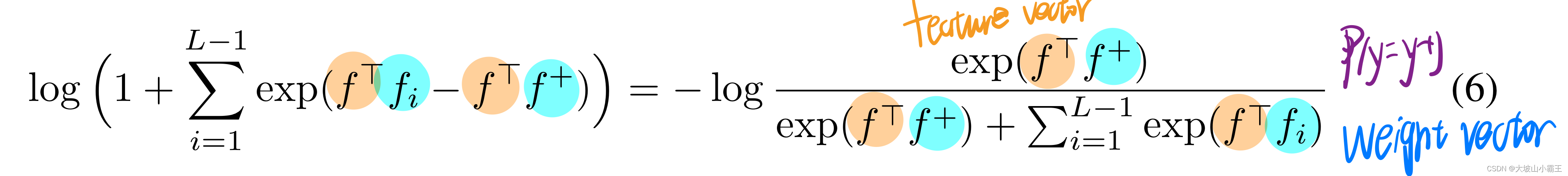

Equations (4) and (5) are shown in the image above.

- The problem with the triplet loss is that training can be unstable and convergence is slow.

To address this, prior work optimizes a smooth upper bound of the triplet loss, replacing the hinge function with the softplus function.

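SoftPlus activation function:

A smooth approximation of the ReLU function.

Its output is never exactly 0, which avoids the dead ReLU problem. However, its output has no negative range, so it does not speed up learning.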
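The relationship between the hinge and softplus functions can be checked numerically. The following is a minimal sketch in plain Python; the function names `hinge`, `softplus` and `softplus_grad` are chosen here for illustration and do not come from the paper.

```python
import math

def hinge(x: float) -> float:
    """Hinge / ReLU: max(0, x)."""
    return max(0.0, x)

def softplus(x: float) -> float:
    """Softplus: log(1 + exp(x)), written in a numerically stable form."""
    # max(x, 0) + log1p(exp(-|x|)) equals log(1 + exp(x)) and avoids overflow.
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def softplus_grad(x: float) -> float:
    """Derivative of softplus, which is the logistic sigmoid."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

if __name__ == "__main__":
    for x in (-5.0, -1.0, 0.0, 1.0, 5.0):
        print(f"x={x:+.1f}  hinge={hinge(x):.4f}  "
              f"softplus={softplus(x):.4f}  grad={softplus_grad(x):.4f}")
```

Softplus lies above the hinge everywhere and its gradient is strictly positive even for negative inputs, which is the property the notes refer to when they say it avoids dead units.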

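With N = L (the number of classes), the loss resembles the softmax loss.

[Note: at this point the loss looks very much like cross-entropy. The difference is that the cross-entropy loss uses learnable class-wise weights as a proxy for each class's instances, rather than using the instance embeddings directly. In cross-entropy, all negative classes take part in the loss, whereas here the anchor instance is only pushed away from the negative examples sampled into the mini-batch. This is why later proxy-based methods do away with sampling.]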
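The similarity to cross-entropy can be made concrete with a small numerical check. The sketch below uses made-up toy embeddings and treats the positive and negative embeddings as if they were the class weights of a softmax classifier; the helper names are hypothetical.

```python
import math

def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def tuplet_loss(f, f_pos, f_negs):
    """(N+1)-tuplet loss: log(1 + sum_i exp(f.f_i - f.f_pos))."""
    pos = dot(f, f_pos)
    return math.log1p(sum(math.exp(dot(f, f_i) - pos) for f_i in f_negs))

def softmax_cross_entropy(logits, target):
    """-log softmax(logits)[target], using the log-sum-exp trick."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_z - logits[target]

# Toy embeddings (arbitrary numbers): one anchor, one positive, three negatives.
f = [0.2, -0.1, 0.4]
f_pos = [0.3, 0.0, 0.5]
f_negs = [[-0.2, 0.1, 0.0], [0.1, 0.4, -0.3], [0.0, -0.5, 0.2]]

# Logit k is the inner product of the anchor with the k-th embedding;
# index 0 is the positive, so it plays the role of the target class.
logits = [dot(f, f_pos)] + [dot(f, f_i) for f_i in f_negs]

loss_tuplet = tuplet_loss(f, f_pos, f_negs)
loss_ce = softmax_cross_entropy(logits, target=0)
print(loss_tuplet, loss_ce)  # the two values agree up to rounding
```

The only difference from a standard classifier is where the "weights" come from: here they are embeddings of sampled instances, not learned per-class parameters.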

multi-class N-pair loss (N-pair-mc)

one-vs-one N-pair loss (N-pair-ovo)

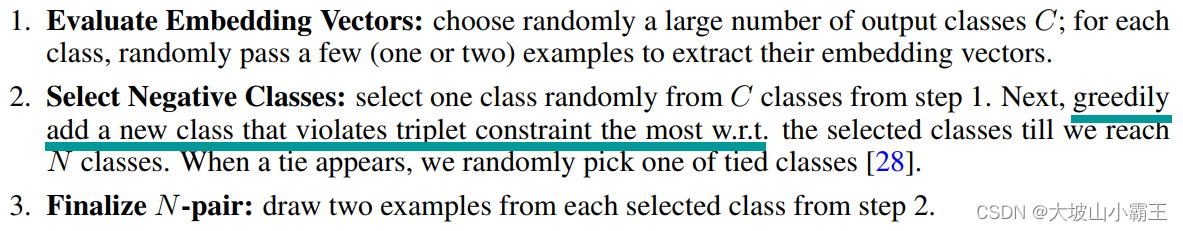

Hard negative class mining

L2 norm regularization of embedding vectors

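To remove the influence of the embedding norm → normalize the embeddings.

→ Normalization strictly constrains |f^T f^+| < 1, which makes optimization difficult → instead, regularize the L2 norm of the embedding vectors to keep it small.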

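```python
import torch

# Fragment of a loss module's forward pass: `batch` is a (B, D) embedding tensor;
# `anchors`, `positives`, `negatives` hold row indices (negatives: one index list per anchor).
loss = 0
for anchor, positive, negative_set in zip(anchors, positives, negatives):
    a_embs, p_embs, n_embs = batch[anchor:anchor+1], batch[positive:positive+1], batch[negative_set]
    # f^T (f_i - f^+) for each negative i: (1, 1, D) x (1, D, K) -> (1, 1, K), flattened to (1, K)
    inner_sum = a_embs[:, None, :].bmm((n_embs - p_embs[:, None, :]).permute(0, 2, 1))
    inner_sum = inner_sum.view(inner_sum.shape[0], inner_sum.shape[-1])
    # N-pair-mc term log(1 + sum_i exp(f^T f_i - f^T f^+)), then the L2 penalty on embedding norms
    loss = loss + torch.mean(torch.log(torch.sum(torch.exp(inner_sum), dim=1) + 1)) / len(anchors)
    loss = loss + self.l2_weight * torch.mean(torch.norm(batch, p=2, dim=1)) / len(anchors)
```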

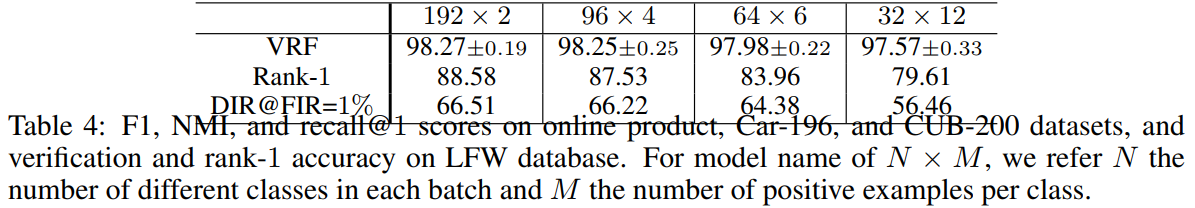

Experimental Results

In all experiments, the smooth upper bound of the triplet loss in Equation (4) is used instead of the large-margin formulation, to be consistent with the N-pair-mc loss.

The multi-class N-pair loss performs better: the one-vs-one N-pair loss is decoupled, so the loss term for each negative example is independent of the others.
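The difference between the two variants is easiest to see side by side. The sketch below follows the definitions as understood from the paper: for anchor i, the positives of the other pairs serve as negatives. The function names and toy embeddings are assumptions made for illustration.

```python
import math

def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def n_pair_mc(anchors, positives):
    """Multi-class N-pair loss: one log over the sum of all negative terms."""
    n = len(anchors)
    total = 0.0
    for i in range(n):
        pos = dot(anchors[i], positives[i])
        total += math.log1p(sum(
            math.exp(dot(anchors[i], positives[j]) - pos)
            for j in range(n) if j != i))
    return total / n

def n_pair_ovo(anchors, positives):
    """One-vs-one N-pair loss: a separate log term for every negative."""
    n = len(anchors)
    total = 0.0
    for i in range(n):
        pos = dot(anchors[i], positives[i])
        total += sum(
            math.log1p(math.exp(dot(anchors[i], positives[j]) - pos))
            for j in range(n) if j != i)
    return total / n

# Toy batch of N = 3 pairs from 3 different classes (arbitrary numbers).
anchors = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
positives = [[0.9, 0.1], [0.1, 0.8], [-0.7, -0.2]]

print("N-pair-mc :", n_pair_mc(anchors, positives))
print("N-pair-ovo:", n_pair_ovo(anchors, positives))
```

In the ovo variant each summand depends on a single negative, so its gradient ignores how the anchor relates to the other negatives. In the mc variant all negatives share one log term, so they are weighted against each other. With N = 2 there is only one negative per anchor and the two losses coincide.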

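With a fixed batch size, sampling only one pair per class means the batch covers more classes. The more negative classes involved in training, the better.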
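The batch construction described above can be sketched as follows. This is an illustrative sampler, not the authors' code: the function `sample_n_pair_batch` and the toy label list are hypothetical, and classes with fewer than two examples are simply skipped.

```python
import random
from collections import defaultdict

def sample_n_pair_batch(labels, n_classes, rng):
    """Pick n_classes distinct classes and one (anchor, positive) index pair from each."""
    by_class = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    # A class needs at least two examples to form a pair.
    eligible = [c for c, idxs in by_class.items() if len(idxs) >= 2]
    # rng.sample raises ValueError if fewer than n_classes classes are eligible.
    chosen = rng.sample(eligible, n_classes)
    pairs = [tuple(rng.sample(by_class[c], 2)) for c in chosen]
    return chosen, pairs

# Toy dataset labels: class 2 has a single example and cannot be chosen.
labels = [0, 0, 1, 1, 1, 2, 3, 3, 4, 4]
rng = random.Random(0)
classes, pairs = sample_n_pair_batch(labels, n_classes=3, rng=rng)

# A batch of 2N examples yields N anchors, each with N - 1 negatives
# (the positives of the other pairs).
print("classes:", classes)
print("pairs  :", pairs)
print("negatives per anchor:", len(pairs) - 1)
```

Because every pair comes from a different class, a batch of 2N examples exposes each anchor to N - 1 negative classes at once.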
