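Nonlinear activation: introduces nonlinearity into a neural network. Without it, stacked linear layers can only represent a linear function. With nonlinear activations, the network can be trained to fit a wide variety of curves and features, which improves the model's expressive power and generalization ability.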
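The claim above can be checked with a minimal sketch in plain Python. The scalar "layers" with weights 3 and 2, and the helper names `two_linear_layers`, `with_relu`, and `is_additive`, are assumptions made only for illustration. Two stacked linear maps still behave like one linear map, while inserting a ReLU between them breaks linearity.

```python
def relu(x: float) -> float:
    """ReLU for a single number: max(0, x)."""
    return max(0.0, x)


def two_linear_layers(x: float) -> float:
    # Two stacked "layers" with weights 3 and 2, no activation in between.
    # This is equivalent to a single layer with weight 6.
    return 2.0 * (3.0 * x)


def with_relu(x: float) -> float:
    # Same weights, but with ReLU applied between the two layers.
    return 2.0 * relu(3.0 * x)


def is_additive(f, a: float, b: float) -> bool:
    # A linear map must satisfy f(a + b) == f(a) + f(b).
    return f(a + b) == f(a) + f(b)


print(is_additive(two_linear_layers, 1.0, -1.0))  # True
print(is_additive(with_relu, 1.0, -1.0))          # False
```

The second check fails because ReLU maps the negative input to 0, so the contributions of `1.0` and `-1.0` no longer cancel.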

Commonly used nonlinear activations:

- ReLu

-

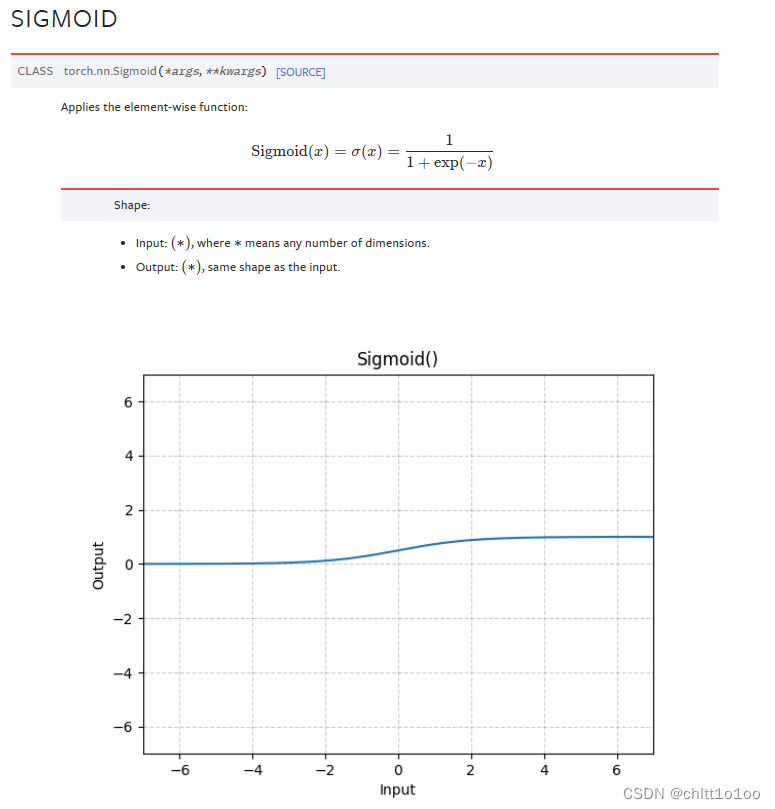

Sigmoid

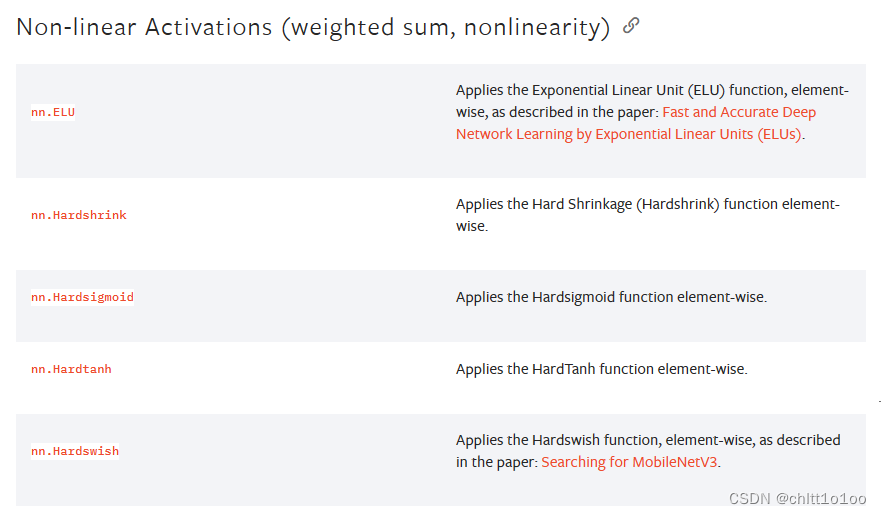

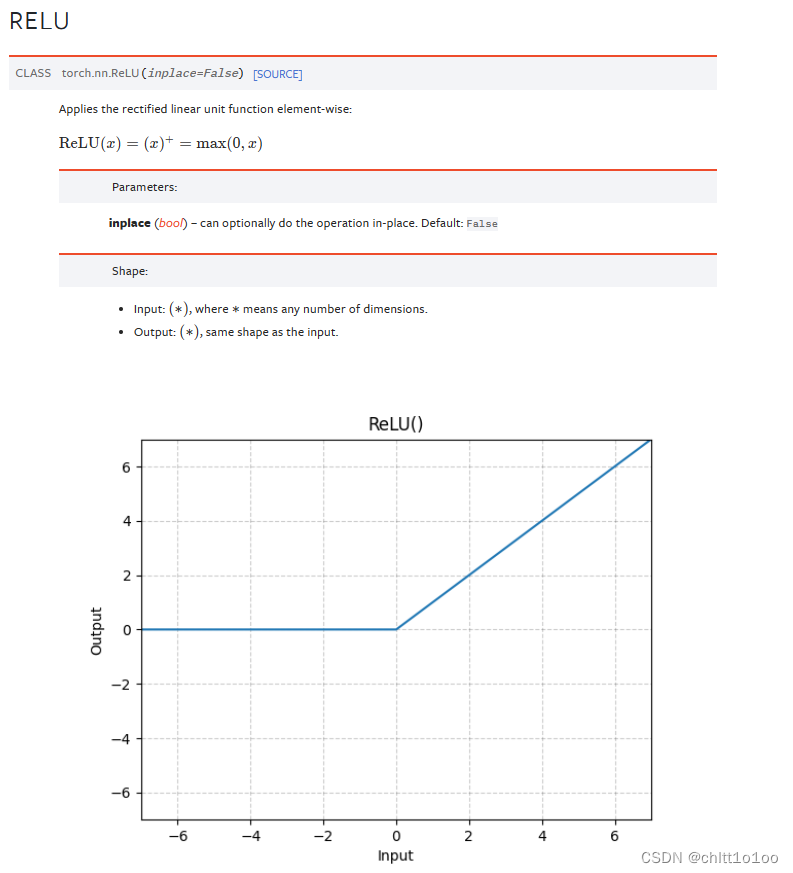
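For reference, the standard textbook definitions of these two functions are given below. They are stated here as general facts, not transcribed from the images above.

$$
\mathrm{ReLU}(x) = \max(0, x), \qquad \mathrm{Sigmoid}(x) = \frac{1}{1 + e^{-x}}
$$

ReLU sets negative inputs to zero and passes positive inputs through unchanged. Sigmoid squashes any real input into the interval (0, 1).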

1 ReLU

Example:

    import torch
    from torch import nn
    from torch.nn import ReLU

    # 2x2 input containing both positive and negative values
    input = torch.tensor([[1, -0.5],
                          [-1, 3]])


    class myNN(nn.Module):
        def __init__(self):
            super(myNN, self).__init__()
            self.relu1 = ReLU()  # inplace defaults to False

        def forward(self, input):
            output = self.relu1(input)
            return output


    mynn = myNN()
    output = mynn(input)
    print(output)
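The `inplace` parameter mentioned in the comment above can be illustrated with a small sketch that reuses the same input values. The variable names `x`, `y`, `out`, and `out_inplace` are chosen here for illustration only. With the default `inplace=False`, a new tensor is returned and the input is left untouched. With `inplace=True`, the input tensor itself is overwritten.

```python
import torch
from torch import nn

x = torch.tensor([[1.0, -0.5],
                  [-1.0, 3.0]])

# Default (inplace=False): a new tensor is returned, x keeps its negatives.
out = nn.ReLU()(x)
print(out)  # values: [[1., 0.], [0., 3.]]
print(x)    # unchanged, still contains -0.5 and -1.0

# inplace=True: the tensor passed in is modified directly.
y = x.clone()
out_inplace = nn.ReLU(inplace=True)(y)
print(y)    # values: [[1., 0.], [0., 3.]]
```

In-place operation saves memory but discards the original values, so it is only appropriate when the input is not needed afterwards.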

2 Sigmoid

Example:

    import torch
    import torchvision
    from torch import nn
    from torch.nn import Sigmoid
    from torch.utils.data import DataLoader
    from torch.utils.tensorboard import SummaryWriter

    # CIFAR10 test split, with images converted to tensors
    dataset = torchvision.datasets.CIFAR10("./datasets", train=False,
                                           transform=torchvision.transforms.ToTensor(),
                                           download=True)
    dataloader = DataLoader(dataset, batch_size=64)


    class myNN(nn.Module):
        def __init__(self):
            super(myNN, self).__init__()
            self.sigmoid1 = Sigmoid()

        def forward(self, input):
            output = self.sigmoid1(input)
            return output


    mynn = myNN()

    # Log each input batch and its Sigmoid output to TensorBoard
    writer = SummaryWriter("log5")
    step = 0
    for data in dataloader:
        imgs, targets = data
        writer.add_images("input", imgs, step)
        output = mynn(imgs)
        writer.add_images("output", output, step)
        step += 1

    writer.close()
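The logged images can be viewed by running `tensorboard --logdir=log5`. Because `ToTensor()` scales pixel values to the range [0, 1], Sigmoid maps them into roughly [0.5, 0.731], so the output images should look washed out compared with the inputs. The sketch below checks that range. It uses a random tensor as a stand-in for one CIFAR10 batch, which is an assumption made here to avoid downloading the dataset.

```python
import torch

# Stand-in for one CIFAR10 batch after ToTensor(): values in [0, 1).
# Random data is an assumption; the shape matches batch_size=64, 3x32x32 images.
imgs = torch.rand(64, 3, 32, 32)

# Same operation that the Sigmoid layer in the example above applies.
output = torch.sigmoid(imgs)

print(output.shape)                    # torch.Size([64, 3, 32, 32])
print(output.min().item() >= 0.5)      # sigmoid(0) = 0.5 is the lower bound
print(output.max().item() <= 0.7311)   # sigmoid(1) is about 0.7311
```

The shape is unchanged because Sigmoid is applied element-wise. Only the value range is compressed.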
