opencv调用openpose姿态检测(二)

论文:Realtime Multi-Person 2D Pose Estimation using Part Affinity Fields 提取码:1u2n

论文理解参考博客:

https://blog.csdn.net/w275840140/article/details/89393626

https://blog.csdn.net/wwwhp/article/details/88782851

轻量级openpose模型完成项目地址(模型7M,精度偏低):

百度云地址:https://pan.baidu.com/s/1tpZPeRSup2SNsgOJR0CWUA

提取码:6f5u

代码:

import cv2

import numpy as np

import argparse

import time

BODY_PARTS = {"Nose": 0, "Neck": 1, "RShoulder": 2, "RElbow": 3, "RWrist": 4,

"LShoulder": 5, "LElbow": 6, "LWrist": 7, "RHip": 8, "RKnee": 9,

"RAnkle": 10, "LHip": 11, "LKnee": 12, "LAnkle": 13, "REye": 14,

"LEye": 15, "REar": 16, "LEar": 17, "Background": 18}

POSE_PAIRS = [["Neck", "RShoulder"], ["Neck", "LShoulder"], ["RShoulder", "RElbow"],

["RElbow", "RWrist"], ["LShoulder", "LElbow"], ["LElbow", "LWrist"],

["Neck", "RHip"], ["RHip", "RKnee"], ["RKnee", "RAnkle"], ["Neck", "LHip"],

["LHip", "LKnee"], ["LKnee", "LAnkle"], ["Neck", "Nose"], ["Nose", "REye"],

["REye", "REar"], ["Nose", "LEye"], ["LEye", "LEar"]]

inWidth = 368

inHeight = 368

thr = 0.2

net = cv2.dnn.readNetFromTensorflow("graph_opt.pb")

def video_detect():

fps = 0.0

cap = cv2.VideoCapture(0)

while True:

t1 = time.time()

ret, frame = cap.read()

frameWidth = frame.shape[1]

frameHeight = frame.shape[0]

net.setInput(cv2.dnn.blobFromImage(frame, 1.0, (inWidth, inHeight), (127.5, 127.5, 127.5), swapRB=True, crop=False))

out = net.forward()

out = out[:, :19, :, :] # MobileNet output [1, 57, -1, -1], we only need the first 19 elements

assert (len(BODY_PARTS) == out.shape[1])

points = []

for i in range(len(BODY_PARTS)):

# Slice heatmap of corresponging body's part.

heatMap = out[0, i, :, :]

# Originally, we try to find all the local maximums. To simplify a sample

# we just find a global one. However only a single pose at the same time

# could be detected this way.

_, conf, _, point = cv2.minMaxLoc(heatMap)

x = (frameWidth * point[0]) / out.shape[3]

y = (frameHeight * point[1]) / out.shape[2]

points.append((int(x), int(y)) if conf > thr else None)

for pair in POSE_PAIRS:

partFrom = pair[0]

partTo = pair[1]

assert (partFrom in BODY_PARTS)

assert (partTo in BODY_PARTS)

idFrom = BODY_PARTS[partFrom]

idTo = BODY_PARTS[partTo]

if points[idFrom] and points[idTo]:

#cv2.line()

#第一个参数 img:要划的线所在的图像;

# 第二个参数 pt1:直线起点 第三个参数 pt2:直线终点

# 第四个参数 color:直线的颜色 第五个参数 thickness=1:线条粗细

cv2.line(frame, points[idFrom], points[idTo], (0, 255, 0), 3)

# cv2.ellipse()画椭圆

cv2.ellipse(frame, points[idFrom], (3, 3), 0, 0, 360, (0, 0, 255), cv2.FILLED)

cv2.ellipse(frame, points[idTo], (3, 3), 0, 0, 360, (0, 0, 255), cv2.FILLED)

fps = (fps + (1. / (time.time() - t1))) / 2

# print("fps= %.2f" % (fps))

frame = cv2.putText(frame, "fps= %.2f" % (fps), (0, 40), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0), 2)

cv2.imshow("video", frame)

c = cv2.waitKey(1) & 0xff

if c == 27:

cap.release()

break

def img_detect(image):

t1 = time.time()

img = cv2.imread(image)

imgWidth = img.shape[1]

imgHeight = img.shape[0]

net.setInput(cv2.dnn.blobFromImage(img, 1.0, (inWidth, inHeight), (127.5, 127.5, 127.5), swapRB=True, crop=False))

out = net.forward()

out = out[:, :19, :, :] # MobileNet output [1, 57, -1, -1], we only need the first 19 elements

assert (len(BODY_PARTS) == out.shape[1])

points = []

for i in range(len(BODY_PARTS)):

# Slice heatmap of corresponging body's part.

heatMap = out[0, i, :, :]

_, conf, _, point = cv2.minMaxLoc(heatMap)

x = (imgWidth * point[0]) / out.shape[3]

y = (imgHeight * point[1]) / out.shape[2]

points.append((int(x), int(y)) if conf > thr else None)

for pair in POSE_PAIRS:

partFrom = pair[0]

partTo = pair[1]

assert (partFrom in BODY_PARTS)

assert (partTo in BODY_PARTS)

idFrom = BODY_PARTS[partFrom]

idTo = BODY_PARTS[partTo]

if points[idFrom] and points[idTo]:

cv2.line(img, points[idFrom], points[idTo], (0, 255, 0), 3)

cv2.ellipse(img, points[idFrom], (3, 3), 0, 0, 360, (0, 0, 255), cv2.FILLED)

cv2.ellipse(img, points[idTo], (3, 3), 0, 0, 360, (0, 0, 255), cv2.FILLED)

fps = time.time() - t1

frame = cv2.putText(img, "time= %.2fs" % (fps), (0, 40), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0), 2)

cv2.imshow('img',img)

cv2.waitKey(0)

if __name__ == '__main__':

# 视频检测

video_detect()

# # 图片检测

# img_detect('qin.jpeg')

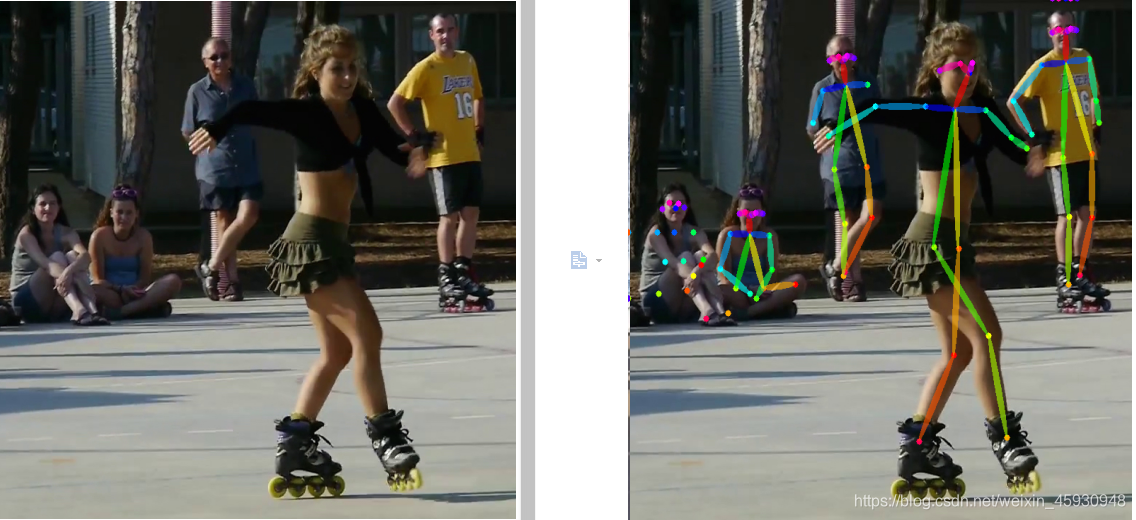

效果图:

基于keras框架openpose姿态检测(190M,精度高)

百度云链接:https://pan.baidu.com/s/1-fqumQWZnz5h4KmW9-Msog

提取码:ety0

复制这段内容后打开百度网盘手机App,操作更方便哦–来自百度网盘超级会员V1的分享

主干模型:VGG19

from keras.models import Model

from keras.layers.merge import Concatenate

from keras.layers import Activation, Input, Lambda

from keras.layers.convolutional import Conv2D

from keras.layers.pooling import MaxPooling2D

from keras.layers.merge import Multiply

from keras.regularizers import l2

from keras.initializers import random_normal,constant

def relu(x):

return Activation('relu')(x)

def conv(x, nf, ks, name, weight_decay):

kernel_reg = l2(weight_decay[0]) if weight_decay else None

bias_reg = l2(weight_decay[1]) if weight_decay else None

x = Conv2D(nf, (ks, ks), padding='same', name=name,

kernel_regularizer=kernel_reg,

bias_regularizer=bias_reg,

kernel_initializer=random_normal(stddev=0.01),

bias_initializer=constant(0.0))(x)

return x

def pooling(x, ks, st, name):

x = MaxPooling2D((ks, ks), strides=(st, st), name=name)(x)

return x

def vgg_block(x, weight_decay):

# Block 1

x = conv(x, 64, 3, "conv1_1", (weight_decay, 0))

x = relu(x)

x = conv(x, 64, 3, "conv1_2", (weight_decay, 0))

x = relu(x)

x = pooling(x, 2, 2, "pool1_1")

# Block 2

x = conv(x, 128, 3, "conv2_1", (weight_decay, 0))

x = relu(x)

x = conv(x, 128, 3, "conv2_2", (weight_decay, 0))

x = relu(x)

x = pooling(x, 2, 2, "pool2_1")

# Block 3

x = conv(x, 256, 3, "conv3_1", (weight_decay, 0))

x = relu(x)

x = conv(x, 256, 3, "conv3_2", (weight_decay, 0))

x = relu(x)

x = conv(x, 256, 3, "conv3_3", (weight_decay, 0))

x = relu(x)

x = conv(x, 256, 3, "conv3_4", (weight_decay, 0))

x = relu(x)

x = pooling(x, 2, 2, "pool3_1")

# Block 4

x = conv(x, 512, 3, "conv4_1", (weight_decay, 0))

x = relu(x)

x = conv(x, 512, 3, "conv4_2", (weight_decay, 0))

x = relu(x)

# Additional non vgg layers

x = conv(x, 256, 3, "conv4_3_CPM", (weight_decay, 0))

x = relu(x)

x = conv(x, 128, 3, "conv4_4_CPM", (weight_decay, 0))

x = relu(x)

return x

def stage1_block(x, num_p, branch, weight_decay):

# Block 1

x = conv(x, 128, 3, "Mconv1_stage1_L%d" % branch, (weight_decay, 0))

x = relu(x)

x = conv(x, 128, 3, "Mconv2_stage1_L%d" % branch, (weight_decay, 0))

x = relu(x)

x = conv(x, 128, 3, "Mconv3_stage1_L%d" % branch, (weight_decay, 0))

x = relu(x)

x = conv(x, 512, 1, "Mconv4_stage1_L%d" % branch, (weight_decay, 0))

x = relu(x)

x = conv(x, num_p, 1, "Mconv5_stage1_L%d" % branch, (weight_decay, 0))

return x

def stageT_block(x, num_p, stage, branch, weight_decay):

# Block 1

x = conv(x, 128, 7, "Mconv1_stage%d_L%d" % (stage, branch), (weight_decay, 0))

x = relu(x)

x = conv(x, 128, 7, "Mconv2_stage%d_L%d" % (stage, branch), (weight_decay, 0))

x = relu(x)

x = conv(x, 128, 7, "Mconv3_stage%d_L%d" % (stage, branch), (weight_decay, 0))

x = relu(x)

x = conv(x, 128, 7, "Mconv4_stage%d_L%d" % (stage, branch), (weight_decay, 0))

x = relu(x)

x = conv(x, 128, 7, "Mconv5_stage%d_L%d" % (stage, branch), (weight_decay, 0))

x = relu(x)

x = conv(x, 128, 1, "Mconv6_stage%d_L%d" % (stage, branch), (weight_decay, 0))

x = relu(x)

x = conv(x, num_p, 1, "Mconv7_stage%d_L%d" % (stage, branch), (weight_decay, 0))

return x

def apply_mask(x, mask1, mask2, num_p, stage, branch):

w_name = "weight_stage%d_L%d" % (stage, branch)

if num_p == 38:

w = Multiply(name=w_name)([x, mask1]) # vec_weight

else:

w = Multiply(name=w_name)([x, mask2]) # vec_heat

return w

def get_training_model(weight_decay):

stages = 6

np_branch1 = 38

np_branch2 = 19

img_input_shape = (None, None, 3)

vec_input_shape = (None, None, 38)

heat_input_shape = (None, None, 19)

inputs = []

outputs = []

img_input = Input(shape=img_input_shape)

vec_weight_input = Input(shape=vec_input_shape)

heat_weight_input = Input(shape=heat_input_shape)

inputs.append(img_input)

inputs.append(vec_weight_input)

inputs.append(heat_weight_input)

img_normalized = Lambda(lambda x: x / 256 - 0.5)(img_input) # [-0.5, 0.5]

# VGG

stage0_out = vgg_block(img_normalized, weight_decay)

# stage 1 - branch 1 (PAF)

stage1_branch1_out = stage1_block(stage0_out, np_branch1, 1, weight_decay)

w1 = apply_mask(stage1_branch1_out, vec_weight_input, heat_weight_input, np_branch1, 1, 1)

# stage 1 - branch 2 (confidence maps)

stage1_branch2_out = stage1_block(stage0_out, np_branch2, 2, weight_decay)

w2 = apply_mask(stage1_branch2_out, vec_weight_input, heat_weight_input, np_branch2, 1, 2)

x = Concatenate()([stage1_branch1_out, stage1_branch2_out, stage0_out])

outputs.append(w1)

outputs.append(w2)

# stage sn >= 2

for sn in range(2, stages + 1):

# stage SN - branch 1 (PAF)

stageT_branch1_out = stageT_block(x, np_branch1, sn, 1, weight_decay)

w1 = apply_mask(stageT_branch1_out, vec_weight_input, heat_weight_input, np_branch1, sn, 1)

# stage SN - branch 2 (confidence maps)

stageT_branch2_out = stageT_block(x, np_branch2, sn, 2, weight_decay)

w2 = apply_mask(stageT_branch2_out, vec_weight_input, heat_weight_input, np_branch2, sn, 2)

outputs.append(w1)

outputs.append(w2)

if (sn < stages):

x = Concatenate()([stageT_branch1_out, stageT_branch2_out, stage0_out])

model = Model(inputs=inputs, outputs=outputs)

return model

def get_testing_model():

stages = 6

np_branch1 = 38

np_branch2 = 19

img_input_shape = (None, None, 3)

img_input = Input(shape=img_input_shape)

img_normalized = Lambda(lambda x: x / 256 - 0.5)(img_input) # [-0.5, 0.5]

# VGG

stage0_out = vgg_block(img_normalized, None)

# stage 1 - branch 1 (PAF)

stage1_branch1_out = stage1_block(stage0_out, np_branch1, 1, None)

# stage 1 - branch 2 (confidence maps)

stage1_branch2_out = stage1_block(stage0_out, np_branch2, 2, None)

x = Concatenate()([stage1_branch1_out, stage1_branch2_out, stage0_out])

# stage t >= 2

stageT_branch1_out = None

stageT_branch2_out = None

for sn in range(2, stages + 1):

stageT_branch1_out = stageT_block(x, np_branch1, sn, 1, None)

stageT_branch2_out = stageT_block(x, np_branch2, sn, 2, None)

if (sn < stages):

x = Concatenate()([stageT_branch1_out, stageT_branch2_out, stage0_out])

model = Model(inputs=[img_input], outputs=[stageT_branch1_out, stageT_branch2_out])

return model

检测结果:

6464

6464

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?