This article skips the formula derivations and gives only the code with the explanations it needs.

1. Create a class

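import numpy as np

# The following packages are for handling the data and checking accuracy; their usage is not shown here.
from sklearn.model_selection import train_test_split  # split the dataset
from sklearn.preprocessing import StandardScaler  # per-feature standardization: removes the mean and scales to unit variance
from sklearn.metrics import accuracy_score  # fraction of samples classified correctly; not the same as precision

class LogisticRegression(object):
    def __init__(self, n_iter=5000, eta=0.01, diff=None):
        # Number of gradient-descent iterations
        self.n_iter = n_iter
        # Learning rate
        self.eta = eta
        # Threshold for early stopping
        self.diff = diff
        # Parameter vector w, the end result of training
        self.w = None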

2. Train Part

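    # Training
    def _Fit(self, X_train, Y_train):
        """
        Preprocess the data first.
        X_train is the training set with one sample per row; a column of ones
        is added on its left side.
        (This folds the bias into the parameter vector w. If that is unfamiliar,
        it is worth reviewing the background.)
        """
        X_train = self._X_preprocess(X_train)  # method ①
        # Initialize w according to the number of features in X
        _, n = X_train.shape
        self.w = np.random.random(n) * 0.05  # random initialization; shape is (n,)
        # Fit the model with gradient descent
        self._gradient_descent(self.w, Y_train, X_train)  # method ②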

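    # Supplementary methods
    # Method ①
    def _X_preprocess(self, Xtrain):
        # Data preprocessing
        m, n = Xtrain.shape
        # Allocate a matrix with one extra column
        new_Train_X = np.empty((m, n + 1))
        # Fill the first column with ones
        new_Train_X[:, 0] = 1
        # Fill the remaining columns with the original data
        new_Train_X[:, 1:] = Xtrain
        return new_Train_X  # return the new dataset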
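To make the purpose of the extra column concrete, here is a minimal sketch. The helper `add_bias_column` is a standalone stand-in for `_X_preprocess`, and the bias `b` and weights `v` are made-up values for illustration. It shows that multiplying the augmented matrix by a single vector `w = [b, v...]` gives the same result as computing `X @ v + b` separately.

```python
import numpy as np

def add_bias_column(X):
    """Prepend a column of ones (standalone stand-in for _X_preprocess)."""
    m, n = X.shape
    X_aug = np.empty((m, n + 1))
    X_aug[:, 0] = 1
    X_aug[:, 1:] = X
    return X_aug

X = np.array([[2.0, 3.0],
              [4.0, 5.0]])
b = 0.5                        # hypothetical bias
v = np.array([1.0, -1.0])      # hypothetical feature weights
w = np.concatenate(([b], v))   # bias stored as w[0]

z_separate = X @ v + b                 # bias handled as a separate term
z_folded = add_bias_column(X) @ w      # bias folded into w
print(z_separate, z_folded)
```

Because the first column is always 1, `w[0]` is multiplied by 1 for every sample, which is exactly what a bias term does.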

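    # Method ②
    def _gradient_descent(self, w, Ytrain, Xtrain):
        # Number of labels, used when averaging the loss
        m = Ytrain.size
        # If a difference threshold for early stopping was given
        if self.diff is not None:
            loss_last = np.inf  # positive infinity, so the first comparison never triggers a stop
        # self.loss_list = []  # stores the losses for inspection; enable when needed
        for step in range(self.n_iter):
            # Compute y_hat
            y_hat = 1. / (1. + np.exp(-np.dot(Xtrain, self.w)))  # compute z, then pass it through the sigmoid
            # y_hat = self._predict_proba(Xtrain, self.w)
            # Compute the gradient
            dJ = np.dot(y_hat - Ytrain, Xtrain) / m
            # Compute the loss (log loss): the outer factor is -1/m (m = y.size), multiplied by the log-likelihood
            loss_new = -1 / m * np.sum(Ytrain * np.log(y_hat) + (1 - Ytrain)*np.log(1 - y_hat))
            # This is the original expression from the book's code; I could not see how it relates to the formula.
            # loss_new = -np.sum(np.log(y_hat * (2 * Ytrain - 1) + (1 - Ytrain))) / m
            # Record the loss
            # self.loss_list.append(loss_new)  # enable when needed
            # Show the latest loss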

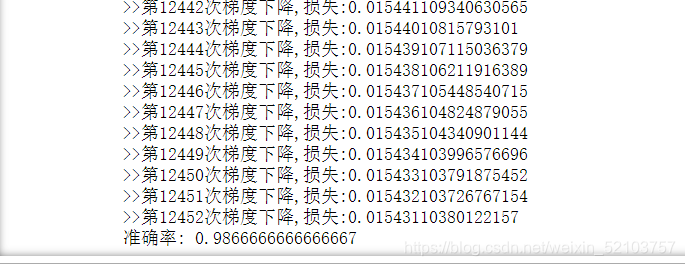

            print(f">> Gradient descent step {step+1}, loss: {loss_new}")
            # Stop iterating once the threshold is reached
            if self.diff is not None:
                if np.abs(loss_new - loss_last) <= self.diff:
                    break
                loss_last = loss_new
            # Update w
            self.w -= self.eta * dJ
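On the commented-out loss expression from the book: for labels restricted to 0 and 1, `y_hat * (2 * y - 1) + (1 - y)` evaluates to `y_hat` when `y = 1` and to `1 - y_hat` when `y = 0`, so its logarithm picks out the same term as the explicit formula. The sketch below checks this numerically on made-up labels and probabilities (the random values are illustrative only).

```python
import numpy as np

rng = np.random.default_rng(0)
y = rng.integers(0, 2, size=8)            # made-up labels in {0, 1}
y_hat = rng.uniform(0.05, 0.95, size=8)   # made-up predicted probabilities
m = y.size

# Explicit log loss, as used in _gradient_descent
loss_explicit = -1 / m * np.sum(y * np.log(y_hat) + (1 - y) * np.log(1 - y_hat))

# Compact form from the book: selects y_hat when y == 1, 1 - y_hat when y == 0
loss_book = -np.sum(np.log(y_hat * (2 * y - 1) + (1 - y))) / m

print(np.isclose(loss_explicit, loss_book))
```

The two forms only agree for hard 0/1 labels; with soft labels between 0 and 1 the compact form is no longer the cross-entropy.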

3. Predict Part

    def _Predict(self, X):
        # Preprocess
        X = self._X_preprocess(X)
        # Predicted probability that y = 1
        y_pred = 1/(1+np.exp(-np.dot(X, self.w)))
        # Predict the class from the probability
        return np.where(y_pred >= 0.5, 1, 0)
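One practical caveat with the sigmoid expression used in training and prediction: for a strongly negative `z`, `np.exp(-z)` overflows and NumPy emits a warning, and a probability of exactly 0 or 1 makes `np.log` in the loss return `-inf`. The following is an optional sketch of one common workaround and is not part of the original code; `stable_sigmoid`, `clipped_log_loss`, and the `eps` value are hypothetical names and choices.

```python
import numpy as np

def stable_sigmoid(z):
    """Sigmoid for 1-D or N-D arrays that only exponentiates non-positive numbers."""
    z = np.asarray(z, dtype=float)
    out = np.empty_like(z)
    pos = z >= 0
    out[pos] = 1.0 / (1.0 + np.exp(-z[pos]))   # -z <= 0 here, no overflow
    exp_z = np.exp(z[~pos])                    # z < 0 here, no overflow
    out[~pos] = exp_z / (1.0 + exp_z)
    return out

def clipped_log_loss(y, y_hat, eps=1e-12):
    """Log loss with probabilities clipped away from exactly 0 and 1."""
    y_hat = np.clip(y_hat, eps, 1 - eps)
    return -np.mean(y * np.log(y_hat) + (1 - y) * np.log(1 - y_hat))

z = np.array([-1000.0, 0.0, 1000.0])
p = stable_sigmoid(z)
loss = clipped_log_loss(np.array([0, 1, 1]), p)
print(p, loss)
```

Both branches compute the same mathematical function; the split only chooses the form whose exponent cannot overflow.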

Checking the accuracy (process omitted)

Averaged over 100 prediction runs, the accuracy was fairly high, so the code should be safe to use.
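Since the evaluation itself is omitted, here is a rough sketch of what such a check might look like. Everything in it is an assumption rather than the author's actual procedure: the synthetic dataset from `make_classification`, the 70/30 split, the reduced iteration count, and `QuietLogisticRegression`, which is a condensed copy of the class without the per-step print.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.metrics import accuracy_score

class QuietLogisticRegression:
    """Condensed, hypothetical copy of the article's class (no printing, no early stop)."""
    def __init__(self, n_iter=5000, eta=0.01):
        self.n_iter, self.eta, self.w = n_iter, eta, None

    @staticmethod
    def _add_ones(X):
        # Same effect as _X_preprocess: prepend a column of ones
        return np.hstack([np.ones((X.shape[0], 1)), X])

    def fit(self, X, y):
        X = self._add_ones(X)
        self.w = np.random.random(X.shape[1]) * 0.05
        for _ in range(self.n_iter):
            y_hat = 1.0 / (1.0 + np.exp(-X @ self.w))
            self.w -= self.eta * ((y_hat - y) @ X) / y.size
        return self

    def predict(self, X):
        proba = 1.0 / (1.0 + np.exp(-self._add_ones(X) @ self.w))
        return np.where(proba >= 0.5, 1, 0)

# Synthetic binary classification data (an assumption; the article's data is not given)
X, y = make_classification(n_samples=300, n_features=4, n_informative=3,
                           n_redundant=0, random_state=0)

scores = []
for seed in range(100):  # 100 different random splits
    X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=seed)
    scaler = StandardScaler().fit(X_tr)  # fit the scaler on training data only
    model = QuietLogisticRegression(n_iter=1000).fit(scaler.transform(X_tr), y_tr)
    scores.append(accuracy_score(y_te, model.predict(scaler.transform(X_te))))

print(f"mean accuracy over {len(scores)} splits: {np.mean(scores):.3f}")
```

The resulting number depends entirely on the dataset, so it says nothing about the accuracy the author observed.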

Full code

import numpy as np

class LogisticRegression(object):
    def __init__(self, n_iter=5000, eta=0.0005, diff=None):
        self.n_iter = n_iter
        self.eta = eta
        self.diff = diff
        self.w = None

    def _Fit(self, X_train, Y_train):
        X_train = self._X_preprocess(X_train)
        _, n = X_train.shape
        self.w = np.random.random(n) * 0.05
        self._gradient_descent(self.w, Y_train, X_train)

    def _X_preprocess(self, Xtrain):
        m, n = Xtrain.shape
        new_Train_X = np.empty((m, n + 1))
        new_Train_X[:, 0] = 1
        new_Train_X[:, 1:] = Xtrain
        return new_Train_X

    def _gradient_descent(self, w, Ytrain, Xtrain):
        m = Ytrain.size
        if self.diff is not None:
            loss_last = np.inf
        for step in range(self.n_iter):
            y_hat = 1. / (1. + np.exp(-np.dot(Xtrain, self.w)))
            dJ = np.dot(y_hat - Ytrain, Xtrain) / Ytrain.size
            loss_new = -1 / m * np.sum(Ytrain * np.log(y_hat) + (1 - Ytrain)*np.log(1 - y_hat))
            print(f">> Gradient descent step {step+1}, loss: {loss_new}")
            if self.diff is not None:
                if np.abs(loss_new - loss_last) <= self.diff:
                    break
                loss_last = loss_new
            self.w -= self.eta * dJ

    def _Predict(self, X):
        X = self._X_preprocess(X)
        y_pred = 1/(1+np.exp(-np.dot(X, self.w)))
        return np.where(y_pred >= 0.5, 1, 0)
