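- 🍨 This post is a learning-record blog from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊 | tutoring and custom projects available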

🏡 My environment:

- Language: Python 3.11.4

- Editor: Jupyter Notebook

- torch version: 2.0.1

I. Preparation

pip install gensim jieba

import jieba

import jieba.analyse

jieba.suggest_freq('沙瑞金',True)

jieba.suggest_freq('田国富',True)

jieba.suggest_freq('高育良',True)

jieba.suggest_freq('侯亮平',True)

jieba.suggest_freq('钟小艾',True)

jieba.suggest_freq('陈岩石',True)

jieba.suggest_freq('欧阳菁',True)

jieba.suggest_freq('易学习',True)

jieba.suggest_freq('王大路',True)

jieba.suggest_freq('蔡成功',True)

jieba.suggest_freq('孙连城',True)

jieba.suggest_freq('季昌明',True)

jieba.suggest_freq('丁义珍',True)

jieba.suggest_freq('郑西坡',True)

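# Assumes the text file is UTF-8 encoded
with open('/Users/wendyweng/Desktop/in_the_name_of_people.txt', encoding='utf-8') as f:
    # Segment each line of the novel into a list of words
    result_cut = []
    lines = f.readlines()
    for line in lines:
        result_cut.append(list(jieba.cut(line)))
# No explicit f.close() is needed: the with block closes the file

# Punctuation and whitespace tokens to drop after segmentation
stopwords_list = [",","。","\n","\u3000",":","!","?","……"]

def remove_stopwords(ls):
    return [word for word in ls if word not in stopwords_list]

# Filter every line and keep only the lines that still contain words
result_stop = [remove_stopwords(x) for x in result_cut if remove_stopwords(x)]

print(result_stop[100:103])

II. Training the model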

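from gensim.models import Word2Vec

model = Word2Vec(result_stop,      # tokenized sentences
                 vector_size=100,  # dimensionality of the word vectors
                 window=5,         # maximum distance between a word and its context words
                 min_count=1)      # keep every word, even those that appear only once

III. Applying the model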

1. Computing word similarity

print(model.wv.similarity('沙瑞金','季昌明'))

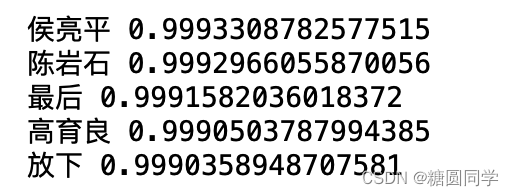
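The value returned by `model.wv.similarity` is the cosine similarity between the two word vectors. As a rough illustration of that computation, here is a minimal pure-Python sketch; the function name `cosine_similarity` and the three-dimensional vectors are made up for this example and are not taken from the trained model.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hypothetical vectors, invented for illustration only
vec_a = [1.0, 2.0, 3.0]
vec_b = [2.0, 4.0, 6.0]   # points in the same direction as vec_a
vec_c = [3.0, 0.0, -1.0]  # orthogonal to vec_a (dot product is 0)

print(cosine_similarity(vec_a, vec_b))  # close to 1: same direction
print(cosine_similarity(vec_a, vec_c))  # close to 0: unrelated directions
```

Scores near 1 mean the two vectors point in nearly the same direction, which is how the similarity scores for the character names should be read.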

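print(model.wv.similarity('沙瑞金','田国富'))

# The five words most similar to '沙瑞金', with their similarity scores
for e in model.wv.most_similar(positive=['沙瑞金'],topn=5):
    print(e[0],e[1])

2. Finding the word that does not match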

odd_word=model.wv.doesnt_match(["苹果","香蕉","橙子","书"])

print(f"Word that does not match the others: {odd_word}")

3. Looking up word frequency

word_frequency= model.wv.get_vecattr("沙瑞金","count")

print(f"沙瑞金:{word_frequency}")
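The `count` attribute is expected to reflect how often the word occurred in the training corpus. One way to sanity-check it is to count tokens directly with `collections.Counter`. The sketch below uses a hypothetical `toy_sentences` list standing in for `result_stop`, so the numbers are illustrative only.

```python
from collections import Counter

# Hypothetical tokenized sentences standing in for result_stop
toy_sentences = [
    ["沙瑞金", "看", "了", "看", "文件"],
    ["高育良", "和", "沙瑞金", "谈话"],
]

# Flatten the sentences and count every token
word_counts = Counter(word for sentence in toy_sentences for word in sentence)

print(word_counts["沙瑞金"])
print(word_counts.most_common(3))
```

Running the same counting over the real `result_stop` should give a figure comparable to the one reported by `get_vecattr`.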

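IV. Summary

word2vec is an efficient model for training word vectors, proposed in 2013 by Tomas Mikolov and colleagues at Google in two papers: "Efficient Estimation of Word Representations in Vector Space" and its follow-up, "Distributed Representations of Words and Phrases and their Compositionality". Its starting point is that two words appearing in similar contexts should also have similar word vectors. For example, "banana" and "pear" tend to appear in the same contexts within sentences, so their vectors should be fairly close.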

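An important concept in the word2vec model is the context of a word, that is, the words that surround it within a window of fixed size (the `window` parameter passed to `Word2Vec`).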
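To make the idea of context concrete, the following sketch lists the (center word, context word) pairs that a fixed window produces for one sentence. It is a simplified illustration rather than gensim's actual training loop; the helper `context_pairs` and the toy sentence are invented for this example.

```python
def context_pairs(tokens, window):
    """Return (center, context) pairs for tokens at most `window` positions apart."""
    pairs = []
    for i, center in enumerate(tokens):
        start = max(0, i - window)
        end = min(len(tokens), i + window + 1)
        for j in range(start, end):
            if j != i:  # a word is not its own context
                pairs.append((center, tokens[j]))
    return pairs

# Hypothetical toy sentence, already tokenized
toy_sentence = ["I", "like", "eating", "bananas", "daily"]
pairs = context_pairs(toy_sentence, window=1)

for center, context in pairs:
    print(center, "->", context)
```

With `window=1` each word is paired only with its immediate neighbours. A larger window, such as the `window=5` used when training above, pairs each word with more distant neighbours as well.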
