PettingZoo: A Standard API for Multi-Agent Reinforcement Learning

PettingZoo:多智能体强化学习的标准API

目录

2 Background and Related Works

2.1 Partially Observable Stochastic Games and RLlib

2.2 OpenSpiel and Extensive Form Games

2.2 OpenSpiel和Extensive Form游戏

4 Case Studies of Problems With The POSG Model in MARL

4.1 POSGs Don’t Allow Access To Information You Should Have

4.2 POSGs Based APIs Are Not Conceptually Clear For Games Implemented In Code

4.2 基于posg的api对于代码实现的游戏在概念上并不清晰

5 The Agent Environment Cycle Games Model

6.5 Environment Creation and the Parallel API

Abstract 摘要

This paper introduces the PettingZoo library and the accompanying Agent Environment Cycle (“AEC”) games model. PettingZoo is a library of diverse sets of multi-agent environments with a universal, elegant Python API. PettingZoo was developed with the goal of accelerating research in Multi-Agent Reinforcement Learning (“MARL”), by making work more interchangeable, accessible and reproducible akin to what OpenAI’s Gym library did for single-agent reinforcement learning. PettingZoo’s API, while inheriting many features of Gym, is unique amongst MARL APIs in that it’s based around the novel AEC games model. We argue, in part through case studies on major problems in popular MARL environments, that the popular game models are poor conceptual models of games commonly used in MARL and accordingly can promote confusing bugs that are hard to detect, and that the AEC games model addresses these problems.

本文介绍了PettingZoo库及其附带的Agent Environment Cycle(“AEC”)博弈模型。PettingZoo是一个包含多种多代理环境集的库,具有通用的、优雅的Python API。PettingZoo的开发目标是加速多智能体强化学习(“MARL”)的研究,通过使工作更具互换性、可访问性和可重复性,类似于OpenAI的Gym库为单智能体强化学习所做的工作。PettingZoo的API虽然继承了Gym的许多功能,但在MARL API中是独一无二的,因为它基于新颖的AEC游戏模型。我们认为,在某种程度上,通过对流行MARL环境中主要问题的案例研究,流行的游戏模型是MARL中常用的游戏的糟糕概念模型,因此可能导致难以检测的混淆错误,而AEC游戏模型解决了这些问题。

1 Introduction

1 介绍

Multi-Agent Reinforcement Learning (MARL) has been behind many of the most publicized achievements of modern machine learning — AlphaGo Zero [Silver et al, 2017], OpenAI Five [OpenAI, 2018], AlphaStar [Vinyals et al, 2019]. These achievements motivated a boom in MARL research, with Google Scholar indexing 9,480 new papers discussing multi-agent reinforcement learning in 2020 alone. Despite this boom, conducting research in MARL remains a significant engineering challenge. A large part of this is because, unlike single agent reinforcement learning which has OpenAI’s Gym, no de facto standard API exists in MARL for how agents interface with environments.

多智能体强化学习(MARL)是现代机器学习中许多最广为人知的成就的背后——AlphaGo Zero [Silver等人,2017],OpenAI Five [OpenAI, 2018], AlphaStar [Vinyals等人,2019]。这些成就推动了MARL研究的繁荣,仅在2020年,Google Scholar就索引了9480篇讨论多智能体强化学习的新论文。尽管如此,在MARL中进行研究仍然是一个重大的工程挑战。这在很大程度上是因为,与OpenAI的Gym的单代理强化学习不同,MARL中没有关于代理如何与环境交互的事实上的标准API。

This makes the reuse of existing learning code for new purposes require substantial effort, consuming researchers’ time and preventing more thorough comparisons in research. This lack of a standardized API has also prevented the proliferation of learning libraries in MARL. While a massive number of Gym-based single-agent reinforcement learning libraries or code bases exist (as a rough measure 669 pip-installable packages depend on it at the time of writing GitHub [2021]), only 5 MARL libraries with large user bases exist [Lanctot et al, 2019, Weng et al, 2020, Liang et al, 2018, Samvelyan et al, 2019, Nota, 2020]. The proliferation of these Gym based learning libraries has proved essential to the adoption of applied RL in fields like robotics or finance and without them the growth of applied MARL is a significantly greater challenge. Motivated by this, this paper introduces the PettingZoo library and API, which was created with the goal of making research in MARL more accessible and serving as a multi-agent version of Gym.

这使得为了新的目的重用现有的学习代码需要大量的努力,消耗了研究人员的时间,并阻碍了研究中更彻底的比较。缺乏标准化的API也阻碍了MARL中学习库的扩展。虽然存在大量基于gym的单智能体强化学习库或代码库(在编写GitHub时,粗略估计有669个可安装包依赖于它[2021]),但只有5个具有大量用户基础的MARL库存在[Lanctot等人,2019,Weng等人,2020,Liang等人,2018,Samvelyan等人,2019,Nota, 2020]。事实证明,这些基于Gym的学习库的激增对于在机器人或金融等领域采用应用强化学习至关重要,没有它们,应用强化学习的增长将是一个更大的挑战。受此启发,本文介绍了PettingZoo库和API,其创建的目的是使MARL的研究更容易访问,并作为Gym的多智能体版本。

Prior to PettingZoo, the numerous single-use MARL APIs almost exclusively inherited their design from the two most prominent mathematical models of games in the MARL literature—Partially Observable Stochastic Games (“POSGs”) and Extensive Form Games (“EFGs”). During our development, we discovered that these common models of games are not conceptually clear for multi-agent games implemented in code and cannot form the basis of APIs that cleanly handle all types of multi-agent environments.

在PettingZoo之前,许多一次性使用的MARL api几乎完全继承了MARL文献中两个最突出的游戏数学模型——部分可观察随机游戏(POSGs)和广泛形式游戏(EFGs)。在我们的开发过程中,我们发现这些常见的游戏模型对于用代码执行的多智能体游戏在概念上并不清晰,并且不能形成清晰地处理所有类型的多智能体环境的api基础.

To solve this, we introduce a new formal model of games, Agent Environment Cycle (“AEC”) games that serves as the basis of the PettingZoo API. We argue that this model is a better conceptual fit for games implemented in code. and is uniquely suitable for general MARL APIs. We then prove that any AEC game can be represented by the standard POSG model, and that any POSG can be represented by an AEC game. To illustrate the importance of the AEC games model, this paper further covers two case studies of meaningful bugs in popular MARL implementations. In both cases, these bugs went unnoticed for a long time. Both stemmed from using confusing models of games, and would have been made impossible by using an AEC games based API.

为了解决这个问题,我们引入了一个新的正式游戏模型,Agent Environment Cycle(“AEC”)游戏,作为PettingZoo API的基础。我们认为这种模式更适合用代码实现的游戏。并且只适用于一般的MARL api。然后,我们证明了任何AEC博弈都可以用标准POSG模型表示,并且任何POSG都可以用AEC博弈表示。为了说明AEC游戏模型的重要性,本文进一步介绍了流行的MARL实现中有意义的bug的两个案例研究。在这两种情况下,这些漏洞在很长一段时间内都没有被注意到。两者都源于使用令人困惑的游戏模型,如果使用基于AEC游戏的API,就不可能做到这一点。

The PettingZoo library can be installed via pip install pettingzoo, the documentation is available at https://www.pettingzoo.ml, and the repository is available at https://github.com/ Farama-Foundation/PettingZoo.

PettingZoo库可以通过pip install PettingZoo安装,文档可在https://www.pettingzoo.ml获得,存储库可在https://github.com/ Farama-Foundation/PettingZoo获得。

2 Background and Related Works

2 背景及相关工作

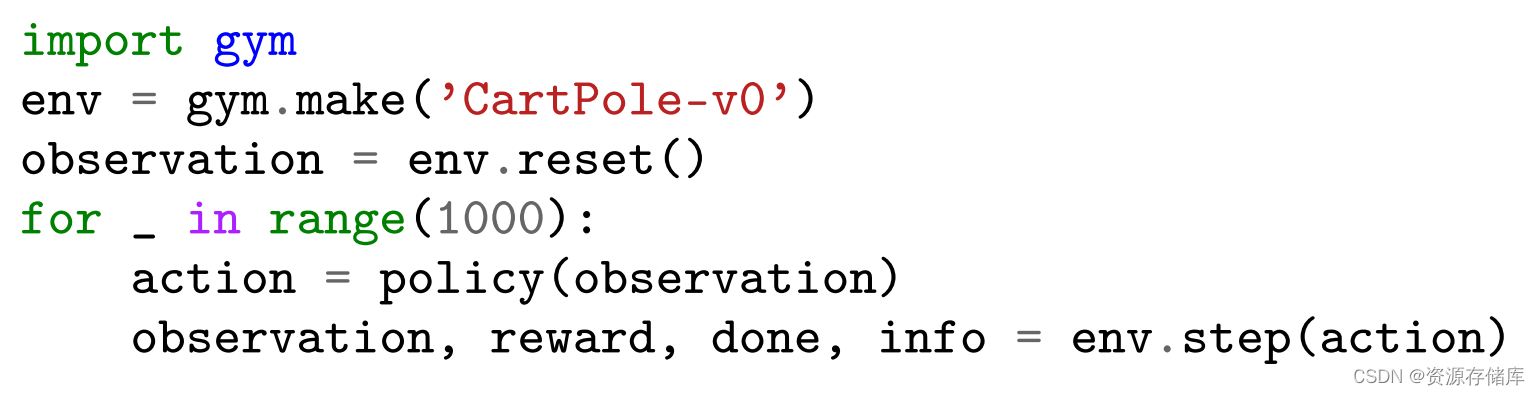

Here we briefly survey the state of modeling and APIs in MARL, beginning by briefly looking at Gym’s API (Figure 1). This API is the de facto standard in single agent reinforcement learning, has largely served as the basis for subsequent multi-agent APIs, and will be compared to later.

在这里,我们简要地调查了MARL中的建模和API的状态,首先简要地看看Gym的API(图1)。这个API是单智能体强化学习的事实上的标准,在很大程度上是后续多智能体API的基础,并将在后面进行比较。

Figure 1: An example of the basic usage of Gym

图1:Gym的基本用法示例

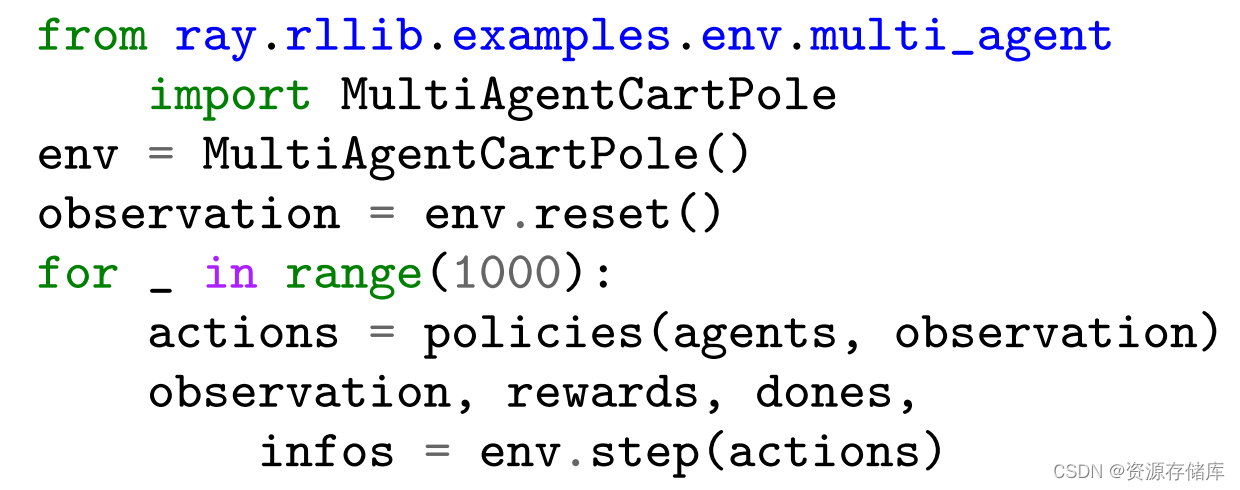

Figure 2: An example of the basic usage of RLlib

图2:RLlib基本用法的一个示例

The Gym API is a fairly straightforward Python API that borrows from the POMDP conceptualization of RL. The API’s simplicity and conceptual clarity has made it highly influential, and it naturally accompanying the pervasive POMDP model that’s used as the pervasive mental and mathematical model of reinforcement learning [Brockman et al, 2016]. This makes it easier for anyone with an understanding of the RL framework to understand Gym’s API in full.

Gym API是一个相当简单的Python API,它借鉴了RL的POMDP概念。API的简单性和概念的清晰性使其具有很高的影响力,并且它自然地伴随着普遍的POMDP模型,该模型被用作强化学习的普遍心理和数学模型[Brockman等人,2016]。这使得任何了解RL框架的人都更容易完整地理解Gym的API。

2.1 Partially Observable Stochastic Games and RLlib

2.1部分可观察随机对策与RLlib

Multi-agent reinforcement learning does not have a universal mental and mathematical model like the POMDP model in single-agent reinforcement learning. One of the most popular models is the partially observable stochastic game (“POSG”). This model is very similar to, and strictly more general than, multi-agent MDPs [Boutilier, 1996], Dec-POMDPs [Bernstein et al, 2002], and Stochastic (“Markov”) games [Shapley, 1953]). In a POSG, all agents step together, observe together, and are rewarded together. The full formal definition is presented in Appendix C.1

多智能体强化学习不像单智能体强化学习中的POMDP模型那样具有通用的心理和数学模型。最流行的模型之一是部分可观察随机博弈(POSG)。该模型与多智能体mdp [Boutilier, 1996]、dec - pomdp [Bernstein et al ., 2002]和随机(“马尔可夫”)博弈[Shapley, 1953]非常相似,而且更普遍。在POSG中,所有代理一起行动,一起观察,一起获得奖励。完整的正式定义见附录C.1

This model of simultaneous stepping naturally translates into Gym-like APIs, where the actions, observations, rewards, and so on are lists or dictionaries of individual values for agents. This design choice has become the standard for MARL outside of strictly turn-based games like poker, where simultaneous stepping would be a poor conceptual fit [Lowe et al, 2017, Zheng et al, 2017, Gupta et al, 2017, Liu et al, 2019, Liang et al, 2018, Weng et al, 2020]. One example of this is shown in Figure 2 with the multi-agent API in RLlib [Liang et al, 2018], where agent-keyed dictionaries of actions, observations and rewards are passed in a simple extension of the Gym API.

这种同步步进模型自然地转化为类似gym的api,其中的动作、观察、奖励等是代理的单个值的列表或字典。这种设计选择已经成为严格基于回合制的游戏(如扑克)之外的MARL标准,在这些游戏中,同步步进的概念契合度很差[Lowe等人,2017,Zheng等人,2017,Gupta等人,2017,Liu等人,2019,Liang等人,2018,Weng等人,2020]。图2中展示了RLlib中的多智能体API [Liang等人,2018],其中通过Gym API的简单扩展传递动作、观察和奖励的智能体关键字典。

This model has made it much easier to apply single agent RL methods to multi-agent settings.

However, there are two immediate problems with this model:

该模型使得将单代理强化学习方法应用于多代理设置变得容易得多。

然而,这种模式存在两个直接的问题:

1. Supporting strictly turn-based games like chess requires constantly passing dummy actions for non-acting agents (or using similar tricks).

2. Changing the number of agents for agent death or creation is very awkward, as learning code has to cope with lists suddenly changing sizes.

1. 支持严格的回合制游戏,如国际象棋,需要不断地向不行动的代理传递虚拟动作(或使用类似的技巧)。

2. 更改代理死亡或创建的代理数量是非常尴尬的,因为学习代码必须处理列表突然变化的大小。

PettingZoo是一个针对多智能体强化学习的库,提供了一种通用且优雅的Python API。它旨在加速MARL研究,类似于Gym在单智能体强化学习中的作用。PettingZoo的API基于Agent Environment Cycle (AEC) 模型,解决了部分可观察随机游戏(POSG)模型在多智能体环境中的局限性。PettingZoo包含多个多智能体环境,已被多个学习库和课程采用,有望成为多智能体强化学习的标准化工具。

PettingZoo是一个针对多智能体强化学习的库,提供了一种通用且优雅的Python API。它旨在加速MARL研究,类似于Gym在单智能体强化学习中的作用。PettingZoo的API基于Agent Environment Cycle (AEC) 模型,解决了部分可观察随机游戏(POSG)模型在多智能体环境中的局限性。PettingZoo包含多个多智能体环境,已被多个学习库和课程采用,有望成为多智能体强化学习的标准化工具。

最低0.47元/天 解锁文章

最低0.47元/天 解锁文章

496

496

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?