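Paper title:

Going deeper with convolutions

I. Network Architecture in Detail

Highlights of the network:

1. It introduces the Inception module, which fuses feature information at different scales.

2. It uses 1x1 convolutions for dimensionality reduction and projection.

3. It adds two auxiliary classifiers to help training.

AlexNet and VGG each have a single output layer, whereas GoogLeNet has three (two of them are auxiliary classifiers).

In the figure, the red circles mark the auxiliary classifiers and the black circle marks the main classifier.

4. It drops the fully connected layers in favor of an average pooling layer, which greatly reduces the number of model parameters.

Network architecture diagram: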

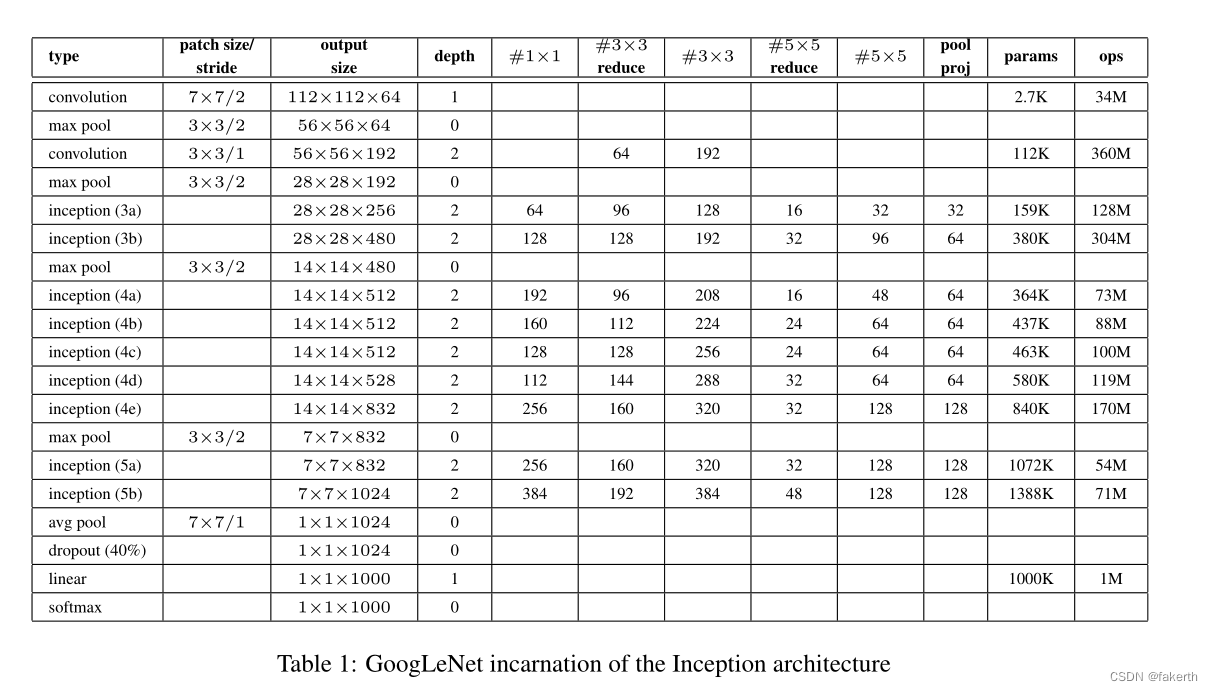

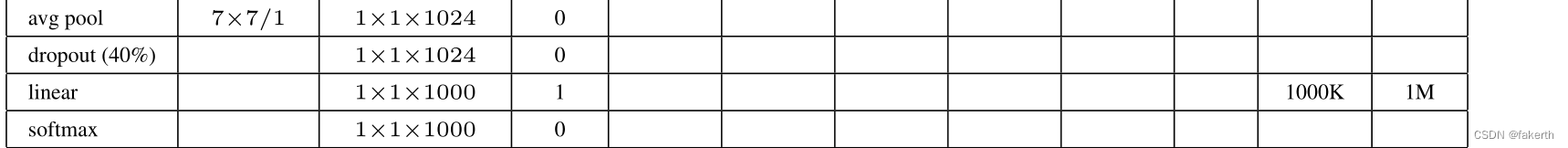

The GoogLeNet architecture in table form:

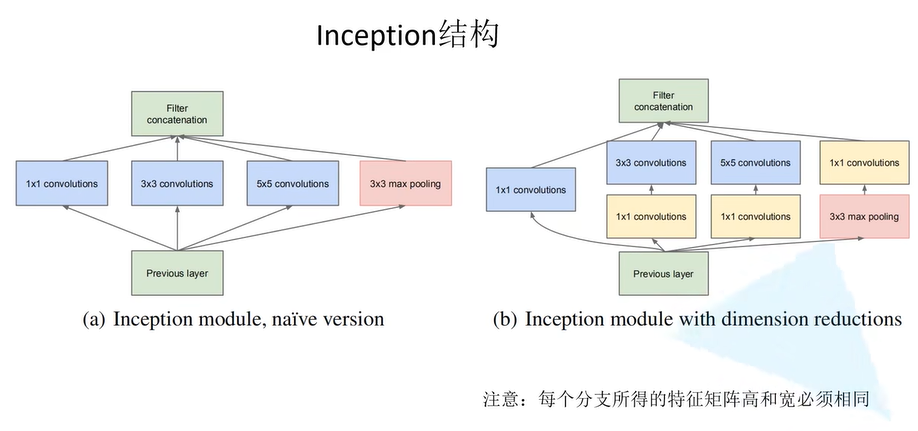

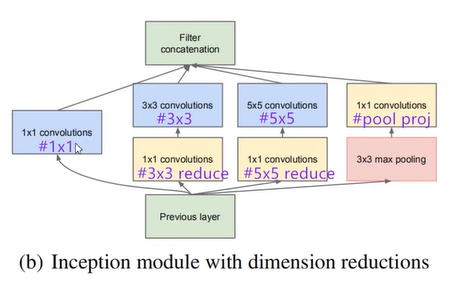

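Inception module

Figure (a):

The basic Inception module has four components: a 1x1 convolution, a 3x3 convolution, a 5x5 convolution, and a 3x3 max pooling.

The output of the previous layer is passed through the 1x1 convolution, the 3x3 convolution, the 5x5 convolution, and the 3x3 max pooling in parallel, and the four results are concatenated along the depth (channel) dimension.

This is the core idea of the naive Inception module (figure (a) above): kernels of different sizes perceive the input at different scales, and fusing their outputs gives a better representation of the image. Note that **the feature maps produced by every branch must have the same height and width.** The naive Inception module, however, has two serious problems:

1. Every convolution is applied directly to the output of the previous layer, so the convolutions are computationally expensive.

2. The max pooling branch preserves the depth of its input, so after concatenation the depth of the output can only grow, which increases the computation in everything that follows the module.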
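The second problem can be made concrete with a few lines of plain Python. This is only a sketch: `naive_output_depth` is a hypothetical helper, and the branch widths (64, 128, 32) and the starting depth of 192 are assumed values chosen for illustration, applied to a naive module with no 1x1 projections.

```python
def naive_output_depth(in_channels, ch1x1, ch3x3, ch5x5):
    """Depth after concatenating the four branches of a naive Inception module.

    The pooling branch has no learnable projection, so it passes all
    in_channels through unchanged and they are added to the conv branches.
    """
    return ch1x1 + ch3x3 + ch5x5 + in_channels


# Stack three naive modules with the same (assumed) branch widths.
depths = [192]
for _ in range(3):
    depths.append(naive_output_depth(depths[-1], 64, 128, 32))

print(depths)
```

Because the pooled input is always carried along, every stacked module is deeper than the one before it, no matter how narrow the convolution branches are.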

Figure (b):

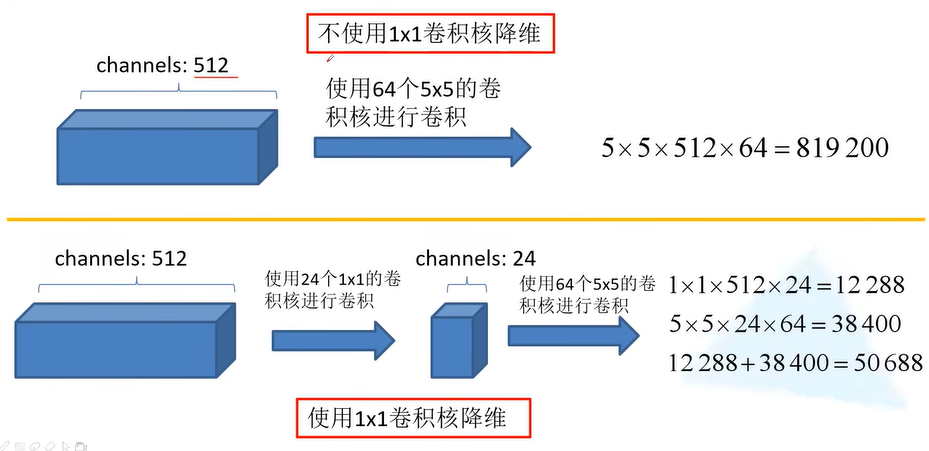

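Here the main purpose of the 1x1 convolutions is to compress the channel dimension and reduce the number of parameters (figure (b) above), which lets the network grow deeper and wider and extract better features. This idea is also known as pointwise convolution (PW for short).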

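As a quick calculation, suppose the input feature map has 512 channels and is convolved with 64 kernels of size 5x5. Without a 1x1 reduction this takes 512x64x5x5 = 819200 parameters. If 24 1x1 kernels first reduce the input to 24 channels, and the result is then convolved with the same 64 kernels of size 5x5, it takes 512x24x1x1 + 24x64x5x5 = 50688 parameters. The parameter count drops markedly.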
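The arithmetic above can be checked with a short sketch. `conv_weights` is a hypothetical helper that counts only kernel weights (biases are ignored, as in the calculation above), with 64 kernels of size 5x5 in both variants.

```python
def conv_weights(in_channels, out_channels, kernel_size):
    """Number of kernel weights in a square 2D convolution (bias ignored)."""
    return in_channels * out_channels * kernel_size * kernel_size


# Direct 5x5 convolution on a 512-channel input.
direct = conv_weights(512, 64, 5)

# 1x1 reduction to 24 channels first, then the 5x5 convolution.
reduced = conv_weights(512, 24, 1) + conv_weights(24, 64, 5)

print("direct :", direct)
print("reduced:", reduced)
print("ratio  :", round(direct / reduced, 1))
```

The saving comes almost entirely from the 5x5 layer seeing 24 input channels instead of 512; the 1x1 layer itself is cheap.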

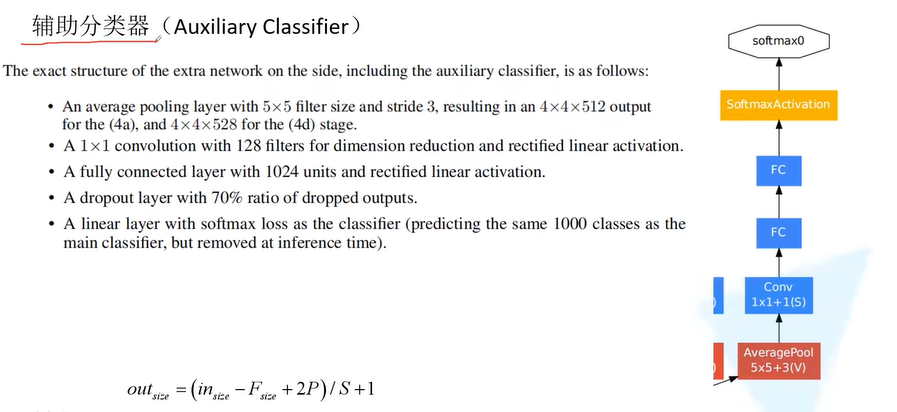

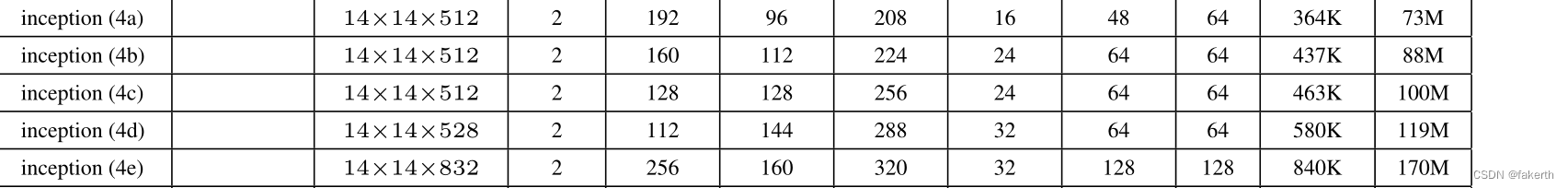

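Auxiliary classifiers:

Each one has the following structure:

1. Average pooling layer

The window is 5×5 with stride=3. The first auxiliary classifier takes its input from Inception(4a) and the second from Inception(4d). According to the table, Inception(4a) outputs 14×14×512 and Inception(4d) outputs 14×14×528, so after average pooling the feature maps are 4×4×512 for (4a) and 4×4×528 for (4d).

2. Convolutional layer

128 kernels of size 1×1 (dimensionality reduction), followed by ReLU.

3. Fully connected layer

A fully connected layer with 1024 units, again followed by ReLU.

4. Dropout

Dropout that randomly deactivates 70% of the neurons.

5. Softmax

A linear layer with softmax outputs the 1000 class predictions.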
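The shapes that flow through an auxiliary classifier can be traced with plain arithmetic. This is a sketch under the sizes stated above; `pool_out` and `aux_shapes` are hypothetical helper names, and the pooling uses no padding and floor rounding.

```python
def pool_out(size, kernel, stride):
    """Output side length of a pooling window with no padding (floor rounding)."""
    return (size - kernel) // stride + 1


def aux_shapes(in_channels, in_size=14, num_classes=1000):
    """Shapes after each stage of an auxiliary classifier, as (C, H, W) or a flat length."""
    side = pool_out(in_size, kernel=5, stride=3)   # 5x5 average pooling, stride 3
    after_pool = (in_channels, side, side)
    after_conv = (128, side, side)                 # 128 kernels of size 1x1
    flattened = 128 * side * side                  # input length of the 1024-unit layer
    return [after_pool, after_conv, flattened, 1024, num_classes]


print("aux1:", aux_shapes(512))
print("aux2:", aux_shapes(528))
```

Both classifiers flatten to the same length after the 1x1 convolution, which is why one fully connected layer definition can serve both inputs.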

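How should the parameters of an Inception module be read? They are annotated in the figure below.

The purple labels correspond to the column headers of the table.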

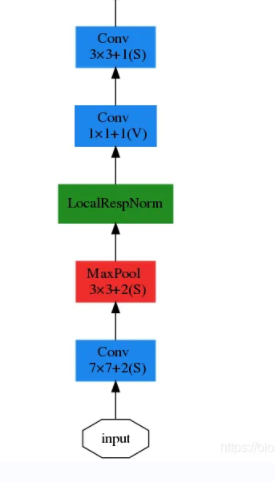

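GoogLeNet layer by layer:

1. Convolutional layer

The input image is 224x224x3. The kernel is 7x7 with stride 2 and padding 3, and there are 64 output channels. The output size is (224-7+3x2)/2+1 = 112.5, rounded down to 112, so the output is 112x112x64, followed by ReLU.

2. Max pooling layer

The window is 3x3 with stride 2. The output size is ((112-3)/2)+1 = 55.5, rounded up to 56, so the output is 56x56x64.

3. Two convolutional layers

First layer: 64 kernels of size 1x1 (the reduction before the 3x3 convolution) map the 56x56x64 input feature map to 56x56x64, followed by ReLU.

Second layer: a 3x3 convolution with stride 1, padding 1, and 192 output channels. The output size is (56-3+1x2)/1+1 = 56, so the output is 56x56x192, followed by ReLU.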


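4. Max pooling layer

The window is 3x3 with stride 2 and there are 192 output channels. The output size is ((56-3)/2)+1 = 27.5, rounded up to 28, so the output feature map is 28x28x192.

5. Inception(3a)

1. 64 kernels of size 1x1 give an output of 28x28x64, followed by ReLU.

2. 96 kernels of size 1x1 (the reduction before the 3x3 convolution) give 28x28x96, followed by ReLU; then 128 kernels of size 3x3 give 28x28x128.

3. 16 kernels of size 1x1 (the reduction before the 5x5 convolution) give 28x28x16, followed by ReLU; then 32 kernels of size 5x5 give 28x28x32.

4. Max pooling with a 3x3 window gives 28x28x192; then 32 kernels of size 1x1 give 28x28x32.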
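The four branch outputs of Inception(3a) are concatenated along the channel dimension. The sketch below adds up the output depth and, as a rough cost estimate, the kernel weights of the module; `inception_summary` is a hypothetical helper and biases are not counted.

```python
def inception_summary(in_ch, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):
    """Return (output depth, kernel weight count) of one Inception module."""
    out_depth = ch1x1 + ch3x3 + ch5x5 + pool_proj
    weights = (
        in_ch * ch1x1              # branch 1: 1x1
        + in_ch * ch3x3red         # branch 2: 1x1 reduction
        + ch3x3red * ch3x3 * 9     # branch 2: 3x3
        + in_ch * ch5x5red         # branch 3: 1x1 reduction
        + ch5x5red * ch5x5 * 25    # branch 3: 5x5
        + in_ch * pool_proj        # branch 4: 1x1 after pooling
    )
    return out_depth, weights


depth_3a, weights_3a = inception_summary(192, 64, 96, 128, 16, 32, 32)
print("Inception(3a) output: 28x28x%d, kernel weights: %d" % (depth_3a, weights_3a))
```

The output depth of 256 becomes the input depth of the next module.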

6. Inception(3b)


7. Max pooling layer


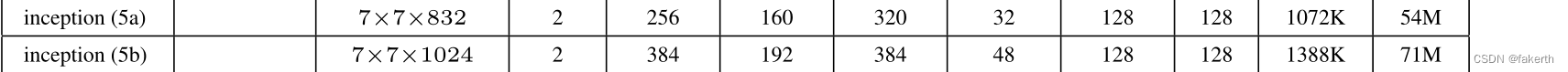

8. Inception(4a)(4b)(4c)(4d)(4e)


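9. Max pooling layer

10. Inception(5a)(5b)

11. Output layer

GoogLeNet applies an average pooling layer that yields a feature map whose height and width are both 1, then dropout that randomly deactivates 40% of the neurons; the output layer uses softmax as its activation.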
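The size calculations in the walkthrough all follow the same formula, (size - kernel + 2 x padding) / stride + 1, rounded down for the convolutions and up for the max pooling layers. The sketch below traces the spatial size through the layers that change it. `out_size` is a hypothetical helper, the layer names are taken from the code in part II, and the rounding is simplified: it ignores the extra boundary rule PyTorch applies in ceil mode, which does not trigger for these sizes.

```python
import math


def out_size(size, kernel, stride=1, padding=0, ceil_mode=False):
    """Output side length of a convolution or pooling layer."""
    raw = (size - kernel + 2 * padding) / stride + 1
    return math.ceil(raw) if ceil_mode else math.floor(raw)


# Inception modules keep height and width unchanged, so they are omitted.
LAYERS = [
    ("conv1", dict(kernel=7, stride=2, padding=3)),
    ("maxpool1", dict(kernel=3, stride=2, ceil_mode=True)),
    ("conv2", dict(kernel=1)),
    ("conv3", dict(kernel=3, padding=1)),
    ("maxpool2", dict(kernel=3, stride=2, ceil_mode=True)),
    ("maxpool3", dict(kernel=3, stride=2, ceil_mode=True)),
    ("maxpool4", dict(kernel=3, stride=2, ceil_mode=True)),
]

size = 224
trace = [size]
for name, kwargs in LAYERS:
    size = out_size(size, **kwargs)
    trace.append(size)
    print("%-9s -> %dx%d" % (name, size, size))
```

The final 7x7 map is what the global average pooling layer reduces to 1x1.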

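Comparison of model parameters between GoogLeNet and VGGNet:

The auxiliary classifiers are used only during training, not during inference.

The difference between inference and training:

A trained neural network can apply what it has learned to tasks in the digital world, such as recognizing images, spoken words, or blood diseases, or recommending the shoes someone is likely to buy next. This faster, more efficient version of the network "infers" results for new data based on what it was trained on; in AI terminology this is called inference.

That said, VGG is easier to build and is therefore used more often; for classification alone, GoogLeNet performs better.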

II. GoogLeNet Code Implementation

1. model.py

import torch.nn as nn
import torch
import torch.nn.functional as F


class GoogLeNet(nn.Module):
    # Arguments: number of classes, whether to build the auxiliary classifiers,
    # and whether to initialize the weights
    def __init__(self, num_classes=1000, aux_logits=True, init_weights=False):
        super(GoogLeNet, self).__init__()
        self.aux_logits = aux_logits

        # Input depth 3, 64 kernels of size 7: (224-7+2*3)/2+1 = 112.5,
        # which PyTorch rounds down to 112, so the output is 112*112
        self.conv1 = BasicConv2d(3, 64, kernel_size=7, stride=2, padding=3)
        # Pooling kernel 3: (112-3+0*2)/2+1 = 55.5, rounded up to 56, so the output is 56*56
        # ceil_mode=True rounds the output size up; False rounds it down
        self.maxpool1 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

        # Input depth 64 (kernels of the previous layer), 64 kernels of size 1:
        # (56-1+0*2)/1+1 = 56, so the output is 56*56
        self.conv2 = BasicConv2d(64, 64, kernel_size=1)
        # Input depth 64, 192 kernels of size 3: (56-3+1*2)/1+1 = 56, so the output is 56*56
        self.conv3 = BasicConv2d(64, 192, kernel_size=3, padding=1)
        # Pooling kernel 3: (56-3+0*2)/2+1 = 27.5, rounded up to 28, so the output is 28*28
        self.maxpool2 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

        # Inception modules (defined below): the input depth is the output depth of the
        # previous layer (192); the remaining arguments are read off the table
        self.inception3a = Inception(192, 64, 96, 128, 16, 32, 32)
        self.inception3b = Inception(256, 128, 128, 192, 32, 96, 64)
        # Pooling kernel 3: (28-3+0*2)/2+1 = 13.5, rounded up to 14, so the output is 14*14
        self.maxpool3 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

        self.inception4a = Inception(480, 192, 96, 208, 16, 48, 64)
        self.inception4b = Inception(512, 160, 112, 224, 24, 64, 64)
        self.inception4c = Inception(512, 128, 128, 256, 24, 64, 64)
        self.inception4d = Inception(512, 112, 144, 288, 32, 64, 64)
        self.inception4e = Inception(528, 256, 160, 320, 32, 128, 128)
        # Pooling kernel 3: (14-3+0*2)/2+1 = 6.5, rounded up to 7, so the output is 7*7
        self.maxpool4 = nn.MaxPool2d(3, stride=2, ceil_mode=True)

        self.inception5a = Inception(832, 256, 160, 320, 32, 128, 128)
        self.inception5b = Inception(832, 384, 192, 384, 48, 128, 128)

        # Build auxiliary classifiers 1 and 2 only when aux_logits is True
        if self.aux_logits:
            self.aux1 = InceptionAux(512, num_classes)
            self.aux2 = InceptionAux(528, num_classes)

        # Adaptive average pooling: yields a 1*1 feature map whatever the input size
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.dropout = nn.Dropout(0.4)
        # The flattened vector has 1024 elements; the output has num_classes elements
        self.fc = nn.Linear(1024, num_classes)
        if init_weights:
            self._initialize_weights()

    # Forward pass
    def forward(self, x):
        # N x 3 x 224 x 224
        x = self.conv1(x)
        # N x 64 x 112 x 112
        x = self.maxpool1(x)
        # N x 64 x 56 x 56
        x = self.conv2(x)
        # N x 64 x 56 x 56
        x = self.conv3(x)
        # N x 192 x 56 x 56
        x = self.maxpool2(x)

        # N x 192 x 28 x 28
        x = self.inception3a(x)
        # N x 256 x 28 x 28
        x = self.inception3b(x)
        # N x 480 x 28 x 28
        x = self.maxpool3(x)
        # N x 480 x 14 x 14
        x = self.inception4a(x)
        # N x 512 x 14 x 14
        # self.training tells training mode from evaluation mode;
        # aux_logits says whether the auxiliary classifiers were built
        if self.training and self.aux_logits:  # skipped in eval mode
            aux1 = self.aux1(x)

        x = self.inception4b(x)
        # N x 512 x 14 x 14
        x = self.inception4c(x)
        # N x 512 x 14 x 14
        x = self.inception4d(x)
        # N x 528 x 14 x 14
        if self.training and self.aux_logits:  # skipped in eval mode
            aux2 = self.aux2(x)

        x = self.inception4e(x)
        # N x 832 x 14 x 14
        x = self.maxpool4(x)
        # N x 832 x 7 x 7
        x = self.inception5a(x)
        # N x 832 x 7 x 7
        x = self.inception5b(x)
        # N x 1024 x 7 x 7

        x = self.avgpool(x)
        # N x 1024 x 1 x 1
        x = torch.flatten(x, 1)
        # N x 1024
        x = self.dropout(x)
        x = self.fc(x)
        # N x 1000 (num_classes)
        # In training mode with the auxiliary classifiers enabled, return all three
        # outputs; otherwise return only the main classifier's output
        if self.training and self.aux_logits:  # skipped in eval mode
            return x, aux2, aux1
        return x

    def _initialize_weights(self):
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')
                if m.bias is not None:
                    nn.init.constant_(m.bias, 0)
            elif isinstance(m, nn.Linear):
                nn.init.normal_(m.weight, 0, 0.01)
                nn.init.constant_(m.bias, 0)

# Inception module
class Inception(nn.Module):
    # Arguments follow the column headers of the table
    def __init__(self, in_channels, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):
        super(Inception, self).__init__()

        # Branch 1: 1*1 convolution
        self.branch1 = BasicConv2d(in_channels, ch1x1, kernel_size=1)

        # Branch 2: 3*3 reduce (1*1 convolution) followed by a 3*3 convolution
        self.branch2 = nn.Sequential(
            BasicConv2d(in_channels, ch3x3red, kernel_size=1),
            # The four branches can only be concatenated if their outputs share the same
            # height and width, so padding=1 keeps the output size equal to the input size
            BasicConv2d(ch3x3red, ch3x3, kernel_size=3, padding=1)
        )

        # Branch 3: 5*5 reduce (1*1 convolution) followed by a 5*5 convolution
        self.branch3 = nn.Sequential(
            BasicConv2d(in_channels, ch5x5red, kernel_size=1),
            # The official torchvision implementation actually uses a 3x3 kernel here
            # rather than 5x5; this version keeps 5x5.
            # Please see https://github.com/pytorch/vision/issues/906 for details.
            BasicConv2d(ch5x5red, ch5x5, kernel_size=5, padding=2)  # output size equals input size
        )

        # Branch 4: 3*3 max pooling followed by a 1*1 convolution
        self.branch4 = nn.Sequential(
            nn.MaxPool2d(kernel_size=3, stride=1, padding=1),
            BasicConv2d(in_channels, pool_proj, kernel_size=1)
        )

    # Forward pass
    def forward(self, x):
        branch1 = self.branch1(x)
        branch2 = self.branch2(x)
        branch3 = self.branch3(x)
        branch4 = self.branch4(x)

        # Collect the branch outputs in a list
        outputs = [branch1, branch2, branch3, branch4]
        # torch.cat(list of tensors, dim) concatenates the four outputs.
        # PyTorch tensors are laid out as [batch, channel, height, width],
        # so concatenating along the depth means dim=1
        return torch.cat(outputs, 1)

# Auxiliary classifier
class InceptionAux(nn.Module):
    # Arguments: input depth and number of classes
    def __init__(self, in_channels, num_classes):
        super(InceptionAux, self).__init__()
        self.averagePool = nn.AvgPool2d(kernel_size=5, stride=3)
        self.conv = BasicConv2d(in_channels, 128, kernel_size=1)  # output[batch, 128, 4, 4]

        self.fc1 = nn.Linear(2048, 1024)
        self.fc2 = nn.Linear(1024, num_classes)

    # Forward pass
    def forward(self, x):
        # aux1: N x 512 x 14 x 14, aux2: N x 528 x 14 x 14
        x = self.averagePool(x)
        # aux1: N x 512 x 4 x 4, aux2: N x 528 x 4 x 4
        x = self.conv(x)
        # N x 128 x 4 x 4
        x = torch.flatten(x, 1)  # flatten
        # The paper uses a dropout ratio of 70%; this implementation uses 50%.
        # self.training changes with the mode: after instantiating a model, model.train()
        # sets self.training=True and model.eval() sets self.training=False
        x = F.dropout(x, 0.5, training=self.training)
        # N x 2048
        x = F.relu(self.fc1(x), inplace=True)
        x = F.dropout(x, 0.5, training=self.training)
        # N x 1024
        x = self.fc2(x)
        # N x num_classes
        return x

# Convolution template: a convolution and its ReLU bundled together
class BasicConv2d(nn.Module):
    # Arguments: input depth and output depth; other Conv2d options go through **kwargs
    def __init__(self, in_channels, out_channels, **kwargs):
        super(BasicConv2d, self).__init__()
        self.conv = nn.Conv2d(in_channels, out_channels, **kwargs)
        self.relu = nn.ReLU(inplace=True)

    # Forward pass
    def forward(self, x):
        x = self.conv(x)
        x = self.relu(x)
        return x
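A quick way to sanity-check the Inception arguments in model.py is to confirm that each module's input depth equals the output depth of the module before it. The sketch below does this in plain Python, without PyTorch; `INCEPTION_CFG` simply restates the numbers passed to `Inception(...)` above, and `out_channels` is a hypothetical helper.

```python
# (name, in_channels, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj)
INCEPTION_CFG = [
    ("3a", 192, 64, 96, 128, 16, 32, 32),
    ("3b", 256, 128, 128, 192, 32, 96, 64),
    ("4a", 480, 192, 96, 208, 16, 48, 64),
    ("4b", 512, 160, 112, 224, 24, 64, 64),
    ("4c", 512, 128, 128, 256, 24, 64, 64),
    ("4d", 512, 112, 144, 288, 32, 64, 64),
    ("4e", 528, 256, 160, 320, 32, 128, 128),
    ("5a", 832, 256, 160, 320, 32, 128, 128),
    ("5b", 832, 384, 192, 384, 48, 128, 128),
]


def out_channels(cfg):
    """Depth after concatenating the four branches (reduce layers do not count)."""
    _, _, ch1x1, _, ch3x3, _, ch5x5, pool_proj = cfg
    return ch1x1 + ch3x3 + ch5x5 + pool_proj


outs = [out_channels(cfg) for cfg in INCEPTION_CFG]

# Max pooling between stages changes height and width but not depth,
# so the chain of depths must line up across the whole network.
for prev_out, cfg in zip(outs, INCEPTION_CFG[1:]):
    assert prev_out == cfg[1], "depth mismatch before Inception(%s)" % cfg[0]

print(outs)
```

The last value, 1024, matches the input size of the final `nn.Linear(1024, num_classes)`, and the depths after (4a) and (4d) match the 512 and 528 passed to the two `InceptionAux` instances.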

2. train.py

import os
import sys
import json

import torch
import torch.nn as nn
from torchvision import transforms, datasets
import torch.optim as optim
from tqdm import tqdm

from model import GoogLeNet


def main():
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
    print("using {} device.".format(device))

    data_transform = {
        "train": transforms.Compose([transforms.RandomResizedCrop(224),
                                     transforms.RandomHorizontalFlip(),
                                     transforms.ToTensor(),
                                     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))]),
        "val": transforms.Compose([transforms.Resize((224, 224)),
                                   transforms.ToTensor(),
                                   transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])}

    data_root = os.path.abspath(os.path.join(os.getcwd(), "../.."))  # get data root path
    image_path = os.path.join(data_root, "data_set", "flower_data")  # flower data set path
    assert os.path.exists(image_path), "{} path does not exist.".format(image_path)
    train_dataset = datasets.ImageFolder(root=os.path.join(image_path, "train"),
                                         transform=data_transform["train"])
    train_num = len(train_dataset)

    # {'daisy':0, 'dandelion':1, 'roses':2, 'sunflower':3, 'tulips':4}
    flower_list = train_dataset.class_to_idx
    cla_dict = dict((val, key) for key, val in flower_list.items())
    # write dict into json file
    json_str = json.dumps(cla_dict, indent=4)
    with open('class_indices.json', 'w') as json_file:
        json_file.write(json_str)

    batch_size = 32
    nw = min([os.cpu_count(), batch_size if batch_size > 1 else 0, 8])  # number of workers
    print('Using {} dataloader workers every process'.format(nw))

    train_loader = torch.utils.data.DataLoader(train_dataset,
                                               batch_size=batch_size, shuffle=True,
                                               num_workers=nw)

    validate_dataset = datasets.ImageFolder(root=os.path.join(image_path, "val"),
                                            transform=data_transform["val"])
    val_num = len(validate_dataset)
    validate_loader = torch.utils.data.DataLoader(validate_dataset,
                                                  batch_size=batch_size, shuffle=False,
                                                  num_workers=nw)

    print("using {} images for training, {} images for validation.".format(train_num,
                                                                           val_num))

    # test_data_iter = iter(validate_loader)
    # test_image, test_label = next(test_data_iter)

    net = GoogLeNet(num_classes=5, aux_logits=True, init_weights=True)
    # To use the official pretrained weights, load them into the official torchvision
    # model, not into the model implemented here.
    # The official model uses BN layers and changes some parameters, so the two cannot be mixed.
    # import torchvision
    # net = torchvision.models.googlenet(num_classes=5)
    # model_dict = net.state_dict()
    # # Pretrained weights: https://download.pytorch.org/models/googlenet-1378be20.pth
    # pretrain_model = torch.load("googlenet.pth")
    # del_list = ["aux1.fc2.weight", "aux1.fc2.bias",
    #             "aux2.fc2.weight", "aux2.fc2.bias",
    #             "fc.weight", "fc.bias"]
    # pretrain_dict = {k: v for k, v in pretrain_model.items() if k not in del_list}
    # model_dict.update(pretrain_dict)
    # net.load_state_dict(model_dict)

    net.to(device)
    loss_function = nn.CrossEntropyLoss()
    optimizer = optim.Adam(net.parameters(), lr=0.0003)

    epochs = 30
    best_acc = 0.0
    save_path = './googleNet.pth'
    train_steps = len(train_loader)
    for epoch in range(epochs):
        # train
        net.train()
        running_loss = 0.0
        train_bar = tqdm(train_loader, file=sys.stdout)
        for step, data in enumerate(train_bar):
            images, labels = data
            optimizer.zero_grad()
            # Two auxiliary classifiers plus the main classifier give three losses
            logits, aux_logits2, aux_logits1 = net(images.to(device))
            loss0 = loss_function(logits, labels.to(device))
            loss1 = loss_function(aux_logits1, labels.to(device))
            loss2 = loss_function(aux_logits2, labels.to(device))
            # Combine the losses; the weight 0.3 comes from the paper
            loss = loss0 + loss1 * 0.3 + loss2 * 0.3
            loss.backward()
            optimizer.step()

            # print statistics
            running_loss += loss.item()

            train_bar.desc = "train epoch[{}/{}] loss:{:.3f}".format(epoch + 1,
                                                                     epochs,
                                                                     loss)

        # validate
        net.eval()
        acc = 0.0  # accumulate accurate number / epoch
        with torch.no_grad():
            val_bar = tqdm(validate_loader, file=sys.stdout)
            for val_data in val_bar:
                val_images, val_labels = val_data
                outputs = net(val_images.to(device))  # in eval mode only the main output is returned
                predict_y = torch.max(outputs, dim=1)[1]
                acc += torch.eq(predict_y, val_labels.to(device)).sum().item()

        val_accurate = acc / val_num
        print('[epoch %d] train_loss: %.3f  val_accuracy: %.3f' %
              (epoch + 1, running_loss / train_steps, val_accurate))

        if val_accurate > best_acc:
            best_acc = val_accurate
            torch.save(net.state_dict(), save_path)

    print('Finished Training')


if __name__ == '__main__':
    main()
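One detail of train.py that is easy to miss is what happens to the class-index dictionary when it is written to `class_indices.json`. The sketch below repeats that step in memory, using the class names from the comment in the script as assumed example data: JSON object keys are always strings, so the integer indices come back as `"0"`, `"1"`, and so on.

```python
import json

# Example mapping in the shape of ImageFolder's class_to_idx (assumed sample data).
class_to_idx = {'daisy': 0, 'dandelion': 1, 'roses': 2, 'sunflower': 3, 'tulips': 4}

# Invert it to index -> name, as train.py does before saving.
cla_dict = dict((val, key) for key, val in class_to_idx.items())

# Round-trip through JSON text instead of a file.
restored = json.loads(json.dumps(cla_dict, indent=4))

print(list(cla_dict.keys()))   # integer keys before saving
print(list(restored.keys()))   # string keys after loading
print(restored["4"])
```

This is why predict.py looks names up with `class_indict[str(i)]` rather than `class_indict[i]`.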

3. predict.py

import os
import json

import torch
from PIL import Image
from torchvision import transforms
import matplotlib.pyplot as plt

from model import GoogLeNet


def main():
    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

    data_transform = transforms.Compose(
        [transforms.Resize((224, 224)),
         transforms.ToTensor(),
         transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

    # load image
    img_path = "../tulip.jpg"
    assert os.path.exists(img_path), "file: '{}' does not exist.".format(img_path)
    img = Image.open(img_path)
    plt.imshow(img)
    # [N, C, H, W]
    img = data_transform(img)
    # expand batch dimension
    img = torch.unsqueeze(img, dim=0)

    # read class_indict
    json_path = './class_indices.json'
    assert os.path.exists(json_path), "file: '{}' does not exist.".format(json_path)

    with open(json_path, "r") as f:
        class_indict = json.load(f)

    # create model
    # aux_logits=False means the auxiliary classifiers are not built
    model = GoogLeNet(num_classes=5, aux_logits=False).to(device)

    # load model weights
    weights_path = "./googleNet.pth"
    assert os.path.exists(weights_path), "file: '{}' does not exist.".format(weights_path)
    # The saved weights include the auxiliary classifiers' parameters, so strict=False is
    # needed (the default is True); with strict=False the keys need not match exactly
    missing_keys, unexpected_keys = model.load_state_dict(torch.load(weights_path, map_location=device),
                                                          strict=False)

    model.eval()
    with torch.no_grad():
        # predict class
        output = torch.squeeze(model(img.to(device))).cpu()
        predict = torch.softmax(output, dim=0)
        predict_cla = torch.argmax(predict).numpy()

    print_res = "class: {}   prob: {:.3}".format(class_indict[str(predict_cla)],
                                                 predict[predict_cla].numpy())
    plt.title(print_res)
    for i in range(len(predict)):
        print("class: {:10}   prob: {:.3}".format(class_indict[str(i)],
                                                  predict[i].numpy()))
    plt.show()


if __name__ == '__main__':
    main()
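The `strict=False` load in predict.py returns two lists: keys the model expects but the checkpoint lacks, and keys the checkpoint holds but the model does not. The sketch below mimics that bookkeeping with plain Python sets rather than calling PyTorch; `diff_keys` is a hypothetical helper and the key names are a small illustrative subset, not a full state dict.

```python
def diff_keys(model_keys, checkpoint_keys):
    """Return (missing, unexpected) in the sense of a non-strict load."""
    model_keys, checkpoint_keys = set(model_keys), set(checkpoint_keys)
    missing = sorted(model_keys - checkpoint_keys)       # in the model, not in the file
    unexpected = sorted(checkpoint_keys - model_keys)    # in the file, not in the model
    return missing, unexpected


# Saved during training with aux_logits=True (illustrative subset of names).
checkpoint_keys = ["conv1.conv.weight", "fc.weight", "fc.bias",
                   "aux1.conv.conv.weight", "aux1.fc1.weight", "aux2.fc2.bias"]
# Built for prediction with aux_logits=False, so there are no aux1/aux2 modules.
model_keys = ["conv1.conv.weight", "fc.weight", "fc.bias"]

missing, unexpected = diff_keys(model_keys, checkpoint_keys)
print("missing   :", missing)
print("unexpected:", unexpected)
```

With a strict load, any entry in either list would raise an error; here only the auxiliary classifier weights are left over, which is harmless because they are not used for prediction.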
