Week P8: Implementing the YOLOv5 C3 Module

- 🍨 This post is a study-log entry from the 🔗365-Day Deep Learning Training Camp

- 🍖 Original author: K同学啊

Environment:

- Programming language: Python (3.10.12)

- Development environment: Google Colab

- Dataset: weather recognition dataset

- Deep learning framework: PyTorch

- torch == 2.3.0+cu121

- torchvision == 0.18.0+cu121

I. Preparation

1. Set up the environment

import torch

import torch.nn as nn

import torchvision.transforms as transforms

import torchvision

from torchvision import transforms, datasets

import os,PIL,pathlib,warnings

warnings.filterwarnings("ignore")  # suppress warning messages

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

device

device(type='cuda')

2. Import the data

from google.colab import drive

drive.mount("/content/drive/")

Mounted at /content/drive/

%cd "/content/drive/MyDrive/Colab Notebooks/jupyter notebook/data/"

/content/drive/Othercomputers/My laptop/jupyter notebook/data

import os,PIL,random,pathlib

data_dir = './weather_photos/'

data_dir = pathlib.Path(data_dir)

data_paths = list(data_dir.glob('*'))

classNames = [str(path).split("/")[1] for path in data_paths]

classNames

['rain', 'shine', 'sunrise', 'cloudy']

# For more on transforms.Compose, see: https://blog.csdn.net/qq_38251616/article/details/124878863
train_transforms = transforms.Compose([
    transforms.Resize([224, 224]),  # resize every input image to the same size
    # transforms.RandomHorizontalFlip(),  # random horizontal flip
    transforms.ToTensor(),  # convert a PIL Image or numpy.ndarray to a tensor scaled to [0, 1]
    transforms.Normalize(  # standardize each channel as (x - mean) / std, which helps the model converge
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])  # the standard per-channel mean/std of the ImageNet training set
])

test_transform = transforms.Compose([
    transforms.Resize([224, 224]),  # resize every input image to the same size
    transforms.ToTensor(),  # convert a PIL Image or numpy.ndarray to a tensor scaled to [0, 1]
    transforms.Normalize(  # standardize each channel as (x - mean) / std, which helps the model converge
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])  # the standard per-channel mean/std of the ImageNet training set
])

total_data = datasets.ImageFolder("./weather_photos/",transform=train_transforms)

total_data

Dataset ImageFolder

Number of datapoints: 1125

Root location: ./weather_photos/

StandardTransform

Transform: Compose(

Resize(size=[224, 224], interpolation=bilinear, max_size=None, antialias=True)

ToTensor()

Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

)

total_data.class_to_idx

{'cloudy': 0, 'rain': 1, 'shine': 2, 'sunrise': 3}

3. Split the dataset

train_size = int(0.8*len(total_data))

test_size = len(total_data) - train_size

train_dataset, test_dataset = torch.utils.data.random_split(total_data,[train_size, test_size])

train_dataset, test_dataset

(<torch.utils.data.dataset.Subset at 0x79e9dcb49bd0>,

<torch.utils.data.dataset.Subset at 0x79e9dcb48fd0>)

batch_size = 4

train_dl = torch.utils.data.DataLoader(train_dataset,

batch_size=batch_size,

shuffle=True,

num_workers=1)

test_dl = torch.utils.data.DataLoader(test_dataset,

batch_size=batch_size,

shuffle=True,

num_workers=1)

for X, y in test_dl:
    print("Shape of X [N, C, H, W]: ", X.shape)
    print("Shape of y: ", y.shape, y.dtype)
    break

Shape of X [N, C, H, W]: torch.Size([4, 3, 224, 224])

Shape of y: torch.Size([4]) torch.int64
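`random_split` draws a new random partition on every run, so the accuracies reported later will shift slightly from run to run. If reproducibility is wanted, one option is to pass a seeded `torch.Generator` through the `generator` argument. The sketch below does that on a dummy `TensorDataset` of the same size (1125 samples). The seed 42 and the dummy data are illustrative assumptions.

```python
import torch
from torch.utils.data import TensorDataset, random_split

# Hypothetical stand-in dataset with the same size as total_data (1125 samples)
data = TensorDataset(torch.arange(1125))
train_size = int(0.8 * len(data))  # 900
test_size = len(data) - train_size  # 225

def split(seed):
    # A seeded generator makes random_split return the same partition every run
    g = torch.Generator().manual_seed(seed)
    train_ds, test_ds = random_split(data, [train_size, test_size], generator=g)
    return train_ds.indices, test_ds.indices

first, second = split(42), split(42)
print(first == second)  # True
print(len(first[0]), len(first[1]))  # 900 225
```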

II. Building a model with the C3 module

1. Introduction to YOLOv5:

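Credit: https://blog.csdn.net/u012856866/article/details/130948742

The following blog posts describe the YOLOv5 architecture in some detail:

- "yolov5原理详解" (YOLOv5 principles explained; covers the YOLOv5 framework, an analysis of each component, how feature fusion is implemented, and YOLOv5's specific feature-fusion scheme)

- "YOLOv5【网络结构】超详细解读总结!!!建议收藏✨✨!" (a very detailed summary of the YOLOv5 network structure)

- ❗ Key reference: "【目标检测】yolov5模型详解" ([Object Detection] the YOLOv5 model explained)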

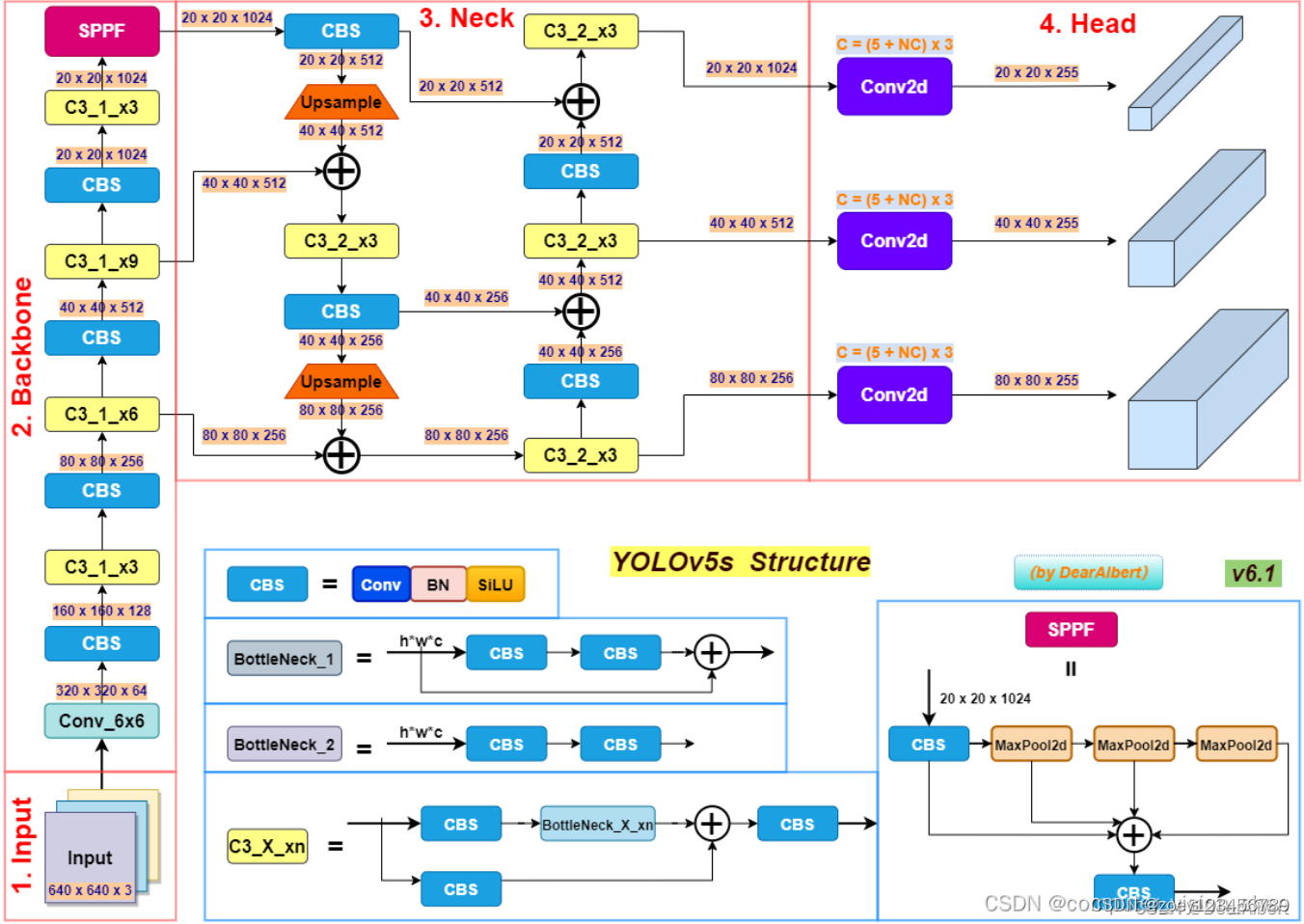

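YOLOv5 (You Only Look Once version 5) is a popular object detection algorithm in the YOLO series. It is fast and accurate, which makes it a strong choice for real-time object detection. The structure of YOLOv5s is shown in the figure.

The figure shows that YOLOv5s consists of several main parts: input, Backbone, Neck, Head and output.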

-

backbone:主干网络,其大多时候指的是提取特征的网络。主干网络的作用就是提取图片中的信息,供后面的网络使用。在Yolov5中,常见的Backbone网络包括CSPDarknet53或ResNet。这些网络都是相对轻量级的,能够在保证较高检测精度的同时,尽可能地减少计算量和内存占用。

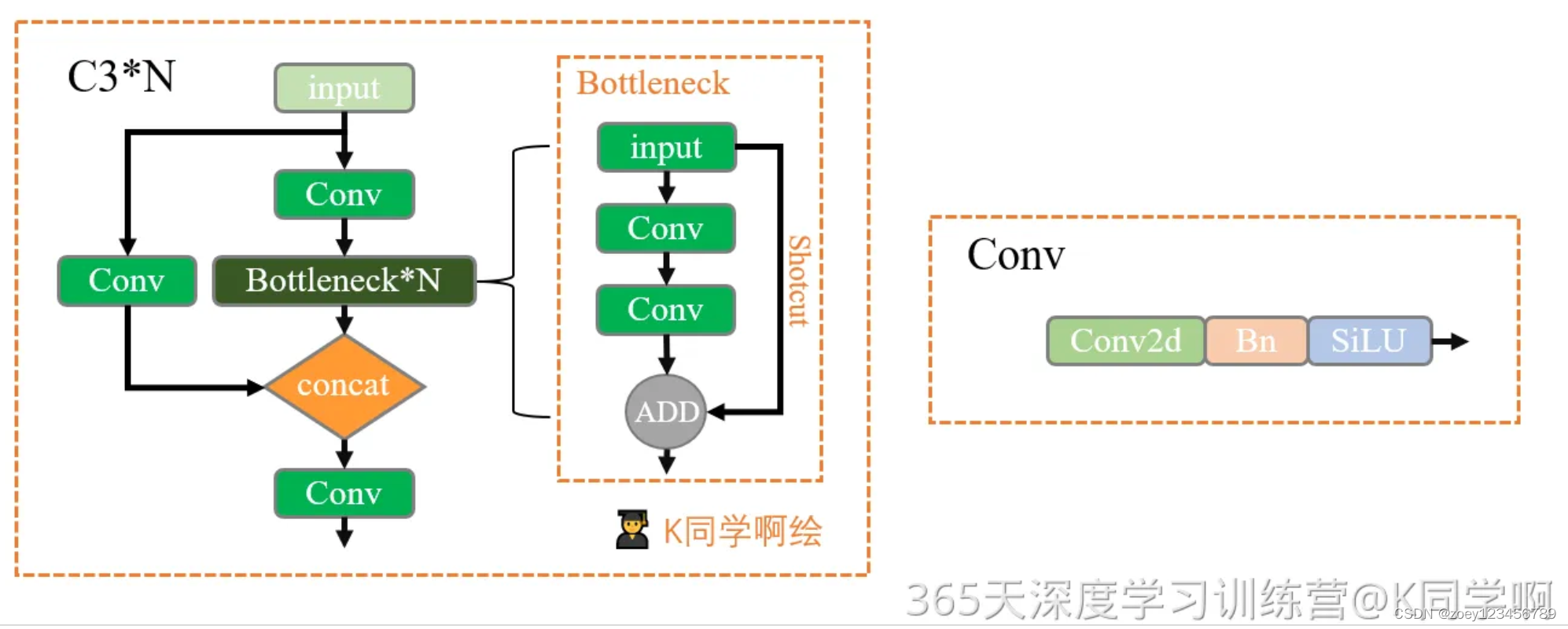

其结构主要有Conv模块、C3模块、SPPF模块。Conv模块主要由卷积层、BN层和激活函数组成,C3模块则将前面的特征图进行自适应聚合,SPPF模块通过全局特征与局部特征的加权融合,获取更全面的空间信息 -

neck:负责对Backbone提取的特征进行多尺度特征融合,并把这些特征传递给预测层。

例如,在Yolov5采用的PANet结构中,通过多次上采样、拼接、点和点积来设计聚合策略,以此更好地利用多尺度特征。 -

head:主要负责进行最终的回归预测,即利用Backbone骨干网络提取的特征图来检测目标的位置和类别。

最后,输出端是模型预测的结果,包括每个目标的类别和其对应的边界框坐标等信息。 -

组件:

-

CBS:由Conv+BN+SiLU激活函数组成;

-

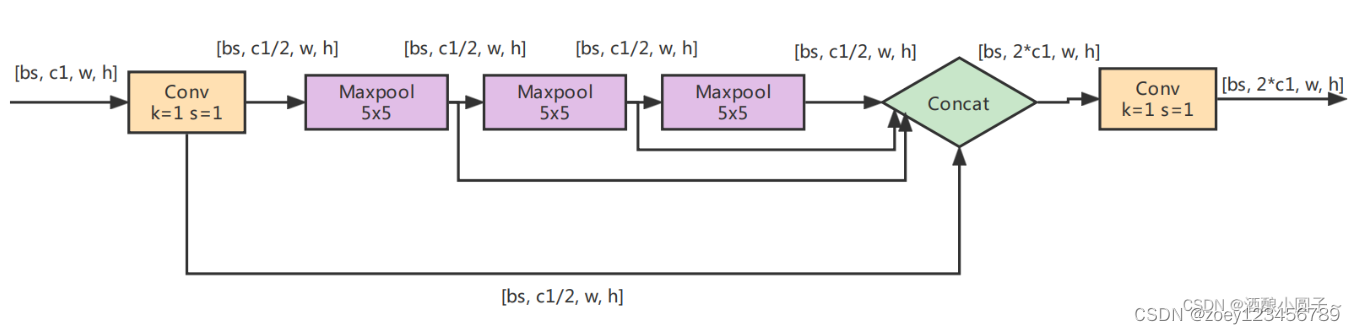

SPPF:spatial pyramid pooling-fast

-

C3:3指3个卷积

Concat:张量拼接,会扩充两个张量的维度,例如26×26×256和26×26×512两个张量拼接,结果是26×26×768。

add:张量相加,张量直接相加,不会扩充维度,例如104×104×128和104×104×128相加,结果还是104×104×128。
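The two shape examples above translate directly into PyTorch. Note that PyTorch stores tensors as N×C×H×W, so the text's 26×26×256 becomes `(1, 256, 26, 26)` here, with a batch size of 1 assumed.

```python
import torch

# PyTorch uses N x C x H x W, so the 26x26x256 example above is (1, 256, 26, 26)
a = torch.randn(1, 256, 26, 26)
b = torch.randn(1, 512, 26, 26)
cat = torch.cat((a, b), dim=1)  # Concat: channel counts add up, 256 + 512 = 768
print(cat.shape)  # torch.Size([1, 768, 26, 26])

c = torch.randn(1, 128, 104, 104)
d = torch.randn(1, 128, 104, 104)
s = c + d  # add: element-wise, shape unchanged
print(s.shape)  # torch.Size([1, 128, 104, 104])
```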

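Input

At the input stage, YOLOv5 uses Mosaic data augmentation, which builds on the CutMix method. CutMix combines two images; Mosaic instead stitches four images together, with random scaling, random cropping and random arrangement. This augmentation helps with the imbalance between small, medium and large objects in the dataset.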

The main augmentation steps are:

- Mosaic

- Copy paste

- Random affine(Scale, Translation and Shear)

- Mixup

- Albumentations

- Augment HSV(Hue, Saturation, Value)

- Random horizontal flip.

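Mosaic data augmentation has several advantages:

- Richer data: four randomly chosen images are randomly scaled and then randomly stitched together. This adds many small objects, greatly enriches the dataset and makes the network more robust.

- Lower GPU memory use: one stitched image carries the content of four images, so a smaller mini-batch is enough, and good results can be obtained even on a single GPU.

- Cropping objects forces the model to recognize them from partial features. This helps with detecting occluded objects and improves overall detection performance.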
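The stitching step at the core of Mosaic can be sketched as follows. Four images are placed around a random center point on a 2s×2s canvas, and each one is cropped where it would overflow. This is a simplified sketch under stated assumptions, loosely following the idea described above. It handles no bounding boxes, applies no random scaling, and skips the final crop back to s×s. The function name `mosaic4` and the gray fill value 114/255 are assumptions for illustration.

```python
import random
import torch

def mosaic4(imgs, s, fill=114 / 255, rng=None):
    """Stitch four C x s x s image tensors around a random center on a 2s x 2s canvas.

    Hypothetical simplified sketch: no boxes, no random scaling, no final crop.
    """
    rng = rng or random.Random()
    c = imgs[0].shape[0]
    canvas = torch.full((c, 2 * s, 2 * s), fill)
    # Random mosaic center, kept in the middle half of the canvas
    xc, yc = rng.randint(s // 2, 3 * s // 2), rng.randint(s // 2, 3 * s // 2)
    for i, img in enumerate(imgs):
        h, w = img.shape[1:]
        if i == 0:  # top-left: the image's bottom-right corner touches the center
            x1a, y1a, x2a, y2a = max(xc - w, 0), max(yc - h, 0), xc, yc
            x1b, y1b, x2b, y2b = w - (x2a - x1a), h - (y2a - y1a), w, h
        elif i == 1:  # top-right
            x1a, y1a, x2a, y2a = xc, max(yc - h, 0), min(xc + w, 2 * s), yc
            x1b, y1b, x2b, y2b = 0, h - (y2a - y1a), x2a - x1a, h
        elif i == 2:  # bottom-left
            x1a, y1a, x2a, y2a = max(xc - w, 0), yc, xc, min(yc + h, 2 * s)
            x1b, y1b, x2b, y2b = w - (x2a - x1a), 0, w, y2a - y1a
        else:  # bottom-right
            x1a, y1a, x2a, y2a = xc, yc, min(xc + w, 2 * s), min(yc + h, 2 * s)
            x1b, y1b, x2b, y2b = 0, 0, x2a - x1a, y2a - y1a
        # Copy the (possibly cropped) part of the source image into its quadrant
        canvas[:, y1a:y2a, x1a:x2a] = img[:, y1b:y2b, x1b:x2b]
    return canvas, (xc, yc)

# Usage: four constant-valued "images" make the quadrants easy to see
imgs = [torch.full((3, 64, 64), float(k)) for k in range(4)]
out, (xc, yc) = mosaic4(imgs, 64, rng=random.Random(0))
print(out.shape)  # torch.Size([3, 128, 128])
```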

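Backbone

- Conv module (the CBS structure described above)

- The BN layer speeds up convergence and has a mild regularizing effect that helps against overfitting;

- SiLU (Sigmoid Linear Unit) multiplies the input by its own sigmoid; it is also known as the Swish function.

Formula: silu(x) = x · σ(x), where σ(x) = 1 / (1 + e^(−x)) is the logistic sigmoid. SiLU is continuous, smooth and differentiable everywhere. It is not monotonic, because it dips slightly below zero for negative inputs. Its main drawback is that it is more expensive to compute than ReLU.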
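The formula and the non-monotonic dip can be checked numerically. The grid from -6 to 6 with step 0.01 is an arbitrary choice for illustration.

```python
import torch
import torch.nn as nn

x = torch.linspace(-6, 6, steps=1201)  # step 0.01
silu = nn.SiLU()
y = silu(x)

# nn.SiLU matches the formula x * sigmoid(x)
print(torch.allclose(y, x * torch.sigmoid(x)))  # True

# Not monotonic: SiLU dips to a minimum of about -0.278 near x = -1.28, then rises again
i = torch.argmin(y)
print(round(x[i].item(), 2), round(y[i].item(), 3))
```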

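- C3 module

This is the main module for learning residual features. Its structure has two branches:

- a stack of Bottlenecks (preceded by a 1×1 Conv)

- a single basic Conv module

The outputs of the two branches are concatenated, and a final 1×1 Conv fuses the result.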
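The sketch below traces channel counts through the two branches, using the same formulas as the C3 class later in this post. For C3(32, 64), the configuration used in model_K, it reproduces the numbers in the printed model: cv1 and cv2 go 32→32, and cv3 goes 64→64. The helper `c3_channels` is hypothetical.

```python
def c3_channels(c1, c2, e=0.5):
    """Trace the channel counts through a C3 block (hypothetical helper)."""
    c_ = int(c2 * e)  # hidden channels
    return {
        "cv1 -> Bottlenecks (branch 1)": (c1, c_),
        "cv2 (branch 2)": (c1, c_),
        "concat": 2 * c_,
        "cv3": (2 * c_, c2),
    }

print(c3_channels(32, 64))
# {'cv1 -> Bottlenecks (branch 1)': (32, 32), 'cv2 (branch 2)': (32, 32), 'concat': 64, 'cv3': (64, 64)}
```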

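Bottleneck in C3:

It borrows the residual structure of ResNet:

- One path first applies a 1×1 convolution that halves the number of channels to reduce computation. A 3×3 convolution then extracts features and doubles the channels again, so the input and output channel counts are the same. (Inside C3, the Bottlenecks are built with e=1.0, so both convolutions keep the hidden channel count c_; C3's own cv1 has already done the halving.)

- The other path is a shortcut (residual connection) whose output is added to the output of the first path to fuse the features. In the code below, the shortcut is applied only when the input and output channel counts match.

In YOLOv5, the Bottlenecks in the Backbone use shortcut=True by default, while the Bottlenecks in the Head do not use the shortcut.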
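The saving from the 1×1 "squeeze" can be made concrete by counting convolution weights. The channel count 64 is illustrative, and BatchNorm parameters are ignored. The helper `conv_weights` is hypothetical.

```python
def conv_weights(c_in, c_out, k):
    """Weights in a bias-free k x k Conv2d with groups=1 (BatchNorm parameters ignored)."""
    return c_in * c_out * k * k

c = 64  # illustrative channel count
plain = conv_weights(c, c, 3)  # one 3x3 conv, 64 -> 64

c_hidden = int(c * 0.5)  # e=0.5: the 1x1 conv halves the channels
bottleneck = conv_weights(c, c_hidden, 1) + conv_weights(c_hidden, c, 3)

print(plain, bottleneck)  # 36864 20480
```

Both layers run at the same spatial resolution, so multiply-add counts scale the same way. Here the bottleneck needs roughly 56% of the plain 3×3 convolution's computation. The same formula gives 32 × 32 × 9 = 9,216 for the 3×3 convolutions inside this post's C3, which matches the torchsummary output later.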

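- SPPF module

SPPF is an improved version of SPP. SPP first halves the number of input channels with a standard convolution module. It then applies max pooling with kernel sizes 5, 9 and 13 in parallel (stride 1, with padding k // 2 for each kernel size k, so the spatial size is preserved).

The outputs of the three max-pooling layers are concatenated with the un-pooled input. The merged tensor therefore has 4 × (C/2) = 2C channels, twice the original input channel count C.

YOLO's SPP borrows the idea of spatial pyramid pooling to combine local and global features. Fusing them enriches the representational power of the feature map, which helps when object sizes in an image differ greatly. This substantially improves accuracy for complex multi-object detection such as YOLO's.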
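A minimal SPP sketch, following the description above, shows the 2C channel count after concatenation. The helper `cbs` (Conv + BN + SiLU) and the sizes 64 channels / 20×20 are assumptions for illustration. This is not code copied from the YOLOv5 repository.

```python
import torch
import torch.nn as nn

def cbs(c_in, c_out, k=1):
    # Hypothetical helper: Conv + BN + SiLU, stride 1, 'same' padding
    return nn.Sequential(nn.Conv2d(c_in, c_out, k, 1, k // 2, bias=False), nn.BatchNorm2d(c_out), nn.SiLU())

class SPP(nn.Module):
    def __init__(self, c1, c2, ks=(5, 9, 13)):
        super().__init__()
        c_ = c1 // 2  # halve the input channels first
        self.cv1 = cbs(c1, c_)
        # stride 1 and padding k // 2 keep H and W unchanged for every kernel size
        self.pools = nn.ModuleList(nn.MaxPool2d(k, stride=1, padding=k // 2) for k in ks)
        self.cv2 = cbs(c_ * (len(ks) + 1), c2)

    def forward(self, x):
        x = self.cv1(x)
        # un-pooled input + three pooled copies: 4 * (c1 / 2) = 2 * c1 channels
        return self.cv2(torch.cat([x] + [pool(x) for pool in self.pools], dim=1))

spp = SPP(64, 64).eval()
x = torch.randn(1, 64, 20, 20)
with torch.no_grad():
    mid = spp.cv1(x)
    cat = torch.cat([mid] + [p(mid) for p in spp.pools], dim=1)  # the concat inside forward()
    out = spp(x)
print(cat.shape, out.shape)  # torch.Size([1, 128, 20, 20]) torch.Size([1, 64, 20, 20])
```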

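SPPF (Spatial Pyramid Pooling - Fast) replaces the parallel 5×5, 9×9 and 13×13 max pooling with three 5×5 max pooling layers applied in series: two stacked 5×5 pools cover a 9×9 window, and three cover 13×13. Cascading small kernels instead of using single large ones preserves the original function (fusing feature maps with different receptive fields to enrich the representation) while running faster.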
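The claim that cascaded 5×5 pools reproduce the 9×9 and 13×13 pools can be checked directly. With stride 1 and the padding PyTorch uses for max pooling, the results are exactly equal, not just close. The random 1×8×20×20 input is arbitrary.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
x = torch.randn(1, 8, 20, 20)

pool5 = nn.MaxPool2d(kernel_size=5, stride=1, padding=2)
pool9 = nn.MaxPool2d(kernel_size=9, stride=1, padding=4)
pool13 = nn.MaxPool2d(kernel_size=13, stride=1, padding=6)

# SPPF: apply the same 5x5 pool three times in series
y1 = pool5(x)
y2 = pool5(y1)
y3 = pool5(y2)

# SPP: parallel pools with growing kernels give identical results
print(torch.equal(y2, pool9(x)), torch.equal(y3, pool13(x)))  # True True
```

SPPF therefore concatenates `[x, y1, y2, y3]`, which carries the same information as SPP's `[x, pool5(x), pool9(x), pool13(x)]`. It reuses each intermediate result instead of pooling the full input with ever larger windows.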

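Neck

In the Neck, YOLOv5 mainly uses the PANet structure.

PANet builds on the in-network feature hierarchy of FPN (Feature Pyramid Network). In FPN, low-level information has to travel up through the whole backbone, layer by layer, before it reaches the top-level features. Because the backbone is deep, this path is long and costly.

PANet adds a bottom-up path on top of FPN. After the top-down feature fusion, a bottom-up fusion follows, so low-level localization information can also reach the deep layers. This strengthens localization at every scale.

- FPN (Feature Pyramid Networks): https://blog.csdn.net/u012856866/article/details/130271655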
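The top-down then bottom-up fusion can be illustrated with a toy neck over three backbone levels (strides 8, 16, 32). This is a simplified sketch of the idea, not YOLOv5's actual neck, which uses C3 blocks and different channel widths. The `cbs` helper, the class name `TinyPAN` and the channel sizes 128/256/512 are hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

def cbs(c_in, c_out, k=1, s=1):
    # Hypothetical helper: Conv + BN + SiLU with padding k // 2
    return nn.Sequential(nn.Conv2d(c_in, c_out, k, s, k // 2, bias=False), nn.BatchNorm2d(c_out), nn.SiLU())

class TinyPAN(nn.Module):
    """Toy FPN + PAN neck over three backbone levels (strides 8, 16, 32)."""

    def __init__(self, c3, c4, c5, c=64):
        super().__init__()
        self.l3, self.l4, self.l5 = cbs(c3, c), cbs(c4, c), cbs(c5, c)  # 1x1 lateral convs
        self.td4, self.td3 = cbs(2 * c, c, 3), cbs(2 * c, c, 3)  # top-down fusion
        self.down3, self.down4 = cbs(c, c, 3, 2), cbs(c, c, 3, 2)  # stride-2 downsampling
        self.bu4, self.bu5 = cbs(2 * c, c, 3), cbs(2 * c, c, 3)  # bottom-up fusion

    def forward(self, f3, f4, f5):
        # Top-down path (FPN): upsample deep features and concatenate with shallower ones
        p5 = self.l5(f5)
        p4 = self.td4(torch.cat((self.l4(f4), F.interpolate(p5, scale_factor=2.0, mode="nearest")), dim=1))
        p3 = self.td3(torch.cat((self.l3(f3), F.interpolate(p4, scale_factor=2.0, mode="nearest")), dim=1))
        # Bottom-up path (PAN): downsample shallow features and concatenate with deeper ones
        n4 = self.bu4(torch.cat((self.down3(p3), p4), dim=1))
        n5 = self.bu5(torch.cat((self.down4(n4), p5), dim=1))
        return p3, n4, n5

neck = TinyPAN(c3=128, c4=256, c5=512).eval()
f3, f4, f5 = torch.randn(1, 128, 80, 80), torch.randn(1, 256, 40, 40), torch.randn(1, 512, 20, 20)
with torch.no_grad():
    outs = neck(f3, f4, f5)
print([tuple(o.shape) for o in outs])  # [(1, 64, 80, 80), (1, 64, 40, 40), (1, 64, 20, 20)]
```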

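Head

The Head performs detection. For a 640×640 input, it outputs feature maps of size 20×20, 40×40 and 80×80 (strides 32, 16 and 8). Each grid cell therefore covers a 32×32, 16×16 or 8×8 pixel region of the input, and the three maps are used for large, medium and small objects respectively.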
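The grid sizes follow from dividing the input size by each stride. The helper below is hypothetical and assumes a square input whose size is divisible by every stride.

```python
def head_grids(img_size=640, strides=(8, 16, 32)):
    """Grid size of each detection output for a square input (hypothetical helper)."""
    return {s: img_size // s for s in strides}

for stride, grid in head_grids().items():
    print(f"stride {stride:2d}: {grid}x{grid} grid, each cell covers {stride}x{stride} input pixels")
```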

2. Build the model

import torch.nn.functional as F

def autopad(k, p=None):  # kernel, padding
    # Pad to 'same'
    if p is None:
        p = k // 2 if isinstance(k, int) else [x // 2 for x in k]  # auto-pad
    return p

class Conv(nn.Module):
    # Standard convolution (CBS module): Conv2d + BatchNorm2d + SiLU
    def __init__(self, c1, c2, k=1, s=1, p=None, g=1, act=True):  # ch_in, ch_out, kernel, stride, padding, groups
        super().__init__()
        self.conv = nn.Conv2d(c1, c2, k, s, autopad(k, p), groups=g, bias=False)
        self.bn = nn.BatchNorm2d(c2)
        self.act = nn.SiLU() if act is True else (act if isinstance(act, nn.Module) else nn.Identity())

    def forward(self, x):
        return self.act(self.bn(self.conv(x)))

class Bottleneck(nn.Module):
    # Standard bottleneck
    def __init__(self, c1, c2, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, shortcut, groups, expansion
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c_, c2, 3, 1, g=g)
        self.add = shortcut and c1 == c2  # residual add only when the shapes match

    def forward(self, x):
        return x + self.cv2(self.cv1(x)) if self.add else self.cv2(self.cv1(x))

class C3(nn.Module):
    # CSP Bottleneck with 3 convolutions: cv1 -> n x Bottleneck, cv2 as a parallel branch, cv3 after concat
    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5):  # ch_in, ch_out, number, shortcut, groups, expansion
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, 1, 1)
        self.cv2 = Conv(c1, c_, 1, 1)
        self.cv3 = Conv(2 * c_, c2, 1)  # act=FReLU(c2)
        self.m = nn.Sequential(*(Bottleneck(c_, c_, shortcut, g, e=1.0) for _ in range(n)))

    def forward(self, x):
        return self.cv3(torch.cat((self.m(self.cv1(x)), self.cv2(x)), dim=1))

class model_K(nn.Module):
    def __init__(self):
        super(model_K, self).__init__()

        # Convolution module: 3 -> 32 channels, stride 2 (224x224 -> 112x112)
        self.Conv = Conv(3, 32, 3, 2)

        # C3 module 1: 32 -> 64 channels with n=3 Bottlenecks;
        # the 4th positional argument is `shortcut` (2 is truthy, so residual adds are enabled)
        self.C3_1 = C3(32, 64, 3, 2)

        # Fully connected layers for classification (64 * 112 * 112 = 802816 input features)
        self.classifier = nn.Sequential(
            nn.Linear(in_features=802816, out_features=100),
            nn.ReLU(),
            nn.Linear(in_features=100, out_features=4)
        )

    def forward(self, x):
        x = self.Conv(x)
        x = self.C3_1(x)
        x = torch.flatten(x, start_dim=1)
        x = self.classifier(x)
        return x

device = "cuda" if torch.cuda.is_available() else "cpu"
print("Using {} device".format(device))

model = model_K().to(device)
model

Using cuda device

model_K(

(Conv): Conv(

(conv): Conv2d(3, 32, kernel_size=(3, 3), stride=(2, 2), padding=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(C3_1): C3(

(cv1): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv3): Conv(

(conv): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(m): Sequential(

(0): Bottleneck(

(cv1): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

)

(1): Bottleneck(

(cv1): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

)

(2): Bottleneck(

(cv1): Conv(

(conv): Conv2d(32, 32, kernel_size=(1, 1), stride=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

(cv2): Conv(

(conv): Conv2d(32, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1), bias=False)

(bn): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)

(act): SiLU()

)

)

)

)

(classifier): Sequential(

(0): Linear(in_features=802816, out_features=100, bias=True)

(1): ReLU()

(2): Linear(in_features=100, out_features=4, bias=True)

)

)

import torchsummary as ts

ts.summary(model,(3,224,224))

----------------------------------------------------------------

Layer (type) Output Shape Param #

================================================================

Conv2d-1 [-1, 32, 112, 112] 864

BatchNorm2d-2 [-1, 32, 112, 112] 64

SiLU-3 [-1, 32, 112, 112] 0

Conv-4 [-1, 32, 112, 112] 0

Conv2d-5 [-1, 32, 112, 112] 1,024

BatchNorm2d-6 [-1, 32, 112, 112] 64

SiLU-7 [-1, 32, 112, 112] 0

Conv-8 [-1, 32, 112, 112] 0

Conv2d-9 [-1, 32, 112, 112] 1,024

BatchNorm2d-10 [-1, 32, 112, 112] 64

SiLU-11 [-1, 32, 112, 112] 0

Conv-12 [-1, 32, 112, 112] 0

Conv2d-13 [-1, 32, 112, 112] 9,216

BatchNorm2d-14 [-1, 32, 112, 112] 64

SiLU-15 [-1, 32, 112, 112] 0

Conv-16 [-1, 32, 112, 112] 0

Bottleneck-17 [-1, 32, 112, 112] 0

Conv2d-18 [-1, 32, 112, 112] 1,024

BatchNorm2d-19 [-1, 32, 112, 112] 64

SiLU-20 [-1, 32, 112, 112] 0

Conv-21 [-1, 32, 112, 112] 0

Conv2d-22 [-1, 32, 112, 112] 9,216

BatchNorm2d-23 [-1, 32, 112, 112] 64

SiLU-24 [-1, 32, 112, 112] 0

Conv-25 [-1, 32, 112, 112] 0

Bottleneck-26 [-1, 32, 112, 112] 0

Conv2d-27 [-1, 32, 112, 112] 1,024

BatchNorm2d-28 [-1, 32, 112, 112] 64

SiLU-29 [-1, 32, 112, 112] 0

Conv-30 [-1, 32, 112, 112] 0

Conv2d-31 [-1, 32, 112, 112] 9,216

BatchNorm2d-32 [-1, 32, 112, 112] 64

SiLU-33 [-1, 32, 112, 112] 0

Conv-34 [-1, 32, 112, 112] 0

Bottleneck-35 [-1, 32, 112, 112] 0

Conv2d-36 [-1, 32, 112, 112] 1,024

BatchNorm2d-37 [-1, 32, 112, 112] 64

SiLU-38 [-1, 32, 112, 112] 0

Conv-39 [-1, 32, 112, 112] 0

Conv2d-40 [-1, 64, 112, 112] 4,096

BatchNorm2d-41 [-1, 64, 112, 112] 128

SiLU-42 [-1, 64, 112, 112] 0

Conv-43 [-1, 64, 112, 112] 0

C3-44 [-1, 64, 112, 112] 0

Linear-45 [-1, 100] 80,281,700

ReLU-46 [-1, 100] 0

Linear-47 [-1, 4] 404

================================================================

Total params: 80,320,536

Trainable params: 80,320,536

Non-trainable params: 0

----------------------------------------------------------------

Input size (MB): 0.57

Forward/backward pass size (MB): 150.06

Params size (MB): 306.40

Estimated Total Size (MB): 457.04

----------------------------------------------------------------
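The 802,816 input features of the first Linear layer, and its dominance in the parameter count, can be reproduced by hand. The first Conv halves 224 to 112, and C3 keeps 112×112 while producing 64 channels. The helper `conv_out` is hypothetical; the total 80,320,536 is taken from the summary above.

```python
def conv_out(size, k, s, p):
    """Output height/width of a convolution: floor((size + 2p - k) / s) + 1."""
    return (size + 2 * p - k) // s + 1

h = conv_out(224, k=3, s=2, p=1)  # first Conv(3, 32, 3, 2); autopad gives p = 3 // 2 = 1
# C3 uses only stride-1 1x1 and 3x3 'same' convs, so it keeps 112x112 and outputs 64 channels
in_features = 64 * h * h
fc1_params = in_features * 100 + 100  # weight + bias of nn.Linear(802816, 100)
total_params = 80_320_536  # "Total params" from the torchsummary output above

print(h, in_features, fc1_params)  # 112 802816 80281700
print(f"{fc1_params / total_params:.2%} of all parameters are in the first Linear layer")
```

At 4 bytes per float32 parameter, this single layer accounts for almost all of the 306.40 MB "Params size" reported above. The convolutional part of the model has fewer than 40,000 parameters.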

III. Training the model

1. Write the training function

# Training loop
def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # size of the training set
    num_batches = len(dataloader)  # number of batches (size / batch_size, rounded up)

    train_loss, train_acc = 0, 0  # initialize training loss and accuracy

    for X, y in dataloader:  # get images and their labels
        X, y = X.to(device), y.to(device)

        # Compute the prediction error
        pred = model(X)  # network output
        loss = loss_fn(pred, y)  # loss between the network output and the ground-truth labels y

        # Backpropagation
        optimizer.zero_grad()  # reset gradients to zero
        loss.backward()  # backpropagate
        optimizer.step()  # update the parameters

        # Accumulate accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches

    return train_acc, train_loss

2. Write the test function

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # size of the test set
    num_batches = len(dataloader)  # number of batches (size / batch_size, rounded up)
    test_loss, test_acc = 0, 0

    # No training here, so disable gradient tracking to save memory and computation
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches

    return test_acc, test_loss

3. Train

import copy

optimizer = torch.optim.Adam(model.parameters(), lr=1e-4)
loss_fn = nn.CrossEntropyLoss()  # create the loss function

epochs = 20

train_loss = []
train_acc = []
test_loss = []
test_acc = []

best_acc = 0  # best test accuracy so far, used to select the best model

for epoch in range(epochs):

    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, optimizer)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    # Keep a copy of the best model in best_model
    if epoch_test_acc > best_acc:
        best_acc = epoch_test_acc
        best_model = copy.deepcopy(model)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Get the current learning rate
    lr = optimizer.state_dict()['param_groups'][0]['lr']

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f}, Lr:{:.2E}')
    print(template.format(epoch+1, epoch_train_acc*100, epoch_train_loss,
                          epoch_test_acc*100, epoch_test_loss, lr))

# Save the best model's parameters to a file
PATH = './best_model.pth'  # file name for the saved parameters
torch.save(best_model.state_dict(), PATH)

print('Done')

Epoch: 1, Train_acc:69.0%, Train_loss:1.320, Test_acc:84.9%, Test_loss:0.436, Lr:1.00E-04

Epoch: 2, Train_acc:85.2%, Train_loss:0.410, Test_acc:81.3%, Test_loss:0.507, Lr:1.00E-04

Epoch: 3, Train_acc:93.8%, Train_loss:0.179, Test_acc:88.9%, Test_loss:0.274, Lr:1.00E-04

Epoch: 4, Train_acc:97.0%, Train_loss:0.096, Test_acc:88.0%, Test_loss:0.387, Lr:1.00E-04

Epoch: 5, Train_acc:98.2%, Train_loss:0.046, Test_acc:89.8%, Test_loss:0.261, Lr:1.00E-04

Epoch: 6, Train_acc:98.3%, Train_loss:0.041, Test_acc:87.1%, Test_loss:0.387, Lr:1.00E-04

Epoch: 7, Train_acc:94.7%, Train_loss:0.192, Test_acc:80.0%, Test_loss:0.796, Lr:1.00E-04

Epoch: 8, Train_acc:97.7%, Train_loss:0.069, Test_acc:79.1%, Test_loss:0.918, Lr:1.00E-04

Epoch: 9, Train_acc:98.4%, Train_loss:0.045, Test_acc:84.4%, Test_loss:0.590, Lr:1.00E-04

Epoch:10, Train_acc:97.9%, Train_loss:0.050, Test_acc:73.8%, Test_loss:1.447, Lr:1.00E-04

Epoch:11, Train_acc:98.4%, Train_loss:0.046, Test_acc:89.8%, Test_loss:0.395, Lr:1.00E-04

Epoch:12, Train_acc:99.7%, Train_loss:0.010, Test_acc:90.7%, Test_loss:0.370, Lr:1.00E-04

Epoch:13, Train_acc:98.9%, Train_loss:0.057, Test_acc:88.0%, Test_loss:0.615, Lr:1.00E-04

Epoch:14, Train_acc:96.4%, Train_loss:0.131, Test_acc:84.9%, Test_loss:0.814, Lr:1.00E-04

Epoch:15, Train_acc:98.0%, Train_loss:0.136, Test_acc:86.7%, Test_loss:0.604, Lr:1.00E-04

Epoch:16, Train_acc:97.7%, Train_loss:0.168, Test_acc:83.1%, Test_loss:0.853, Lr:1.00E-04

Epoch:17, Train_acc:99.2%, Train_loss:0.029, Test_acc:90.7%, Test_loss:0.354, Lr:1.00E-04

Epoch:18, Train_acc:99.6%, Train_loss:0.020, Test_acc:89.3%, Test_loss:0.542, Lr:1.00E-04

Epoch:19, Train_acc:100.0%, Train_loss:0.003, Test_acc:89.8%, Test_loss:0.501, Lr:1.00E-04

Epoch:20, Train_acc:99.4%, Train_loss:0.030, Test_acc:90.2%, Test_loss:0.725, Lr:1.00E-04

Done
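To reuse the saved weights later, the usual PyTorch pattern is to rebuild the same architecture and load the `state_dict` into it. The sketch below uses a tiny hypothetical `nn.Sequential` (built by a hypothetical `build_model`) in place of `model_K` so it runs on its own. It writes to a temporary directory rather than `./best_model.pth`.

```python
import copy
import os
import tempfile

import torch
import torch.nn as nn

def build_model():
    # Hypothetical stand-in for model_K(); saving and loading must use the same architecture
    return nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2))

model = build_model()
best_model = copy.deepcopy(model)

path = os.path.join(tempfile.mkdtemp(), "best_model.pth")
torch.save(best_model.state_dict(), path)

restored = build_model()
# map_location="cpu" lets a checkpoint saved on a GPU be loaded on a CPU-only machine
restored.load_state_dict(torch.load(path, map_location="cpu", weights_only=True))
restored.eval()  # switch BatchNorm/Dropout layers (if any) to inference behavior

x = torch.randn(3, 4)
with torch.no_grad():
    print(torch.equal(restored(x), best_model(x)))  # True
```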

IV. Visualizing the results

1. Loss and accuracy curves

import matplotlib.pyplot as plt
# Hide warnings
import warnings
warnings.filterwarnings("ignore")  # suppress warning messages
# plt.rcParams['font.sans-serif'] = ['SimHei']  # only needed to display Chinese labels
plt.rcParams['axes.unicode_minus'] = False  # display minus signs correctly
plt.rcParams['figure.dpi'] = 100  # figure resolution

epochs_range = range(epochs)

plt.figure(figsize=(12, 3))

plt.subplot(1, 2, 1)

plt.plot(epochs_range, train_acc, label='Training Accuracy')

plt.plot(epochs_range, test_acc, label='Test Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)

plt.plot(epochs_range, train_loss, label='Training Loss')

plt.plot(epochs_range, test_loss, label='Test Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

from PIL import Image

classes = list(total_data.class_to_idx)

def predict_one_image(image_path, model, transform, classes):

test_img = Image.open(image_path).convert('RGB')

plt.imshow(test_img) # 展示预测的图片

test_img = transform(test_img)

img = test_img.to(device).unsqueeze(0)

model.eval()

output = model(img)

_,pred = torch.max(output,1)

pred_class = classes[pred]

print(f'预测结果是:{pred_class}')

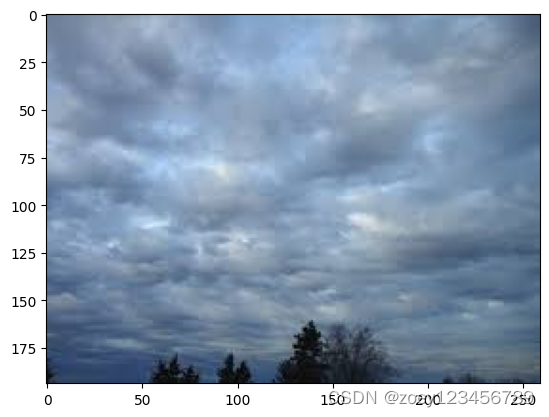

predict_one_image(image_path='./weather_photos/cloudy/cloudy23.jpg',

model=model,

transform=train_transforms,

classes=classes)

预测结果是:cloudy

2. Evaluate the model

best_model.eval()

epoch_test_acc, epoch_test_loss = test(test_dl, best_model, loss_fn)

epoch_test_acc, epoch_test_loss

(0.9066666666666666, 0.36858088395179)
