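This lesson's exercise is mainly about training a multi-class classifier and using a feed-forward neural network to predict handwritten digits. The code borrows from many blog posts; after working through it slowly, I am writing down what I learned.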

Part 1 Multi-class Classifier

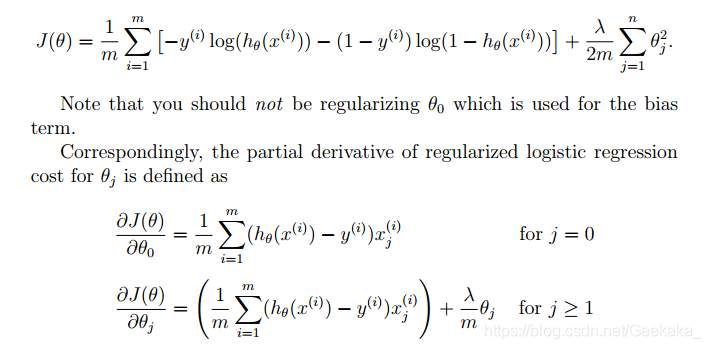

1.lrCostFunction.m

function [J, grad] = lrCostFunction(theta, X, y, lambda)

%LRCOSTFUNCTION Compute cost and gradient for logistic regression with

%regularization

% J = LRCOSTFUNCTION(theta, X, y, lambda) computes the cost of using

% theta as the parameter for regularized logistic regression and the

% gradient of the cost w.r.t. the parameters.

% Initialize some useful values

m = length(y); % number of training examples

% You need to return the following variables correctly

J = 0;

grad = zeros(size(theta));

% ====================== YOUR CODE HERE ======================

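% Hypothesis for every training example (m x 1)

h = sigmoid(X * theta);

% Cost; the bias term theta(1) is excluded from the regularization

J = 1/m*(-y'*log(h)-(1-y)'*log(1-h))+lambda/(2*m)*sum(theta(2:end) .^2);

% The gradient of the bias term has no regularization part

grad(1, : ) = 1/m*(X(:,1))'*(h-y);

% The derivative of lambda/(2*m)*theta.^2 is lambda/m*theta, not lambda/(2*m)*theta

grad(2:end , :) = 1/m*(X(: , 2:end))'*(h-y)+lambda/m*theta(2:end , :);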

% Instructions: Compute the cost of a particular choice of theta.

% You should set J to the cost.

% Compute the partial derivatives and set grad to the partial

% derivatives of the cost w.r.t. each parameter in theta

%

% Hint: The computation of the cost function and gradients can be

% efficiently vectorized. For example, consider the computation

%

% sigmoid(X * theta)

%

% Each row of the resulting matrix will contain the value of the

% prediction for that example. You can make use of this to vectorize

% the cost function and gradient computations.

%

% Hint: When computing the gradient of the regularized cost function,

% there're many possible vectorized solutions, but one solution

% looks like:

% grad = (unregularized gradient for logistic regression)

% temp = theta;

% temp(1) = 0; % because we don't add anything for j = 0

% grad = grad + YOUR_CODE_HERE (using the temp variable)

%

% =============================================================

grad = grad(:);

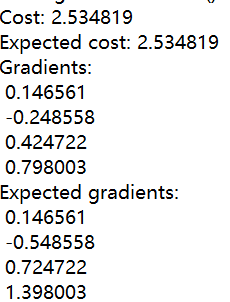

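This step computes the cost and the gradient, much like the previous exercises. The hard part is turning the formulas into vectorized code.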
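One way to check that the formulas were implemented correctly is to compare the analytic gradient with a numerical estimate. The snippet below is only a minimal sketch: costFn, gradFn, and the toy data are made up for illustration and are not part of the exercise files. It uses central differences on the regularized cost and should report a very small difference when the gradient uses lambda/m for the penalty term.

```octave
% Sketch: compare the analytic gradient of the regularized logistic
% regression cost with a central-difference estimate on toy data.
sigmoid = @(z) 1 ./ (1 + exp(-z));

% Regularized cost; theta(1) (the bias) is not penalized.
costFn = @(theta, X, y, lambda) ...
    (-y' * log(sigmoid(X * theta)) - (1 - y)' * log(1 - sigmoid(X * theta))) / size(X, 1) ...
    + lambda / (2 * size(X, 1)) * sum(theta(2:end) .^ 2);

% Analytic gradient: lambda/m (not lambda/(2*m)) multiplies the penalty term.
gradFn = @(theta, X, y, lambda) ...
    X' * (sigmoid(X * theta) - y) / size(X, 1) ...
    + lambda / size(X, 1) * [0; theta(2:end)];

% Toy data (made up): 5 examples, a bias column plus 2 features.
X = [ones(5, 1), [0.1 0.6; 1.1 -0.7; -0.3 0.2; 0.8 0.9; -1.2 0.4]];
y = [1; 0; 1; 0; 1];
theta = [-0.5; 0.3; 0.7];
lambda = 3;

% Central differences, one parameter at a time.
numgrad = zeros(size(theta));
step = 1e-4;
for j = 1:numel(theta)
    e = zeros(size(theta));
    e(j) = step;
    numgrad(j) = (costFn(theta + e, X, y, lambda) - costFn(theta - e, X, y, lambda)) / (2 * step);
end

maxDiff = max(abs(numgrad - gradFn(theta, X, y, lambda)));
fprintf('Largest difference between the two gradients: %g\n', maxDiff);
```

If the penalty term of the gradient were scaled by lambda/(2*m) instead, the difference for theta(2:end) would be clearly visible in this check.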

2.oneVsAll.m

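The core of this part is to use the fmincg optimizer supplied with the exercise to find the parameters θ of the classifier for every label. Most of the code is already given; you only need to store each optimized theta in the all_theta variable.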

function [all_theta] = oneVsAll(X, y, num_labels, lambda)

%ONEVSALL trains multiple logistic regression classifiers and returns all

%the classifiers in a matrix all_theta, where the i-th row of all_theta

%corresponds to the classifier for label i

% [all_theta] = ONEVSALL(X, y, num_labels, lambda) trains num_labels

% logistic regression classifiers and returns each of these classifiers

% in a matrix all_theta, where the i-th row of all_theta corresponds

% to the classifier for label i

% Some useful variables

m = size(X, 1);

n = size(X, 2);

% You need to return the following variables correctly

all_theta = zeros(num_labels, n + 1);

% Add ones to the X data matrix

X = [ones(m, 1) X];

for c=1:num_labels

initial_theta = zeros(n+1,1);

options = optimset('GradObj', 'on', 'MaxIter', 50);

% Call fmincg to find the theta vector of the classifier for label c

[theta] = ...

fmincg (@(t)(lrCostFunction(t, X, (y == c), lambda)), ...

initial_theta, options);

% Store each theta as row c of all_theta

all_theta(c,:) = theta';

end
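The expression (y == c) inside the loop is what turns the multi-class problem into a binary one for each classifier. A small sketch with made-up labels shows the idea (in this exercise the digit 0 is stored as label 10):

```octave
% Toy labels (made up for illustration); digit 0 is stored as label 10.
y = [1; 3; 10; 3; 2];

for c = [3 10]
    target = (y == c);   % logical vector: 1 where the label equals c, else 0
    fprintf('c = %2d -> targets: %s\n', c, mat2str(double(target')));
end
```

Each classifier therefore only learns to separate "is label c" from "is any other label", and the loop repeats this once per label.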

3.predictOneVsAll.m

Here the parameters theta trained above are used to do the actual multi-class classification.

function p = predictOneVsAll(all_theta, X)

%PREDICT Predict the label for a trained one-vs-all classifier. The labels

%are in the range 1..K, where K = size(all_theta, 1).

% p = PREDICTONEVSALL(all_theta, X) will return a vector of predictions

% for each example in the matrix X. Note that X contains the examples in

% rows. all_theta is a matrix where the i-th row is a trained logistic

% regression theta vector for the i-th class. You should set p to a vector

% of values from 1..K (e.g., p = [1; 3; 1; 2] predicts classes 1, 3, 1, 2

% for 4 examples)

m = size(X, 1);

num_labels = size(all_theta, 1);

% You need to return the following variables correctly

p = zeros(size(X, 1), 1);

% Add ones to the X data matrix

X = [ones(m, 1) X];

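% K x m matrix of scores: row i holds the score of classifier i for every example

temp = all_theta * X';

% Row index of the largest score in each column. Sigmoid is monotonic, so

% this is also the class with the highest predicted probability.

[~, pp] = max(temp, [], 1);

p = pp';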

end
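The prediction code takes the maximum over the raw scores without applying sigmoid. A short sketch with hypothetical parameters (3 classes, 2 features, all values made up) suggests why that gives the same labels as comparing probabilities:

```octave
sigmoid = @(z) 1 ./ (1 + exp(-z));

% Hypothetical parameters for 3 classes and 2 features (plus bias).
all_theta = [ 0.5 -1.0  2.0;
             -0.2  1.5 -0.5;
              0.1  0.3  0.3];
X = [ones(4, 1), [1 2; 2 -1; 0 0; -1 -3]];   % bias column already added

scores = X * all_theta';                     % 4 x 3, one column per class
[~, pFromScores] = max(scores, [], 2);       % label from raw scores
[~, pFromProbs]  = max(sigmoid(scores), [], 2);   % label from probabilities

disp(isequal(pFromScores, pFromProbs));
```

Because sigmoid is strictly increasing, the largest score and the largest probability sit in the same position, so skipping sigmoid only saves computation.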

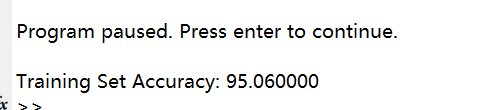

Each classifier (10 in total) runs for 50 iterations, and the accuracy is 95.06%.

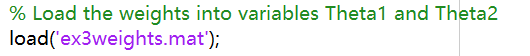

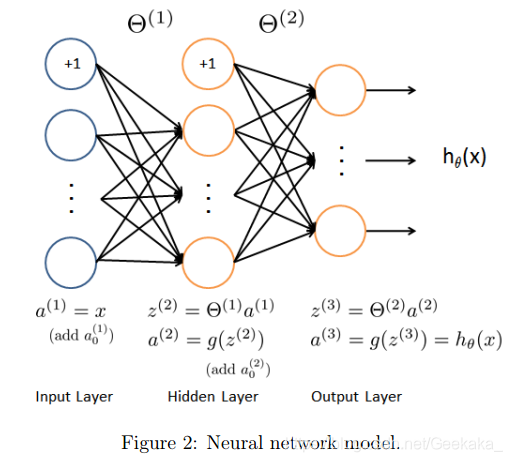

Part 2 Neural Network

The neural network parameters for this part are already provided in a file, so they only need to be loaded.

Theta1 has size 25x401;

Theta2 has size 10x26.

1.predict.m

function p = predict(Theta1, Theta2, X)

%PREDICT Predict the label of an input given a trained neural network

% p = PREDICT(Theta1, Theta2, X) outputs the predicted label of X given the

% trained weights of a neural network (Theta1, Theta2)

% Useful values

m = size(X, 1);

num_labels = size(Theta2, 1);

% You need to return the following variables correctly

p = zeros(size(X, 1), 1);

X = [ones(m,1) X];

z_2 = X* Theta1';

a_2 = sigmoid(z_2);

m_2 = size(a_2, 1);

a_2 = [ones(m_2,1) a_2];

z_3 = a_2* Theta2';

a_3 = sigmoid(z_3);

[~, p] = max(a_3,[],2);

end

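This part is the forward pass: the three-layer network computes layer by layer until it produces the hypothesis h (a_3 in the code).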
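To see how the matrix sizes fit together, here is a rough sketch of the same forward pass with random stand-in weights of the sizes given above. The weights and inputs are random placeholders, not the trained parameters, and the 400 input columns correspond to the 20x20 pixel images used in the exercise.

```octave
sigmoid = @(z) 1 ./ (1 + exp(-z));

m = 4;                           % a handful of fake examples
X = rand(m, 400);                % 400 = 20 x 20 pixels per image
Theta1 = randn(25, 401) * 0.1;   % random stand-ins, NOT the trained weights
Theta2 = randn(10, 26) * 0.1;

a_1 = [ones(m, 1) X];                        % m x 401 (bias unit added)
a_2 = [ones(m, 1) sigmoid(a_1 * Theta1')];   % m x 26  (bias unit added)
a_3 = sigmoid(a_2 * Theta2');                % m x 10, one column per label

disp(size(a_1));
disp(size(a_2));
disp(size(a_3));
```

The extra column of ones at each layer is why Theta1 has 401 columns rather than 400 and Theta2 has 26 rather than 25.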

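In the last line, [~, p] = max(a_3,[],2);, a_3 has dimensions 5000×10. max(a_3,[],2) returns the maximum of each row of a_3 together with the column in which that maximum occurs, which is exactly the label. The maximum value itself is discarded (because of the ~), and the position is assigned to p.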
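A tiny example may make the third argument of max clearer. The matrix A below is made up; it plays the role of a_3 with 3 examples and 3 labels.

```octave
A = [0.1 0.7  0.2;
     0.9 0.05 0.05;
     0.3 0.3  0.4];

[rowMax, rowIdx] = max(A, [], 2);   % along dimension 2: one result per row
[colMax, colIdx] = max(A, [], 1);   % along dimension 1: one result per column

disp(rowIdx');   % column index of each row's maximum
disp(colIdx);    % row index of each column's maximum
```

With examples stored in rows, as in predict.m, dimension 2 is the one that yields one label per example.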

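In the main script, fprintf('\nTraining Set Accuracy: %f\n', mean(double(pred == y)) * 100); prints the prediction accuracy over the whole set. This puzzled me at first: surely the accuracy of recognizing a digit can only be 0% or 100%? But the accuracy here is the overall accuracy over all digits in the data set (the training set, as the printed message says). For example, if there are 100 digits and I predict 60 of them correctly, the accuracy is 60%. (PS: pred==y is a logical expression, 1 where the prediction matches the label and 0 otherwise.)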
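The accuracy expression can be tried on a few made-up predictions to see what it does:

```octave
pred = [1; 3; 10; 2; 5];   % made-up predictions
y    = [1; 3; 10; 4; 5];   % made-up true labels

hits = (pred == y);                    % logical vector, 1 where they match
accuracy = mean(double(hits)) * 100;   % share of matches as a percentage
fprintf('Accuracy: %f\n', accuracy);
```

Four of the five predictions match here, so the mean of the 0/1 vector is the fraction of correct predictions.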

The final prediction loop is written in the main script:

% rp is a random permutation of 1..m created earlier in the main script (e.g. rp = randperm(m))

for i = 1:m

% Display

fprintf('\nDisplaying Example Image\n');

displayData(X(rp(i), :));

pred = predict(Theta1, Theta2, X(rp(i),:));

% Label 10 stands for the digit 0, so mod(pred, 10) maps the label back to the digit

fprintf('\nNeural Network Prediction: %d (digit %d)\n', pred, mod(pred, 10));

% Pause with quit option

s = input('Paused - press enter to continue, q to exit:','s');

if s == 'q'

break

end

end

That's all for now. If you spot any mistakes, corrections are very welcome!
