本科毕设需要在FPGA/ZYNQ平台实现一个双目深度估计系统,其中大部分图像处理模块都是使用HLS工具实现的。双目深度估计需要对左右图像进行立体校正,实现极线约束。在Xilinx官方的HLS视频库中有直接能用的处理函数,这里稍微记录一下实现过程。

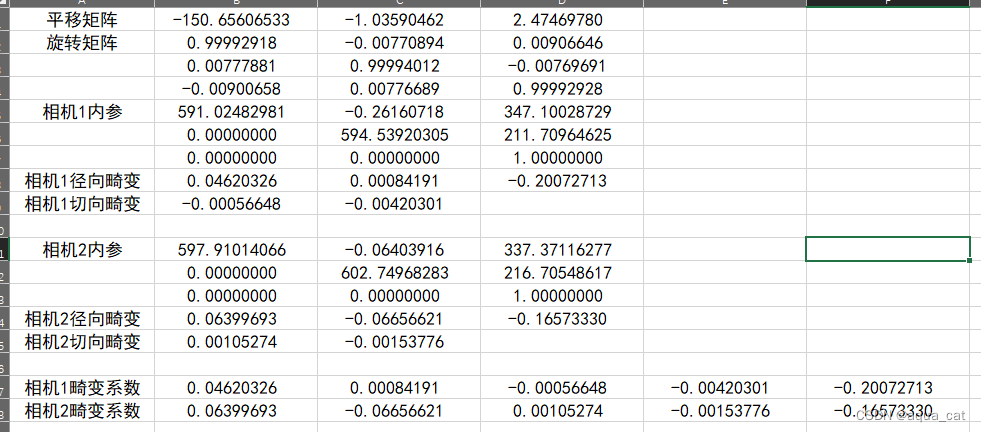

Matlab双目标定

获取棋盘格图像对

保存好双目相机的左右图像对,左右图像命名标号然后分开放在两个文件夹下。

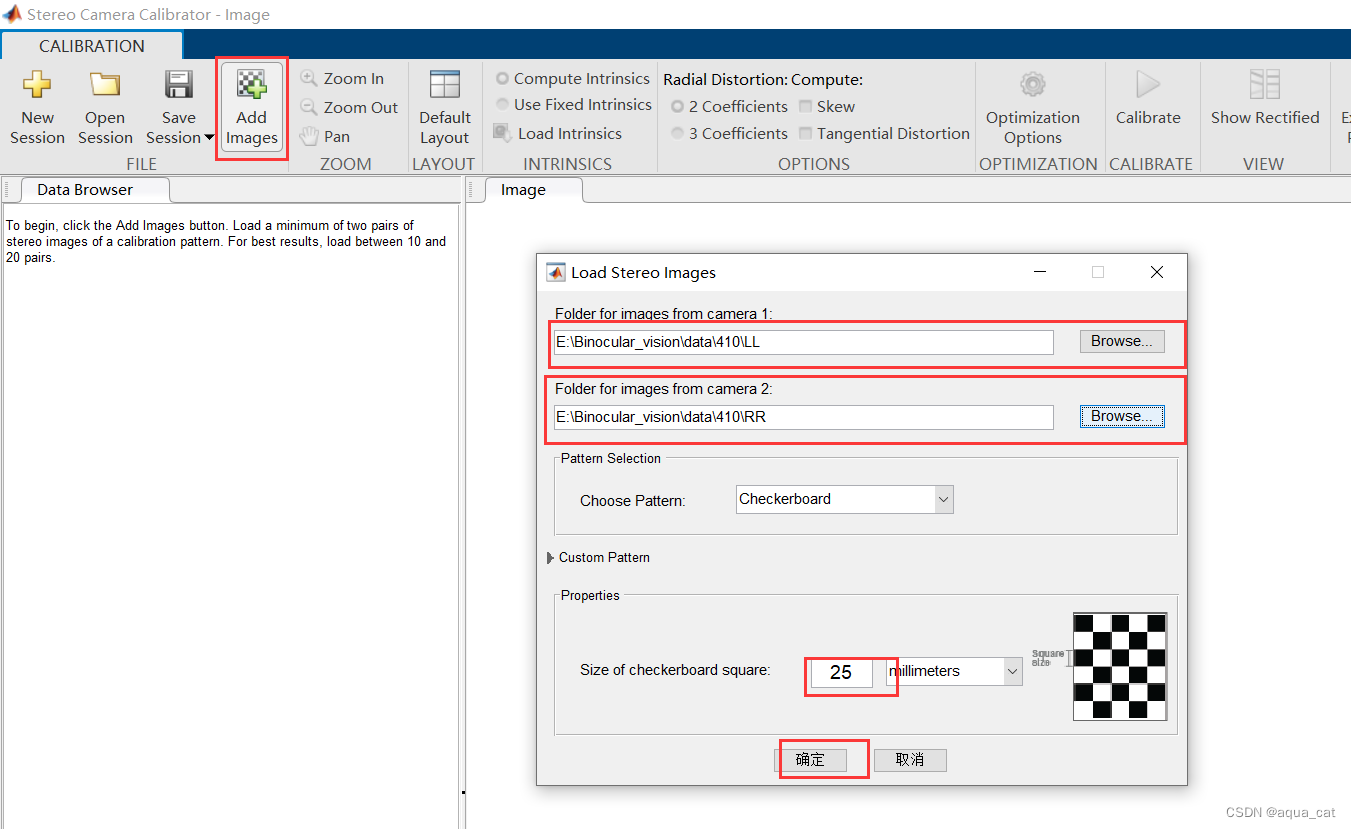

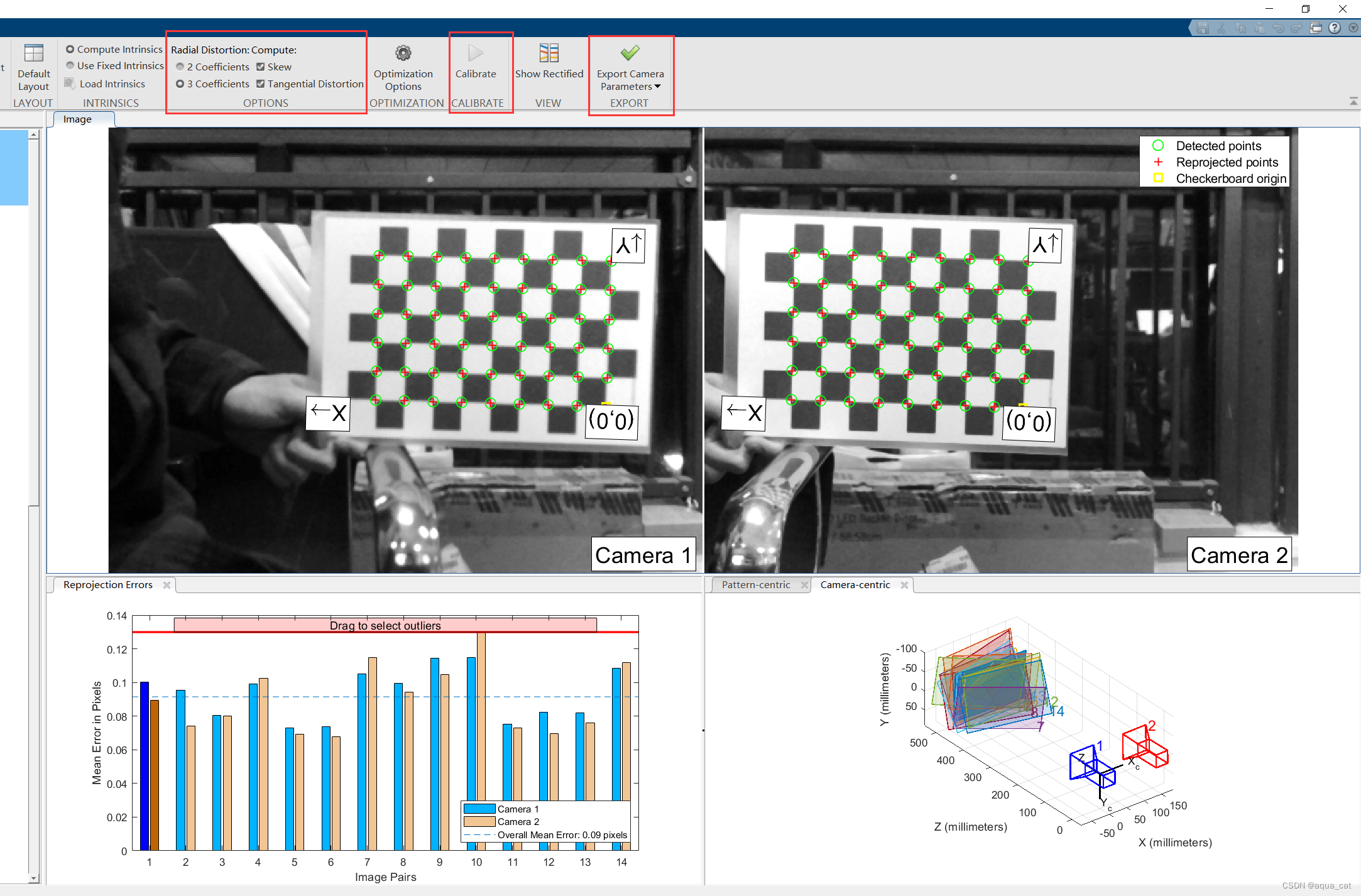

Matlab双目标定工具箱

这个工具箱基本属于傻瓜式操作,简单几步相机的各种参数矩阵就算出来了。

具体操作流程可以参考:matlab双目标定(详细过程)

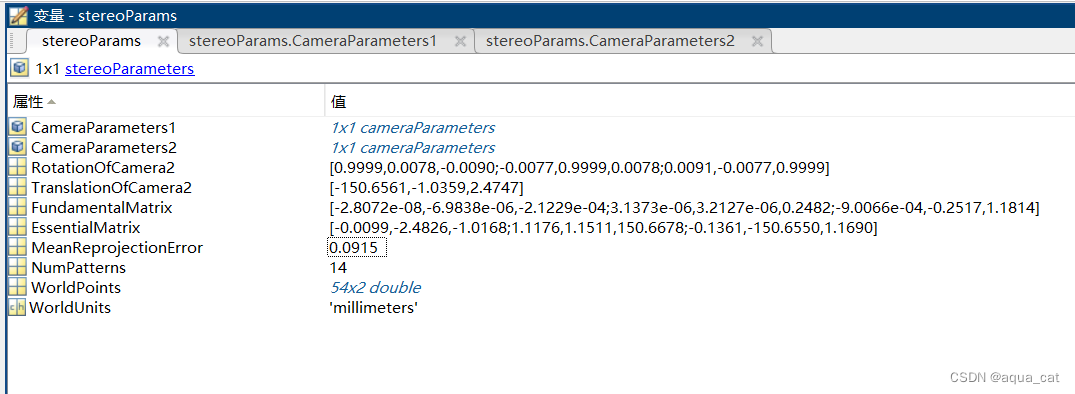

参数保存

整理需要使用的参数并保存好,记得旋转矩阵和相机内参矩阵需要转置。

HLS立体校正

stereoRectify

stereoRectify是Opencv里的函数,用于计算变换矩阵和投影矩阵,具体参数说明参考:OpenCV如何正确使用stereoRectify函数

int main(void)

{

cv::Mat cameraMatrix1 = (cv::Mat_<double>(3, 3) << 591.02482981, -0.26160718, 347.10028729, 0.0, 594.53920305, 211.70964625, 0.0, 0.0, 1.0);

cv::Mat cameraMatrix2 = (cv::Mat_<double>(3, 3) << 597.91014066, -0.06403916, 337.37116277, 0.0, 602.74968283, 216.70548617, 0.0, 0.0, 1.0);

cv::Mat distCoeffs1 = (cv::Mat_<double>(5, 1) << 0.04620326, 0.00084191, -0.00056648, -0.00420301, -0.20072713);

cv::Mat distCoeffs2 = (cv::Mat_<double>(5, 1) << 0.06399693, -0.06656621, 0.00105274, -0.00153776, -0.16573330);

cv::Size imageSize(640, 480);

cv::Mat R = (cv::Mat_<double>(3, 3) << 0.99992918, -0.00770894, 0.00906646, 0.00777881, 0.99994012, -0.00769691, -0.00900658, 0.00776689, 0.99992928);

cv::Mat T = (cv::Mat_<double>(3, 1) << -150.65606533, -1.03590462, 2.47469780);

cv::Mat R1, R2, P1, P2, Q;

cv::stereoRectify(cameraMatrix1, distCoeffs1, cameraMatrix2, distCoeffs2, imageSize, R, T, R1, R2, P1, P2, Q);

cv::FileStorage fw("out.txt", cv::FileStorage::WRITE);

fw << "R1" << R1;

fw << "R2" << R2;

fw << "P1" << P1;

fw << "P2" << P2;

fw.release();

return 0;

}

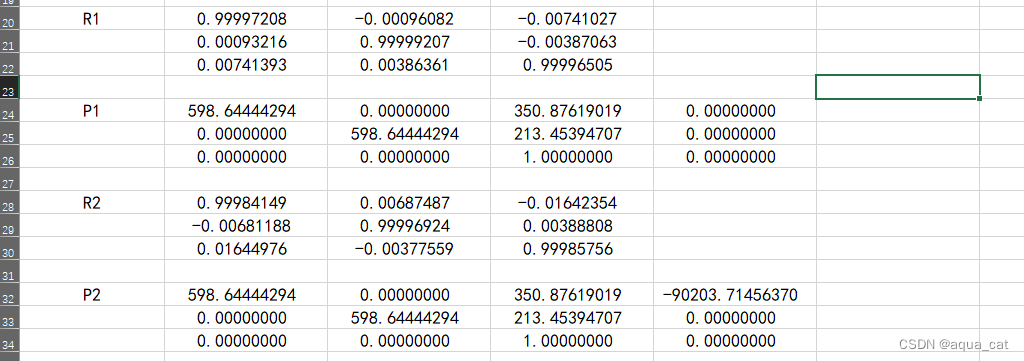

最后得到四个新矩阵:R1、R2、P1、P2。

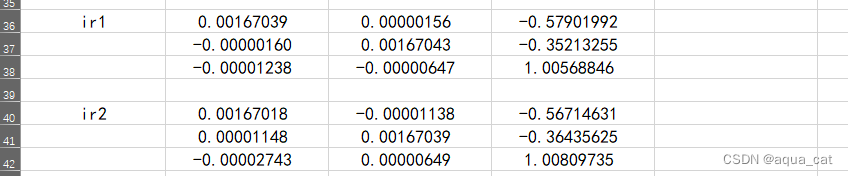

计算IR矩阵

IR矩阵是HLS视频库中InitUndistortRectifyMapInverse函数的其中一个输入,InitUndistortRectifyMapInverse相当于是Opencv中的initUndistortRectifyMap函数,但是因为FPGA中不好计算矩阵的逆,所以需要我们计算好了再用作输入,具体参考:InitUndistortRectifyMapInverse

计算IR矩阵实现:

R1 = [0.99997208, -0.00096082, -0.00741027;

0.00093216, 0.99999207, -0.00387063;

0.00741393, 0.00386361, 0.99996505;];

P = [598.64444294, 0.00000000, 350.87619019;

0.00000000, 598.64444294, 213.45394707;

0.00000000, 0.00000000, 1.00000000;];

R2 = [0.99984149, 0.00687487, -0.01642354;

-0.00681188, 0.99996924, 0.00388808;

0.01644976, -0.00377559, 0.99985756;];

ir1 = inv(P * R1);

ir2 = inv(P * R2);

立体校正

计算得到ir矩阵后,就可以使用InitUndistortRectifyMapInverse函数与remap进行立体校正,左相机校正具体实现(右相机修改对应参数即可):

#define HEIGHT 480

#define WIDTH 640

typedef hls::stream< ap_axiu<8,1,1,1> > AXIS_GRAY;

typedef xf::cv::Mat<XF_8UC1, HEIGHT, WIDTH, XF_NPPC1, 0> GRAY_IMAGE;

typedef xf::cv::Mat<XF_32FC1, HEIGHT, WIDTH, XF_NPPC1, 0> MAP_IMAGE;

void Image_Correct_L(AXIS_GRAY &Video_Input, AXIS_GRAY &Video_Output)

{

#pragma HLS INTERFACE axis port = Video_Input

#pragma HLS INTERFACE axis port = Video_Output

#pragma HLS INTERFACE ap_ctrl_none port = return

GRAY_IMAGE Image_Input, Image_Output;

MAP_IMAGE Image_Mapx, Image_Mapy;

ap_fixed<32, 12> cameraMatrix[9], distCoeffs[5], ir[9];

cameraMatrix[0] = 591.02482981;

cameraMatrix[1] = -0.26160718;

cameraMatrix[2] = 347.10028729;

cameraMatrix[3] = 0.00000000;

cameraMatrix[4] = 594.53920305;

cameraMatrix[5] = 211.70964625;

cameraMatrix[6] = 0.00000000;

cameraMatrix[7] = 0.00000000;

cameraMatrix[8] = 1.00000000;

distCoeffs[0] = 0.04620326;

distCoeffs[1] = 0.00084191;

distCoeffs[2] = -0.00056648;

distCoeffs[3] = -0.00420301;

distCoeffs[4] = -0.20072713;

ir[0] = 0.00167039;

ir[1] = 0.00000156;

ir[2] = -0.57901992;

ir[3] = -0.00000160;

ir[4] = 0.00167043;

ir[5] = -0.35213255;

ir[6] = -0.00001238;

ir[7] = -0.00000647;

ir[8] = 1.00568846;

#pragma HLS DATAFLOW

xf::cv::AXIvideo2xfMat(Video_Input, Image_Input);

xf::cv::InitUndistortRectifyMapInverse<9, 5, XF_32FC1, HEIGHT, WIDTH, XF_NPPC1, 0, 0>

(cameraMatrix, distCoeffs, ir, Image_Mapx, Image_Mapy, 9, 5);

xf::cv::remap<16, XF_INTERPOLATION_BILINEAR, XF_8UC1, XF_32FC1, XF_8UC1, HEIGHT, WIDTH, XF_NPPC1, false, 0, 0, 0, 0>

(Image_Input, Image_Output, Image_Mapx, Image_Mapy);

xf::cv::xfMat2AXIvideo(Image_Output, Video_Output);

}

HLS平台仿真代码:

#include "correct.h"

#include <iostream>

#include "opencv2/opencv.hpp"

#include "common/xf_axi.hpp"

int main(void)

{

AXIS_GRAY Video_IN, Video_OUT;

cv::Mat mat_in = cv::imread("Left.bmp", 0);

cv::Mat mat_out = cv::Mat::zeros(HEIGHT, WIDTH, CV_8U);

xf::cv::cvMat2AXIvideoxf<XF_NPPC1, 8>(mat_in, Video_IN);

Image_Correct_L(Video_IN, Video_OUT);

xf::cv::AXIvideo2cvMatxf<XF_NPPC1, 8>(Video_OUT, mat_out);

cv::imwrite("out.jpg", mat_out);

return 0;

}

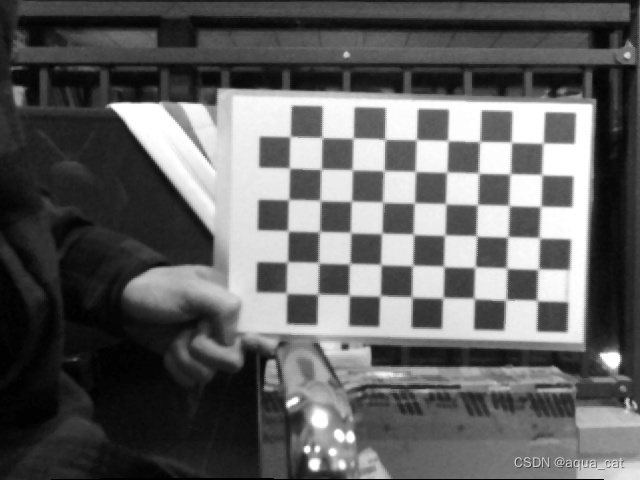

左相机校正结果

右相机校正结果

总结

因为原本相机的镜头畸变较小,所以校正后的图像并没有损失太多的有效显示面积。在HLS中完成设计与功能仿真后,就可以生成相应的IP模块,在Vivado中使用。

7084

7084

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?